“Deep Fake” Video Technology Is Advancing Faster Than Our Policies Can Keep Up

Artificial avatars for hire and sophisticated video manipulation carry profound implications for society.

This article is part of the magazine, "The Future of Science In America: The Election Issue," co-published by LeapsMag, the Aspen Institute Science & Society Program, and GOOD.

Alethea.ai sports a grid of faces smiling, blinking and looking about. Some are beautiful, some are oddly familiar, but all share one thing in common—they are fake.

Alethea creates "synthetic media," including digital faces that customers can license to say anything they choose in any voice they choose. Companies can hire these photorealistic avatars to appear in explainer videos, advertisements, multimedia projects or any other applications they might dream up without running auditions or paying talent agents or actor fees. Licenses begin at a mere $99. Companies may also license digital avatars of real celebrities or hire mashups created from real celebrities, including "Don Exotic" (a mashup of Donald Trump and Joe Exotic) or "Baby Obama" (a large-eared toddler that looks remarkably similar to a former U.S. President).

Naturally, in the midst of the COVID pandemic, the appeal is understandable. Rather than flying to a remote location to film a beer commercial, an actor can simply license their avatar to do the work for them. The question is where and when this tech will cross the line from legitimately licensed and authorized synthetic media to deep fakes: synthetic videos designed to deceive the public for financial and political gain.

Deep fakes are not new. From written quotes that are manipulated and taken out of context to audio quotes that are spliced together to mean something other than originally intended, misrepresentation has been around for centuries. What is new is the technology that allows this sort of seamless and sophisticated deception to be brought to the world of video.

"At one point, video content was considered more reliable, and had a higher threshold of trust," said Alethea CEO and co-founder, Arif Khan. "We think video is harder to fake and we aren't yet as sensitive to detecting those fakes. But the technology is definitely there."

"In the future, each of us will only trust about 15 people and that's it," said Phil Lelyveld, who serves as Immersive Media Program Lead at the Entertainment Technology Center at the University of Southern California. "It's already very difficult to tell true footage from fake. In the future, I expect this will only become more difficult."

As the U.S. 2020 Presidential Election nears, the potential moral and ethical implications of this technology are startling. A number of cases of truth tampering have recently been widely publicized. On August 5, President Donald Trump's campaign released an ad featuring several photos of Joe Biden that were altered to make it seem like he was hiding all alone in his basement. In one photo, at least ten people who had been sitting with Biden in the original shot were cut out. In other photos, Biden's image was lifted from shots taken at a nature preserve and of him praying in church to make it appear he was in that same basement. Recently, several videos of Speaker of the House Nancy Pelosi were slowed to about 75 percent of their original speed to make her speech sound slurred.

During a campaign event in Florida on September 15 of this year, former Vice President Joe Biden was introduced by Puerto Rican singer-songwriter Luis Fonsi. After he was introduced, Biden paid tribute to the singer-songwriter by holding up his cell phone and playing the hit song "Despacito." Shortly afterward, a doctored version of this video appeared on the self-described parody site the United Spot, replacing "Despacito" with N.W.A.'s "F--- Tha Police." By September 16, Donald Trump had retweeted the video twice: first with the line "What is this all about" and second with the line "China is drooling. They can't believe this!" Twitter was quick to mark the video in these tweets as manipulated media.

Twitter had previously addressed several of Donald Trump's tweets, flagging a video shared in June as manipulated media and removing altogether a video shared by Trump in July that showed a group promoting hydroxychloroquine as an effective cure for COVID-19. Many of these manipulated videos are ultimately flagged or taken down, but not before they are seen and shared by millions of online viewers.

These faked videos were exposed rather quickly, as they could be compared with the original, publicly available source material. But what happens when there is no original source material? How do we know what's true in a world where original videos created with avatars of celebrities and politicians can be manipulated to say virtually anything?

"This type of fake media is a profound threat to our democracy," said Reid Blackman, the CEO of VIRTUE--an ethics consultancy for AI leaders. "Democracy depends on well-informed citizens. When citizens can't or won't discern between real and fake news, the implications are huge."

In light of the importance of reliable information in the political system, there's a clear and present need to verify that the images and news we consume are authentic. So how can anyone ever know that the content they are viewing is real?

"This will not be a simple technological solution," said Blackman. "There is no 'truth' button to push to verify authenticity. There's plenty of blame and condemnation to go around. Purveyors of information have a responsibility to vet the reliability of their sources. And consumers also have a responsibility to vet their sources."

Yet the process of verifying sources has never been more challenging. More and more citizens are choosing to live in a "media bubble"—gathering and sharing news only from and with people who share their political leanings and opinions. At one time, United States broadcasters were bound by the Fairness Doctrine—requiring them to present controversial issues important to the public in a way that the FCC deemed honest, equitable and balanced. The repeal of this doctrine in 1987 paved the way for new forms of cable news channels such as Fox News and MSNBC that appealed to viewers with a particular point of view. The Internet has only exacerbated these tendencies. Social media algorithms are designed to keep people clicking within their comfort zones by presenting members with only the thoughts and opinions they want to hear.

"I sometimes laugh when I hear people tell me they can back a particular opinion they hold with research," said Blackman. "Having conducted a fair bit of true scientific research, I am aware that clicking on one article on the Internet hardly qualifies. But a surprising number of people believe that finding any source online that states the fact they choose to believe is the same as proving it true."

Back to the fundamental challenge: How do we as a society root out what's false online? Lelyveld suggests that it will begin by verifying things that are known to be true rather than trying to call out everything that is fake. "The EU called me in to talk about how to deal with fake news coming out of Russia," said Lelyveld. "I told them Hollywood has spent 100 years developing special effects technology to make things that are wholly fictional indistinguishable from the truth. I told them that you'll never chase down every source of fake news. You're better off focusing on what can be proved true."

Arif Khan agrees. "There are probably 100 accounts attributed to Elon Musk on Twitter, but only one has the blue checkmark," said Khan. "That means Twitter has verified that an account of public interest is real. That's what we're trying to do with our platform. Allow celebrities to verify that specific videos were licensed and authorized directly by them."

Alethea will use another key technology called blockchain to mark all authentic authorized videos with celebrity avatars. Blockchain uses a distributed ledger technology to make sure that no undetected changes have been made to the content. Think of the difference between editing a document in a traditional word processing program and editing in a distributed online editing system like Google Docs. In a traditional word processing program, you can edit and copy a document without revealing any changes. In a shared editing system like Google Docs, every person who shares the document can see a record of every edit, addition and copy made of any portion of the document. In a similar way, blockchain helps Alethea ensure that approved videos have not been copied or altered inappropriately.
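The tamper-evidence idea described above can be illustrated with a toy hash chain. This is only a minimal sketch under stated assumptions, not Alethea's actual system: the function names (`append_entry`, `ledger_is_intact`, `is_registered`) are hypothetical, and the ledger here lives in a single Python list, whereas a real blockchain replicates it across many independent parties.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def block_hash(index, content_hash, prev_hash):
    """Hash an entry's fields together with the previous entry's hash."""
    payload = json.dumps(
        {"index": index, "content_hash": content_hash, "prev_hash": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(ledger, video_bytes):
    """Record the fingerprint of an approved video (hypothetical helper)."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS_HASH
    content_hash = hashlib.sha256(video_bytes).hexdigest()
    index = len(ledger)
    entry = {
        "index": index,
        "content_hash": content_hash,
        "prev_hash": prev_hash,
        "hash": block_hash(index, content_hash, prev_hash),
    }
    ledger.append(entry)
    return entry


def ledger_is_intact(ledger):
    """Return True only if every entry still links to the one before it."""
    prev_hash = GENESIS_HASH
    for i, entry in enumerate(ledger):
        if entry["index"] != i or entry["prev_hash"] != prev_hash:
            return False
        expected = block_hash(i, entry["content_hash"], entry["prev_hash"])
        if entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True


def is_registered(ledger, video_bytes):
    """Check whether this exact video file was ever recorded as approved."""
    content_hash = hashlib.sha256(video_bytes).hexdigest()
    return any(e["content_hash"] == content_hash for e in ledger)
```

Because each entry's hash covers the previous entry's hash, quietly rewriting an old record breaks every link after it, and a video that has been altered by even one byte no longer matches any recorded fingerprint.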

While AI companies like Alethea are moving to ensure that avatars based on real individuals aren't wrongly identified, the situation becomes a bit murkier when it comes to the question of representing groups, races, creeds, and other forms of identity. Alethea is rightly proud that the completely artificial avatars visually represent a variety of ages, races and sexes. However, companies could conceivably license an avatar to represent a marginalized group without actually hiring a person within that group to decide what the avatar will do or say.

"I don't know if I would call this tokenism, as that is difficult to identify without understanding the hiring company's intent," said Blackman. "Where this becomes deeply troubling is when avatars are used to represent a marginalized group without clearly pointing out the actor is an avatar. It's one thing for an African American woman avatar to say, 'I like ice cream.' It's an entirely different thing for an African American woman avatar to say she supports a particular political candidate. In the second case, the avatar is being used as social proof that real people of a certain type back a certain political idea. And there the deception is far more problematic."

"It always comes down to unintended consequences of technology," said Lelyveld. "Technology is neutral—it's only the implementation that has the power to be good or bad. Without a thoughtful approach to the cultural, moral and political implications of technology, it often drifts towards the bad. We need to make a conscious decision as we release new technology to ensure it moves towards the good."

When presented with the idea that his avatars might be used to misrepresent marginalized groups, Khan was thoughtful. "Yes, I can see that is an unintended consequence of our technology. We would like to encourage people to license the avatars of real people, who would have final approval over what their avatars say or do. As to what people do with our completely artificial avatars, we will have to consider that moving forward."

Lelyveld frankly sees the ability for advertisers to create avatars that are our assistants or even our friends as a greater moral concern. "Once our digital assistant or avatar becomes an integral part of our life, even a friend as it were, what's to stop marketers from having those digital friends make suggestions about what drink we buy, which shirt we wear or even which candidate we elect? The possibilities for bad actors to reach us through our digital circle are mind-boggling."

Ultimately, Blackman suggests, we as a society will need to make decisions about what matters to us. "We will need to build policies and write laws—tackling the biggest problems like political deep fakes first. And then we have to figure out how to make the penalties stiff enough to matter. Fining a multibillion-dollar company a few million for a major offense isn't likely to move the needle. The punishment will need to fit the crime."

Until then, media consumers will need to do their own due diligence—to do the difficult work of uncovering the often messy and deeply uncomfortable news that's the truth.

[Editor's Note: To read other articles in this special magazine issue, visit the beautifully designed e-reader version.]

Thanks to safety cautions from the COVID-19 pandemic, a strain of influenza has been completely eliminated.

If you were one of the millions who masked up, washed your hands thoroughly and socially distanced, pat yourself on the back—you may have helped change the course of human history.

Scientists say that thanks to these safety precautions, which were introduced in early 2020 as a way to stop transmission of the novel coronavirus that causes COVID-19, a strain of influenza has been completely eliminated. This marks the first time in human history that a virus has been wiped out through non-pharmaceutical interventions rather than pharmaceutical ones, such as vaccines.

The flu shot, explained

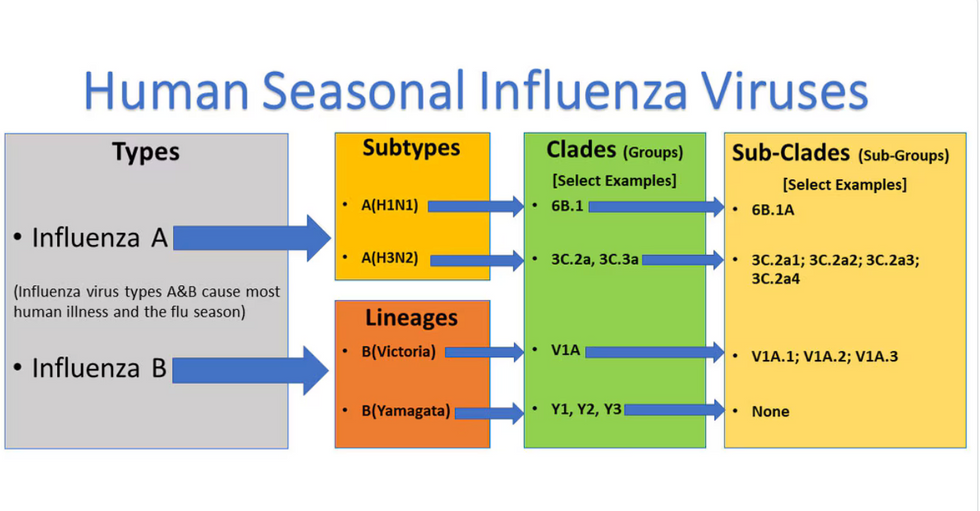

Influenza viruses type A and B are responsible for the majority of human illnesses and the flu season.

Centers for Disease Control

For more than a decade, flu shots have protected against two types of the influenza virus: type A and type B. While there are four types of influenza virus (A, B, C, and D), only types A, B, and C are capable of infecting humans, and only A and B cause seasonal epidemics. In other words, if you catch the flu during flu season, you’re most likely sick with flu type A or B.

Flu vaccines contain inactivated—or dead—influenza virus. These inactivated viruses can’t cause sickness in humans, but when administered as part of a vaccine, they teach a person’s immune system to recognize and kill those viruses when they’re encountered in the wild.

Each spring, a panel of experts gives a recommendation to the US Food and Drug Administration on which strains of each flu type to include in that year’s flu vaccine, depending on what surveillance data says is circulating and what they believe is likely to cause the most illness during the upcoming flu season. For the past decade, Americans have had access to vaccines that provide protection against two strains of influenza A and two lineages of influenza B, known as the Victoria lineage and the Yamagata lineage. But this year, the seasonal flu shot won’t include the Yamagata lineage, because it is no longer circulating among humans.

How Yamagata Disappeared

Flu surveillance data from the Global Initiative on Sharing All Influenza Data (GISAID) shows that the Yamagata lineage of flu type B has not been sequenced since April 2020.

Nature

Experts believe that the Yamagata lineage had already been in decline before the pandemic hit, likely because the strain was naturally less capable of infecting large numbers of people compared to the other strains. When the COVID-19 pandemic hit, the resulting safety precautions such as social distancing, isolating, hand-washing, and masking were enough to drive the virus into extinction completely.

Because the strain hasn’t been circulating since 2020, the FDA elected to remove the Yamagata strain from the seasonal flu vaccine. This will mark the first time since 2012 that the annual flu shot will be trivalent (three-component) rather than quadrivalent (four-component).

Should I still get the flu shot?

The flu shot will protect against fewer strains this year—but that doesn’t mean we should skip it. Influenza places a substantial health burden on the United States every year, responsible for hundreds of thousands of hospitalizations and tens of thousands of deaths. The flu shot has been shown to prevent millions of illnesses each year (more than six million during the 2022-2023 season). And while it’s still possible to catch the flu after getting the flu shot, studies show that people are far less likely to be hospitalized or die when they’re vaccinated.

Dropping the Yamagata strain from the seasonal vaccine has another unexpected benefit: it could make production of the flu vaccine faster and enable manufacturers to make more doses, helping countries that have a flu vaccine shortage and potentially saving millions more lives.

After his grandmother’s dementia diagnosis, one man invented a snack to keep her healthy and hydrated.

Founder Lewis Hornby and his grandmother Pat, sampling Jelly Drops—an edible gummy containing water and life-saving electrolytes.

On a visit to his grandmother’s nursing home in 2016, college student Lewis Hornby made a shocking discovery: Dehydration is a common (and dangerous) problem among seniors, especially those who are diagnosed with dementia.

Hornby’s grandmother, Pat, had always had difficulty keeping up her water intake as she got older, a common issue with seniors. As we age, our body composition changes, and we naturally hold less water than younger adults or children, so it’s easier to become dehydrated quickly if those fluids aren’t replenished. What’s more, our thirst signals diminish naturally as we age as well—meaning our body is not as good as it once was in letting us know that we need to rehydrate. This often creates a perfect storm that commonly leads to dehydration. In Pat’s case, her dehydration was so severe she nearly died.

When Lewis Hornby visited his grandmother at her nursing home afterward, he learned that dehydration especially affects people with dementia, as they often don’t feel thirst cues at all, or may not recognize how to use cups correctly. But while dementia patients often don’t remember to drink water, it seemed to Hornby that they had less trouble remembering to eat, particularly candy.

Hornby wanted to create a solution for elderly people who struggled to keep their fluid intake up. He spent the next eighteen months researching and designing a solution and securing funding for his project. In 2019, Hornby won a sizable grant from the Alzheimer’s Society, a UK-based care and research charity for people with dementia and their caregivers. Together, through the charity’s Accelerator Program, they created a bite-sized, sugar-free, edible jelly drop that looked and tasted like candy. The candy, called Jelly Drops, contained 95% water along with electrolytes, important minerals that are often lost during dehydration. The final product launched in 2020 and was an immediate success. The drops were able to provide extra hydration to the elderly, as well as help keep dementia patients safe, since dehydration commonly leads to confusion, hospitalization, and sometimes even death.

Not only did Jelly Drops quickly become a favorite snack among dementia patients in the UK, but they were able to provide an additional boost of hydration to hospital workers during the pandemic. In NHS coronavirus hospital wards, patients infected with the virus were regularly given Jelly Drops to keep their fluid levels normal—and staff members snacked on them as well, since long shifts and personal protective equipment (PPE) they were required to wear often left them feeling parched.

In April 2022, Jelly Drops launched in the United States. The company continues to donate 1% of its profits to help fund Alzheimer’s research.