“Deep Fake” Video Technology Is Advancing Faster Than Our Policies Can Keep Up

Artificial avatars for hire and sophisticated video manipulation carry profound implications for society.

This article is part of the magazine, "The Future of Science In America: The Election Issue," co-published by LeapsMag, the Aspen Institute Science & Society Program, and GOOD.

Alethea.ai sports a grid of faces smiling, blinking and looking about. Some are beautiful, some are oddly familiar, but all share one thing in common—they are fake.

Alethea creates "synthetic media," including digital faces that customers can license to say anything they choose in any voice they choose. Companies can hire these photorealistic avatars to appear in explainer videos, advertisements, multimedia projects or any other application they might dream up, without running auditions or paying talent agents or actor fees. Licenses begin at a mere $99. Companies may also license digital avatars of real celebrities or hire mashups created from real celebrities, including "Don Exotic" (a mashup of Donald Trump and Joe Exotic) or "Baby Obama" (a large-eared toddler that looks remarkably similar to a former U.S. President).

Naturally, in the midst of the COVID pandemic, the appeal is understandable. Rather than flying to a remote location to film a beer commercial, an actor can simply license their avatar to do the work for them. The question is where and when this tech will cross the line from legitimately licensed and authorized synthetic media to deep fakes: synthetic videos designed to deceive the public for financial and political gain.

The deception behind deep fakes is not new. From written quotes that are manipulated and taken out of context to audio quotes that are spliced together to mean something other than originally intended, misrepresentation has been around for centuries. What is new is the technology that allows this sort of seamless and sophisticated deception to be brought to the world of video.

"At one point, video content was considered more reliable, and had a higher threshold of trust," said Alethea CEO and co-founder, Arif Khan. "We think video is harder to fake and we aren't yet as sensitive to detecting those fakes. But the technology is definitely there."

"In the future, each of us will only trust about 15 people and that's it," said Phil Lelyveld, who serves as Immersive Media Program Lead at the Entertainment Technology Center at the University of Southern California. "It's already very difficult to tell true footage from fake. In the future, I expect this will only become more difficult."

As the U.S. 2020 Presidential Election nears, the potential moral and ethical implications of this technology are startling. A number of cases of truth tampering have recently been widely publicized. On August 5, President Donald Trump's campaign released an ad featuring several photos of Joe Biden that were altered to make it seem like he was hiding all alone in his basement. In one photo, at least ten people who had been sitting with Biden in the original shot were cut out. In other photos, Biden's image was cut out of scenes showing him at a nature preserve and praying in church to make it appear he was in that same basement. Recently, several videos of Speaker of the House Nancy Pelosi were slowed to about 75 percent of their original speed to make her speech sound slurred.

During a campaign event in Florida on September 15 of this year, former Vice President Joe Biden was introduced by Puerto Rican singer-songwriter Luis Fonsi. After he was introduced, Biden paid tribute to the singer-songwriter by holding up his cell phone and playing the hit song "Despacito". Shortly afterward, a doctored version of this video appeared on self-described parody site the United Spot, replacing "Despacito" with N.W.A.'s "F--- Tha Police". By September 16, Donald Trump had retweeted the video twice, first with the line "What is this all about" and then with the line "China is drooling. They can't believe this!" Twitter was quick to mark the video in these tweets as manipulated media.

Twitter had previously addressed several of Donald Trump's tweets, flagging a video shared in June as manipulated media and removing altogether a video shared by Trump in July showing a group promoting hydroxychloroquine as an effective cure for COVID-19. Many of these manipulated videos are ultimately flagged or taken down, but not before they are seen and shared by millions of online viewers.

These faked videos were exposed rather quickly, as they could be compared with the original, publicly available source material. But what happens when there is no original source material? How do we know what's true in a world where original videos created with avatars of celebrities and politicians can be manipulated to say virtually anything?

"This type of fake media is a profound threat to our democracy," said Reid Blackman, the CEO of VIRTUE, an ethics consultancy for AI leaders. "Democracy depends on well-informed citizens. When citizens can't or won't discern between real and fake news, the implications are huge."

In light of the importance of reliable information in the political system, there's a clear and present need to verify that the images and news we consume are authentic. So how can anyone ever know that the content they are viewing is real?

"This will not be a simple technological solution," said Blackman. "There is no 'truth' button to push to verify authenticity. There's plenty of blame and condemnation to go around. Purveyors of information have a responsibility to vet the reliability of their sources. And consumers also have a responsibility to vet their sources."

Yet the process of verifying sources has never been more challenging. More and more citizens are choosing to live in a "media bubble"—gathering and sharing news only from and with people who share their political leanings and opinions. At one time, United States broadcasters were bound by the Fairness Doctrine—requiring them to present controversial issues important to the public in a way that the FCC deemed honest, equitable and balanced. The repeal of this doctrine in 1987 paved the way for new forms of cable news channels such as Fox News and MSNBC that appealed to viewers with a particular point of view. The Internet has only exacerbated these tendencies. Social media algorithms are designed to keep people clicking within their comfort zones by presenting members with only the thoughts and opinions they want to hear.

"I sometimes laugh when I hear people tell me they can back a particular opinion they hold with research," said Blackman. "Having conducted a fair bit of true scientific research, I am aware that clicking on one article on the Internet hardly qualifies. But a surprising number of people believe that finding any source online that states the fact they choose to believe is the same as proving it true."

Back to the fundamental challenge: How do we as a society root out what's false online? Lelyveld suggests that it will begin by verifying things that are known to be true rather than trying to call out everything that is fake. "The EU called me in to talk about how to deal with fake news coming out of Russia," said Lelyveld. "I told them Hollywood has spent 100 years developing special effects technology to make things that are wholly fictional indistinguishable from the truth. I told them that you'll never chase down every source of fake news. You're better off focusing on what can be proved true."

Arif Khan agrees. "There are probably 100 accounts attributed to Elon Musk on Twitter, but only one has the blue checkmark," said Khan. "That means Twitter has verified that an account of public interest is real. That's what we're trying to do with our platform. Allow celebrities to verify that specific videos were licensed and authorized directly by them."

Alethea will use another key technology called blockchain to mark all authentic authorized videos with celebrity avatars. Blockchain uses a distributed ledger technology to make sure that no undetected changes have been made to the content. Think of the difference between editing a document in a traditional word processing program and editing in a distributed online editing system like Google Docs. In a traditional word processing program, you can edit and copy a document without revealing any changes. In a shared editing system like Google Docs, every person who shares the document can see a record of every edit, addition and copy made of any portion of the document. In a similar way, blockchain helps Alethea ensure that approved videos have not been copied or altered inappropriately.
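
The tamper-detection idea described above can be illustrated with a small hash chain. This is only a sketch, not Alethea's actual system: the function names (`record_hash`, `build_ledger`, `is_unaltered`) and the sample clips are hypothetical, and the "ledger" here is a plain list on one machine rather than a distributed network. It shows just the core property that each entry depends on the video's content and on the entry before it, so any later edit changes the recorded fingerprint.

```python
import hashlib
import json


def record_hash(video_bytes: bytes, prev_hash: str) -> str:
    """Hash a video's content together with the previous ledger entry's hash."""
    content_digest = hashlib.sha256(video_bytes).hexdigest()
    payload = json.dumps({"content": content_digest, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_ledger(videos: list[bytes]) -> list[str]:
    """Return one chained hash per video, in registration order."""
    ledger = []
    prev = "0" * 64  # placeholder "previous hash" for the very first entry
    for video in videos:
        prev = record_hash(video, prev)
        ledger.append(prev)
    return ledger


def is_unaltered(videos: list[bytes], ledger: list[str]) -> bool:
    """Recompute the chain from the videos and compare it with the recorded ledger."""
    return build_ledger(videos) == ledger


# Hypothetical stand-ins for real video files.
originals = [b"authorized clip A", b"authorized clip B"]
ledger = build_ledger(originals)
tampered = [b"authorized clip A", b"edited clip B"]

print(is_unaltered(originals, ledger))  # True
print(is_unaltered(tampered, ledger))   # False
```

In a real blockchain, many independent parties hold copies of the ledger, which is what makes it hard for any one of them to quietly rewrite an entry.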

While AI companies like Alethea are moving to ensure that avatars based on real individuals aren't wrongly identified, the situation becomes a bit murkier when it comes to the question of representing groups, races, creeds, and other forms of identity. Alethea is rightly proud that its completely artificial avatars visually represent a variety of ages, races and sexes. However, companies could conceivably license an avatar to represent a marginalized group without actually hiring a person within that group to decide what the avatar will do or say.

"I don't know if I would call this tokenism, as that is difficult to identify without understanding the hiring company's intent," said Blackman. "Where this becomes deeply troubling is when avatars are used to represent a marginalized group without clearly pointing out the actor is an avatar. It's one thing for an African American woman avatar to say, 'I like ice cream.' It's an entirely different thing for an African American woman avatar to say she supports a particular political candidate. In the second case, the avatar is being used as social proof that real people of a certain type back a certain political idea. And there the deception is far more problematic."

"It always comes down to unintended consequences of technology," said Lelyveld. "Technology is neutral—it's only the implementation that has the power to be good or bad. Without a thoughtful approach to the cultural, moral and political implications of technology, it often drifts towards the bad. We need to make a conscious decision as we release new technology to ensure it moves towards the good."

When presented with the idea that his avatars might be used to misrepresent marginalized groups, Khan was thoughtful. "Yes, I can see that is an unintended consequence of our technology. We would like to encourage people to license the avatars of real people, who would have final approval over what their avatars say or do. As to what people do with our completely artificial avatars, we will have to consider that moving forward."

Lelyveld frankly sees the ability for advertisers to create avatars that are our assistants or even our friends as a greater moral concern. "Once our digital assistant or avatar becomes an integral part of our life, even a friend as it were, what's to stop marketers from having those digital friends make suggestions about what drink we buy, which shirt we wear or even which candidate we elect? The possibilities for bad actors to reach us through our digital circle are mind-boggling."

Ultimately, Blackman suggests, we as a society will need to make decisions about what matters to us. "We will need to build policies and write laws—tackling the biggest problems like political deep fakes first. And then we have to figure out how to make the penalties stiff enough to matter. Fining a multibillion-dollar company a few million for a major offense isn't likely to move the needle. The punishment will need to fit the crime."

Until then, media consumers will need to do their own due diligence—to do the difficult work of uncovering the often messy and deeply uncomfortable news that's the truth.

[Editor's Note: To read other articles in this special magazine issue, visit the beautifully designed e-reader version.]

Nobel Prize goes to technology for mRNA vaccines

Katalin Karikó, pictured, and Drew Weissman won the Nobel Prize for advances in mRNA research that led to the first Covid vaccines.

When Drew Weissman received a call from Katalin Karikó in the early morning hours this past Monday, he assumed his longtime research partner was calling to share a nascent, nagging idea. Weissman, a professor of medicine at the Perelman School of Medicine at the University of Pennsylvania, and Karikó, a professor at Szeged University and an adjunct professor at UPenn, both struggle with sleep disturbances. Thus, middle-of-the-night discourses between the two, often over email, have been a staple of their friendship. But this time, Karikó had something more pressing and exciting to share: They had won the 2023 Nobel Prize in Physiology or Medicine.

The work for which they garnered the illustrious award and its accompanying $1,000,000 cash windfall was completed about two decades ago, wrought through long hours in the lab over many arduous years. But humanity collectively benefited from its life-saving outcome three years ago, when both Moderna and Pfizer/BioNTech’s mRNA vaccines against COVID were found to be safe and highly effective at preventing severe disease. Billions of doses have since been given out to protect humans from the upstart viral scourge.

Unlocking the power of mRNA

Weissman and Karikó unlocked mRNA vaccines for the world back in the early 2000s when they made a key breakthrough. Messenger RNA molecules are essentially instructions for cells’ ribosomes to make specific proteins, so in the 1980s and 1990s, researchers started wondering if sneaking mRNA into the body could trigger cells to manufacture antibodies, enzymes, or growth agents for protecting against infection, treating disease, or repairing tissues. But there was a big problem: injecting this synthetic mRNA triggered a dangerous, inflammatory immune response resulting in the mRNA’s destruction.

While most other researchers chose not to tackle this perplexing problem to instead pursue more lucrative and publishable exploits, Karikó stuck with it. The choice sent her academic career into depressing doldrums. Nobody would fund her work, publications dried up, and after six years as an assistant professor at the University of Pennsylvania, Karikó got demoted. She was going backward.

“I thought of going somewhere else, or doing something else,” Karikó told Stat in 2020. “I also thought maybe I’m not good enough, not smart enough. I tried to imagine: Everything is here, and I just have to do better experiments.”

A tale of tenacity

Collaborating with Drew Weissman, a new professor at the University of Pennsylvania, in the late 1990s helped provide Karikó with the tenacity to continue. Weissman nurtured a goal of developing a vaccine against HIV-1, and saw mRNA as a potential way to do it.

“For the 20 years that we’ve worked together before anybody knew what RNA is, or cared, it was the two of us literally side by side at a bench working together,” Weissman said in an interview with Adam Smith of the Nobel Foundation.

In 2005, the duo made their 2023 Nobel Prize-winning breakthrough, detailing it in a relatively small journal, Immunity. (Their paper was rejected by larger journals, including Science and Nature.) They figured out that chemically modifying the nucleoside bases that make up mRNA allowed the molecule to slip past the body’s immune defenses. Karikó and Weissman followed up that finding by creating mRNA that’s more efficiently translated within cells, greatly boosting protein production. In 2020, scientists at Moderna and BioNTech (where Karikó worked from 2013 to 2022) rushed to craft vaccines against COVID, putting their methods to life-saving use.

The future of vaccines

Buoyed by the resounding success of mRNA vaccines, scientists are now hurriedly researching ways to use mRNA medicine against other infectious diseases, cancer, and genetic disorders. The now ubiquitous efforts stand in stark contrast to Karikó and Weissman’s previously unheralded struggles years ago as they doggedly worked to realize a shared dream that so many others shied away from. Katalin Karikó and Drew Weissman were brave enough to walk a scientific path that very well could have ended in a dead end, and for that, they absolutely deserve their 2023 Nobel Prize.

This article originally appeared on Big Think, home of the brightest minds and biggest ideas of all time.

Scientists turn pee into power in Uganda

With conventional fuel cells as their model, researchers learned to use similar chemical reactions to make a fuel from microbes in pee.

At the edge of a dirt road flanked by trees and green mountains outside the town of Kisoro, Uganda, sits the concrete building that houses Sesame Girls School, where girls aged 11 to 19 can live, learn and, at least for a while, safely use a toilet. In many developing regions, toileting at night is especially dangerous for children. Without electrical power for lighting, kids may fall into the deep pits of the latrines through broken or unsteady floorboards. Girls are sometimes assaulted by men who hide in the dark.

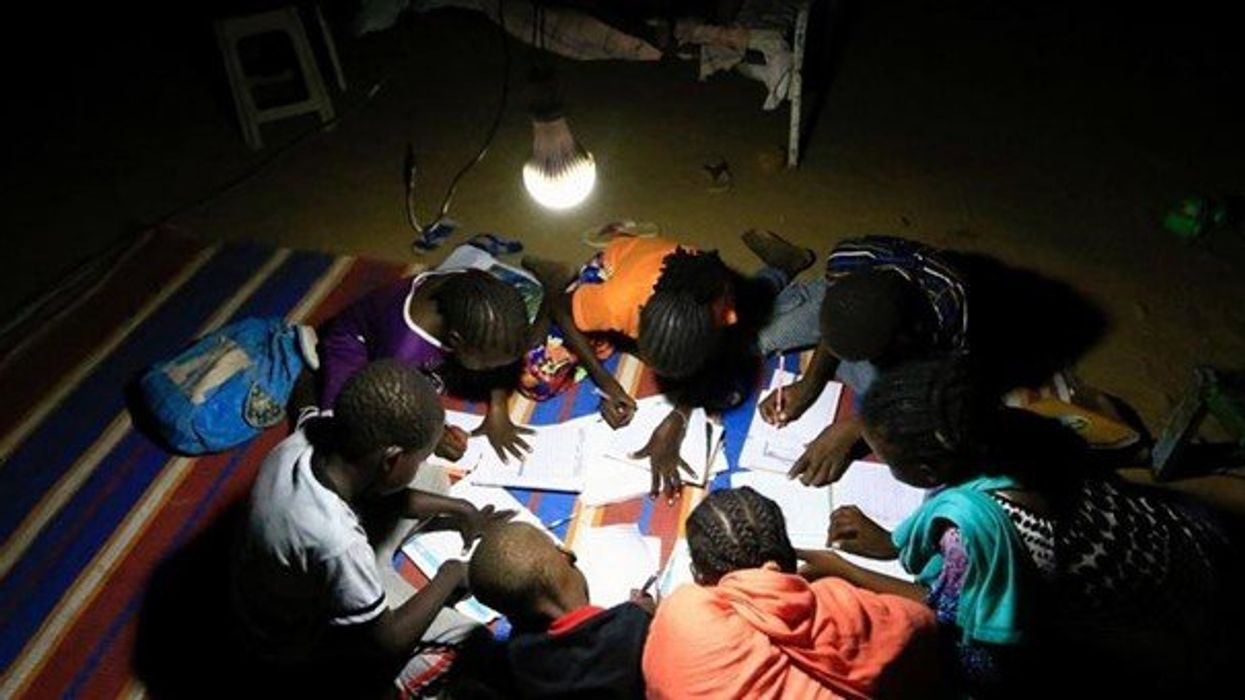

For the Sesame School girls, though, bright LED lights, connected to tiny gadgets, chased the fears away. They got to use new, clean toilets lit by the power of their own pee. Some girls even used the light provided by the latrines to study.

Urine, whether animal or human, is more than waste. It’s a cheap and abundant resource. Each day across the globe, 8.1 billion humans make 4 billion gallons of pee. Cows, pigs, deer, elephants and other animals add more. By spending money to get rid of it, we waste a renewable resource that can serve more than one purpose. Microorganisms that feed on nutrients in urine can be used in a microbial fuel cell that generates electricity – or "pee power," as the Sesame girls called it.

Plus, urine contains water, phosphorus, potassium and nitrogen, the key ingredients plants need to grow and survive. Human urine could replace about 25 percent of current nitrogen and phosphorus fertilizers worldwide and could save water for gardens and crops. The average U.S. resident flushes a toilet bowl containing only pee and paper about six to seven times a day, which adds up to about 3,500 gallons of water down the drain per year. Plus, cows in the U.S. produce 231 gallons of the stuff each year.
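
As a rough consistency check on the flushing figures above, the short calculation below works backward from the quoted annual total to the water used per flush. The only inputs are the article's own numbers (six to seven flushes a day, about 3,500 gallons a year); the function name `implied_gallons_per_flush` and the 365-day year are assumptions made for illustration.

```python
DAYS_PER_YEAR = 365
ANNUAL_GALLONS = 3_500    # annual total quoted in the article
FLUSHES_PER_DAY = (6, 7)  # daily range quoted in the article


def implied_gallons_per_flush(flushes_per_day: float) -> float:
    """Gallons per flush needed to reach the quoted annual total."""
    return ANNUAL_GALLONS / (flushes_per_day * DAYS_PER_YEAR)


for n in FLUSHES_PER_DAY:
    print(f"{n} flushes/day -> {implied_gallons_per_flush(n):.2f} gallons per flush")
# 6 flushes/day -> 1.60 gallons per flush
# 7 flushes/day -> 1.37 gallons per flush
```

In other words, the annual figure corresponds to roughly 1.4 to 1.6 gallons of water for every urine-only flush.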

Pee power

A conventional fuel cell uses chemical reactions to produce energy, as electrons move from one electrode to another to power a lightbulb or phone. Ioannis Ieropoulos, a professor and chair of Environmental Engineering at the University of Southampton in England, realized the same type of reaction could be used to make a fuel from microbes in pee.

Bacterial species like Shewanella oneidensis and Pseudomonas aeruginosa can consume carbon and other nutrients in urine and pop out electrons as a result of their digestion. In a microbial fuel cell, one electrode is covered in microbes, immersed in urine and kept away from oxygen. Another electrode is in contact with oxygen. When the microbes feed on nutrients, they produce the electrons that flow through the circuit from one electrode to another to combine with oxygen on the other side. As long as the microbes have fresh pee to chomp on, electrons keep flowing. And after the microbes are done with the pee, it can be used as fertilizer.

These microbes are easily found in wastewater treatment plants, ponds, lakes, rivers or soil. Keeping them alive is the easy part, says Ieropoulos. Once the cells start producing stable power, his group sequences the microbes and keeps using them.

Like many promising technologies, these devices won’t be easy to scale for mass consumption, says Kevin Orner, a civil engineering professor at West Virginia University. But the work is moving in the right direction. Ieropoulos’s device has shrunk from the size of about three packs of cards to that of a large glue stick. It looks and works much like a AAA battery and produces about the same power. By itself, one device can barely power a light bulb, but stacked together, the devices can do much more, just like photovoltaic cells in solar panels. His lab has produced 1,760 fuel cells stacked together, and with manufacturing support, there’s no theoretical ceiling, he says.
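
To see why stacking helps, here is a minimal sketch of idealized cell stacking: cells wired in series add their voltages, and strings wired in parallel add their currents. The per-cell voltage and current below are hypothetical placeholders, not measurements from Ieropoulos's devices, and the 10-by-176 layout is just one arbitrary way to arrange 1,760 cells. Real stacks lose some output to internal resistance and cell mismatch, which this sketch ignores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cell:
    volts: float
    amps: float


def stack(cell: Cell, in_series: int, in_parallel: int) -> Cell:
    """Idealized stack: series adds voltage, parallel adds current."""
    return Cell(volts=cell.volts * in_series, amps=cell.amps * in_parallel)


def power_watts(cell: Cell) -> float:
    """Electrical power is voltage times current."""
    return cell.volts * cell.amps


# Hypothetical single-cell output, chosen only for illustration.
single = Cell(volts=0.5, amps=0.002)

# One assumed layout for 1,760 cells: 176 parallel strings of 10 cells in series.
bank = stack(single, in_series=10, in_parallel=176)

print(f"single cell: {power_watts(single) * 1000:.1f} mW")
print(f"stack of {10 * 176} cells: {bank.volts:.1f} V, {power_watts(bank):.2f} W")
```

Under these idealized assumptions, total power is simply the number of cells times the power of one cell, whatever the wiring; the series and parallel split only sets the voltage and current delivered to the load.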

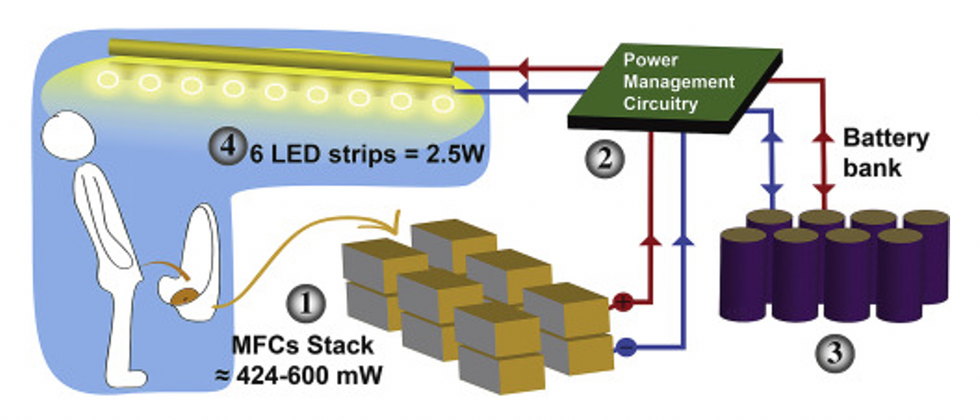

This image shows how the pee-powered system works. Pee feeds bacteria in the stack of fuel cells (1), which give off electrons (2) stored in parallel cylindrical cells (3). These cells are connected to a voltage regulator (4), which smooths out the electrical signal to ensure consistent power to the LED strips lighting the toilet.

Courtesy Ioannis Ieropoulos

Key to the long-term success of any urine reclamation effort, says Orner, is avoiding what he calls “parachute engineering,” when well-meaning scientists solve a problem with novel tech and then abandon it. “The way around that is to have either the need come from the community or to have an organization in a community that is committed to seeing a project operated and maintained,” he says.

Success with urine reclamation also depends on the economy. “If energy prices are low, it may not make sense to recover energy,” says Orner. “But right now, fertilizer prices worldwide are generally pretty high, so it may make sense to recover fertilizer and nutrients.” There are obstacles, too, such as few incentives for builders to incorporate urine recycling into new construction. And any hiccups like leaks or waste seepage will cost builders money and reputation. Right now, Orner says, the risks are just too high.

Despite the challenges, Ieropoulos envisions a future in which urine is passed through microbial fuel cells at wastewater treatment plants, retrofitted septic tanks, and building basements, and is then delivered to businesses to use as agricultural fertilizers. Although pure urine produces the most power, Ieropoulos’s devices also work with the mixed liquids of the wastewater treatment plants, so they can be retrofitted into urban wastewater utilities where they can make electricity from the effluent. And unlike solar cells, which are a common target of theft in some areas, nobody wants to steal a bunch of pee.

When Ieropoulos’s team returned to wrap up their pilot project 18 months later, the school’s director begged them to leave the fuel cells in place because they made a major difference in students’ lives. “We replaced it with a substantial photovoltaic panel,” says Ieropoulos. They couldn’t leave the units forever, he explained, because of intellectual property reasons: their funders worried about theft of both the technology and the idea. But the photovoltaic replacement could be stolen, too, leaving the girls in the dark.

The story repeated itself at another school, in Nairobi, Kenya, as well as in an informal settlement in Durban, South Africa. Each time, Ieropoulos vowed to return. Though the pandemic has delayed his promise, he is resolute about continuing his work—it is a moral and legal obligation. “We've made a commitment to ourselves and to the pupils,” he says. “That's why we need to go back.”

Urine as fertilizer

Modern-day industrial systems perpetuate a broken nutrient cycle. When plants grow, they use up nutrients in the soil. We eat the plants and excrete some of the nutrients, which pass into rivers and oceans. As a result, farmers must keep fertilizing the fields while our waste keeps fertilizing the waterways, where algae, overfertilized with nitrogen, phosphorus and other nutrients, grow out of control, sucking up oxygen that other marine species need to live. Few global communities remain untouched by the related challenges this broken chain creates: insufficient clean water, food, and energy, and too much human and animal waste.

The Rich Earth Institute in Vermont runs a community-wide urine nutrient recovery program, which collects urine from homes and businesses, transports it for processing, and then supplies it as fertilizer to local farms.

One solution to this broken cycle is reclaiming urine and returning it to the land. The Rich Earth Institute in Vermont is one of several organizations around the world working to divert and save urine for agricultural use. “The urine produced by an adult in one day contains enough fertilizer to grow all the wheat in one loaf of bread,” states its website.

Notably, while urine is not entirely sterile, it tends to harbor fewer pathogens than feces. That’s largely because urine has less organic matter and therefore less food for pathogens to feed on, but also because the urinary tract and the bladder have built-in antimicrobial defenses that kill many germs. In fact, the Rich Earth Institute says it’s safe to put your own urine onto crops grown for home consumption. Nonetheless, you’ll want to dilute it first because pee usually has too much nitrogen and can cause “fertilizer burn” if applied straight without dilution. Other projects to turn urine into fertilizer are in progress in Niger, South Africa, Kenya, Ethiopia, Sweden, Switzerland, The Netherlands, Australia, and France.

Eleven years ago, the Institute started a program that collects urine from homes and businesses, transports it for processing, and then supplies it as fertilizer to local farms. By 2021, the program included 180 donors producing over 12,000 gallons of urine each year. This urine is helping to fertilize hay fields at four partnering farms. Orner, the West Virginia professor, sees it as a success story. “They've shown how you can do this right, implementing it at a community-level scale.”