Genetically Sequencing Healthy Babies Yielded Surprising Results

A newborn and mother in the hospital - first touch. (© martin81/Shutterstock)

Today in Melrose, Massachusetts, Cora Stetson is the picture of good health, a bubbly, precocious 2-year-old. But Cora has two separate mutations in the gene that produces a critical enzyme called biotinidase, and her body produces only 40 percent of the normal levels of that enzyme.

That's enough to pass conventional newborn (heelstick) screening, but may not be enough for normal brain development, putting baby Cora at risk for seizures and cognitive impairment. But thanks to an experimental study in which Cora's DNA was sequenced after birth, this condition was discovered and she is being treated with a safe and inexpensive vitamin supplement.

Stories like these are beginning to emerge from the BabySeq Project, the first clinical trial in the world to systematically sequence healthy newborn infants. This trial was led by my research group with funding from the National Institutes of Health. While still controversial, it is pointing the way to a future in which adults, or even newborns, can receive comprehensive genetic analysis to determine their risk of future disease and create opportunities to prevent it.

Some believe that medicine is still not ready for genomic population screening, but others feel it is long overdue. After all, the Human Genome Project was completed in 2003, and with this milestone, it became feasible to sequence and interpret the genome of any human being. The costs have come down dramatically since then; an entire human genome can now be sequenced for about $800, although bioinformatic and medical interpretation can add another $200 to $2,000, depending upon the number of genes interrogated and the sophistication of the interpretive effort.
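
To make the arithmetic concrete, here is a minimal sketch that adds the quoted sequencing cost to the quoted range for interpretation. The constant and function names are illustrative only, and the figures are the approximate ones given above, not a price list.

```python
# Approximate figures quoted in the text, in US dollars.
SEQUENCING_COST_USD = 800
INTERPRETATION_COST_RANGE_USD = (200, 2000)


def total_cost_range(sequencing=SEQUENCING_COST_USD,
                     interpretation=INTERPRETATION_COST_RANGE_USD):
    """Return the (low, high) all-in cost of sequencing plus interpretation."""
    low, high = interpretation
    return sequencing + low, sequencing + high


low, high = total_cost_range()
print(f"Estimated all-in cost: ${low:,} to ${high:,}")
```

On these figures, a fully interpreted genome comes to roughly $1,000 to $2,800.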

Two-year-old Cora Stetson, whose DNA sequencing after birth identified a potentially dangerous genetic mutation in time for her to receive preventive treatment.

(Photo courtesy of Robert Green)

The ability to sequence the human genome yielded extraordinary benefits in scientific discovery, disease diagnosis, and targeted cancer treatment. But the ability of genomes to detect health risks in advance, to actually predict the medical future of an individual, has been mired in controversy and slow to manifest. In particular, the oft-cited vision that healthy infants could be genetically tested at birth in order to predict and prevent the diseases they would encounter, has proven to be far tougher to implement than anyone anticipated.

But in the last few years, the dream of predicting and preventing diseases through genomics, starting in childhood, is finally within reach. Why did it take so long? And what remains to be done?

Great Expectations

Part of the problem was the unrealistic expectations that had been building for years in advance of the genomic science itself. For example, the 1997 film Gattaca portrayed a near future in which the lifetime risk of disease was readily predicted the moment an infant was born. In the fanfare that accompanied the completion of the Human Genome Project, the notion of predicting and preventing future disease in an individual became a powerful meme that was used to inspire investment and public support for genomic research long before the tools were in place to make it happen.

Another part of the problem was the success of state-mandated newborn screening programs that began in the 1960s with biochemical "heel-stick" tests for babies with metabolic disorders. These programs have worked beautifully, costing only a few dollars per baby and saving thousands of infants from death and severe cognitive impairment. It seemed only logical that a new technology like genome sequencing would add power and promise to such programs. But instead of embracing the notion of newborn sequencing, newborn screening laboratories have thus far rejected the entire idea as too expensive, too ambiguous, and too threatening to the comfortable constituency that they had built within the public health framework.

Creating the Evidence Base for Preventive Genomics

Despite a number of obstacles, there are researchers who are exploring how to achieve the original vision of genomic testing as a tool for disease prediction and prevention. For example, in our NIH-funded MedSeq Project, we were the first to ask the question: "What can you find when you look as deeply as possible into the medical genomes of healthy individuals?"

Most people do not understand that genetic information comes in four separate categories: (1) dominant mutations putting the individual at risk for rare conditions like familial forms of heart disease or cancer, (2) recessive mutations putting the individual's children at risk for rare conditions like cystic fibrosis or PKU, (3) variants across the genome that can be tallied to construct polygenic risk scores for common conditions like heart disease or type 2 diabetes, and (4) variants that can influence drug metabolism or predict drug side effects such as the muscle pain that occasionally occurs with statin use.
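
The "tallying" behind a polygenic risk score can be sketched in a few lines. This is a simplified illustration, not the method used in our studies: it assumes the common weighted-sum form, in which each variant's weight is multiplied by the number of risk alleles a person carries (0, 1, or 2). The variant names and weights below are hypothetical.

```python
# Hypothetical per-variant weights, invented for illustration. Real scores
# use weights estimated from large genome-wide association studies.
EXAMPLE_WEIGHTS = {
    "variant_a": 0.12,
    "variant_b": 0.05,
    "variant_c": -0.03,
}


def polygenic_risk_score(risk_allele_counts, weights):
    """Sum each variant's weight times the number of risk alleles carried."""
    score = 0.0
    for variant, weight in weights.items():
        # Variants missing from the genotype are treated as zero risk alleles.
        count = risk_allele_counts.get(variant, 0)
        if count not in (0, 1, 2):
            raise ValueError(f"allele count for {variant} must be 0, 1, or 2")
        score += weight * count
    return score


# Hypothetical person: two copies of variant_a, one of variant_b, none of variant_c.
example_person = {"variant_a": 2, "variant_b": 1, "variant_c": 0}
print(round(polygenic_risk_score(example_person, EXAMPLE_WEIGHTS), 2))
```

A raw score like this only becomes meaningful when compared with the distribution of scores in a reference population, a step this sketch omits.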

The technological and analytical challenges of our study were formidable, because we decided to systematically interrogate over 5000 disease-associated genes and report results in all four categories of genetic information directly to the primary care physicians for each of our volunteers. We enrolled 200 adults and found that everyone who was sequenced had medically relevant polygenic and pharmacogenomic results, over 90 percent carried recessive mutations that could have been important to reproduction, and an extraordinary 14.5 percent carried dominant mutations for rare genetic conditions.
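
As a rough sense of scale, the sketch below converts those percentages into approximate head counts. It assumes all 200 enrolled adults were sequenced, which the text implies but does not state outright; the function name is illustrative.

```python
# Cohort size reported in the text.
ENROLLED_ADULTS = 200


def approximate_count(percent, cohort_size=ENROLLED_ADULTS):
    """Convert a reported percentage into an approximate number of people."""
    return round(cohort_size * percent / 100)


dominant_carriers = approximate_count(14.5)
recessive_carriers_floor = approximate_count(90)

print(f"Dominant mutations: about {dominant_carriers} of {ENROLLED_ADULTS}")
print(f"Recessive mutations: more than {recessive_carriers_floor} of {ENROLLED_ADULTS}")
```

Under that assumption, roughly 29 of the 200 adults carried a dominant mutation for a rare genetic condition.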

A few years later we launched the BabySeq Project. In this study, we restricted the number of genes to include only those with child/adolescent onset that could benefit medically from early warning, and even so, we found 9.4 percent carried dominant mutations for rare conditions.

At first, our interpretation of the high proportion of apparently healthy individuals with dominant mutations for rare genetic conditions was simple – that these conditions had lower "penetrance" than anticipated; in other words, only a small proportion of those who carried the dominant mutation would get the disease. If this interpretation were to hold, then genetic risk information might be far less useful than we had hoped.

But then we circled back with each adult or infant in order to examine and test them for any possible features of the rare disease in question. When we did this, we were surprised to see that in over a quarter of those carrying such mutations, there were already subtle signs of the disease in question that had not even been suspected! Now our interpretation was different. We now believe that genetic risk may be responsible for subclinical disease in a much higher proportion of people than has ever been suspected!

Meanwhile, colleagues of ours have been demonstrating that detailed analysis of polygenic risk scores can identify individuals at high risk for common conditions like heart disease. So adding up the medically relevant results in any given genome, we start to see that you can learn your risks for a rare monogenic condition, a common polygenic condition, a bad effect from a drug you might take in the future, or for having a child with a devastating recessive condition. Suddenly the information available in the genome of even an apparently healthy individual is looking more robust, and the prospect of preventive genomics is looking feasible.

Preventive Genomics Arrives in Clinical Medicine

There is still considerable evidence to gather before we can recommend genomic screening for the entire population. For example, it is important to make sure that families who learn about such risks do not suffer harms or waste resources from excessive medical attention. And many doctors don't yet have guidance on how to use such information with their patients. But our research is convincing many people that preventive genomics is coming and that it will save lives.

In fact, we recently launched a Preventive Genomics Clinic at Brigham and Women's Hospital where information-seeking adults can obtain predictive genomic testing with the highest quality interpretation and medical context, and be coached over time in light of their disease risks toward a healthier outcome. Insurance doesn't yet cover such testing, so patients must pay out of pocket for now, but they can choose from a menu of genetic screening tests, all of which are more comprehensive than consumer-facing products. Genetic counseling is available but optional. So far, this service is for adults only, but sequencing for children will surely follow soon.

As the costs of sequencing and other Omics technologies continue to decline, we will see both responsible and irresponsible marketing of genetic testing, and we will need to guard against unscientific claims. But at the same time, we must be far more imaginative and fast moving in mainstream medicine than we have been to date in order to claim the emerging benefits of preventive genomics where it is now clear that suffering can be averted, and lives can be saved. The future has arrived if we are bold enough to grasp it.

Funding and Disclosures:

Dr. Green's research is supported by the National Institutes of Health, the Department of Defense and through donations to The Franca Sozzani Fund for Preventive Genomics. Dr. Green receives compensation for advising the following companies: AIA, Applied Therapeutics, Helix, Ohana, OptraHealth, Prudential, Verily and Veritas; and is co-founder and advisor to Genome Medical, Inc, a technology and services company providing genetics expertise to patients, providers, employers and care systems.

A newly discovered brain cell may lead to better treatments for cognitive disorders

Swiss researchers have found a type of brain cell that appears to be a hybrid of the two other main types — and it could lead to new treatments for brain disorders.

Swiss researchers have discovered a third type of brain cell that appears to be a hybrid of the two other primary types — and it could lead to new treatments for many brain disorders.

The challenge: Most of the cells in the brain are either neurons or glial cells. While neurons use electrical and chemical signals to send messages to one another across small gaps called synapses, glial cells exist to support and protect neurons.

Astrocytes are a type of glial cell found near synapses. This close proximity to the place where brain signals are sent and received has led researchers to suspect that astrocytes might play an active role in the transmission of information inside the brain — a.k.a. “neurotransmission” — but no one has been able to prove the theory.

A new brain cell: Researchers at the Wyss Center for Bio and Neuroengineering and the University of Lausanne believe they’ve definitively proven that some astrocytes do actively participate in neurotransmission, making them a sort of hybrid of neurons and glial cells.

According to the researchers, this third type of brain cell, which they call a “glutamatergic astrocyte,” could offer a way to treat Alzheimer’s, Parkinson’s, and other disorders of the nervous system.

“Its discovery opens up immense research prospects,” said study co-director Andrea Volterra.

The study: Neurotransmission starts with a neuron releasing a chemical called a neurotransmitter, so the first thing the researchers did in their study was look at whether astrocytes can release the main neurotransmitter used by neurons: glutamate.

By analyzing astrocytes taken from the brains of mice, they discovered that certain astrocytes in the brain’s hippocampus did include the “molecular machinery” needed to release glutamate. They found evidence of the same machinery when they looked at datasets of human glial cells.

Finally, to demonstrate that these hybrid cells are actually playing a role in brain signaling, the researchers suppressed their ability to secrete glutamate in the brains of mice. This caused the rodents to experience memory problems.

But why? The researchers aren’t sure why the brain needs glutamatergic astrocytes when it already has neurons, but Volterra suspects the hybrid brain cells may help with the distribution of signals — a single astrocyte can be in contact with thousands of synapses.

“Often, we have neuronal information that needs to spread to larger ensembles, and neurons are not very good for the coordination of this,” researcher Ludovic Telley told New Scientist.

Looking ahead: More research is needed to see how the new brain cell functions in people, but the discovery that it plays a role in memory in mice suggests it might be a worthwhile target for Alzheimer’s disease treatments.

The researchers also found evidence during their study that the cell might play a role in brain circuits linked to seizures and voluntary movements, meaning it’s also a new lead in the hunt for better epilepsy and Parkinson’s treatments.

“Our next studies will explore the potential protective role of this type of cell against memory impairment in Alzheimer’s disease, as well as its role in other regions and pathologies than those explored here,” said Volterra.

Researchers claimed they built a breakthrough superconductor. Social media shot it down almost instantly.

In July, South Korean scientists posted a paper claiming they had achieved room-temperature superconductivity, a claim that was debunked within days.

Harsh Mathur was a graduate physics student at Yale University in late 1989 when faculty announced they had failed to replicate claims made by scientists at the University of Utah and the University of Southampton in England.

Such work is routine. Replicating or attempting to replicate the contraptions, calculations and conclusions crafted by colleagues is foundational to the scientific method. But in this instance, Yale’s findings were reported globally.

“I had a ringside view, and it was crazy,” recalls Mathur, now a professor of physics at Case Western Reserve University in Ohio.

Yale’s findings drew so much attention because initial experiments by Stanley Pons of Utah and Martin Fleischmann of Southampton led to a startling claim: They were able to fuse atoms at room temperature – a scientific El Dorado known as “cold fusion.”

Nuclear fusion powers the stars in the universe. However, star cores must be at least 23.4 million degrees Fahrenheit and under extraordinary pressure to achieve fusion. Pons and Fleischmann claimed they had created an almost limitless source of power achievable at any temperature.

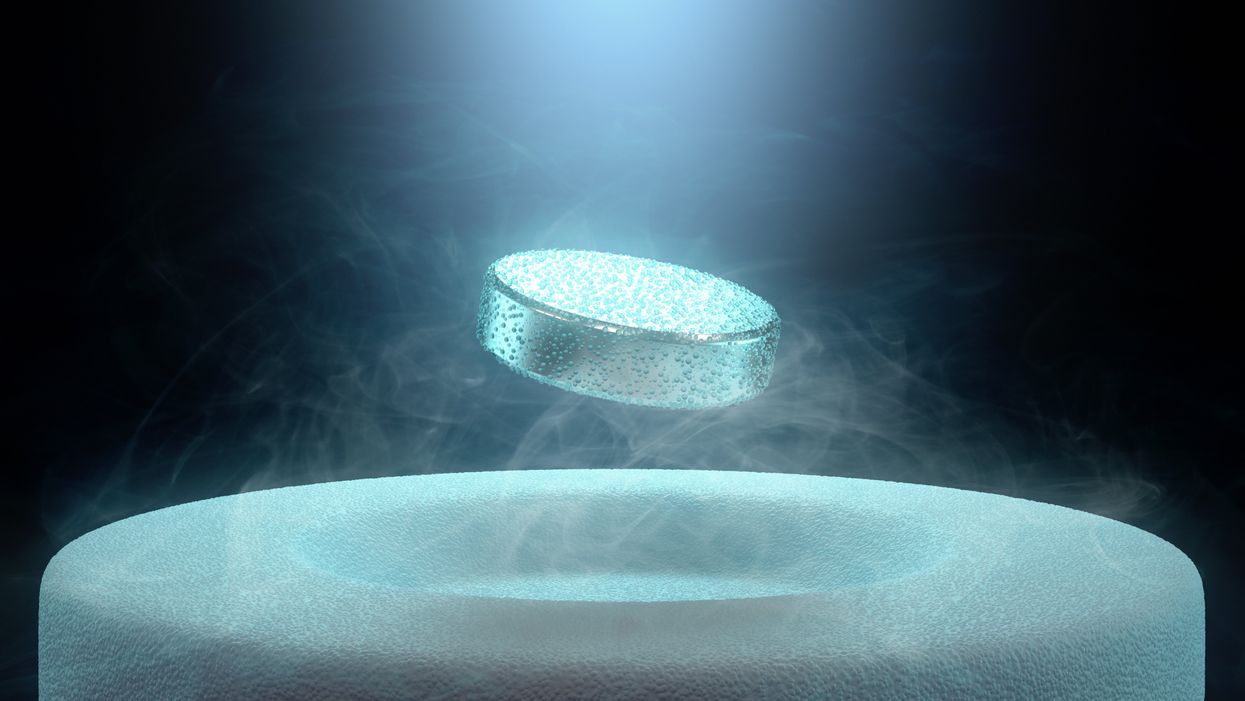

But about six months after they made their startling announcement, the pair’s findings were discredited by researchers at Yale and the California Institute of Technology. It was one of the first instances of a major scientific debunking covered by mass media.

Some scholars say the media attention for cold fusion stemmed partly from a dazzling announcement made three years prior in 1986: Scientists had created the first “superconductor” – material that could transmit electrical current with little or no resistance. It drew global headlines – and whetted the public’s appetite for announcements of scientific breakthroughs that could cause economic transformations.

But like fusion, superconductivity can only be achieved in mostly impractical circumstances: It must operate either at temperatures of negative 100 degrees Fahrenheit or colder, or under pressures of around 150,000 pounds per square inch. Superconductivity that functions in closer to a normal environment would cut energy costs dramatically while also opening vast possibilities for computing, space travel and other applications.
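
For readers more used to metric units, here is a small sketch that converts the two figures quoted above (negative 100 degrees Fahrenheit and 150,000 pounds per square inch). The function names are illustrative, and the pressure conversion factor is the standard value, rounded.

```python
# Standard conversion factor from pounds per square inch to pascals, rounded.
PASCALS_PER_PSI = 6894.757


def fahrenheit_to_celsius(deg_f):
    """Convert a temperature from degrees Fahrenheit to degrees Celsius."""
    return (deg_f - 32) * 5 / 9


def fahrenheit_to_kelvin(deg_f):
    """Convert a temperature from degrees Fahrenheit to kelvins."""
    return fahrenheit_to_celsius(deg_f) + 273.15


def psi_to_gigapascals(psi):
    """Convert a pressure from pounds per square inch to gigapascals."""
    return psi * PASCALS_PER_PSI / 1e9


print(f"{fahrenheit_to_celsius(-100):.1f} degrees Celsius")
print(f"{fahrenheit_to_kelvin(-100):.1f} kelvins")
print(f"{psi_to_gigapascals(150_000):.2f} gigapascals")
```

That works out to roughly negative 73 degrees Celsius (about 200 kelvins) and about 1 gigapascal, or on the order of 10,000 times atmospheric pressure.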

In July, a group of South Korean scientists posted material claiming they had created a copper-doped, lead-based crystalline substance called LK-99 that could achieve superconductivity at slightly above room temperature and at ambient pressure. The group partners with the Quantum Energy Research Centre, a privately held enterprise in Seoul, and their claims drew global headlines.

Their work was also debunked. But in the age of internet and social media, the process was compressed from half-a-year into days. And it did not require researchers at world-class universities.

One of the most compelling critiques came from Derrick VanGennep. Although he works in finance, he holds a Ph.D. in physics and held a postdoctoral position at Harvard. The South Korean researchers had posted a video of a nugget of LK-99 in what they claimed was the throes of the Meissner effect – the expulsion of magnetic fields from a superconductor, which would cause it to levitate above a magnet. Unless Hollywood magic is involved, only superconducting material can hover in this manner.

That claim made VanGennep skeptical, particularly since LK-99’s levitation appeared unenthusiastic at best. In fact, a corner of the material still adhered to the magnet near its center. He thought the video demonstrated ferromagnetism – two magnets repelling one another. He mixed powdered graphite with super glue, stuck iron filings to its surface and mimicked the behavior of LK-99 in his own video, which was posted alongside the researchers’ video.

VanGennep believes the boldness of the South Korean claim was what led him and others in the scientific community to question it so quickly.

“The swift replication attempts stemmed from the combination of the extreme claim, the fact that the synthesis for this material is very straightforward and fast, and the amount of attention that this story was getting on social media,” he says.

But practicing scientists were suspicious of the data as well. Michael Norman, director of the Argonne Quantum Institute at the Argonne National Laboratory just outside of Chicago, had doubts immediately.

“It wasn’t a very polished paper,” Norman says of the Korean scientists’ work. That opinion was reinforced, he adds, when it turned out the paper had been posted online by one of the researchers prior to seeking publication in a peer-reviewed journal. Although Norman and Mathur say that is routine with scientific research these days, Norman notes it was posted by one of the junior researchers over the doubts of two more senior scientists on the project.

Norman also raises doubts about the data reported. Among other issues, he observes that the samples created by the South Korean researchers contained traces of copper sulfide that could inadvertently amplify findings of conductivity.

The lack of the Meissner effect also caught Mathur’s attention. “Ferromagnets tend to be unstable when they levitate,” he says, adding that the video “just made me feel unconvinced. And it made me feel like they hadn't made a very good case for themselves.”

Will this saga hurt or even affect the careers of the South Korean researchers? Possibly not, if the previous fusion example is any indication. Despite being debunked, cold fusion claimants Pons and Fleischmann didn’t disappear. They moved their research to automaker Toyota’s IMRA laboratory in France, which along with the Japanese government spent tens of millions of dollars on their work before finally pulling the plug in 1998.

Fusion has since been achieved in laboratories, but because a laboratory cannot reproduce the density of a star’s core, it requires far higher temperatures: about 160 million degrees Fahrenheit. A recently released Government Accountability Office report concludes practical fusion likely remains at least decades away.

However, like Pons and Fleischmann, the South Korean researchers are not going anywhere. They claim that LK-99’s Meissner effect is being obscured by the fact that the substance is both ferromagnetic and diamagnetic. They have filed for a patent in their country. But for now, those claims remain chimerical.

In the meantime, opinion is divided on when a room-temperature superconductor will be achieved. VanGennep – who studied the issue during his graduate and postgraduate work – puts the chance of creating such a superconductor by 2050 at perhaps 50-50. Mathur believes it could happen sooner, but adds that research on the topic has been going on for nearly a century, and that it has seen many plateaus.

“There's always this possibility that there's going to be something out there that we're going to discover unexpectedly,” Norman notes. The only certainty in this age of social media is that it will be put through the rigors of replication instantly.