How 30 Years of Heart Surgeries Taught My Dad How to Live

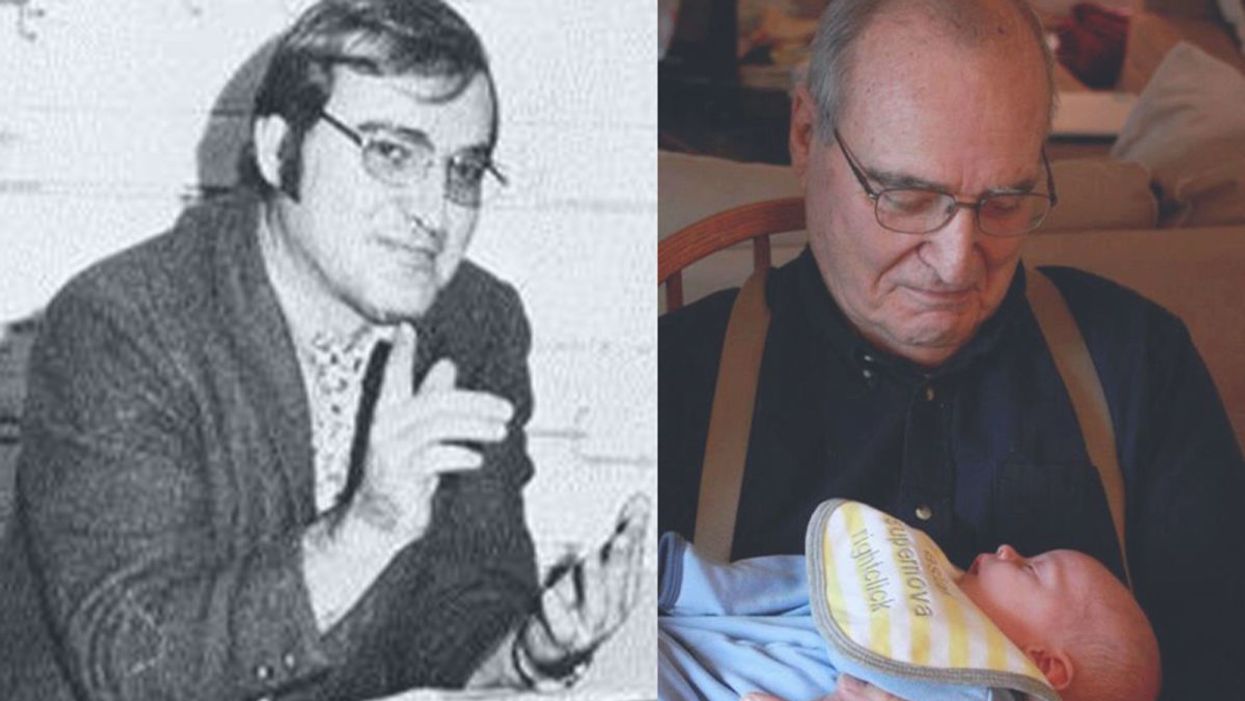

A mid-1970s photo of the author's father, and him holding a grandchild in 2012.

[Editor's Note: This piece is the winner of our 2019 essay contest, which prompted readers to reflect on the question: "How has an advance in science or medicine changed your life?"]

My father did not expect to live past the age of 50. Neither of his parents had done so. And he also knew how he would die: by heart attack, just as his father did.

My dad lived the first 40 years of his life with this knowledge buried in his bones. He started smoking at the age of 12, and was drinking before he was old enough to enlist in the Navy. He had a sarcastic, often cruel, sense of humor that could drive my mother, my sister and me into tears. He was not an easy man to live with, but that was okay by him - he didn't expect to live long.

In July of 1976, he had his first heart attack, days before his 40th birthday. I was 13, and my sister was 11. He needed quadruple bypass surgery. Our small town hospital was not equipped to do this type of surgery; he would have to be transported 40 miles away to a heart center. I understood this journey to mean that my father was seriously ill, and might die in the hospital, away from anyone he knew. And my father knew a lot of people - he was a popular high school English teacher, in a town with only three high schools. He knew generations of students and their parents. Our high school football team did a blood drive in his honor.

During a trip to Disney World in 1974, Dad was suffering from angina the entire time but refused to tell me (left) and my sister, Kris.

Quadruple bypass surgery in 1976 meant that my father's breastbone was cut open by a sternal saw. His ribcage was spread wide. After the bypass surgery, his bones would be pulled back together, and tied in place with wire. The wire would later be pulled out of his body when the bones knitted back together. It would take months before he was fully healed.

Dad was in the hospital for the rest of the summer and into the start of the new school year. Going to visit him was farther than I could ride my bicycle; it meant planning a trip in the car and going onto the interstate. The first time I was allowed to visit him in the ICU, he was lying in bed, and then pushed himself to sit up. The heart monitor he was attached to spiked up and down, and I fainted. I didn't know that heartbeats change when you move; television medical dramas never showed that - I honestly thought that I had driven my father into another heart attack.

Only a few short years after that, my father returned to the big hospital to have his heart checked with a new advance in heart treatment: a CT scan. This would allow doctors to check for clogged arteries and treat them before a fatal heart attack. The procedure identified a dangerous blockage, and my father was admitted immediately. This time, however, there was no need to break bones to get to the problem; my father was home within a month.

During the late 1970's, my father changed none of his habits. He was still smoking, and he continued to drink. But now, he was also taking pills - pills to manage the pain. He would pop a nitroglycerin tablet under his tongue whenever he was experiencing angina (I have a vivid memory of him doing this during my driving lessons), but he never mentioned that he was in pain. Instead, he would snap at one of us, or joke that we were killing him.

Being the kind of guy he was, my father never wanted to talk about his health. Any admission of pain implied that he couldn't handle pain. He would try to "muscle through" his angina, as if his willpower would be stronger than his heart muscle. His efforts would inevitably fail, leaving him angry and ready to lash out at anyone or anything. He would blame one of us as a reason he "had" to take valium or pop a nitro tablet. Dinners often ended in shouts and tears, and my father stalking to the television room with a bottle of red wine.

In the 1980's while I was in college, my father had another heart attack. But now, less than 10 years after his first, medicine had changed: our hometown hospital had the technology to run dye through my father's blood stream, identify the blockages, and do preventative care that involved statins and blood thinners. In one case, the doctors would take blood vessels from my father's legs, and suture them to replace damaged arteries around his heart. New advances in cholesterol medication and treatments for angina could extend my father's life by many years.

My father decided it was time to quit smoking. It was the first significant health step I had ever seen him take. Until then, he treated his heart issues as if they were inevitable, and there was nothing that he could do to change what was happening to him. Quitting smoking was the first sign that my father was beginning to move out of his fatalistic mindset - and the accompanying fatal behaviors that all pointed to an early death.

In 1986, my father turned 50. He had now lived longer than either of his parents. The habits he had learned from them could be changed. He had stopped smoking - what else could he do?

It was a painful decade for all of us. My parents divorced. My sister quit college. I moved to the other side of the country and stopped speaking to my father for almost 10 years. My father remarried, and divorced a second time. I stopped counting the number of times he was in and out of the hospital with heart-related issues.

In the early 1990's, my father reached out to me. I think he finally determined that, if he was going to have these extra decades of life, he wanted to make them count. He traveled across the country to spend a week with me, to meet my friends, and to rebuild his relationship with me. He did the same with my sister. He stopped drinking. He was more forthcoming about his health, and admitted that he was taking an antidepressant. His humor became less cruel and sadistic. He took an active interest in the world. He became part of my life again.

The 1990's was also the decade of angioplasty. My father explained it to me like this: during his next surgery, the doctors would place balloons in his arteries, and inflate them. The balloons would then be removed (or dissolve), leaving the artery open again for blood. He had several of these surgeries over the next decade.

When my father was in his 60's, he danced with me at my wedding. It was now 10 years past the time he had expected to live, and his life was transformed. He was living with a woman I had known since I was a child, and my wife and I would make regular visits to their home. My father retired from teaching, became an avid gardener, and always had a home project underway. He was a happy man.

Dancing with my father at my wedding in 1998.

Then, in the mid-2000's, my father faced another serious surgery. Years of arterial surgery, angioplasty, and damaged heart muscle were taking their toll. He opted to undergo a life-saving surgery at Cleveland Clinic. By this time, I was living in New York and my sister was living in Arizona. We both traveled to the Midwest to be with him. Dad was unconscious most of the time. We took turns holding his hand in the ICU, encouraging him to regain his will to live, and making outrageous threats if he didn't listen to us.

The nursing staff were wonderful. I remember telling them that my father had never expected to live this long. One of the nurses pointed out that most of the patients in their ward were in their 70's and 80's, and a few were in their 90's. She reminded me that just a decade earlier, most hospitals were unwilling to do the kind of surgery my father had received on patients his age. In the first decade of the 21st century, however, things were different: 90-year-olds could now undergo heart surgery and live another decade. My father was on the "young" side of their patients.

The Cleveland Clinic visit would be the last major heart surgery my father would have. Not that he didn't return to his local hospital a few times after that: he broke his neck -- not once, but twice! -- slipping on ice. And in the 2010's, he began to show signs of dementia, and needed more home care. His partner, who had her own health issues, was not able to provide the level of care my father needed. My sister invited him to move in with her, and in 2015, I traveled with him to Arizona to get him settled in.

After a few months, he accepted home hospice. We turned off his pacemaker when the hospice nurse explained to us that the job of a pacemaker is to literally jolt a patient's heart back into beating. The jolts were happening more and more frequently, causing my dad additional, unwanted pain.

My father in 2015, a few months before his death.

My father died in February 2016. His body carried the scars and implants of 30 years of cardiac surgeries, from the ugly breastbone scar from the 1970's to scars on his arms and legs from borrowed blood vessels, to the tiny red circles of robotic incisions from the 21st century. The arteries and veins feeding his heart were a patchwork of transplanted leg veins and fragile arterial walls pressed thinner by balloons.

And my father died with no regrets or unfinished business. He died in my sister's home, with his long-time partner by his side. Medical advancements had given him the opportunity to live 30 years longer than he expected. But he was the one who decided how to live those extra years. He was the one who made the years matter.

In The Fake News Era, Are We Too Gullible? No, Says Cognitive Scientist

Cognitive scientist Hugo Mercier says the real challenge is not fighting fake news, but figuring out "how to make it easier for people who say correct things to convince people."

One of the oddest political hoaxes of recent times was Pizzagate, in which conspiracy theorists claimed that Hillary Clinton and her 2016 campaign chief ran a child sex ring from the basement of a Washington, DC, pizzeria.

Millions of believers spread the rumor on social media, abetted by Russian bots; one outraged netizen stormed the restaurant with an assault rifle and shot open what he took to be the dungeon door. (It actually led to a computer closet.) Pundits cited the imbroglio as evidence that Americans had lost the ability to tell fake news from the real thing, putting our democracy in peril.

Such fears, however, are nothing new. "For most of history, the concept of widespread credulity has been fundamental to our understanding of society," observes Hugo Mercier in Not Born Yesterday: The Science of Who We Trust and What We Believe (Princeton University Press, 2020). In the fourth century BCE, he points out, the historian Thucydides blamed Athens' defeat by Sparta on a demagogue who hoodwinked the public into supporting idiotic military strategies; Plato extended that argument to condemn democracy itself. Today, atheists and fundamentalists decry one another's gullibility, as do climate-change accepters and deniers. Leftists bemoan the masses' blind acceptance of the "dominant ideology," while conservatives accuse those who do revolt of being duped by cunning agitators.

What's changed, all sides agree, is the speed at which bamboozlement can propagate. In the digital age, it seems, a sucker is born every nanosecond.

The Case Against Credulity

Yet Mercier, a cognitive scientist at the Jean Nicod Institute in Paris, thinks we've got the problem backward. To fight disinformation more effectively, he suggests, humans need to stop believing in one thing above all: our own gullibility. "We don't credulously accept whatever we're told—even when those views are supported by the majority of the population, or by prestigious, charismatic individuals," he writes. "On the contrary, we are skilled at figuring out who to trust and what to believe, and, if anything, we're too hard rather than too easy to influence."

He bases those contentions on a growing body of research in neuropsychiatry, evolutionary psychology, and other fields. Humans, Mercier argues, are hardwired to balance openness with vigilance when assessing communicated information. To gauge a statement's accuracy, we instinctively test it from many angles, including: Does it jibe with what I already believe? Does the speaker share my interests? Has she demonstrated competence in this area? What's her reputation for trustworthiness? And, with more complex assertions: Does the argument make sense?

This process, Mercier says, enables us to learn much more from one another than do other animals, and to communicate in a far more complex way—key to our unparalleled adaptability. But it doesn't always save us from trusting liars or embracing demonstrably false beliefs. To better understand why, leapsmag spoke with the author.

How did you come to write Not Born Yesterday?

In 2010, I collaborated with the cognitive scientist Dan Sperber and some other colleagues on a paper called "Epistemic Vigilance," which laid out the argument that evolutionarily, it would make no sense for humans to be gullible. If you can be easily manipulated and influenced, you're going to be in major trouble. But as I talked to people, I kept encountering resistance. They'd tell me, "No, no, people are influenced by advertising, by political campaigns, by religious leaders." I started doing more research to see if I was wrong, and eventually I had enough to write a book.

With all the talk about "fake news" these days, the topic has gotten a lot more timely.

Yes. But on the whole, I'm skeptical that fake news matters very much. And all the energy we spend fighting it is energy not spent on other pursuits that may be better ways of improving our informational environment. The real challenge, I think, is not how to shut up people who say stupid things on the internet, but how to make it easier for people who say correct things to convince people.

You start the book with an anecdote about your encounter with a con artist several years ago, who scammed you out of 20 euros. Why did you choose that anecdote?

Although I'm arguing that people aren't generally gullible, I'm not saying we're completely impervious to attempts at tricking us. It's just that we're much better than we think at resisting manipulation. And while there's a risk of trusting someone who doesn't deserve to be trusted, there's also a risk of not trusting someone who could have been trusted. You miss out on someone who could help you, or from whom you might have learned something—including figuring out who to trust.

You argue that in humans, vigilance and open-mindedness evolved hand-in-hand, leading to a set of cognitive mechanisms you call "open vigilance."

There's a common view that people start from a state of being gullible and easy to influence, and get better at rejecting information as they become smarter and more sophisticated. But that's not what really happens. It's much harder to get apes than humans to do anything they don't want to do, for example. And research suggests that over evolutionary time, the better our species became at telling what we should and shouldn't listen to, the more open to influence we became. Even small children have ways to evaluate what people tell them.

The most basic is what I call "plausibility checking": if you tell them you're 200 years old, they're going to find that highly suspicious. Kids pay attention to competence; if someone is an expert in the relevant field, they'll trust her more. They're likelier to trust someone who's nice to them. My colleagues and I have found that by age 2 ½, children can distinguish between very strong and very weak arguments. Obviously, these skills keep developing throughout your life.

But you've found that even the most forceful leaders—and their propaganda machines—have a hard time changing people's minds.

Throughout history, there's been this fear of demagogues leading whole countries into terrible decisions. In reality, these leaders are mostly good at feeling the crowd and figuring out what people want to hear. They're not really influencing [the masses]; they're surfing on pre-existing public opinion. We know from a recent study, for instance, that if you match cities in which Hitler gave campaign speeches in the late '20s through early '30s with similar cities in which he didn't give campaign speeches, there was no difference in vote share for the Nazis. Nazi propaganda managed to make Germans who were already anti-Semitic more likely to express their anti-Semitism or act on it. But Germans who were not already anti-Semitic were completely inured to the propaganda.

So why, in totalitarian regimes, do people seem so devoted to the ruler?

It's not a very complex psychology. In these regimes, the slightest show of discontent can be punished by death, or by you and your whole family being sent to a labor camp. That doesn't mean propaganda has no effect, but you can explain people's obedience without it.

What about cult leaders and religious extremists? Their followers seem willing to believe anything.

Prophets and preachers can inspire the kind of fervor that leads people to suicidal acts or doomed crusades. But history shows that the audience's state of mind and material conditions matter more than the leader's powers of persuasion. Only when people are ready for extreme actions can a charismatic figure provide the spark that lights the fire.

Once a religion becomes ubiquitous, the limits of its persuasive powers become clear. Every anthropologist knows that in societies that are nominally dominated by orthodox belief systems—whether Christian or Muslim or anything else—most people share a view of God, or the spirit, that's closer to what you find in societies that lack such religions. In the Middle Ages, for instance, you have records of priests complaining of how unruly the people are—how they spend the whole Mass chatting or gossiping, or go on pilgrimages mostly because of all the prostitutes and wine-drinking. They continue pagan practices. They resist attempts to make them pay tithes. It's very far from our image of how much people really bought the dominant religion.

And what about all those wild rumors and conspiracy theories on social media? Don't those demonstrate widespread gullibility?

I think not, for two reasons. One is that most of these false beliefs tend to be held in a way that's not very deep. People may say Pizzagate is true, yet that belief doesn't really interact with the rest of their cognition or their behavior. If you really believe that children are being abused, then trying to free them is the moral and rational thing to do. But the only person who did that was the guy who took his assault weapon to the pizzeria. Most people just left one-star reviews of the restaurant.

The other reason is that most of these beliefs actually play some useful role for people. Before any ethnic massacre, for example, rumors circulate about atrocities having been committed by the targeted minority. But those beliefs aren't what's really driving the phenomenon. In the horrendous pogrom of Kishinev, Moldova, 100 years ago, you had these stories of blood libel—a child disappeared, typical stuff. And then what did the Christian inhabitants do? They raped the [Jewish] women, they pillaged the wine stores, they stole everything they could. They clearly wanted to get that stuff, and they made up something to justify it.

Where do skeptics like climate-change deniers and anti-vaxxers fit into the picture?

Most people in most countries accept that vaccination is good and that climate change is real and man-made. These ideas are deeply counter-intuitive, so the fact that scientists were able to get them across is quite fascinating. But the environment in which we live is vastly different from the one in which we evolved. There's a lot more information, which makes it harder to figure out who we can trust. The main effect is that we don't trust enough; we don't accept enough information. We also rely on shortcuts and heuristics—coarse cues of trustworthiness. There are people who abuse these cues. They may have a PhD or an MD, and they use those credentials to help them spread messages that are not true and not good. Mostly, they're affirming what people want to believe, but they may also be changing minds at the margins.

How can we improve people's ability to resist that kind of exploitation?

I wish I could tell you! That's literally my next project. Generally speaking, though, my advice is very vanilla. The mainstream media is extremely reliable. The scientific consensus is extremely reliable. If you trust those sources, you'll go wrong in a very few cases, but on the whole, they'll probably give you good results. Yet a lot of the problems that we attribute to people being stupid and irrational are not entirely their fault. If governments were less corrupt, if the pharmaceutical companies were irreproachable, these problems might not go away—but they would certainly be minimized.

“Virtual Biopsies” May Soon Make Some Invasive Tests Unnecessary

Patient Dan Chessin met recently with Dr. Lee Ponsky in Ponsky's office at University Hospitals to discuss Chessin's history with prostate cancer.

At his son's college graduation in 2017, Dan Chessin felt "terribly uncomfortable" sitting in the stadium. The bouts of pain persisted, and after months of monitoring, a urologist took biopsies of suspicious areas in his prostate.

"In my case, the biopsies came out cancerous," says Chessin, 60, who underwent robotic surgery for intermediate-grade prostate cancer at University Hospitals Cleveland Medical Center.

Although he needed a biopsy, as most patients today do, advances in radiologic technology may make such invasive measures unnecessary in the future. Researchers are developing better imaging techniques and algorithms that draw on artificial intelligence, a branch of computer science in which machines learn and execute tasks that typically require human brain power.

This innovation may enhance diagnostic precision and promptness. But it also brings ethical concerns to the forefront of the conversation, highlighting the potential for invasion of privacy, unequal patient access, and less physician involvement in patient care.

A National Academy of Medicine Special Publication, released in December, emphasizes that setting industry-wide standards for use in patient care is essential to AI's responsible and transparent implementation as the industry grapples with voluminous quantities of data. The technology should be viewed as a tool to supplement decision-making by highly trained professionals, not to replace it.

MRI (a test that uses powerful magnets, radio waves, and a computer to take detailed images inside the body) has become highly accurate in detecting aggressive prostate cancer, but its reliability is more limited in identifying low and intermediate grades of malignancy. That's why Chessin opted to have his prostate removed rather than take the chance of missing anything more suspicious that could develop.

His urologist, Lee Ponsky, says AI's most significant impact is yet to come. He hopes University Hospitals Cleveland Medical Center's collaboration with research scientists at its academic affiliate, Case Western Reserve University, will lead to the invention of a virtual biopsy.

A National Cancer Institute five-year grant is funding the project, launched in 2017, to develop a combined MRI and computerized tool to support more accurate detection and grading of prostate cancer. Such a tool would be "the closest to a crystal ball that we can get," says Ponsky, professor and chairman of the Urology Institute.

In situations where AI has guided diagnostics, radiologists' interpretations of breast, lung, and prostate lesions have improved as much as 25 percent, says Anant Madabhushi, a biomedical engineer and director of the Center for Computational Imaging and Personalized Diagnostics at Case Western Reserve, who is collaborating with Ponsky. "AI is very nascent," Madabhushi says, estimating that fewer than 10 percent of niche academic medical centers have used it. "We are still optimizing and validating the AI and virtual biopsy technology."

In October, several North American and European professional organizations of radiologists, imaging informaticists, and medical physicists released a joint statement on the ethics of AI. "Ultimate responsibility and accountability for AI remains with its human designers and operators for the foreseeable future," reads the statement, published in the Journal of the American College of Radiology. "The radiology community should start now to develop codes of ethics and practice for AI that promote any use that helps patients and the common good and should block use of radiology data and algorithms for financial gain without those two attributes."

The statement's lead author, radiologist J. Raymond Geis, says "there's no question" that machines equipped with artificial intelligence "can extract more information than two human eyes" by spotting very subtle patterns in pixels. Yet, such nuances are "only part of the bigger picture of taking care of a patient," says Geis, a senior scientist with the American College of Radiology's Data Science Institute. "We have to be able to combine that with knowledge of what those pixels mean."

Setting ethical standards is high on all physicians' radar because the intricacies of each patient's medical record are factored into the computer's algorithm, which, in turn, may be used to help interpret other patients' scans, says radiologist Frank Rybicki, vice chair of operations and quality at the University of Cincinnati's department of radiology. Although obtaining patients' informed consent in writing is currently necessary, ethical dilemmas arise if and when patients have a change of heart about the use of their private health information. Removing an individual's data will likely be possible for some algorithms but not others, Rybicki says.

The information is de-identified to protect patient privacy. Using it to advance research is akin to analyzing human tissue removed in surgical procedures with the goal of discovering new medicines to fight disease, says Maryellen Giger, a University of Chicago medical physicist who studies computer-aided diagnosis in cancers of the breast, lung, and prostate, as well as bone diseases. Physicians who become adept at using AI to augment their interpretation of imaging will be ahead of the curve, she says.

As with other new discoveries, patient access and equality come into play. While AI appears to "have potential to improve over human performance in certain contexts," an algorithm's design may result in greater accuracy for certain groups of patients, says Lucia M. Rafanelli, a political theorist at The George Washington University. This "could have a disproportionately bad impact on one segment of the population."

Overreliance on new technology also poses a concern when humans "outsource the process to a machine." Over time, they may cease developing and refining the skills they used before the invention became available, says Chloe Bakalar, a visiting research collaborator at Princeton University's Center for Information Technology Policy.

Striking the right balance in the rollout of the technology is key. Rushing to integrate AI in clinical practice may cause harm, whereas holding back too long could undermine its ability to be helpful. Proper governance becomes paramount. "AI is a paradigm shift with magic power and great potential," says Ge Wang, a biomedical imaging professor at Rensselaer Polytechnic Institute in Troy, New York. "It is only ethical to develop it proactively, validate it rigorously, regulate it systematically, and optimize it as time goes by in a healthy ecosystem."