How a Nobel Prize Winner Fought Her Family, Nazis, and Bombs to Change Our Understanding of Cells Forever

Rita Levi-Montalcini survived the Nazis and eventually won a Nobel Prize for her work to understand why certain cells grow so quickly.

When Rita Levi-Montalcini decided to become a scientist, she was determined that nothing would stand in her way. And from the beginning, that determination was put to the test. Before Levi-Montalcini became a Nobel Prize-winning neurobiologist, the first to discover and isolate a crucial chemical called Nerve Growth Factor (NGF), she would have to battle both the sexism within her own family and the racism and fascism that were slowly engulfing her country.

Levi-Montalcini was born to two loving parents in Turin, Italy at the turn of the 20th century. She and her twin sister, Paola, were the youngest of the family's four children, and Levi-Montalcini described her childhood as "filled with love and reciprocal devotion." But while her parents were loving, supportive and "highly cultured," her father refused to let his three daughters engage in any schooling beyond the basics. "He loved us and had a great respect for women," she later explained, "but he believed that a professional career would interfere with the duties of a wife and mother."

At age 20, Levi-Montalcini had finally had enough. "I realized that I could not possibly adjust to a feminine role as conceived by my father," she is quoted as saying, and she asked his permission to finish high school and pursue a career in medicine. When her father reluctantly agreed, Levi-Montalcini was ecstatic: In just under a year, she managed to catch up on her mathematics, graduate from high school, and enroll in medical school in Turin.

By 1936, Levi-Montalcini had graduated from medical school at the top of her class and decided to stay on at the University of Turin as a research assistant for histologist and human anatomy professor Giuseppe Levi. Levi-Montalcini started studying nerve cells and nerve fibers – the tiny, slender tendrils that are threaded throughout our nerves and that determine what information each nerve can transmit. But it wasn't long before another enormous obstacle to her scientific career reared its head.

Science Under a Fascist Regime

Two years into her research assistant position, Levi-Montalcini was fired, along with every other "non-Aryan Italian" who held an academic or professional position, thanks to a series of antisemitic laws passed by Italy's then-leader Benito Mussolini. Forced out of her academic position, Levi-Montalcini went to Belgium for a fellowship at a neurological institute in Brussels – but then was forced back to Turin when the German army invaded.

Levi-Montalcini decided to keep researching. She and Giuseppe Levi built a makeshift lab in Levi-Montalcini's apartment, borrowing chicken eggs from local farmers and using sewing needles to dissect them. By dissecting the chicken embryos in her bedroom laboratory, she was able to see how nerve fibers formed and died. The two continued this research until they were interrupted again – this time, by British air raids. Levi-Montalcini fled to a country cottage to continue her research, and then two years later was forced into hiding when the German army invaded Italy. Levi-Montalcini and her family assumed different identities and lived with non-Jewish friends in Florence to survive the Holocaust. Despite all of this, Levi-Montalcini continued her work, dissecting chicken embryos in her hiding place until the end of the war.

A Post-War Discovery

Several years after the war, when Levi-Montalcini was once again working at the University of Turin, a German embryologist named Viktor Hamburger invited her to Washington University in St. Louis. Hamburger was impressed by Levi-Montalcini's research with her chicken embryos, and secured an opportunity for her to continue her work in America. The invitation would "change the course of my life," Levi-Montalcini would later recall.

During her fellowship, Levi-Montalcini grew tumors in mice and then transferred them to chick embryos in order to see how they would affect the chickens. To her surprise, she noticed that introducing the tumor samples caused nerve fibers to grow rapidly. From this, Levi-Montalcini discovered and was able to isolate the protein responsible for this rapid growth. She later named it Nerve Growth Factor, or NGF.

From there, Levi-Montalcini and her team launched new experiments to test NGF, injecting it and repressing it to see the effect it had in a test subject's body. When the team injected NGF into embryonic mice, they observed nerve growth, as well as the mouse pups developing faster – their eyes opening earlier and their teeth coming in sooner – than the untreated group. When the team purified the NGF extract, however, it had no effect, leading the team to believe that something else in the crude extract of NGF was influencing the growth of the newborn mice. Stanley Cohen, Levi-Montalcini's colleague, identified another growth factor called EGF – epidermal growth factor – that caused the mouse pups' eyes and teeth to grow so quickly.

Levi-Montalcini continued to experiment with NGF for the next several decades at Washington University, illuminating how NGF works in our body. When Levi-Montalcini injected newborn mice with an antiserum for NGF, for example, her team found that it "almost completely deprived the animals of a sympathetic nervous system." Other experiments done by Levi-Montalcini and her colleagues helped show the role that NGF plays in other important biological processes, such as the regulation of our immune system and ovulation.

"The discovery of NGF really changed the world in which we live, because now we knew that cells talk to other cells, and that they use soluble factors. It was hugely important," said Bill Mobley, Chair of the Department of Neurosciences at the University of California, San Diego School of Medicine.

Her Lasting Legacy

After years of setbacks, Levi-Montalcini's groundbreaking work was recognized in 1986, when she was awarded the Nobel Prize in Medicine for her discovery of NGF (Cohen, her colleague who discovered EGF, shared the prize). Researchers continue to study NGF even to this day, and the continued research has been able to increase our understanding of diseases like HIV and Alzheimer's.

Levi-Montalcini never stopped researching either: In January 2012, at the age of 102, Levi-Montalcini published her last research paper in the journal PNAS, making her the oldest member of the National Academy of Sciences to do so. Before she died in December 2012, she encouraged other scientists who would suffer setbacks in their careers to keep pursuing their passions. "Don't fear the difficult moments," Levi-Montalcini is quoted as saying. "The best comes from them."

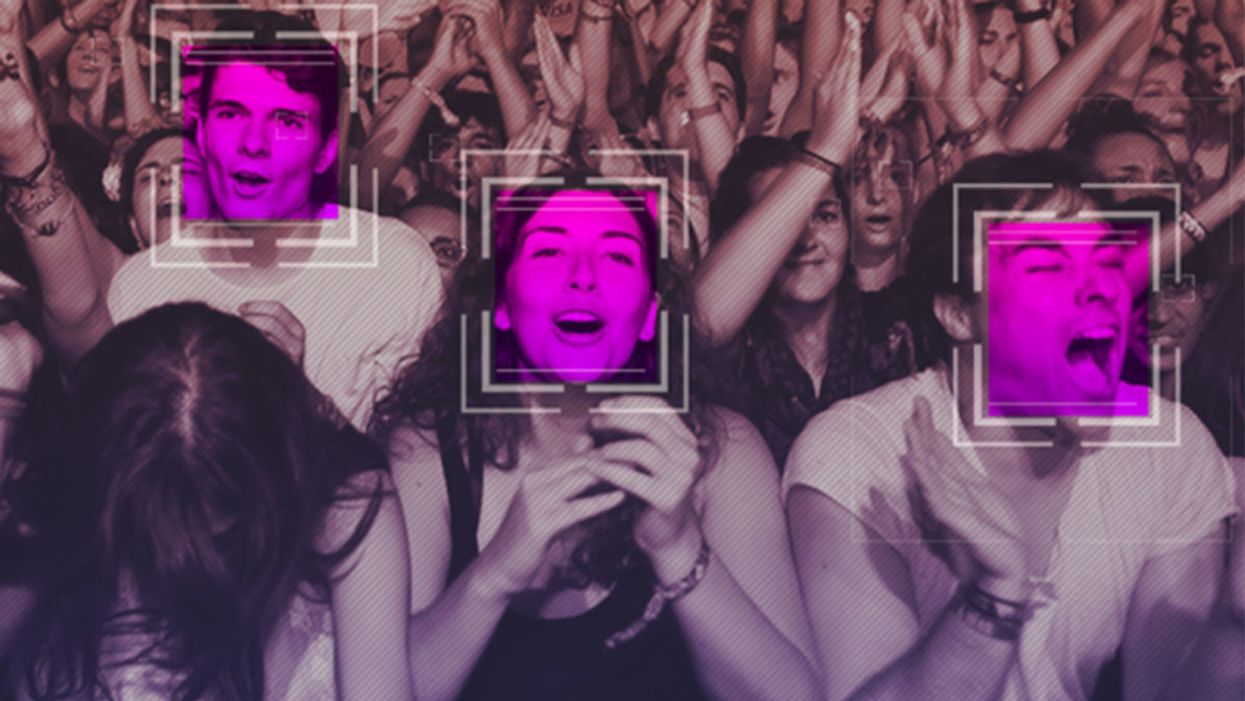

The Case for an Outright Ban on Facial Recognition Technology

Some experts worry that facial recognition technology is a dangerous enough threat to our basic rights that it should be entirely banned from police and government use.

[Editor's Note: This essay is in response to our current Big Question, which we posed to experts with different perspectives: "Do you think the use of facial recognition technology by the police or government should be banned? If so, why? If not, what limits, if any, should be placed on its use?"]

In a surprise appearance at the tail end of Amazon's much-hyped annual product event last month, CEO Jeff Bezos casually told reporters that his company is writing its own facial recognition legislation.

It seems that when you're the wealthiest human alive, there's nothing strange about your company (the largest in the world profiting from the spread of face surveillance technology) writing the rules that govern it.

But if lawmakers and advocates fall into Silicon Valley's trap of "regulating" facial recognition and other forms of invasive biometric surveillance, that's exactly what will happen.

Industry-friendly regulations won't fix the dangers inherent in widespread use of face scanning software, whether it's deployed by governments or for commercial purposes. The use of this technology in public places and for surveillance purposes should be banned outright, and its use by private companies and individuals should be severely restricted. As artificial intelligence expert Luke Stark wrote, it's dangerous enough that it should be outlawed for "almost all practical purposes."

Like biological or nuclear weapons, facial recognition poses such a profound threat to the future of humanity and our basic rights that any potential benefits are far outweighed by the inevitable harms.

We live in cities and towns with an exponentially growing number of always-on cameras, installed in everything from cars to children's toys to Amazon's police-friendly doorbells. The use of computer algorithms to analyze massive databases of footage and photographs could render human privacy extinct. It's a world where nearly everything we do, everywhere we go, everyone we associate with, and everything we buy — or look at and even think of buying — is recorded and can be tracked and analyzed at a mass scale for unimaginably awful purposes.

Biometric tracking enables the automated and pervasive monitoring of an entire population. There's ample evidence that this type of dragnet mass data collection and analysis is not useful for public safety, but it's perfect for oppression and social control.

Law enforcement defenders of facial recognition often state that the technology simply lets them do what they would be doing anyway, only faster: compare footage or photos against mug shots, driver's licenses, or other databases. And they're not wrong. But the speed and automation enabled by artificial intelligence-powered surveillance fundamentally change the impact of that surveillance on our society. Being able to do something exponentially faster, and using significantly fewer human and financial resources, alters the nature of that thing. The Fourth Amendment becomes meaningless in a world where private companies record everything we do and provide governments with easy tools to request and analyze footage from a growing, privately owned panopticon.

Tech giants like Microsoft and Amazon insist that facial recognition will be a lucrative boon for humanity, as long as there are proper safeguards in place. This disingenuous call for regulation is straight out of the same lobbying playbook that telecom companies have used to attack net neutrality and Silicon Valley has used to scuttle meaningful data privacy legislation. Companies are calling for regulation because they want their corporate lawyers and lobbyists to help write the rules of the road, to ensure those rules are friendly to their business models. They're trying to skip the debate about what role, if any, technology this uniquely dangerous should play in a free and open society. They want to rush ahead to the discussion about how we roll it out.

Facial recognition is spreading very quickly. But backlash is growing too. Several cities have already banned government entities, including police and schools, from using biometric surveillance. Others have local ordinances in the works, and there's state legislation brewing in Michigan, Massachusetts, Utah, and California. Meanwhile, there is growing bipartisan agreement in the U.S. Congress to rein in government use of facial recognition. We've also seen significant backlash to facial recognition growing in the U.K., within the European Parliament, and in Sweden, which recently banned its use in schools following a fine under the General Data Protection Regulation (GDPR).

At least two frontrunners in the 2020 presidential campaign have backed a ban on law enforcement use of facial recognition. Many of the largest music festivals in the world responded to Fight for the Future's campaign and committed to not use facial recognition technology on music fans.

There has been widespread reporting on the fact that existing facial recognition algorithms exhibit systemic racial and gender bias, and are more likely to misidentify people with darker skin, or who are not perceived by a computer to be a white man. Critics are right to highlight this algorithmic bias. Facial recognition is being used by law enforcement in cities like Detroit right now, and the racial bias baked into that software is doing harm. It's exacerbating existing forms of racial profiling and discrimination in everything from public housing to the criminal justice system.

But the companies that make facial recognition assure us this bias is a bug, not a feature, and that they can fix it. And they might be right. Face scanning algorithms for many purposes will improve over time. But facial recognition becoming more accurate doesn't make it less of a threat to human rights. This technology is dangerous when it's broken, but at a mass scale, it's even more dangerous when it works. And it will still disproportionately harm our society's most vulnerable members.

We need spaces that are free from government and societal intrusion in order to advance as a civilization. If technology makes it so that laws can be enforced 100 percent of the time, there is no room to test whether those laws are just. If the U.S. government had ubiquitous facial recognition surveillance 50 years ago when homosexuality was still criminalized, would the LGBTQ rights movement ever have formed? In a world where private spaces don't exist, would people have felt safe enough to leave the closet and gather, build community, and form a movement? Freedom from surveillance is necessary for deviation from social norms as well as to dissent from authority, without which societal progress halts.

Persistent monitoring and policing of our behavior breeds conformity, benefits tyrants, and enriches elites. Drawing a line in the sand around tech-enhanced surveillance is the fundamental fight of this generation. Lining up to get our faces scanned to participate in society doesn't just threaten our privacy, it threatens our humanity, and our ability to be ourselves.

[Editor's Note: Read the opposite perspective here.]

Scientists Are Building an “AccuWeather” for Germs to Predict Your Risk of Getting the Flu

A future app may help you avoid getting the flu by informing you of your local risk on a given day.

Applied mathematician Sara del Valle works at the U.S.'s foremost nuclear weapons lab: Los Alamos. Once colloquially called Atomic City, it's a hidden place 45 minutes into the mountains northwest of Santa Fe. Here, engineers developed the first atomic bomb.

Today, Los Alamos is still a small science town, though no longer a secret, nor in the business of building new bombs. Instead, it's tasked with, among other things, keeping the stockpile of nuclear weapons safe and stable: not exploding when they're not supposed to (yes, please) and exploding if someone presses that red button (please, no).

Del Valle, though, doesn't work on any of that. Los Alamos is also interested in other kinds of booms—like the explosion of a contagious disease that could take down a city. Predicting (and, ideally, preventing) such epidemics is del Valle's passion. She hopes to develop an app that's like AccuWeather for germs: It would tell you your chance of getting the flu, or dengue or Zika, in your city on a given day. And like AccuWeather, it could help people alter their behavior to live better lives, whether that means staying home on a snowy morning or washing their hands on a sickness-heavy commute.

Sara del Valle of Los Alamos is working to predict and prevent epidemics using data and machine learning.

Since the beginning of del Valle's career, she's been driven by one thing: using data and predictions to help people behave practically around pathogens. As a kid, she'd always been good at math, but when she found out she could use it to capture the tentacular spread of disease, and not just manipulate abstractions, she was hooked.

When she made her way to Los Alamos, she started looking at what people were doing during outbreaks. Using social media like Twitter, Google search data, and Wikipedia, the team started to sift for trends. Were people talking about hygiene, like hand-washing? Or about being sick? Were they Googling information about mosquitoes? Searching Wikipedia for symptoms? And how did those things correlate with the spread of disease?

It was a new, faster way to think about how pathogens propagate in the real world. Usually, there's a 10- to 14-day lag in the U.S. between when doctors tap numbers into spreadsheets and when that information becomes public. By then, the world has moved on, and so has the disease—to other villages, other victims.

"We found there was a correlation between actual flu incidents in a community and the number of searches online and the number of tweets online," says del Valle. That was when she first let herself dream about a real-time forecast, not a 10-days-later backcast. Del Valle's group—computer scientists, mathematicians, statisticians, economists, public health professionals, epidemiologists, satellite analysis experts—has continued to work on the problem ever since their first Twitter parsing, in 2011.
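For readers curious about what such a correlation check might look like in practice, here is a minimal Python sketch. It is not the Los Alamos team's method: the function names, the one-week lag, and the weekly numbers are all hypothetical and chosen only for illustration. The sketch computes a Pearson correlation between an online signal (say, weekly symptom searches) and reported case counts, and optionally shifts the case series to ask whether the signal runs ahead of official reports.

```python
from math import sqrt
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("series must have equal length of at least 2")
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / (sx * sy)


def lagged_correlation(signal, cases, lag):
    """Correlate the signal in week t with reported cases in week t + lag."""
    if lag < 0 or lag >= len(signal) - 1:
        raise ValueError("lag must be non-negative and leave at least 2 points")
    if lag == 0:
        return pearson(signal, cases)
    return pearson(signal[:-lag], cases[lag:])


# Made-up weekly counts (illustrative only, not real surveillance data).
# The case series is built to trail the search series by one week.
weekly_searches = [1, 2, 4, 8, 5, 3, 2, 1]
weekly_cases = [5, 10, 20, 40, 80, 50, 30, 20]

same_week = lagged_correlation(weekly_searches, weekly_cases, lag=0)
one_week_ahead = lagged_correlation(weekly_searches, weekly_cases, lag=1)
print(f"same week: {same_week:.2f}, searches one week ahead: {one_week_ahead:.2f}")
```

In this toy data the one-week-ahead correlation is stronger than the same-week one, which is the kind of pattern that would make an online signal useful as an early indicator. Real signals are far noisier, and a correlation alone says nothing about why the two series move together.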

They've had their share of outbreaks to track. Looking back at the 2009 swine flu pandemic, they saw people buying face masks and paying attention to the cleanliness of their hands. "People were talking about whether or not they needed to cancel their vacation," she says, and also whether pork products—which have nothing to do with swine flu—were safe to buy.

They watched internet conversations during the measles outbreak in California. "There's a lot of online discussion about anti-vax sentiment, and people trying to convince people to vaccinate children and vice versa," she says.

Today, they work on predicting the spread of Zika, Chikungunya, and dengue fever, as well as the plain old flu. And according to the CDC, that latter effort is going well.

Since 2015, the CDC has run the Epidemic Prediction Initiative, a competition in which teams like del Valle's submit weekly predictions of how severe the flu, and occasionally other ailments, will be in particular locations. Michael Johannson is co-founder and leader of the program, which began with the Dengue Forecasting Project. Its goal, he says, was to predict when dengue cases would blow up in an area that previously had just a low-level baseline of sick people. "You'll get this massive epidemic where all of a sudden, instead of 3,000 to 4,000 cases, you have 20,000 cases," he says. "They kind of come out of nowhere."

But the "kind of" is key: The outbreaks surely come out of somewhere and, if scientists applied research and data the right way, they could forecast the upswing and perhaps dodge a bomb before it hit big-time. Questions about how big, when, and where are also key to the flu.

A big part of these projects is the CDC giving the right researchers access to the right information, and the structure to both forecast useful public-health outcomes and to compare how well the models are doing. The extra information has been great for the Los Alamos effort. "We don't have to call departments and beg for data," says del Valle.

When data isn't available, "proxies"—things like symptom searches, tweets about empty offices, satellite images showing a green, wet, mosquito-friendly landscape—are helpful: You don't have to rely on anyone's health department.

At the latest meeting with all the prediction groups, del Valle's flu models took first and second place. But del Valle wants more than weekly numbers on a government website; she wants that weather-app-inspired fortune-teller, incorporating the many diseases you could get today, standing right where you are. "That's our dream," she says.

This plot shows the correlations between an online data stream from Wikipedia and various infectious diseases in different countries. The results of del Valle's predictive models are shown in brown, while the actual number of cases or illness rates are shown in blue.

(Courtesy del Valle)

The goal isn't to turn you into a germophobic agoraphobe. It's to make you more aware when you do go out. "If you know it's going to rain today, you're more likely to bring an umbrella," del Valle says. "When you go on vacation, you always look at the weather and make sure you bring the appropriate clothing. If you do the same thing for diseases, you think, 'There's Zika spreading in Sao Paulo, so maybe I should bring even more mosquito repellent and bring more long sleeves and pants.'"

They're not there yet (don't hold your breath, but do stop touching your mouth). She estimates it's at least a decade away, but advances in machine learning could accelerate that hypothetical timeline. "We're doing baby steps," says del Valle, starting with the flu in the U.S., dengue in Brazil, and other efforts in Colombia, Ecuador, and Canada. "Going from there to forecasting all diseases around the globe is a long way," she says.

But even AccuWeather started small: One man began predicting weather for a utility company, then helping ski resorts optimize their snowmaking. His influence snowballed, and now private forecasting apps, including AccuWeather's, populate phones across the planet. The company's progression hasn't been without controversy—privacy incursions, inaccuracy of long-term forecasts, fights with the government—but it has continued, for better and for worse.

Disease apps, perhaps spun out of a small, unlikely team at a nuclear-weapons lab, could grow and breed in a similar way. And both the controversies and public-health benefits that may someday spin out of them lie in the future, impossible to predict with certainty.