How Excessive Regulation Helped Ignite COVID-19's Rampant Spread

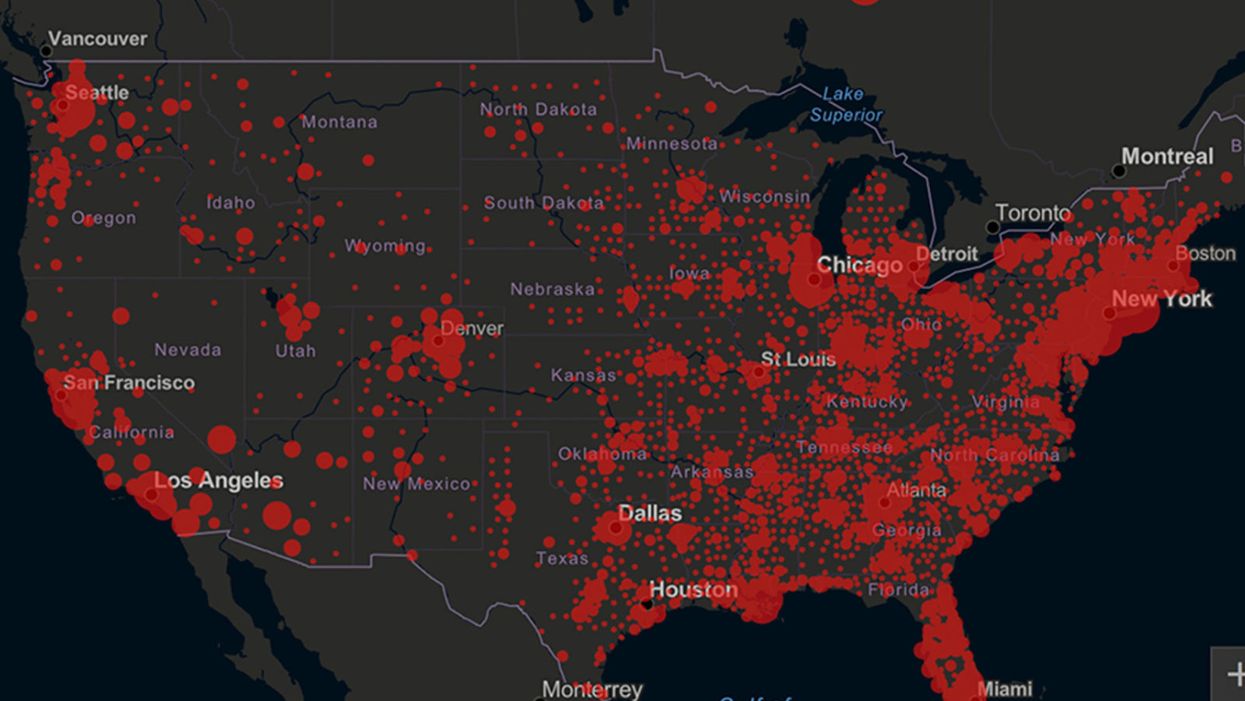

Screenshot of an interactive map of coronavirus cases across the United States, current as of 1:45 p.m. Pacific time on Tuesday, March 31st. Full map accessible at https://coronavirus.jhu.edu/map.html

When historians of the future look back at the 2020 pandemic, the heroic work of Helen Y. Chu, a flu researcher at the University of Washington, will be worthy of recognition.

In late January, Chu was testing nasal swabs for the Seattle Flu Study to monitor influenza spread when she learned of the first case of COVID-19 in Washington state. She deemed it a pressing public health matter to document if and how the illness was spreading locally, so that early containment efforts could succeed. So she sought regulatory approval to adapt the Flu Study to test for the coronavirus, but the federal government denied the request because the original project was funded to study only influenza.

Aware of the urgency, Chu's team bravely defied the order and conducted the testing anyway. Soon they identified a local case in a teenager without any travel history, followed by others. Still, the government tried to shutter their efforts until the outbreak grew dangerous enough to command attention.

Needless testing delays, prompted by excessive regulatory interference, eliminated any chance of curbing the pandemic at its initial stages. Even after Chu went out on a limb to sound alarms, a heavy-handed bureaucracy crushed the nation's ability to roll out early and widespread testing. The Centers for Disease Control and Prevention infamously botched its own test, while also impeding state and private labs from coming on board, fueling a massive shortage.

The long holdup created "a backlog of testing that needed to be done," says Amesh Adalja, an infectious disease specialist who is a senior scholar at the Johns Hopkins University Center for Health Security.

In a public health crisis, "the ideal situation" would allow the government's test to be "supplanted by private laboratories" without such "a lag in that transition," Adalja says. Only after the eventual release of CDC's test could private industry "begin in earnest" to develop its own versions under the Food and Drug Administration's emergency use authorization.

In a statement, CDC acknowledged that "this process has not gone as smoothly as we would have liked, but there is currently no backlog for testing at CDC."

Now, universities and corporations are in a race against time, playing catch-up as the virus continues its relentless spread, also afflicting many health care workers on the front lines.

Hospitals are attempting to add the novel coronavirus to the testing panels of their existing diagnostic machines, which would reduce the results processing time from 48 hours to as little as four hours. Meanwhile, at least four companies announced plans to deliver at-home collection tests to help meet the demand, before a startling injunction by the FDA halted their plans.

Everlywell, an Austin, Texas-based digital health company, had been set to launch online sales of at-home collection kits directly to consumers last week. Scaling up in a matter of days to an initial supply of 30,000 tests, Everlywell collaborated with multiple laboratories where consumers could ship their nasal swab samples overnight, projecting capacity to screen a quarter-million individuals on a weekly basis, says Frank Ong, chief medical and scientific officer.

Secure digital results would have been available online within 48 hours of a sample's arrival at the lab, along with a telehealth consultation with an independent, board-certified doctor if someone tested positive, for an all-inclusive cost of $135. The test has a false-negative rate of less than 3 percent, Ong says, and in the event of an inadequate self-swab, the lab would not report a conclusive finding. "Home-testing accessibility," he says, "is key to preventing further spread of the COVID-19 pandemic."

But on March 20, the FDA announced restrictions on home collection tests due to concerns about accuracy. The agency did note "the public health value in expanding the availability of COVID-19 testing through safe and accurate tests that may include home collection," while adding that "we are actively working with test developers in this space."

After the restrictions were announced, Everlywell decided to allocate its initial supply of COVID-19 collection kits to hospitals, clinics, nursing homes, and other qualifying health care companies that can commit to no-cost screening of frontline workers and high-risk symptomatic patients. For now, no consumers can order a home-collection test.

Currently, the U.S. has ramped up to testing an estimated 100,000 people a day, according to Stat News. But 150,000 or more Americans should be tested every day, says Ashish Jha, professor and director of the Harvard Global Health Institute. Due to the dearth of tests, many sick people who suspect they are infected still cannot get confirmation unless they need to be hospitalized.

To give a concrete sense of how far behind we are in testing, consider Palm Beach County, Fla. The state's only drive-thru test center just opened there, requiring an appointment. The center aims to test 750 people per day, but more than 330,000 people have already called to try to book a slot.
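As a rough back-of-the-envelope check on the Palm Beach County figures, the short sketch below estimates how long the center would need to work through the calls it has already received. It assumes, purely for illustration, that every call corresponds to one person needing one test and that the center hits its 750-per-day target every single day. The function name `days_to_clear_backlog` is made up for this example.

```python
import math

def days_to_clear_backlog(requests: int, tests_per_day: int) -> int:
    """Whole days needed to serve `requests` at a fixed daily capacity."""
    if tests_per_day <= 0:
        raise ValueError("tests_per_day must be positive")
    return math.ceil(requests / tests_per_day)

# Figures quoted in the article; "more than 330,000" is treated as a lower bound.
calls = 330_000
daily_capacity = 750

days = days_to_clear_backlog(calls, daily_capacity)
print(f"{days} days, or about {days / 365:.1f} years")
# prints "440 days, or about 1.2 years"
```

Under those simplifying assumptions, a single center running at full capacity would need well over a year just to serve the people who have already called.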

"This is such a rapidly moving infection that losing a few days is bad, and losing a couple of weeks is terrible," says Jha, a practicing general internist. "Losing two months is close to disastrous, and that's what we did."

At this point, it will take a long time to fully ramp up. "We are blindfolded," he adds, "and I'd like to take the blindfolds off so we can fight this battle with our eyes wide open."

Better late than never: Yesterday, FDA Commissioner Stephen Hahn said in a statement that the agency has worked with more than 230 test developers and has approved 20 tests since January. An especially notable one was authorized last Friday – 67 days since the country's first known case in Washington state. It's a rapid point-of-care test from medical-device firm Abbott that provides positive results in five minutes and negative results in 13 minutes. Abbott will send 50,000 tests a day to urgent care settings. The first tests are expected to ship tomorrow.

Indigenous wisdom plus honeypot ants could provide new antibiotics

Indigenous people in Australia dig pits next to a honeypot colony. Scientists think the honey can be used to make new antimicrobial drugs.

For generations, the Indigenous Tjupan people of Australia enjoyed the sweet treat of honey made by honeypot ants. As a favorite pastime, entire families would go searching for the underground colonies, first spotting a worker ant and then tracing it to its home. The ants, which belong to the species Camponotus inflatus, usually build their subterranean homes near mulga trees, Acacia aneura. After an ant had been traced to its tree, the women would carefully dig a pit next to the colony, cautious not to destroy the entire structure. Once the ant chambers were exposed, the women would harvest a small amount to avoid devastating the colony’s stocks, and the family would share the treat.

The Tjupan people also knew that the honey had antimicrobial properties. “You could use it for a sore throat,” says Danny Ulrich, a member of the Tjupan nation. “You could also use it topically, on cuts and things like that.”

These hunts have become rarer, as many of the Tjupan people have moved away, and until now the exact antimicrobial properties of the ant honey remained unknown. But recently, scientists Andrew Dong and Kenya Fernandes from the University of Sydney joined Ulrich, who runs the Honeypot Ants tours in Kalgoorlie, a city in Western Australia, on a honey-gathering expedition. Afterwards, they ran a series of experiments analyzing the honey’s antimicrobial activity, and confirmed that the Indigenous wisdom was true. The honey was effective against Staphylococcus aureus, a common pathogen responsible for sore throats, skin infections like boils and sores, and sepsis, which can result in death. The honey also worked against two kinds of fungi, Cryptococcus and Aspergillus, which can be pathogenic to humans, especially those with suppressed immune systems.

In the era of growing antibiotic resistance and the rising threat of pathogenic fungi, these findings may help scientists identify and make new antimicrobial compounds. “Natural products have been honed over thousands and millions of years by nature and evolution,” says Fernandes. “And some of them have complex and intricate properties that make them really important as potential new antibiotics.”

In an era of growing resistance to antibiotics and new threats of fungi infections, the latest findings about honeypot ants are helping scientists identify new antimicrobial drugs.

Danny Ulrich

Bee honey is also known for its antimicrobial properties, but bees produce it very differently than the ants do. Bees collect nectar from flowers, which they regurgitate at the hive and pack into the hexagonal honeycombs they build for storage. As they do so, they also add to the mix an enzyme called glucose oxidase, produced by their glands. The enzyme uses oxygen to oxidize glucose, generating hydrogen peroxide, a reactive molecule that destroys bacteria and acts as a natural preservative. After the bees pack the honey into the honeycombs, they fan it with their wings to evaporate the water. Once a honeycomb cell is full, the bees cap it with beeswax, and the honey stays well-preserved there, thanks to the enzymatic action, until the bees need it.

Less is known about the chemistry of ants’ honey-making. Like bees, they collect nectar. They also collect the sweet sap of the mulga tree and “milk” aphids, small sap-sucking insects that live on the tree. When ants tickle the aphids with their antennae, the aphids release a sweet substance, which the ants also carry back to their colonies. That’s where the difference in honey management becomes really pronounced. The ants don’t build any kind of structure to store their honey. Instead, they store it in themselves.

The workers feed their harvest to fellow ants called repletes, stuffing them to the point that their swollen bellies outgrow the ants themselves and look like amber-colored honeypots, hence the name. Because of their size, repletes don’t move, but hang down from the chamber’s ceiling, acting as living food stores. When food becomes scarce, they regurgitate their reserves to the rest of the colony. It’s not clear whether the repletes die afterwards or can be restuffed. “That's a good question,” Dong says. “After they've been stretched, they can't really return to exactly the same shape.”

These replete ants are the “treat” the Tjupan women dug for. Once they saw the round-belly ants inside the chambers, they would reach in carefully and get a few scoops of them. “You see a lot of honeypot ants just hanging on the roof of the little openings,” says Ulrich’s mother, Edie Ulrich. The women would share the ants with family members who would eat them one by one. “They're very delicate,” shares Edie Ulrich—you have to take them out carefully, so they don’t accidentally pop and become a wasted resource. “Because you’d lose all this precious honey.”

Dong stumbled upon the honeypot ants phenomenon because he was interested in Indigenous foods and went on Ulrich’s tour. He quickly became fascinated with the insects and their role in the Indigenous culture. “The honeypot ants are culturally revered by the Indigenous people,” he says. Eventually he decided to test out the honey’s medicinal qualities.

To do this, the two scientists first diluted the ant honey with water. “We used something called doubling dilutions, which means that we made 32 percent dilutions, and then we halve that to 16 percent and then we halve that to eight percent,” explains Fernandes. The goal was to obtain as many results as possible with the meager honey they had. “We had very, very little of the honeypot ant honey so we wanted to maximize the spectrum of results we can get without wasting too much of the sample.”

After that, the researchers grew different microbes in a nutrient-rich broth. They added the broth to the different honey dilutions and incubated the mixes for a day or two at a temperature favorable to the germs’ growth. If the resulting solution turned turbid, it was a sign that the bugs had proliferated. If it stayed clear, it meant that the honey had kept them from growing. The researchers were surprised to see that even the lowest concentration of honey, eight percent, was able to arrest the growth of S. aureus. “It was really quite amazing,” Fernandes says. “Eight milliliters of honey in 92 milliliters of water is a really tiny amount of honey compared to the amount of water.”
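The dilution-and-turbidity procedure described here can be sketched in a few lines of code. What follows is only an illustration of the arithmetic, not the researchers' actual protocol: the function names are invented, and the turbidity readings are hypothetical values chosen to mirror the reported S. aureus result, in which every dilution down to eight percent stayed clear.

```python
from typing import Dict, List, Optional

def doubling_dilutions(start_percent: float, steps: int) -> List[float]:
    """Concentrations obtained by repeatedly halving `start_percent`."""
    return [start_percent / (2 ** i) for i in range(steps)]

def lowest_inhibitory_concentration(turbid_by_conc: Dict[float, bool]) -> Optional[float]:
    """Lowest concentration whose broth stayed clear, or None if all turned turbid."""
    clear = [conc for conc, turbid in turbid_by_conc.items() if not turbid]
    return min(clear) if clear else None

# 32 percent, halved to 16 percent, halved again to 8 percent.
series = doubling_dilutions(32.0, 3)

# Hypothetical readings: False means the broth stayed clear (no visible growth).
readings = {conc: False for conc in series}

print(series)                                     # [32.0, 16.0, 8.0]
print(lowest_inhibitory_concentration(readings))  # 8.0
```

Because each step halves the previous concentration, a small amount of honey stretches across several test conditions, which is the point Fernandes makes about conserving the sample.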

Similar to bee honey, the ants’ honey exhibited some peroxide antimicrobial activity, researchers found, but given how little peroxide was in the solution, they think the honey also kills germs by a different mechanism. “When we measured, we found that [the solution] did have some hydrogen peroxide, but it didn't have as much of it as we would expect based on how active it was,” Fernandes says. “Whether this hydrogen peroxide also comes from glucose oxidase or whether it's produced by another source, we don't really know,” she adds. The research team does have some hypotheses about the identity of this other germ-killing agent. “We think it is most likely some kind of antimicrobial peptide that is actually coming from the ant itself.”

The honey also showed very strong activity against the two types of fungi, Cryptococcus and Aspergillus. Both fungi are found on trees and decaying leaves, as well as in the soils where ants live, so the insects have likely evolved some natural defense compounds, which end up inside the honey.

It wouldn’t be the first time that a modern medicine originated in the natural world or in Indigenous people’s knowledge. The bark of the cinchona tree, native to South America, contains quinine, a substance that treats malaria. The Indigenous people of the Andes used the bark to quell fever and chills for generations, and when Europeans began to fall ill with malaria in the Amazon rainforest, they learned to use that medicine from the Andean people.

The wonder drug aspirin similarly traces its origin to the bark of a tree, in this case a willow.

Even some anticancer compounds originated in nature. A chemotherapy drug called paclitaxel was originally extracted from the Pacific yew tree, Taxus brevifolia. Samples of Pacific yew bark were first collected in 1962 by researchers from the United States Department of Agriculture who were looking for natural compounds that might have anti-tumor activity. In December 1992, the FDA approved paclitaxel (brand name Taxol) for the treatment of ovarian cancer, and two years later for breast cancer.

In an era when the world is struggling to find new medicines fast enough to avert a fungal or bacterial pandemic, these discoveries can pave the way to new therapeutics. “I think it's really important to listen to indigenous cultures and to take their knowledge because they have been using these sources for a really, really long time,” Fernandes says. Now that we know it works, science can elucidate the molecular mechanisms behind it, she adds. “And maybe it can even provide a lead for us to develop some kind of new treatments in the future.”

Lina Zeldovich has written about science, medicine and technology for Popular Science, Smithsonian, National Geographic, Scientific American, Reader’s Digest, the New York Times and other major national and international publications. A Columbia J-School alumna, she has won several awards for her stories, including the ASJA Crisis Coverage Award for Covid reporting, and has been a contributing editor at Nautilus Magazine. In 2021, Zeldovich released her first book, The Other Dark Matter, published by the University of Chicago Press, about the science and business of turning waste into wealth and health. You can find her on http://linazeldovich.com/ and @linazeldovich.

Blood Test Can Detect Lymphoma Cells Before a Tumor Grows Back

David Kurtz making DNA sequencing libraries in his lab.

When David M. Kurtz was doing his clinical fellowship at Stanford University Medical Center in 2009, specializing in lymphoma treatments, he found himself grappling with a question no one could answer. A typical regimen for these blood cancers prescribed six cycles of chemotherapy, but no one knew why. "The number seemed to be drawn out of a hat," Kurtz says. Some patients felt much better after just two doses, but had to endure the toxic effects of the entire course. For some elderly patients, the side effects of chemo are so harsh that they alone can kill. Others appeared to be cancer-free on CT scans after the requisite six cycles but then succumbed to the disease months later.

"Anecdotally, one patient decided to stop therapy after one dose because he felt it was so toxic that he opted for hospice instead," says Kurtz, now an oncologist at the center. "Five years down the road, he was alive and well. For him, just one dose was enough." Others would return for their one-year checkup and find that their tumors had grown back. Kurtz felt that while CT scans and MRIs were powerful tools, they weren't perfect ones. They couldn't tell him if there were any cancer cells left, stealthily waiting to germinate again. The scans only showed the tumor once it was back.

Blood cancers claim about 68,000 people a year, with a new diagnosis made about every three minutes, according to the Leukemia Research Foundation. For patients with B-cell lymphoma, which Kurtz focuses on, the survival chances are better than for some others. About 60 percent are cured, but the remaining 40 percent will relapse, possibly because they have a negative CT scan but still harbor malignant cells. "You can't see this on imaging," says Michael Green, who also treats blood cancers at the University of Texas MD Anderson Cancer Center.

Kurtz wanted a better diagnostic tool, so he started working on a blood test that could capture circulating tumor DNA, or ctDNA. For that, he needed to identify the specific mutations typical of B-cell lymphomas. Working together with PhD student Jake Chabon, Kurtz finally zeroed in on the tumor's genetic "appearance" in 2017: a pair of specific mutations sitting in close proximity to each other, a rare and telling sign. The human genome contains about 3 billion base pairs of nucleotides, the molecules that compose genes, and in the case of B-cell lymphoma cells these two mutations were only a few base pairs apart. "That was the moment when the light bulb went on," Kurtz says.

The duo formed a company named Foresight Diagnostics, focusing on taking the blood test to the clinic. But knowing the tumor's mutational signature was only half the process. The other half was fishing the tumor's DNA out of a patient's bloodstream, which contains millions of other DNA molecules, explains Chabon, now Foresight's CEO. It would be like looking for an escaped criminal in a large crowd. Kurtz and Chabon solved the problem by taking the tumor's "mug shot" first. Doctors would take a biopsy before treatment and sequence the tumor, as if taking the criminal's photo. After treatment, they would match the "mug shot" against all DNA molecules derived from the patient's blood sample to see if any molecular criminals had managed to escape the chemo.
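To make the "mug shot" idea a little more concrete, here is a toy sketch of the matching step. It is not Foresight's actual pipeline, which the article does not describe in detail. It assumes, for illustration only, that each DNA fragment from the blood sample is represented as a mapping from genome position to the base observed there, and that the tumor's signature is a list of mutation pairs that must both appear on the same fragment. All positions, bases, and function names are invented.

```python
from typing import Dict, FrozenSet, List, Tuple

# A variant is (position, mutated base). A signature entry is a pair of
# variants that sit close together in the tumor's genome.
Variant = Tuple[int, str]
VariantPair = FrozenSet[Variant]

def read_matches(read: Dict[int, str], pair: VariantPair) -> bool:
    """True only if the fragment carries BOTH mutations of the pair."""
    return all(read.get(pos) == base for pos, base in pair)

def count_tumor_reads(reads: List[Dict[int, str]], mug_shot: List[VariantPair]) -> int:
    """Count fragments that match at least one paired signature."""
    return sum(any(read_matches(r, p) for p in mug_shot) for r in reads)

# Hypothetical "mug shot" taken from the pre-treatment biopsy.
mug_shot = [frozenset({(1000, "T"), (1004, "G")})]

# Hypothetical fragments recovered from a post-treatment blood sample.
reads = [
    {1000: "T", 1004: "G"},  # both mutations present: counted
    {1000: "T", 1004: "A"},  # only one mutation: ignored
    {1000: "C", 1004: "A"},  # matches neither mutation: ignored
]

print(count_tumor_reads(reads, mug_shot))  # 1
```

Requiring both mutations on a single fragment reflects the intuition in the article that two mutations sitting side by side are a rare and telling sign, so a fragment showing only one of them is not treated as evidence of the tumor.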

Foresight isn't the only company working on blood-based tumor detection tests, which are dubbed liquid biopsies—other companies such as Natera or ArcherDx developed their own. But in a recent study, the Foresight team showed that their method is significantly more sensitive in "fishing out" the cancer molecules than existing tests. Chabon says that this test can detect circulating tumor DNA in concentrations that are nearly 100 times lower than other methods. Put another way, it's sensitive enough to spot one cancerous perpetrator amongst one million other DNA molecules.

"It increases the sensitivity of detection and really catches most patients who are going to progress," says Green, the University of Texas oncologist who wasn't involved in the study, but is familiar with the method. It would also allow monitoring patients during treatment and making better-informed decisions about which therapy regimens would be most effective. "It's a minimally invasive test," Green says, and "it gives you a very high confidence about what's going on."

Having shown that the test works well, Kurtz and Chabon are planning a new trial in which oncologists would rely on their method to decide when to stop or continue chemo. They also aim to extend their test to detect other malignancies such as lung, breast or colorectal cancers. The latest genome sequencing technologies have sequenced and catalogued over 2,500 different tumor specimens and the Foresight team is analyzing this data, says Chabon, which gives the team the opportunity to create more molecular "mug shots."

The team hopes that their blood cancer test will become available to patients within about five years, making doctors' jobs easier, and not only at the biological level. "When I tell patients, 'good news, your cancer is in remission,' they ask me, 'does it mean I'm cured?'" Kurtz says. "Right now I can't answer this question because I don't know, but I would like to." His company's test, he hopes, will enable him to reply with certainty. He'd very much like to have the power of that foresight.

This article is republished from our archives to coincide with Blood Cancer Awareness Month, which highlights progress in cancer diagnostics and treatment.
