Is There a Blind Spot in the Oversight of Human Subject Research?

Human experimentation has come a long way since congressional hearings in the 1970s exposed patterns of abuse. Where yesterday's patients were protected only by the good conscience of physician-researchers, today's patients are spirited past hazards through an elaborate system of oversight and informed consent. Yet in many ways, the project of grounding human research on ethical foundations remains incomplete.

To be sure, much of the medical research we do meets exceedingly high standards. Progress in cancer immunotherapy, or infectious disease, reflects the best of what can be accomplished when medical scientists and patients collaborate productively. And abuses of the earlier part of the 20th century--like those perpetrated by the U.S. Public Health Service in Guatemala--are for the history books.

Yet as human research has become a mainstay of career and commercial advancement among academics, research centers, and industry, new threats to research integrity have emerged. Many flourish in the blind spot of current oversight systems.

Take, for example, the tendency to publish only "positive" findings ("publication bias"). When patients participate in studies, they are told that their contributions will promote medical discovery. That can't happen if the results of experiments never get beyond the hard drives of researchers. While researchers are often eager to publish trials showing that a drug works, a study my own team conducted found that fewer than 4 in 10 trials of drugs that never receive FDA approval get published. This tendency, which occurs in academia as well as industry, deprives other scientists of opportunities to build on these failures and make good on the sacrifice of patients. It also means the trials may be inadvertently repeated by other researchers, subjecting more patients to risks.

On the other hand, many clinical trials test treatments that have already been proven effective beyond a shadow of doubt. Consider the drug aprotinin, used for the management of bleeding during surgery. An analysis in 2005 showed that, not long after the drug was proven effective, researchers launched dozens of additional placebo-controlled trials. These redundant trials were far in excess of what regulators required for drug approval, and they deprived patients in placebo arms of a proven effective therapy. Whether because of an oversight or deliberately (does it matter?), researchers conducting these trials often failed to describe previous evidence of efficacy in their publications. What's the point of running a trial if no one reads the results?

At the other extreme are trials that are little more than shots in the dark. In one case, patients with spinal cord injury were enrolled in a safety trial testing a cell-based regenerative medicine treatment. After the trial stopped (results were negative), laboratory scientists revealed that the cells had been shown ineffective in animal experiments. Though this information had been available to the company and FDA, researchers pursued the trial anyway.

It is surprisingly easy for companies to hijack research to market their treatments. One way this happens is through "seeding trials": studies that are designed not to address a research question, but instead to habituate doctors to using a new drug and to generate publications that serve as advertisements. Such trials flood the medical literature with findings that are unreliable because the studies are small and not well designed. They also use the prestige of science to pursue goals that are purely commercial. Yet because they harm science rather than patients (many such studies are minimally risky because all patients receive proven effective medications), ethics committees rarely block them.

Closely related is the phenomenon of small uninformative trials. After drugs get approved by the FDA, companies often launch dozens of small trials in new diseases other than the one the drug was approved to treat. Because these studies are small, they often overestimate efficacy. Indeed, the way trials are often set up, if a company tests an ineffective drug in 40 different studies, one will typically produce a false positive by chance alone. Because companies are free to run as many trials as they like and to circulate "positive" results, they have incentives to run lots of small trials that don't provide a definitive test of their drug's efficacy.
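The arithmetic behind the "one in 40" figure can be sketched in a few lines. This is a minimal illustration, assuming each trial is an independent test with a one-sided false positive rate of 2.5 percent; that threshold is a common convention but an assumption here, since the article does not state one, and the function names are purely illustrative.

```python
def expected_false_positives(n_trials: int, alpha: float) -> float:
    """Average number of false positives across n independent trials
    of a drug that truly has no effect."""
    return n_trials * alpha


def prob_at_least_one_false_positive(n_trials: int, alpha: float) -> float:
    """Chance that at least one of n independent trials of an
    ineffective drug comes out 'positive' by luck alone."""
    return 1.0 - (1.0 - alpha) ** n_trials


if __name__ == "__main__":
    n_trials = 40    # number of small trials, as in the article's example
    alpha = 0.025    # ASSUMED one-sided false positive rate per trial

    expected = expected_false_positives(n_trials, alpha)
    at_least_one = prob_at_least_one_false_positive(n_trials, alpha)

    print(f"Expected false positives: {expected:.2f}")         # 1.00
    print(f"Chance of at least one:   {at_least_one:.2f}")      # 0.64
```

Under these assumptions, 40 trials of a useless drug yield one spurious "success" on average, and there is roughly a 64 percent chance of getting at least one.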

Don't think public agencies are much better. Funders like the National Institutes of Health secure their appropriations by gratifying Congress. This means that NIH gets more by spreading its funding among small studies in different Congressional districts than by concentrating budgets among a few research institutions pursuing large trials. The result is that some NIH-funded clinical trials are not especially well equipped to inform medical practice.

It's tempting to think that the FDA, medical journals, ethics committees, and funding agencies can fix these problems. However, these practices continue in part because the FDA, ethics committees, and researchers often do not see what is at stake for patients when they acquiesce to low scientific standards. This behavior dishonors the patients who volunteer for research, and also threatens the welfare of downstream patients, whose care will be determined by the output of research.

To fix this, deficiencies in study design and reporting need to be rendered visible. Universities, funding bodies, and companies should be scored by a neutral third party based on the impact of their trials, or the extent to which their trials are published in full -- like Moody's for credit ratings, or the Kelley Blue Book for cars. This system of accountability would allow everyone to see which institutions make the most of the contributions of research subjects. It could also harness the competitive instincts of institutions to improve research quality.

Another step would be for researchers to level with patients when they enroll in studies. Patients who agree to research are usually offered bromides about how their participation may help future patients. However, not all studies are created equal with respect to merit. Patients have a right to know when they are entering studies that are unlikely to have a meaningful impact on medicine.

Ethics committees and drug regulators have done a good job protecting research volunteers from unchecked scientific ambition. However, today's research is plagued by studies that have poor scientific credentials. Such studies free-ride on the well-earned reputation of serious medical science. They also potentially distort the evidence available to physicians and healthcare systems. Regulators, academic medical centers, and others should establish policies that better protect human research volunteers by protecting the quality of the research itself.

DNA- and RNA-based electronic implants may revolutionize healthcare

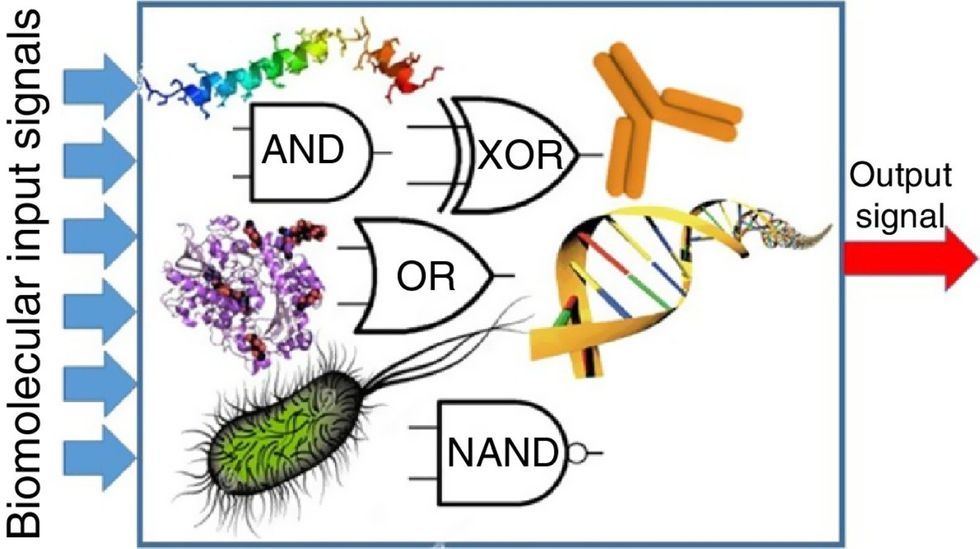

The test tubes contain tiny DNA/enzyme-based circuits, which comprise TRUMPET, a new type of electronic device, smaller than a cell.

Implantable electronic devices can significantly improve patients’ quality of life. A pacemaker can encourage the heart to beat more regularly. A neural implant, usually placed at the back of the skull, can help brain function and encourage higher neural activity. Current research on neural implants finds them helpful to patients with Parkinson’s disease, vision loss, hearing loss, and other nerve damage problems. Some of these implants, such as Elon Musk’s Neuralink, have already received FDA approval for human clinical trials.

Yet pacemakers, neural implants, and other such electronic devices are not without problems. They require a constant supply of electricity, which is limited by batteries that need replacing. They also cause scarring. “The problem with doing this with electronics is that scar tissue forms,” explains Kate Adamala, an assistant professor of cell biology at the University of Minnesota Twin Cities. “Anytime you have something hard interacting with something soft [like muscle, skin, or tissue], the soft thing will scar. That's why there are no long-term neural implants right now.” To overcome these challenges, scientists are turning to biocomputing processes that use organic materials like DNA and RNA. Other promised benefits include “diagnostics and possibly therapeutic action, operating as nanorobots in living organisms,” writes Evgeny Katz, a professor of bioelectronics at Clarkson University, in his book DNA- And RNA-Based Computing Systems.

Adamala’s research focuses on developing such biocomputing systems using DNA, RNA, proteins, and lipids. Building the systems from these molecules makes them biocompatible with the human body, so that tissue can heal naturally around them. In a recent Nature Communications study, Adamala and her team created a new biocomputing platform called TRUMPET (Transcriptional RNA Universal Multi-Purpose GatE PlaTform), which acts like a DNA-powered computer chip. “These biological systems can heal if you design them correctly,” adds Adamala. “So you can imagine a computer that will eventually heal itself.”

The basics of biocomputing

Biocomputing and regular computing have many similarities. Like regular computing, biocomputing works by running information through a series of gates, usually logic gates. A logic gate works like a fork in the road for an electronic circuit: depending on its inputs, the signal goes one way or the other, giving one of two possible outputs. An example is the AND gate, which has two inputs (A and B) and a single output. If both A and B are 1, the output is 1. If only one of them is 1 (A is 1 and B is 0, or vice versa), the output is 0. If both A and B are 0, the output is 0. While a computer takes these inputs as binary "bits," each a 0 or a 1, biocomputing uses DNA strands as inputs, either single- or double-stranded, and often uses fluorescent RNA as an output.
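The AND gate described above can be written out as a short program. This is only an illustrative sketch of the conventional electronic gate, not of any biological implementation; the function names are made up for the example.

```python
from itertools import product


def and_gate(a: int, b: int) -> int:
    """Return 1 only when both inputs are 1; otherwise return 0."""
    return 1 if (a == 1 and b == 1) else 0


def truth_table(gate):
    """List every (A, B, output) combination for a two-input gate."""
    return [(a, b, gate(a, b)) for a, b in product((0, 1), repeat=2)]


if __name__ == "__main__":
    for a, b, out in truth_table(and_gate):
        print(f"A={a} B={b} -> {out}")
```

Only the last row of the table, where A and B are both 1, produces a 1.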

If the DNA is double-stranded, the system “digests,” or destroys, the DNA, which results in no fluorescence, or a “0” output. Conversely, if the DNA is single-stranded, it won’t be digested and instead will be copied by several enzymes in the biocomputing system, resulting in fluorescent RNA, or a “1” output. The output of this type of binary system need not be limited to fluorescence. For example, a “1” output might be the production of the hormone insulin, while a “0” might mean that no insulin is produced. “This kind of synergy between biology and computation is the essence of biocomputing,” says Stephanie Forrest, a professor and the director of the Biodesign Center for Biocomputing, Security and Society at Arizona State University.
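The mapping from DNA input to binary output can be modeled in a few lines. This is a toy model of the logic only, under the simplifying assumption that a strand is either fully single- or fully double-stranded; it says nothing about the underlying chemistry, and every name in it is hypothetical.

```python
from enum import Enum


class Strand(Enum):
    """The two kinds of DNA input described in the article."""
    SINGLE = "single-stranded"
    DOUBLE = "double-stranded"


def strand_gate(dna: Strand) -> int:
    """Toy model of the gate: double-stranded DNA is digested (output 0),
    single-stranded DNA is copied into fluorescent RNA (output 1)."""
    return 1 if dna is Strand.SINGLE else 0


def describe_output(bit: int,
                    on_action: str = "fluorescent RNA produced",
                    off_action: str = "no fluorescence") -> str:
    """Translate the bit into whatever the gate is wired to do.
    The default labels follow the fluorescence example."""
    return on_action if bit == 1 else off_action


if __name__ == "__main__":
    for dna in Strand:
        bit = strand_gate(dna)
        print(f"{dna.value}: output {bit} ({describe_output(bit)})")
```

Swapping the labels, for instance `describe_output(bit, "insulin produced", "no insulin produced")`, reflects the point that the same 0 or 1 could drive an output other than light.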

Biocomputing circuits are made of DNA, RNA, proteins and even bacteria.

Evgeny Katz

The TRUMPET’s promise

Different environmental factors come into play depending on whether the biocomputing system is placed directly inside a cell within the human body or run in a test tube. When an output is produced inside a cell, the cell's natural processes can amplify this output (for example, a specific protein or DNA strand), creating a solid signal. However, these cells can also be very leaky. “You want the cells to do the thing you ask them to do before they finish whatever their business is, which is to grow, replicate, metabolize,” Adamala explains. “However, often the gate may be triggered without the right inputs, creating a false positive signal. So that's why natural logic gates are often leaky.” While biocomputing outside a cell in a test tube can allow for tighter control over the logic gates, the outputs or signals cannot be amplified by a cell and are less potent.

TRUMPET, which is smaller than a cell, taps into both cellular and non-cellular biocomputing benefits. “At its core, it is a nonliving logic gate system,” Adamala states. “It's a DNA-based logic gate system. But because we use enzymes, and the readout is enzymatic [where an enzyme replicates the fluorescent RNA], we end up with signal amplification.” This readout means that the output from the TRUMPET system, a fluorescent RNA strand, can be replicated by nearby enzymes in the platform, making the light signal stronger. “So it combines the best of both worlds,” Adamala adds.

The TRUMPET biocomputing process is relatively straightforward. “If the DNA [input] shows up as single-stranded, it will not be digested [by the logic gate], and you get this nice fluorescent output as the RNA is made from the single-stranded DNA, and that's a 1,” Adamala explains. "And if the DNA input is double-stranded, it gets digested by the enzymes in the logic gate, and there is no RNA created from the DNA, so there is no fluorescence, and the output is 0." On the story's leading image above, if the tube is "lit" with a purple color, that is a binary 1 signal for computing. If it's "off" it is a 0.

While still in the research stage, TRUMPET and other biocomputing systems promise significant benefits to personalized healthcare and medicine. These organic-based systems could detect cancer cells or low insulin levels inside a patient’s body. The study’s lead author, graduate student Judee Sharon, is already beginning to research TRUMPET's potential for earlier cancer diagnosis. Because the inputs for TRUMPET are single- or double-stranded DNA, mutated or cancerous DNA could theoretically be detected by the platform through the biocomputing process, allowing cancer and other diseases to be caught earlier.

Adamala sees TRUMPET not only as a detection system but also as a potential cancer drug delivery system. “Ideally, you would like the drug only to turn on when it senses the presence of a cancer cell. And that's how we use the logic gates, which work in response to inputs like cancerous DNA. Then the output can be the production of a small molecule or the release of a small molecule that can then go and kill what needs killing, in this case, a cancer cell. So we would like to develop applications that use this technology to control the logic gate response of a drug’s delivery to a cell.”

Although platforms like TRUMPET are making progress, a lot more work must be done before they can be used commercially. “The process of translating mechanisms and architecture from biology to computing and vice versa is still an art rather than a science,” says Forrest. “It requires deep computer science and biology knowledge,” she adds. “Some people have compared interdisciplinary science to fusion restaurants—not all combinations are successful, but when they are, the results are remarkable.”

Crickets are low on fat, high on protein, and can be farmed sustainably. They are also crunchy.

In today’s podcast episode, Leaps.org Deputy Editor Lina Zeldovich speaks about the health and ecological benefits of farming crickets for human consumption with Bicky Nguyen, who joins Lina from Vietnam. Bicky and her business partner Nam Dang operate an insect farm named CricketOne. Motivated by the idea of sustainable and healthy protein production, they started their unconventional endeavor a few years ago, despite numerous naysayers who didn’t believe that humans would ever consider munching on bugs.

Yet, making creepy crawlers part of our diet offers many health and planetary advantages. Food production needs to match the rise in global population, estimated to reach 10 billion by 2050. One challenge is that some of our current practices are inefficient, polluting and wasteful. According to nonprofit EarthSave.org, it takes 2,500 gallons of water, 12 pounds of grain, 35 pounds of topsoil and the energy equivalent of one gallon of gasoline to produce one pound of feedlot beef, although exact statistics vary between sources.

Meanwhile, insects are easy to grow, high on protein and low on fat. When roasted with salt, they make crunchy snacks. When chopped up, they transform into delicious pâtés, says Bicky, who invents her own cricket recipes and serves them at industry and public events. Maybe that’s why some research predicts that the edible insect market may grow to almost $10 billion by 2030. Tune in for a delectable chat on this alternative and sustainable protein.

Further reading:

More info on Bicky Nguyen

https://yseali.fulbright.edu.vn/en/faculty/bicky-n...

The environmental footprint of beef production

https://www.earthsave.org/environment.htm

https://www.watercalculator.org/news/articles/beef-king-big-water-footprints/

https://www.frontiersin.org/articles/10.3389/fsufs.2019.00005/full

https://ourworldindata.org/carbon-footprint-food-methane

Insect farming as a source of sustainable protein

https://www.insectgourmet.com/insect-farming-growing-bugs-for-protein/

https://www.sciencedirect.com/topics/agricultural-and-biological-sciences/insect-farming

Cricket flour is taking the world by storm

https://www.cricketflours.com/

https://talk-commerce.com/blog/what-brands-use-cricket-flour-and-why/

Lina Zeldovich has written about science, medicine and technology for Popular Science, Smithsonian, National Geographic, Scientific American, Reader’s Digest, the New York Times and other major national and international publications. A Columbia J-School alumna, she has won several awards for her stories, including the ASJA Crisis Coverage Award for Covid reporting, and has been a contributing editor at Nautilus Magazine. In 2021, Zeldovich released her first book, The Other Dark Matter, published by the University of Chicago Press, about the science and business of turning waste into wealth and health. You can find her on http://linazeldovich.com/ and @linazeldovich.