Meet the Scientists on the Frontlines of Protecting Humanity from a Man-Made Pathogen

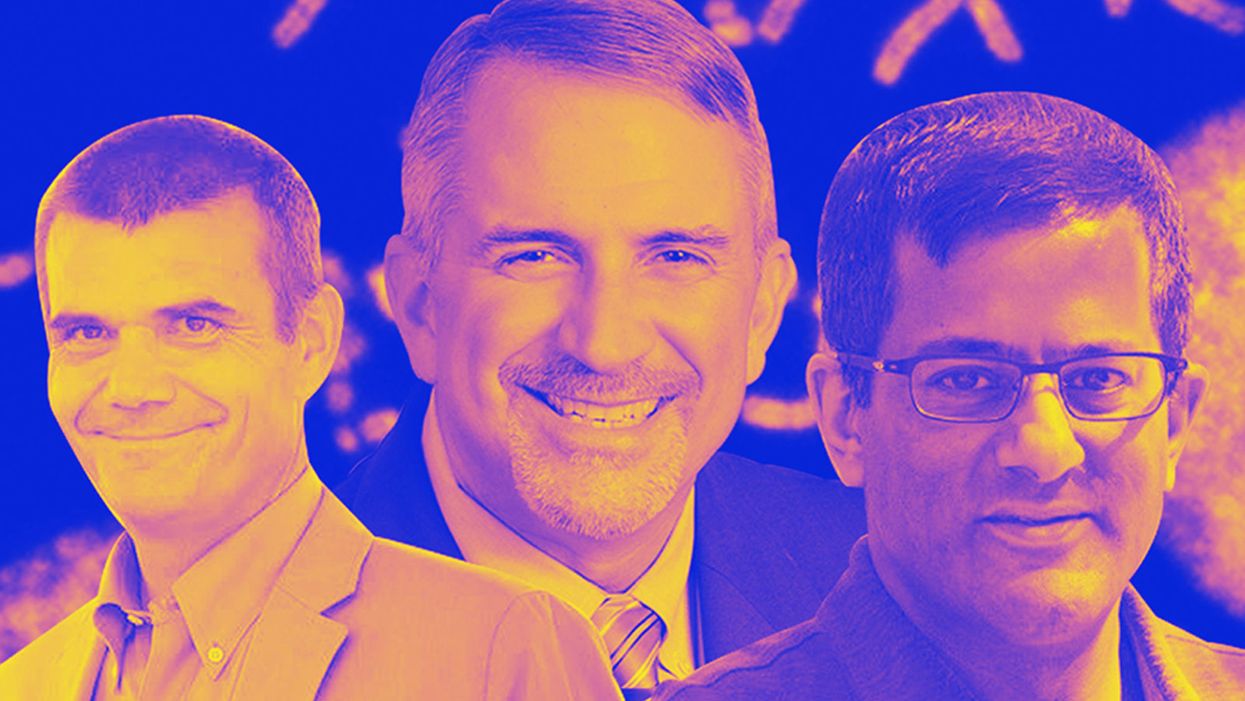

From left: Jean Peccoud, Randall Murch, and Neeraj Rao.

Jean Peccoud wasn't expecting an email from the FBI. He definitely wasn't expecting the agency to invite him to a meeting. "My reaction was, 'What did I do wrong to be on the FBI watch list?'" he recalls.

He didn't know what the feds could possibly want from him. "I was mostly scared at this point," he says. "I was deeply disturbed by the whole thing."

But he decided to go anyway, and when he traveled to San Francisco for the 2008 gathering, the reason for the e-vite became clear: The FBI was reaching out to researchers like him, scientists interested in synthetic biology, in anticipation of the potential nefarious uses of this technology. "The whole purpose of the meeting was, 'Let's start talking to each other before we actually need to talk to each other,'" says Peccoud, now a professor of chemical and biological engineering at Colorado State University. "'And let's make sure next time you get an email from the FBI, you don't freak out.'"

Synthetic biology—which Peccoud defines as "the application of engineering methods to biological systems"—holds great power, and with that (as always) comes great responsibility. When you can synthesize genetic material in a lab, you can create new ways of diagnosing and treating people, and even new food ingredients. But you can also "print" the genetic sequence of a virus or virulent bacterium.

And while it's not easy, it's also not as hard as it could be, in part because dangerous sequences have publicly available blueprints. You can use those blueprints for white-hat research (which is, indeed, why the open blueprints exist), or you can do the same for a black-hat attack. You could synthesize a dangerous pathogen's code on purpose, or you could unwittingly do so because someone tampered with your digital instructions. Ordering synthetic genes for viral sequences, says Peccoud, would likely be more difficult today than it was a decade ago.

"There is more awareness of the industry, and they are taking this more seriously," he says. "There is no specific regulation, though."

Trying to lock down the interconnected machines that enable synthetic biology, secure its lab processes, and keep dangerous pathogens out of the hands of bad actors is part of a relatively new field: cyberbiosecurity, whose name Peccoud and colleagues introduced in a 2018 paper.

Biological threats feel especially acute right now, during the ongoing pandemic. COVID-19 is a natural pathogen, not one engineered in a lab. But future outbreaks could start from a bug nature didn't build, if the wrong people get ahold of the right genetic sequences and put them in the right sequence. Securing the equipment and processes that make synthetic biology possible, so that doesn't happen, is part of why the field of cyberbiosecurity was born.

The Origin Story

It is perhaps no coincidence that the FBI pinged Peccoud when it did: soon after a journalist ordered a sequence of smallpox DNA and wrote, for The Guardian, about how easy it was. "That was not good press for anybody," says Peccoud. Previously, in 2002, the Pentagon had funded SUNY Stony Brook researchers to try something similar: They ordered bits of polio DNA piecemeal and, over the course of three years, strung them together.

Although many years have passed since those early gotchas, the current patchwork of regulations still wouldn't necessarily prevent someone from pulling similar tricks now, and the technological systems that synthetic biology runs on are more intertwined — and so perhaps more hackable — than ever. Researchers like Peccoud are working to bring awareness to those potential problems, to promote accountability, and to provide early-detection tools that would catch the whiff of a rotten act before it became one.

Peccoud notes that if someone wants to get access to a specific pathogen, it is probably easier to collect it from the environment or take it from a biodefense lab than to whip it up synthetically. "However, people could use genetic databases to design a system that combines different genes in a way that would make them dangerous together without each of the components being dangerous on its own," he says. "This would be much more difficult to detect."

After his meeting with the FBI, Peccoud grew more interested in these sorts of security questions. So he was paying attention when, in 2010, the Department of Health and Human Services — now helping manage the response to COVID-19 — created guidance for how to screen synthetic biology orders, to make sure suppliers didn't accidentally send bad actors the sequences that make up bad genomes.

Guidance is nice, Peccoud thought, but it's just words. He wanted to turn those words into action: into a computer program. "I didn't know if it was something you can run on a desktop or if you need a supercomputer to run it," he says. So, one summer, he tasked a team of student researchers with poring over the sentences and turning them into scripts. "I let the FBI know," he says, having both learned his lesson and wanting to get in on the game.

Peccoud later joined forces with Randall Murch, a former FBI agent and current Virginia Tech professor, and a team of colleagues from both Virginia Tech and the University of Nebraska-Lincoln, on a prototype project for the Department of Defense. They went into a lab at the University of Nebraska-Lincoln and assessed all its cyberbio-vulnerabilities. The lab develops and produces prototype vaccines, therapeutics, and prophylactic components: exactly the kind of place that you always, and especially right now, want to keep secure.

The team found dozens of Achilles' heels, and put them in a private report. Not long after that project, the two and their colleagues wrote the paper that first used the term "cyberbiosecurity." A second paper, led by Murch, came out five months later and provided a proposed definition and more comprehensive perspective on cyberbiosecurity. But although it's now a buzzword, it's the definition, not the jargon, that matters. "Frankly, I don't really care if they call it cyberbiosecurity," says Murch. Call it what you want: Just pay attention to its tenets.

A Database of Scary Sequences

Peccoud and Murch, of course, aren't the only ones working to screen sequences and secure devices. At the nonprofit Battelle Memorial Institute in Columbus, Ohio, for instance, scientists are working on solutions that balance the openness inherent to science and the closure that can stop bad stuff. "There's a challenge there that you want to enable research but you want to make sure that what people are ordering is safe," says the organization's Neeraj Rao.

Rao can't talk about the work Battelle does for the spy agency IARPA, the Intelligence Advanced Research Projects Activity, on a project called Fun GCAT, which aims to use computational tools to deep-screen gene-sequence orders to see if they pose a threat. He can, though, talk about a twin-type internal project: ThreatSEQ (pronounced, of course, "threat seek").

The project started when "a government customer" (as usual, no one will say which) asked Battelle to curate a list of dangerous toxins and pathogens, and their genetic sequences. The researchers even started tagging sequences according to their function — like whether a particular sequence is involved in a germ's virulence or toxicity. That helps if someone is trying to use synthetic biology not to gin up a yawn-inducing old bug but to engineer a totally new one. "How do you essentially predict what the function of a novel sequence is?" says Rao. You look at what other, similar bits of code do.

"We were creating wiki of all these nasty things," says Rao. As they were working, they realized that DNA manufacturers could potentially scan in sequences that people ordered, run them against the database, and see if anything scary matched up. Kind of like that plagiarism software your college professors used.

Battelle began offering its screening capability as ThreatSEQ. When customers (currently including Twist Bioscience) submit their sequences and get a report back, the manufacturers make the final decision about whether to fulfill a flagged order: whether, in the analogy, to give an F for plagiarism. After all, legitimate researchers do legitimately need to have DNA from legitimately bad organisms.
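
The plagiarism-checker idea can be sketched in a few lines of Python. This is a toy illustration only, not ThreatSEQ's actual method, which the article does not describe. It assumes a hypothetical `FLAGGED` dictionary of made-up placeholder strings, breaks each sequence into overlapping windows of k letters (k-mers), and flags an order when it contains a large share of a listed entry's windows. The function names, the window size, and the threshold are all assumptions chosen for readability.

```python
from typing import Dict, List, Set


def kmers(seq: str, k: int) -> Set[str]:
    """Return the set of all length-k windows in a DNA string (case-insensitive)."""
    seq = seq.upper()
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def screen_order(order: str, flagged: Dict[str, str],
                 k: int = 8, threshold: float = 0.5) -> List[str]:
    """Return the names of flagged entries that the order substantially contains.

    An entry counts as a hit when at least `threshold` of its k-mers also
    appear in the order. Both defaults are arbitrary illustrative values.
    """
    order_kmers = kmers(order, k)
    hits = []
    for name, reference in flagged.items():
        ref_kmers = kmers(reference, k)
        if not ref_kmers:  # reference shorter than k: nothing to compare
            continue
        shared = len(order_kmers & ref_kmers) / len(ref_kmers)
        if shared >= threshold:
            hits.append(name)
    return hits


# Hypothetical database: a made-up placeholder string, not a real sequence.
FLAGGED = {"toy_flagged_fragment": "ATGCGTACGTTAGCCTAGGCTAACGT"}

# An order that embeds the placeholder fragment, and one that does not.
order_with_match = "CCCC" + FLAGGED["toy_flagged_fragment"] + "GGGG"
benign_order = "T" * 30

print(screen_order(order_with_match, FLAGGED))
print(screen_order(benign_order, FLAGGED))
```

As in the article, a hit here is only a flag. A person at the manufacturer would still decide whether the customer and the order check out.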

"Maybe it's the CDC," says Rao. "If things check out, oftentimes [the manufacturers] will fulfill the order." If it's your aggrieved uncle seeking the virulent pathogen, maybe not. But ultimately, no one is stopping the manufacturers from doing so.

Beyond that kind of tampering, though, cyberbiosecurity also includes keeping a lockdown on the machines that make the genetic sequences. "Somebody now doesn't need physical access to infrastructure to tamper with it," says Rao. So it needs the same cyber protections as other internet-connected devices.

Scientists are also now using DNA to store data — encoding information in its bases, rather than into a hard drive. To download the data, you sequence the DNA and read it back into a computer. But if you think like a bad guy, you'd realize that a bad guy could then, for instance, insert a computer virus into the genetic code, and when the researcher went to nab her data, her desktop would crash or infect the others on the network.
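
To make the storage idea concrete, here is a minimal Python sketch that assumes the simplest possible mapping: two bits per base, with A, C, G, and T standing for 00, 01, 10, and 11. The mapping and the function names are assumptions for illustration. Real DNA storage schemes are more elaborate, adding error correction among other things. The point is that the decoder hands back exactly the bytes that were encoded, whatever they are.

```python
BASES = "ACGT"  # assumed mapping: A=00, C=01, G=10, T=11


def encode(data: bytes) -> str:
    """Encode bytes as a DNA string, four bases per byte, high bits first."""
    out = []
    for byte in data:
        for shift in (6, 4, 2, 0):
            out.append(BASES[(byte >> shift) & 0b11])
    return "".join(out)


def decode(dna: str) -> bytes:
    """Decode a DNA string produced by encode() back into bytes."""
    if len(dna) % 4 != 0:
        raise ValueError("length must be a multiple of 4 bases")
    out = bytearray()
    for i in range(0, len(dna), 4):
        byte = 0
        for base in dna[i:i + 4]:
            byte = (byte << 2) | BASES.index(base)  # ValueError on a non-ACGT letter
        out.append(byte)
    return bytes(out)


message = b"hi"
strand = encode(message)
print(strand)
print(decode(strand))
```

Nothing in `decode` knows or cares what the bytes mean. That is why software reading sequenced DNA would need to treat the result like any other untrusted input.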

Something like that actually happened in 2017 at the USENIX Security Symposium, an annual computer security conference: Researchers from the University of Washington encoded malware into DNA, and when the gene sequencer read the DNA, it corrupted the sequencer's software, then the computer that controlled it.

"This vulnerability could be just the opening an adversary needs to compromise an organization's systems," Inspirion Biosciences' J. Craig Reed and Nicolas Dunaway wrote in a paper for Frontiers in Bioengineering and Biotechnology, included in an e-book that Murch edited called Mapping the Cyberbiosecurity Enterprise.

Where We Go From Here

So what to do about all this? That's hard to say, in part because we don't know how big a current problem any of it poses. As noted in Mapping the Cyberbiosecurity Enterprise, "Information about private sector infrastructure vulnerabilities or data breaches is protected from public release by the Protected Critical Infrastructure Information (PCII) Program," if the private companies share the information with the government. "Government sector vulnerabilities or data breaches," meanwhile, "are rarely shared with the public."

The regulations that could rein in problems aren't as robust as many would like them to be, and much good behavior is technically voluntary — although guidelines and best practices do exist from organizations like the International Gene Synthesis Consortium and the National Institute of Standards and Technology.

Rao thinks it would be smart if grant-giving agencies like the National Institutes of Health and the National Science Foundation required any scientists who took their money to work with manufacturing companies that screen sequences. But he also still thinks we're on our way to being ahead of the curve, in terms of preventing print-your-own bioproblems: "What I think is encouraging right now is the fact that we're even having this discussion," says Rao.

Peccoud, for his part, has worked to keep such conversations going, including by doing training for the FBI and planning a workshop for students in which they imagine and work to guard against the malicious use of their research. But actually, Peccoud believes that human error, flawed lab processes, and mislabeled samples might be bigger threats than the outside ones. "Way too often, I think that people think of security as, 'Oh, there is a bad guy going after me,' and the main thing you should be worried about is yourself and errors," he says.

Murch thinks we're only at the beginning of understanding where our weak points are, and how many times they've been bruised. Decreasing those contusions, though, won't just take more secure systems. "The answer won't be technical only," he says. It'll be social, political, policy-related, and economic — a cultural revolution all its own.

Sharing land and other resources among farmers isn’t new. But research shows it may be increasingly relevant in a time of climatic upheaval.

The livestock trucks arrived all night. One after the other they backed up to the wood chute leading to a dusty corral and loosed their cargo: 580 head of cattle by the time the last truck pulled away at 3 p.m. the next day. Dan Probert, astride his horse, guided the cows to paddocks of pristine grassland stretching alongside the snow-peaked Wallowa Mountains. They’d spend the summer here grazing bunchgrass and clovers and biscuitroot. The scuffle of their hooves and nibbles of their teeth would mimic the elk, antelope and bison that are thought to have historically roamed this portion of northeastern Oregon’s Zumwalt Prairie, helping grasses grow and restoring health to the soil.

The cows weren’t Probert’s, although the fifth-generation rancher and one other member of the Carman Ranch Direct grass-fed beef collective also raise their own herds here for part of every year. But in spring, when the prairie is in bloom, Probert receives cattle from several other ranchers. As the grasses wither in October, the cows move on to graze fertile pastures throughout the Columbia Basin, which stretches across several Pacific Northwest states; some overwinter on a vegetable farm in central Washington, feeding on corn leaves and pea vines left behind after harvest.

Sharing land and other resources among farmers isn’t new. But research shows it may be increasingly relevant in a time of climatic upheaval, potentially influencing “farmers to adopt environmentally friendly practices and agricultural innovation,” according to a 2021 paper in the Journal of Economic Surveys. Farmers might share knowledge about reducing pesticide use, says Heather Frambach, a supply chain consultant who works with farmers in California and elsewhere. As a group they may better qualify for grants to monitor soil and water quality.

Most research around such practices applies to cooperatives, whose owner-members equally share governance and profits. But a collective like Carman Ranch’s, spearheaded by fourth-generation rancher Cory Carman, who purchases beef from eight other ranchers to sell under one “regeneratively” certified brand, shows that when producers band together, they can achieve eco-benefits that would be elusive if they worked alone.

Carman knows from experience. Taking over her family's land in 2003, she started selling grass-fed beef “because I really wanted to figure out how to not participate in the feedlot world, to have a healthier product. I didn't know how we were going to survive,” she says. Part of her land sits on a degraded portion of Zumwalt Prairie replete with invasive grasses; working to restore it, she thought, “What good does it do to kill myself trying to make this ranch more functional? If you want to make a difference, change has to be more than single entrepreneurs on single pieces of land. It has to happen at a community level.” The seeds of her collective were sown.

Raising 100 percent grass-fed beef requires land that’s got something for cows to graze in every season — which most collective members can’t access individually. So, they move cattle around their various parcels. It’s practical, but it also restores nutrient flows “to the way they used to move, from lowlands and canyons during the winter to higher-up places as the weather gets hot,” Carman says. Meaning, vitamins and minerals in soil pass into plants through their roots, then into cattle as they graze, then back around as the cows walk around pooping.

Cory Carman sells grass-fed beef, which requires land that’s got something for cows to graze in every season.

Courtesy Cory Carman

Each collective member has individual ecological goals: Carman brought in pigs to root out invasive grasses and help natives flourish. Probert also heads a more conventional grain-finished beef collective with 100 members, and their combined 6.5 million ranchland acres were eligible for a grant supporting climate-friendly practices, which compels them to improve soil and water health and biodiversity and make their product “as environmentally friendly as possible,” Probert says. The Washington veg farmer reduced tilling and pesticide use thanks to the ecoservices of visiting cows. Similarly, a conventional hay farmer near Carman has reduced his reliance on fertilizer by letting cattle graze the cover crops he plants on 80 acres.

Additionally, the collective must meet the regenerative standards promised on their label, another way in which they work together to achieve ecological goals. David LeZaks, formerly a senior fellow at finance-focused ecology nonprofit Croatan Institute, says it’s hard for individual farmers to access monetary assistance. “But it's easier to get financing flowing when you increase the scale with cooperatives or collectives,” he adds. “This supports producers in ways that can lead to better outcomes on the landscape.”

For example, working together can help farmers minimize waste by using more of an animal, something our frugal ancestors excelled at. Small-scale beef producers normally throw out hides; Thousand Hills’ 50 regenerative beef producers together have enough to sell to Timberland to make carbon-neutral leather. In another example, working collectively resulted in the support of more diverse farms: Meadowlark Community Mill in Wisconsin went from working with one wheat grower to sourcing from several organic wheat growers marketing flour under one premium brand.

Another example shows how these collaborations can foster greater equity, among other benefits: The Federation of Southern Cooperatives has a mission to support Black farmers as they build community health. It owns several hundred forest acres in Alabama, where it teaches members to steward their own forest land and use it to grow food; one member co-op raises goats to graze forest debris and produce milk. Adding the combined acres of member forest land to the Federation’s, the group qualified for a federal conservation grant that will keep this resource available for food production, and community environmental and mental health benefits. “That's the value-add of the collective land-owner structure,” says Dãnia Davy, director of land retention and advocacy.

New, smaller scale farmers might gain the most from collective and cooperative models, says Jordan Treakle, national program coordinator of the National Family Farm Coalition (NFFC). Many of them enter farming specifically to raise healthy food in healthy ways — with organic production, or livestock for soil fertility. With land, equipment and labor prohibitively expensive, farming collectively allows shared costs and risk that buy farmers the time necessary to “build soil fertility and become competitive” in the marketplace, Treakle says. Just keeping them in business is an eco-win; when small farms fail, they tend to get sold for development or absorbed into less-diversified operations, so the effects of their success can “reverberate through the entire local economy.”

Frambach, the supply chain consultant, has been experimenting with what she calls “collaborative crop planning,” where she helps farmers strategize what they’ll plant as a group. “A lot of them grow based on what they hear their neighbor is going to do, and that causes really poor outcomes,” she says. “Nobody replanted cauliflower after the [atmospheric rivers in California] this year and now there's a huge shortage of cauliflower.” A group plan can avoid the under-planting that causes farmers to lose out on revenue.

It helps avoid overplanted crops, too, which small farmers might have to plow under or compost. Larger farmers, conversely, can sell surplus produce into the upcycling market — to Matriark Foods, for example, which turns it into value-add products like pasta sauce for companies like Sysco that supply institutional kitchens at colleges and hospitals. Frambach and Anna Hammond, Matriark’s CEO, want to collectivize smaller farmers so that they can sell to the likes of Matriark and “not lose an incredible amount of income,” Hammond says.

Ultimately, farming is fraught with challenges and even collectivizing doesn’t guarantee that farms will stay in business. But with agriculture accounting for almost 30 percent of greenhouse gas emissions globally, there's an “urgent” need to shift farming practices to more environmentally sustainable models, as well as a “demand in the marketplace for it,” says NFFC’s Treakle. “The growth of cooperative and collective farming can be a huge, huge boon for the ecological integrity of the system.”

Story by Big Think

We live in strange times, when the technology we depend on the most is also that which we fear the most. We celebrate cutting-edge achievements even as we recoil in fear at how they could be used to hurt us. From genetic engineering and AI to nuclear technology and nanobots, the list of awe-inspiring, fast-developing technologies is long.

However, this fear of the machine is not as new as it may seem. Technology has a longstanding alliance with power and the state. The dark side of human history can be told as a series of wars whose victors are often those with the most advanced technology. (There are exceptions, of course.) Science, and its technological offspring, follows the money.

This fear of the machine seems to be misplaced. The machine has no intent: only its maker does. The fear of the machine is, in essence, the fear we have of each other — of what we are capable of doing to one another.

How AI changes things

Sure, you would reply, but AI changes everything. With artificial intelligence, the machine itself will develop some sort of autonomy, however ill-defined. It will have a will of its own. And this will, if it reflects anything that seems human, will not be benevolent. With AI, the claim goes, the machine will somehow know what it must do to get rid of us. It will threaten us as a species.

Well, this fear is also not new. Mary Shelley wrote Frankenstein in 1818 to warn us of what science could do if it served the wrong calling. In the case of her novel, Dr. Frankenstein’s call was to win the battle against death — to reverse the course of nature. Granted, any cure of an illness interferes with the normal workings of nature, yet we are justly proud of having developed cures for our ailments, prolonging life and increasing its quality. Science can achieve nothing more noble. What messes things up is when the pursuit of good is confused with that of power. In this distorted scale, the more powerful the better. The ultimate goal is to be as powerful as gods — masters of time, of life and death.

Back to AI, there is no doubt the technology will help us tremendously. We will have better medical diagnostics, better traffic control, better bridge designs, and better pedagogical animations to teach in the classroom and virtually. But we will also have better winnings in the stock market, better war strategies, and better soldiers and remote ways of killing. This grants real power to those who control the best technologies. It increases the take of the winners of wars — those fought with weapons, and those fought with money.

A story as old as civilization

The question is how to move forward. This is where things get interesting and complicated. We hear over and over again that there is an urgent need for safeguards, for controls and legislation to deal with the AI revolution. Great. But if these machines are essentially functioning in a semi-black box of self-teaching neural nets, how exactly are we going to make safeguards that are sure to remain effective? How are we to ensure that the AI, with its unlimited ability to gather data, will not come up with new ways to bypass our safeguards, the same way that people break into safes?

The second question is that of global control. As I wrote before, overseeing new technology is complex. Should countries create a World Mind Organization that controls the technologies that develop AI? If so, how do we organize this planet-wide governing board? Who should be a part of its governing structure? What mechanisms will ensure that governments and private companies do not secretly break the rules, especially when to do so would put the most advanced weapons in the hands of the rule breakers? They will need those, after all, if other actors break the rules as well.

As before, the countries with the best scientists and engineers will have a great advantage. A new international détente will emerge in the mold of the nuclear détente of the Cold War. Again, we will fear destructive technology falling into the wrong hands. This can happen easily. AI machines will not need to be built at an industrial scale, as nuclear capabilities were, and AI-based terrorism will be a force to reckon with.

So here we are, afraid of our own technology all over again.

What is missing from this picture? It continues to illustrate the same destructive pattern of greed and power that has defined so much of our civilization. The failure it shows is moral, and only we can change it. We define civilization by the accumulation of wealth, and this worldview is killing us. The project of civilization we invented has become self-cannibalizing. As long as we do not see this, and we keep on following the same route we have trodden for the past 10,000 years, it will be very hard to legislate the technology to come and to ensure such legislation is followed. Unless, of course, AI helps us become better humans, perhaps by teaching us how stupid we have been for so long. This sounds far-fetched, given who this AI will be serving. But one can always hope.