The New Prospective Parenthood: When Does More Info Become Too Much?

Obstetric ultrasound of a fourth-month fetus.

Peggy Clark was 12 weeks pregnant when she went in for a nuchal translucency (NT) scan to see whether her unborn son had Down syndrome. The sonographic scan measures how much fluid has accumulated at the back of the baby's neck: the more fluid, the higher the likelihood of an abnormality. The technician said the baby was in such an odd position that the test couldn't be done. Clark, whose name has been changed to protect her privacy, was told to come back in a week and a half to see if the baby had moved.


"It was like the baby was saying, 'I don't want you to know,'" she recently recalled.

When they went back, they found the baby had a thickened neck. It's just one factor in identifying Down syndrome, but it's a strong indication. At that point, she was 13 weeks and four days pregnant. She went to the doctor the next day for a blood test. It took another two weeks for the results, which again came back positive, though there was still a 0.3% margin of error. Clark said she knew she wanted to terminate the pregnancy if the baby had Down syndrome, but she didn't want the guilt of knowing there was a small chance the tests were wrong. By then, it was too late for chorionic villus sampling (CVS), in which cells from the chorionic villi are removed from the placenta and tested. And it was too early for an amniocentesis, which isn't done until 14 to 20 weeks into the pregnancy. So, she says, she had to sit and wait, calling those few weeks "brutal."

By the time they did the amnio, she was already nearly 18 weeks pregnant and was getting really big. When that test also came back positive, she made the anguished decision to end the pregnancy.

Now, three years after Clark's painful experience, a newer form of prenatal testing routinely gives would-be parents more information much earlier, especially for women who are over 35. As early as nine weeks into their pregnancies, women can have a simple blood test to detect abnormalities in chromosome 21, which indicate Down syndrome, as well as in chromosomes 13 and 18. Using next-generation sequencing technologies, the test examines fragments of fetal DNA circulating in the mother's blood, which eliminates the risks of sampling fluid or tissue directly from the amniotic sac or placenta.

"Finding out your baby has Down syndrome at 11 or 12 weeks is much easier for parents to make any decision they may want to make, as opposed to 16 or 17 weeks," said Dr. Leena Nathan, an obstetrician-gynecologist in UCLA's healthcare system. "People are much more willing or able to perhaps make a decision to terminate the pregnancy."

But with the growing sophistication of prenatal tests, it seems that the more questions are answered, the more new ones arise: questions that previous generations never had to face. And as genomic sequencing improves in its predictive accuracy at the earliest stages of life, the challenges only stand to increase. Imagine, for example, learning your child's lifetime risk of breast cancer when you are ten weeks pregnant. Would you terminate if you knew she had a 70 percent risk? What about 40 percent? Lots of hard questions. Few easy answers. Once the cost of whole genome sequencing drops low enough, probably within the next five to ten years according to experts, such comprehensive testing may become the new standard of care. Welcome to the future of prospective parenthood.


How Did We Get Here?

Prenatal testing is not new. In 1979, amniocentesis was used to detect whether certain inherited diseases had been passed on to the fetus. Through the 1980s, parents could be tested to see if they carried diseases such as Tay-Sachs, sickle cell anemia, cystic fibrosis, and Duchenne muscular dystrophy. By the early 1990s, doctors could test for even more genetic diseases, and CVS was becoming available.

A few years later, a technique called preimplantation genetic diagnosis (PGD) emerged, in which embryos created in a lab from sperm and harvested eggs are allowed to grow for several days, after which a few cells are removed and tested for genetic diseases. Unaffected embryos can then be transferred to the mother's uterus. Once in vitro fertilization (IVF) took off, so did genetic testing. Labs test the embryonic cells and return the results to IVF facilities within 24 hours so that embryos can be selected. With IVF, genetic tests are done so early that parents don't even have to decide whether to terminate a pregnancy: embryos with problems often simply aren't used.

"It was a very expensive endeavor but exciting to see our ability to avoid disorders, especially for families that don't want to terminate a pregnancy," said Sara Katsanis, an expert in genetic testing who teaches at Duke University. "In one way, it's a blessing to have this information (about genetic disorders). On the other hand, it's very difficult to deal with. To make that decision about whether to terminate a pregnancy is very hard."

Just Because We Can, Does It Mean We Should?

In the future, parents may not only find out whether their child has a genetic disease but also be able to fix the problem through a highly controversial process called gene editing. But just because we can, does it mean we should? So far, genes have been edited in other species, but the procedure has not been used on a human embryo for reproductive purposes, only for research.

"There's a lot of bioethics debate and convening of groups to try to figure out where genetic manipulation is going to be useful and necessary, and where it is going to need some restrictions," said Katsanis. She notes that genetic manipulation is very useful in areas like cancer research, so one wouldn't want to over-regulate it.

There are already some criteria for which genes can be manipulated and which should be left alone, said Evan Snyder, professor and director of the Center for Stem Cells and Regenerative Medicine at Sanford Children's Health Research Center in La Jolla, Calif. He noted that genes don't act in isolation: modifying one that causes disease might disrupt others, and there may be unintended consequences.


But gene editing of embryos may take years to become an acceptable practice, if it ever does, so a more pressing issue is the rationale behind embryo selection during IVF. Prospective parents can end up with anywhere from zero to thirty embryos from the procedure and must choose only one (rarely two) to implant. Since embryos are now routinely tested for certain diseases, and selected or discarded based on that information, should it be ethical, and legal, to select for particular traits, too? So far, parents can select for gender, but for no other traits. Whether trait selection becomes routine is a matter of time and business opportunity, Katsanis said. For now, the old-fashioned way of making a baby, combined with the luck of the draw, seems to be the marketplace's preferred method. But that could change.

"You can easily see a family deciding not to implant a lethal gene for Tay-Sachs or Duchenne or cystic fibrosis. It becomes more ethically challenging when you make a decision to implant girls and not any of the boys," said Snyder. "And then as we get better and better, we can start assigning genes to certain skills and this starts to become science fiction."

Once a pregnancy occurs, prospective parents of all stripes will face decisions about whether to keep the fetus based on the information that increasingly robust prenatal testing will provide. What influences their decision is the crux of another ethical knot, said Snyder. A clear-cut rationale would be if the baby is anencephalic, meaning it is missing major parts of the brain. A harder one might be, "It's a girl, and I wanted a boy," or "The child will only be 5' 2" tall in adulthood."

"Those are the extremes, but the ultimate question is: At what point is it a legitimate response to say, 'I don't want to keep this baby'?" he said. Of course, people's responses will vary, so the bigger conundrum for society is: Where, if anywhere, should a line be drawn? Should a woman who is within the legal window for termination (up to around 24 weeks, though it varies by state) be allowed to terminate her pregnancy for any reason whatsoever? Or must she have a so-called "legitimate" rationale?

"As the technical dilemmas get fixed, some of the ethical dilemmas get fixed. But others arise. It's kind of like ethical whack-a-mole," Snyder said.

One of the newer moles to emerge is this: If one can fix a damaged gene, for how long should it stay fixed? In one child? In the family's whole line, going forward? If the editing is done in the embryo soon after the egg and sperm have united, before the cells begin to specialize, when there are, say, just two or four cells, it will likely affect the child's reproductive cells as well, and thus be passed on to all of that child's descendants.

"This notion of changing things forever is a major debate," Snyder said. "It literally gets into metaphysics. On the one hand, you could say, well, wouldn't it be great to get rid of cystic fibrosis forever? What bad could come of getting rid of a mutant gene forever? But we're not smart enough to know what other things the gene might be doing, and how disrupting one thing could affect this network."

As with any tool, there are risks and benefits, said Michael Kalichman, director of the Research Ethics Program at the University of California, San Diego. While we can envision diverse benefits from a better understanding of human biology and medicine, it is clear that our species can also misuse those tools, from stigmatizing children with certain genetic traits as "less than," as in dystopian sci-fi movies like Gattaca, to judging parents for making sure their child does or doesn't carry a particular trait.

"The best chance to ensure that the benefits of this technology will outweigh the risks," Kalichman said, "is for all stakeholders to engage in thoughtful conversations, strive for understanding of diverse viewpoints, and then develop strategies and policies to protect against those uses that are considered to be problematic."

Thanks to safety precautions from the COVID-19 pandemic, a strain of influenza has been completely eliminated.

If you were one of the millions who masked up, washed your hands thoroughly and socially distanced, pat yourself on the back—you may have helped change the course of human history.

Scientists say that thanks to these safety precautions, which were introduced in early 2020 to stop transmission of the novel coronavirus that causes COVID-19, a strain of influenza has been completely eliminated. This marks the first time in human history that a virus has been wiped out through non-pharmaceutical interventions alone, without vaccines or drugs.

The flu shot, explained

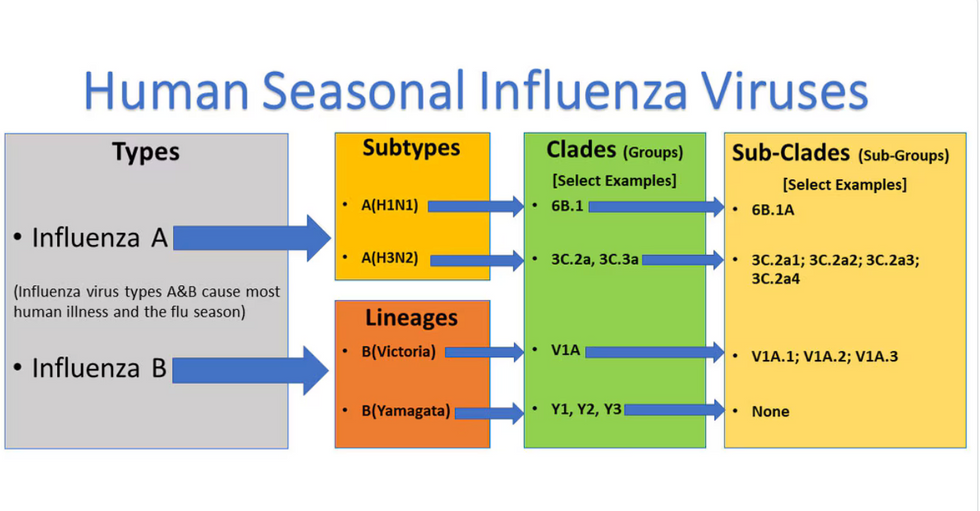

Influenza A and B viruses cause the majority of human flu illness and drive the annual flu season.

Centers for Disease Control

For more than a decade, flu shots have protected against two types of the influenza virus: type A and type B. While there are four types of influenza (A, B, C, and D), only types A, B, and C are known to infect humans, and only A and B cause seasonal flu epidemics. In other words, if you catch the flu during flu season, you’re most likely sick with flu type A or B.

Most flu vaccines contain inactivated, or killed, influenza virus. These inactivated viruses can’t cause illness in humans, but when administered as part of a vaccine, they teach a person’s immune system to recognize and fight those viruses when it encounters them in the wild.

Each spring, a panel of experts advises the US Food and Drug Administration on which strains of each flu type to include in that year’s flu vaccine, based on which strains surveillance data show are circulating and which they expect to cause the most illness in the upcoming flu season. For the past decade, Americans have had access to vaccines that protect against two strains of influenza A and two lineages of influenza B, known as the Victoria lineage and the Yamagata lineage. But this year, the seasonal flu shot won’t include the Yamagata lineage, because it is no longer circulating among humans.

How Yamagata Disappeared

Flu surveillance data from the Global Initiative on Sharing All Influenza Data (GISAID) shows that the Yamagata lineage of flu type B has not been sequenced since April 2020.

Nature

Experts believe that the Yamagata lineage was already in decline before the pandemic, likely because it was naturally less capable of infecting large numbers of people than the other strains. When the COVID-19 pandemic hit, the resulting safety precautions, such as social distancing, isolating, hand-washing, and masking, were enough to drive the lineage to extinction.

Because the Yamagata lineage hasn’t been circulating since 2020, the FDA elected to remove it from the seasonal flu vaccine. This marks the first time since 2012 that the annual flu shot is trivalent (three-component) rather than quadrivalent (four-component).

Should I still get the flu shot?

The flu shot will protect against fewer strains this year—but that doesn’t mean we should skip it. Influenza places a substantial health burden on the United States every year, responsible for hundreds of thousands of hospitalizations and tens of thousands of deaths. The flu shot has been shown to prevent millions of illnesses each year (more than six million during the 2022-2023 season). And while it’s still possible to catch the flu after getting the flu shot, studies show that people are far less likely to be hospitalized or die when they’re vaccinated.

Another unexpected benefit of dropping the Yamagata strain from the seasonal vaccine? It may speed up flu vaccine production and enable manufacturers to make more doses, helping countries that face flu vaccine shortages and potentially saving millions more lives.

After his grandmother’s dementia diagnosis, one man invented a snack to keep her healthy and hydrated.

Founder Lewis Hornby and his grandmother Pat, sampling Jelly Drops—an edible gummy containing water and life-saving electrolytes.

On a visit to his grandmother’s nursing home in 2016, college student Lewis Hornby made a shocking discovery: Dehydration is a common (and dangerous) problem among seniors, especially those who have been diagnosed with dementia.

Hornby’s grandmother, Pat, had difficulty keeping up her water intake as she got older, a common issue with seniors. As we age, our body composition changes, and we naturally hold less water than younger adults or children do, so it’s easier to become dehydrated quickly if fluids aren’t replenished. Our thirst signals also naturally diminish with age, meaning our bodies aren’t as good as they once were at telling us we need to rehydrate. Together, these changes create a perfect storm for dehydration. In Pat’s case, her dehydration was so severe she nearly died.

When Hornby visited his grandmother at her nursing home afterward, he learned that dehydration especially affects people with dementia, who often don’t feel thirst cues at all or may not recognize how to use cups correctly. But while dementia patients often don’t remember to drink water, Hornby noticed that they had less trouble remembering to eat, particularly candy.

Hornby wanted to create a solution for elderly people who struggled to keep up their fluid intake. He spent the next eighteen months researching and designing a solution and securing funding for his project. In 2019, Hornby won a sizable grant from the Alzheimer’s Society, a UK-based care and research charity for people with dementia and their caregivers. Together, through the charity’s Accelerator Program, they created a bite-sized, sugar-free, edible jelly drop that looked and tasted like candy. The candy, called Jelly Drops, contained 95% water plus electrolytes, important minerals that are often lost during dehydration. The final product launched in 2020 and was an immediate success. The drops provided extra hydration to the elderly and helped keep dementia patients safe, since dehydration commonly leads to confusion, hospitalization, and sometimes even death.

Not only did Jelly Drops quickly become a favorite snack among dementia patients in the UK, but they were able to provide an additional boost of hydration to hospital workers during the pandemic. In NHS coronavirus hospital wards, patients infected with the virus were regularly given Jelly Drops to keep their fluid levels normal—and staff members snacked on them as well, since long shifts and personal protective equipment (PPE) they were required to wear often left them feeling parched.

In April 2022, Jelly Drops launched in the United States. The company continues to donate 1% of its profits to help fund Alzheimer’s research.