How a Deadly Fire Gave Birth to Modern Medicine

The Cocoanut Grove fire in Boston in 1942 tragically claimed 490 lives, but was the catalyst for several important medical advances.

On the evening of November 28, 1942, more than 1,000 revelers from the Boston College-Holy Cross football game jammed into the Cocoanut Grove, Boston's oldest nightclub. When a spark from faulty wiring accidentally ignited an artificial palm tree, the packed nightspot, which was only designed to accommodate about 500 people, was quickly engulfed in flames. In the ensuing panic, hundreds of people were trapped inside, with most exit doors locked. Bodies piled up by the only open entrance, jamming the exits, and 490 people ultimately died in the worst fire in the country in forty years.

"People couldn't get out," says Dr. Kenneth Marshall, a retired plastic surgeon in Boston and president of the Cocoanut Grove Memorial Committee. "It was a tragedy of mammoth proportions."

Within half an hour of the start of the blaze, the Red Cross mobilized more than five hundred volunteers in what one newspaper called a "Rehearsal for Possible Blitz." The mayor of Boston imposed martial law. More than 300 victims, many of whom subsequently died, were taken to Boston City Hospital in one hour, averaging one victim every eleven seconds, while Massachusetts General Hospital admitted 114 victims in two hours. In the hospitals, 220 victims clung precariously to life, in agonizing pain from massive burns, their bodies ravaged by infection.

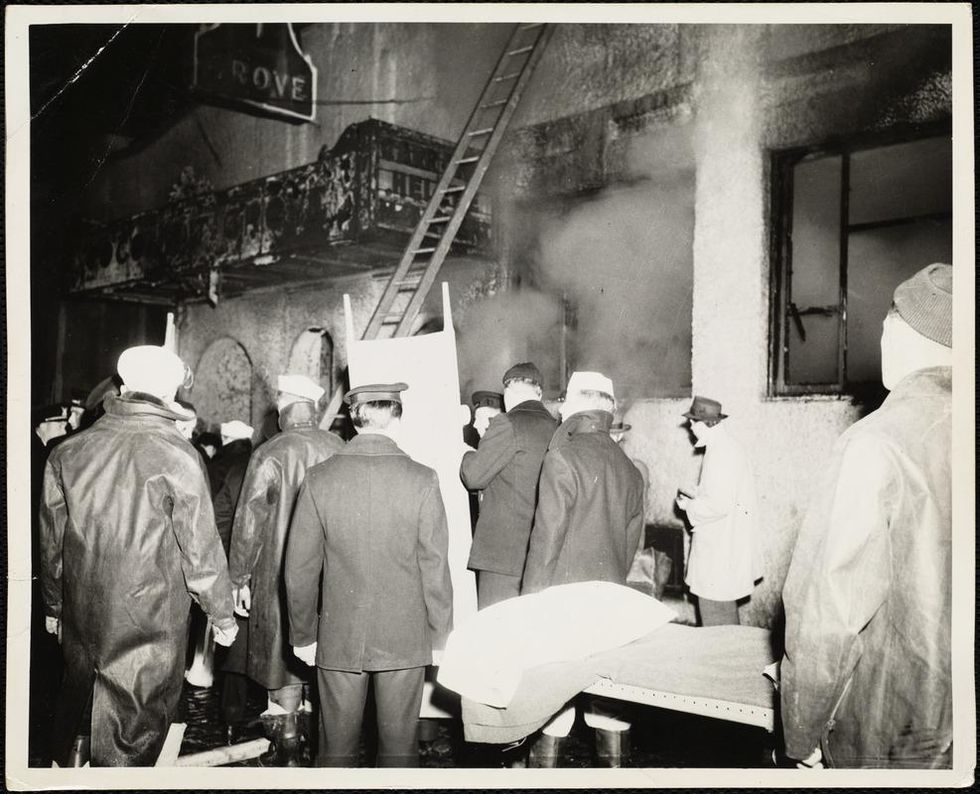

The scene of the fire.

Boston Public Library

Tragic Losses Prompted Revolutionary Leaps

But there is a silver lining: this horrific disaster prompted dramatic changes in safety regulations to prevent another catastrophe of this magnitude and led to the development of medical techniques that eventually saved millions of lives. It transformed burn care treatment and the use of plasma on burn victims, but most importantly, it introduced to the public a new wonder drug that revolutionized medicine, midwifed the birth of the modern pharmaceutical industry, and nearly doubled life expectancy, from 48 years at the turn of the 20th century to 78 years in the post-World War II years.

The devastating grief of the survivors also led to the first published study of post-traumatic stress disorder by pioneering psychiatrist Alexandra Adler, daughter of famed Viennese psychoanalyst Alfred Adler, who was a student of Freud. Dr. Adler studied the anxiety and depression that followed this catastrophe, according to the New York Times, and "later applied her findings to the treatment [of] World War II veterans."

Dr. Ken Marshall is intimately familiar with the lingering psychological trauma of enduring such a disaster. His mother, an Irish immigrant and a nurse in the surgical wards at Boston City Hospital, was on duty that cold Thanksgiving weekend night, and didn't come home for four days. "For years afterward, she'd wake up screaming in the middle of the night," recalls Dr. Marshall, who was four years old at the time. "Seeing all those bodies lined up in neat rows across the City Hospital's parking lot, still in their evening clothes. It was always on her mind and memories of the horrors plagued her for the rest of her life."

The sheer magnitude of casualties prompted overwhelmed physicians to try experimental new procedures that were later successfully used to treat thousands of battlefield casualties. Instead of cutting off blisters and using dyes and tannic acid to treat burned tissues, which can harden the skin, they applied gauze coated with petroleum jelly. Doctors also refined the formula for using plasma--the fluid portion of blood and a medical technology that was just four years old--to replenish bodily liquids that evaporated because of the loss of the protective covering of skin.

"The initial insult with burns is a loss of fluids and patients can die of shock," says Dr. Ken Marshall. "The scientific progress that was made by the two institutions revolutionized fluid management and topical management of burn care forever."

Still, they could not halt the staph infections that kill most burn victims—which prompted the first civilian use of a miracle elixir that was being secretly developed in government-sponsored labs and that ultimately ushered in a new age in therapeutics. Military officials quickly realized this disaster could provide an excellent natural laboratory to test the effectiveness of this drug and see if it could be used to treat the acute traumas of combat in this unfortunate civilian approximation of battlefield conditions. At the time, the very existence of this wondrous medicine—penicillin—was a closely guarded military secret.

From Forgotten Lab Experiment to Wonder Drug

In 1928, Alexander Fleming discovered the curative powers of penicillin, which promised to eradicate infectious pathogens that killed millions every year. But the road to mass-producing enough of the highly unstable mold was littered with seemingly insurmountable obstacles, and it remained a forgotten laboratory curiosity for over a decade. Still, Fleming never gave up, and penicillin's eventual rescue from obscurity was a landmark in scientific history.

In 1940, a group at Oxford University, funded in part by the Rockefeller Foundation, isolated enough penicillin to test it on twenty-five mice, which had been infected with lethal doses of streptococci. Its therapeutic effects were miraculous—the untreated mice died within hours, while the treated ones played merrily in their cages, undisturbed. Subsequent tests on a handful of patients, who were brought back from the brink of death, confirmed that penicillin was indeed a wonder drug. But Britain was then being ravaged by the German Luftwaffe during the Blitz, and there were simply no resources to devote to penicillin during the Nazi onslaught.

In June of 1941, two of the Oxford researchers, Howard Florey and Ernst Chain, embarked on a clandestine mission to enlist American aid. Samples of the temperamental mold were stored in their coats. By October, the Roosevelt Administration had recruited four companies—Merck, Squibb, Pfizer and Lederle—to team up in a massive, top-secret development program. Merck, which had more experience with fermentation procedures, swiftly pulled away from the pack and every milligram they produced was zealously hoarded.

After the nightclub fire, the government ordered Merck to dispatch to Boston whatever supplies of penicillin they could spare and to refine any crude penicillin broth brewing in Merck's fermentation vats. After workers toiled in round-the-clock relays over the course of three days, a refrigerated truck containing thirty-two liters of injectable penicillin left Merck's Rahway, New Jersey, plant on the evening of December 1, 1942. It was accompanied by a convoy of police escorts through four states before arriving in the pre-dawn hours at Massachusetts General Hospital. Dozens of people were rescued from near-certain death in the first public demonstration of the powers of the antibiotic, and the existence of penicillin could no longer be kept secret from inquisitive reporters and an exultant public. The next day, the Boston Globe called it "priceless" and Time magazine dubbed it a "wonder drug."

Within fourteen months, penicillin production escalated exponentially, churning out enough to save the lives of thousands of soldiers, including many from the Normandy invasion. And in October 1945, just weeks after the Japanese surrender ended World War II, Alexander Fleming, Howard Florey and Ernst Chain were awarded the Nobel Prize in medicine. But penicillin didn't just save lives—it helped build some of the most innovative medical and scientific companies in history, including Merck, Pfizer, Glaxo and Sandoz.

"Every war has given us a new medical advance," concludes Marshall. "And penicillin was the great scientific advance of World War II."

Artificial Wombs Are Getting Closer to Reality for Premature Babies

A mannequin of a 24-week-old fetus replicated from MR imaging. Created by: Juliette van Haren, Mark Thielen, Jasper Sterk, Chet Bangaru, and Frank Delbressine, Department of Industrial Design, Eindhoven University of Technology.

In 2017, researchers at the Children's Hospital of Philadelphia grew extremely preterm lambs from hairless to fluffy inside a "biobag," a dark, fluid-filled bag designed to mimic a mother's womb.

This happened over the course of a month, across a delicate period of fetal development that scientists consider the "edge of viability" for survival at birth.

In 2019, Australian and Japanese scientists repeated the success of keeping extremely premature lambs inside an artificial womb environment until they were ready to survive on their own. Those researchers are now developing a treatment strategy for infants born at "the hard limit of viability," between 20 and 23 weeks of gestation. At the same time, Dutch researchers are going so far as to replicate the sound of a mother's heartbeat inside a biobag. These developments signal exciting times ahead--with a touch of science fiction--for artificial womb technologies. But is there a catch?

"There could be quite a lot of infants that would benefit from artificial womb technologies," says Josephine Johnston, a bioethicist and lawyer at The Hastings Center, an independent bioethics research institute in New York. "These technologies can decrease morbidity and mortality for infants at the edge of viability and help them survive without significant damage to the lungs or other problems," she says.

It is a viewpoint shared by Frans van de Vosse, leader of the Cardiovascular Biomechanics research group at Eindhoven University of Technology in the Netherlands. He participates in a university project that recently received more than $3 million in funding from the E.U. to produce a prototype artificial womb for preterm babies between 24 and 28 weeks of gestation by 2024.

The Eindhoven design comes with a fluid-based environment, just like that of the natural womb, where the baby receives oxygen and nutrients through an artificial placenta that is connected to the baby's umbilical cord. "With current incubators, when a respiratory device delivers oxygen into the lungs in order for the baby to breathe, you may harm preterm babies because their lungs are not yet mature for that," says van de Vosse. "But when the lungs are under water, then they can develop, they can mature, and the baby will receive the oxygen through the umbilical cord, just like in the natural womb," he says.

His research team is working to achieve the "perfectly natural" artificial womb based on strict mathematical models and calculations, van de Vosse says. They are even employing 3D printing technology to develop the wombs and artificial babies to test in them--the mannequins, as van de Vosse calls them. These mannequins are being outfitted with sensors that can replicate the environment a fetus experiences inside a mother's womb, including the soothing sound of her heartbeat.

"The Dutch study's artificial womb design is slightly different from everything else we have seen as it encourages a gestateling to experience the kind of intimacy that a fetus does in pregnancy," says Elizabeth Chloe Romanis, an assistant professor in biolaw at Durham Law School in the U.K. But what is a "gestateling" anyway? It's a term Romanis has coined to describe neither a fetus nor a newborn, but an in-between artificial stage.

"Because they aren't born, they are not neonates," Romanis explains. "But also, they are not inside a pregnant person's body, so they are not fetuses. In an artificial womb the fetus is still gestating, hence why I call it gestateling."

The terminology is not just a semantic exercise to lend a name to what medical dictionaries haven't yet defined. "Gestatelings might have a slightly different psychology," says Romanis. "A fetus inside a mother's womb interacts with the mother. A neonate has some kind of self-sufficiency in terms of physiology. But the gestateling doesn't do either of those things," she says, urging us to be mindful of the still-obscure effects that experiencing early life as a gestateling might have on future humans. Psychology aside, there are also legal repercussions.

The Universal Declaration of Human Rights proclaims the "inalienable rights which everyone is entitled to as a human being," with "everyone" including neonates. However, such a legal umbrella is absent when it comes to fetuses, which have no rights under the same declaration. "We might need a new legal category for a gestateling," concludes Romanis.

But not everyone agrees. "However well-meaning, a new legal category would almost certainly be used to further erode the legality of abortion in countries like the U.S.," says Johnston.

The "abortion war" in the U.S. has risen to a crescendo since 2019, when states like Missouri, Mississippi, Kentucky, Louisiana and Georgia passed so-called "fetal heartbeat bills," which render an abortion illegal once a fetal heartbeat is detected. The situation is only bound to intensify now that Justice Ruth Bader Ginsburg, one of the Supreme Court's fiercest champions for abortion rights, has passed away. If President Trump appoints Ginsburg's replacement, he will probably grant conservatives on the Court the votes needed to revoke or weaken Roe v. Wade, the milestone decision of 1973 that established women's legal right to an abortion.

"A gestateling with intermediate status would almost certainly be considered by some in the U.S. (including some judges) to have at least certain legal rights, likely including right-to-life," says Johnston. This would enable a fetus on the edge of viability to make claims on the mother, and lead either to a shortening of the window in which abortion is legal—or a practice of denying abortion altogether. Instead, Johnston predicts, doctors might offer to transfer the fetus to an artificial womb for external gestation as a new standard of care.

But the legal conundrum does not stop there. The viability threshold is an estimate decided by medical professionals based on the clinical evidence and the technology available. It is anything but static. In the 1970s when Roe v. Wade was decided, for example, a fetus was considered legally viable starting at 28 weeks. Now, with improved technology and medical management, "the hard limit today is probably 20 or 21 weeks," says Matthew Kemp, associate professor at the University of Western Australia and one of the Australian-Japanese artificial womb project's senior researchers.

The changing threshold can result in situations where lots of people invested in the decision disagree. "Those can be hard decisions, but they are case-by-case decisions that families make or parents make with the key providers to determine when to proceed and when to let the infant die. Usually, it's a shared decision where the parents have the final say," says Johnston. But this isn't always the case.

On May 9, 2016, a boy named Alfie Evans was born in Liverpool, UK. Suffering seizures a few months after his birth, Alfie was diagnosed with an unknown neurodegenerative disorder and soon went into a semi-vegetative state, which lasted for more than a year. Alfie's medical team decided to withdraw his ventilation support, suggesting further treatment was unlawful and inhumane, but his parents wanted permission to fly him to a hospital in Rome and attempt to prolong his life there. In the end, the case went all the way up to the Supreme Court, which ruled that doctors could stop providing life support for Alfie, saying that the child required "peace, quiet and privacy." What happened to little Alfie drew huge publicity in the UK and pointedly highlighted the dilemma of whether parents or doctors should have the final say in the fate of a terminally ill child on life support.

Alfie was born and thus had legal rights, yet legal and ethical mayhem arose out of his case. When it comes to gestatelings, the scenarios will be even more complicated, says Romanis. "I think there's a really big question about who has parental rights and who doesn't," she says. "The assisted reproductive technology (ART) law in the U.K. hasn't been updated since 2008. ... It certainly needs an update when you think about all the things we have done since [then]."

This June, for instance, scientists from the Wake Forest Institute for Regenerative Medicine in North Carolina published research showing that they could take a small sample of tissue from a rabbit's uterus and create a bioengineered uterus, which then supported both fertilization and normal pregnancy like a natural uterus does.

"In [a number of] years from now, women who cannot get pregnant because of uterine infertility will be able to have a fully functional uterus made from their own tissue," says Dr. Anthony Atala, the Institute's director and a pioneer in regenerative medicine. These bioengineered uteri will eventually be covered by insurance, Atala expects. But when it comes to artificial wombs that externally gestate premature infants, will all mothers have equal access?

Medical reports have already shown racial and ethnic disparities in infertility treatments and access to assisted reproductive technologies. Treatment costs an average of $12,400 per cycle, and several cycles may be required to achieve a live birth. "There's no indication that artificial wombs would be treated any differently. That's what we see with almost every expensive new medical technology," says Johnston. In a much more dystopian future, there is even a possibility that inequity in healthcare might create disturbing chasms in how women of various class levels bear children. Romanis asks us to picture the following scenario:

We live in a world where artificial wombs have become mainstream. Most women choose to end their pregnancies early and transfer their gestatelings to the care of machines. After a while, insurers deem full-term pregnancy and childbirth a risky non-necessity, and are lobbying to stop covering them altogether. Wealthy white women continue opting out of their third trimesters (at a high cost), since natural pregnancy has become a substandard route for poorer women. Those women are strongly judged for any behaviors that could risk their fetus's health, in contrast with the machine's controlled environment. "Why are you having a coffee during your pregnancy?" critics might ask. "Why are you having a glass of red wine? If you can't be perfect, why don't you have it the artificial way?"

Problem is, even if they want to, they won't be able to afford it.

In a more sanguine version, however, the artificial wombs are only used in cases of prematurity as a life-saving medical intervention rather than as a lifestyle accommodation. The 15 million babies who are born prematurely each year and may face serious respiratory, cardiovascular, visual and hearing problems, as well as learning disabilities, instead continue their normal development in artificial wombs. After lots of deliberation, insurers agree to bear the cost of external wombs because they are cheaper than a lifetime of medical care for a disabled or diseased person. This enables racial and ethnic minority women, who make up the majority of women giving premature birth, to access the technology.

Even extremely premature babies, those born (far) below the threshold of 28 weeks of gestation, half of whom die, could now discover this thing called life. In this scenario, as the Australian researcher Kemp says, we are simply giving a good shot at healthy, long-term survival to those who were unfortunate enough to start too soon.

Real-Time Monitoring of Your Health Is the Future of Medicine

Implantable sensors and other surveillance technologies offer tremendous health benefits -- and ethical challenges.

The same way that it's harder to lose 100 pounds than it is to not gain 100 pounds, it's easier to stop a disease before it happens than to treat an illness once it's developed.

Bioengineers working on the next generation of diagnostic tools say today's technologies, such as colonoscopies or mammograms, are reactive; that is, they tell people they are sick, often when it's too late to reverse course. Surveillance medicine, such as implanted sensors, will detect disease at its onset, in real time.

What Is Possible?

Ever since the Human Genome Project, which concluded in 2003 after mapping the DNA sequence of all 30,000 human genes, modern medicine has shifted to "personalized medicine." Under this approach, also called "precision health," 21st-century doctors can in some cases assess a person's risk for specific diseases from his or her DNA. The information enables women with a BRCA gene mutation, for example, to undergo more frequent screenings for breast cancer or to proactively choose to remove their breasts as a "just in case" measure.

But your DNA is not always enough to determine your risk of illness. Not all genetic mutations are harmful, for example, and people can get sick without a genetic cause, such as with an infection. Hence the need for a more "real-time" way to monitor health.

Aaron Morris, a postdoctoral researcher in the Department of Biomedical Engineering at the University of Michigan, wants doctors to be able to predict illness with pinpoint accuracy well before symptoms show up. Working in the lab of Dr. Lonnie Shea, he and his team are building "a tiny diagnostic lab" that can live under a person's skin and monitor for illness, 24/7. Currently being tested in mice, the Michigan team's porous biodegradable implant becomes part of the body as "cells move right in," says Morris, allowing engineered tissue to be biopsied and analyzed for diseases. The information collected by the sensors will enable doctors to predict disease flare-ups, such as cancer relapses, so that therapies can begin well before a person comes out of remission. The technology will also measure the effectiveness of those therapies in real time.

In Morris' dream scenario "everyone will be implanted with a sensor" ("…the same way most people are vaccinated") and the sensor will alert people to go to the doctor if something is awry.

While it may be four or five decades before Morris' sensor becomes mainstream, "the age of surveillance medicine is here," says Jamie Metzl, a technology and healthcare futurist who penned Hacking Darwin: Genetic Engineering and the Future of Humanity. "It will get more effective and sophisticated and less obtrusive over time," says Metzl.

Already, Google compiles public health data about disease hotspots by amalgamating individual searches for medical symptoms; pill technology can digitally track when and how much medication a patient takes; and the Apple Watch heart app can predict with 85-percent accuracy if an individual using the wrist device has atrial fibrillation (AFib), a condition that can cause stroke, blood clots and heart failure, and that goes undiagnosed in 700,000 people each year in the U.S.

"We'll never be able to predict everything," says Metzl. "But we will always be able to predict and prevent more and more; that is the future of healthcare and medicine."

Morris believes that within ten years there will be surveillance tools that can predict if an individual has contracted the flu well before symptoms develop.

At City College of New York, Ryan Williams, assistant professor of biomedical engineering, has built an implantable nano-sensor that works with a fluorescent wand to scope out if cancer cells are growing at the implant site. "Instead of having the ovary or breast removed, the patient could just have this [surveillance] device that can say 'hey we're monitoring for this' in real-time… [to] measure whether the cancer is maybe coming back, as opposed to having biopsy tests or undergoing treatments or invasive procedures."

Not all surveillance technologies that are being developed need to be implanted. At Case Western, Colin Drummond, PhD, MBA, a data scientist and assistant department chair of the Department of Biomedical Engineering, is building a "surroundable." He describes it as an Alexa-style surveillance system (he's named her Regina) that will "tell" the user, if a need arises for medication, how much to take and when.

Bioethical Red Flags

"Everyone should be extremely excited about our move toward what I call predictive and preventive health care and health," says Metzl. "We should also be worried about it. Because all of these technologies can be used well and they can [also] be abused." The concerns are many-layered:

Discriminatory practices

For years now, bioethicists have expressed concerns about employer-sponsored wellness programs that encourage fitness while also tracking employee health data. "Getting access to your health data can change the way your employer thinks about your employability," says Keisha Ray, assistant professor at the University of Texas Health Science Center at Houston (UTHealth). Such access can lead to discriminatory practices against employees who are less fit. "Surveillance medicine only heightens those risks," says Ray.

Who owns the data?

Surveillance medicine may help "democratize healthcare," which could be a good thing, says Anita Ho, an associate professor in bioethics at both the University of California, San Francisco and the University of British Columbia. It would give patients easier access to their health data, delivered to smartphones, for example, rather than waiting for a call from the doctor. But she also wonders who will own the data collected and if that owner has the right to share it or sell it. "A direct-to-consumer device is where the lines get a little blurry," says Ho. Currently, health data collected by Apple Watch is owned by Apple. "So we have to ask bigger ethical questions in terms of what consent should be required" by users.

Insurance coverage

"Consumers of these products deserve some sort of assurance that using a product that will predict future needs won't in any way jeopardize their ability to access care for those needs," says Hastings Center bioethicist Carolyn Neuhaus. She is urging lawmakers to begin tackling the policy issues created by surveillance medicine now, well before the technology becomes mainstream, with legislation not unlike GINA, the Genetic Information Nondiscrimination Act of 2008, a federal law designed to prevent discrimination in health insurance on the basis of genetic information.

And because not all Americans have insurance, Ho wants to know who is going to pay for this technology and how much it will cost.

Trusting our guts

Some bioethicists are concerned that surveillance technology will reduce individuals to their "risk profiles," leaving health care systems to perceive them as nothing more than a "bundle of health and security risks." And further, in our quest to predict and prevent ailments, Neuhaus wonders if an over-reliance on data could damage the ability of future generations to trust their gut and tune into their own bodies.

It "sounds kind of hippy-dippy and feel-goodie," she admits. But in our culture of medicine where efficiency is highly valued, there's "a tendency to not value and appreciate what one feels inside of their own body … [because] it's easier to look at data than to listen to people's really messy stories of how they 'felt weird' the other day. It takes a lot less time to look at a sheet, to read out what the sensor implanted inside your body or planted around your house says."

Ho, too, worries about lost narratives. "For surveillance medicine to actually work we have to think about how we educate clinicians about the utility of these devices and how to interpret the data in the broader context of patients' lives."

Over-diagnosing

While one of the goals of surveillance medicine is to cut down on doctor visits, Ho wonders if the technology will have the opposite effect. "People may be going to the doctor more for things that actually are benign and are really not of concern yet," says Ho. She is also concerned that surveillance tools could make healthcare almost "recreational" and underscores the importance of making sure that the goals of surveillance medicine are met before the technology is unleashed.

AI doesn't fix existing healthcare problems

"Knowing that you're going to have a fall or going to relapse or have a disease isn't all that helpful if you have no access to the follow-up care and you can't afford it and you can't afford the prescription medication that's going to ward off the onset," says Neuhaus. "It may still be worth knowing … but we can't fool ourselves into thinking that this technology is going to reshape medicine in America if we don't pay attention to … the infrastructure that we don't currently have."

Race-based medicine

How surveillance devices are tested before being approved for human use is a major concern for Ho. In recent years, alerts have been raised about the homogeneity of study group participants: too white and too male. Ho wonders if the devices will be able to "accurately predict the disease progression for people whose data has not been used in developing the technology?" COVID-19 has killed Black people at a rate 2.5 times greater than white people, for example, and new, virtual clinical research is focused on recruiting more people of color.

The Biggest Question

"We can't just assume that any of these technologies are inherently technologies of liberation," says Metzl.

Especially because we haven't yet asked the $64,000 question: Would patients even want to know?

Jenny Ahlstrom is an IT professional who was diagnosed at 43 with multiple myeloma, a blood cancer that typically attacks people in their late 60s and 70s and for which there is no cure. She believes that most people won't want to know about their declining health in real time. People like to live "optimistically in denial most of the time. If they don't have a problem, they don't want to really think they have a problem until they have [it]," especially when there is no cure. "Psychologically? That would be hard to know."

Ahlstrom says there's also the issue of trust, something she experienced first-hand when she launched her non-profit, HealthTree, a crowdsourcing tool to help myeloma patients "find their genetic twin" and learn what therapies may or may not work. "People want to share their story, not their data," says Ahlstrom. "We have been so conditioned as a nation to believe that our medical data is so valuable."

Metzl acknowledges that adoption of new technologies will be uneven. But he also believes that "over time, it will be abundantly clear that it's much, much cheaper to predict and prevent disease than it is to treat disease once it's already emerged."

Beyond cost, the tremendous potential of these technologies to help us live healthier and longer lives is a game-changer, he says, as long as we find ways "to ultimately navigate this terrain and put systems in place ... to minimize any potential harms."