How a Deadly Fire Gave Birth to Modern Medicine

The Cocoanut Grove fire in Boston in 1942 tragically claimed 490 lives, but it was the catalyst for several important medical advances.

On the evening of November 28, 1942, more than 1,000 revelers from the Boston College-Holy Cross football game jammed into the Cocoanut Grove, Boston's oldest nightclub. When a spark from faulty wiring accidentally ignited an artificial palm tree, the packed nightspot, which was designed to accommodate only about 500 people, was quickly engulfed in flames. In the ensuing panic, hundreds of people were trapped inside, with most exit doors locked. Bodies piled up at the only open entrance, blocking the way out, and 490 people ultimately died in the worst fire in the country in forty years.

"People couldn't get out," says Dr. Kenneth Marshall, a retired plastic surgeon in Boston and president of the Cocoanut Grove Memorial Committee. "It was a tragedy of mammoth proportions."

Within half an hour of the start of the blaze, the Red Cross mobilized more than five hundred volunteers in what one newspaper called a "Rehearsal for Possible Blitz." The mayor of Boston imposed martial law. More than 300 victims, many of whom later died, were taken to Boston City Hospital in one hour, an average of one victim every eleven seconds, while Massachusetts General Hospital admitted 114 victims in two hours. In the hospitals, 220 victims clung precariously to life, in agonizing pain from massive burns, their bodies ravaged by infection.

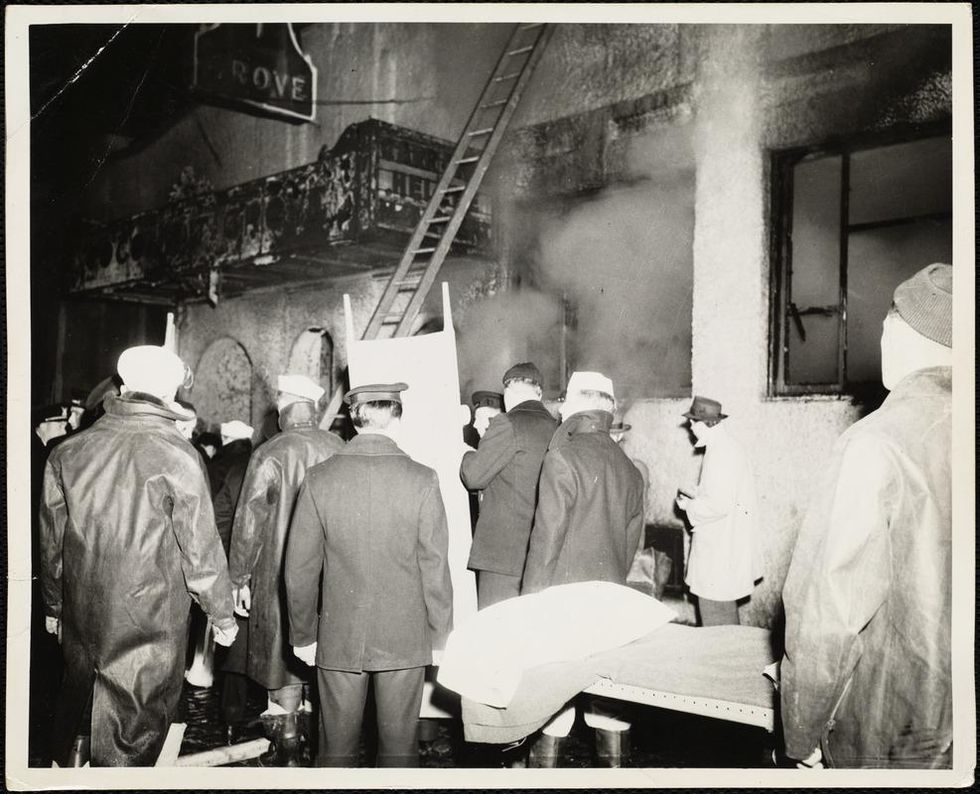

The scene of the fire.

Boston Public Library

Tragic Losses Prompted Revolutionary Leaps

But there was a silver lining: this horrific disaster prompted dramatic changes in safety regulations to prevent another catastrophe of this magnitude, and it led to the development of medical techniques that eventually saved millions of lives. It transformed burn care and the use of plasma on burn victims. Most importantly, it introduced the public to a new wonder drug that revolutionized medicine, midwifed the birth of the modern pharmaceutical industry, and helped raise life expectancy from 48 years at the turn of the 20th century to about 78 years today.

The devastating grief of the survivors also led to the first published study of post-traumatic stress disorder by pioneering psychiatrist Alexandra Adler, daughter of famed Viennese psychoanalyst Alfred Adler, who was a student of Freud. Dr. Adler studied the anxiety and depression that followed this catastrophe, according to the New York Times, and "later applied her findings to the treatment [of] World War II veterans."

Dr. Ken Marshall is intimately familiar with the lingering psychological trauma of enduring such a disaster. His mother, an Irish immigrant and a nurse in the surgical wards at Boston City Hospital, was on duty that cold Thanksgiving weekend night, and didn't come home for four days. "For years afterward, she'd wake up screaming in the middle of the night," recalls Dr. Marshall, who was four years old at the time. "Seeing all those bodies lined up in neat rows across the City Hospital's parking lot, still in their evening clothes. It was always on her mind and memories of the horrors plagued her for the rest of her life."

The sheer magnitude of casualties prompted overwhelmed physicians to try experimental new procedures that were later successfully used to treat thousands of battlefield casualties. Instead of cutting off blisters and treating burned tissue with dyes and tannic acid, which can harden the skin, they applied gauze coated with petroleum jelly. Doctors also refined the formula for using plasma (the fluid portion of blood, then a medical technology just four years old) to replenish the bodily fluids that evaporated once the protective covering of skin was lost.

"The initial insult with burns is a loss of fluids and patients can die of shock," says Dr. Ken Marshall. "The scientific progress that was made by the two institutions revolutionized fluid management and topical management of burn care forever."

Still, they could not halt the staph infections that kill most burn victims—which prompted the first civilian use of a miracle elixir that was being secretly developed in government-sponsored labs and that ultimately ushered in a new age in therapeutics. Military officials quickly realized this disaster could provide an excellent natural laboratory to test the effectiveness of this drug and see if it could be used to treat the acute traumas of combat in this unfortunate civilian approximation of battlefield conditions. At the time, the very existence of this wondrous medicine—penicillin—was a closely guarded military secret.

From Forgotten Lab Experiment to Wonder Drug

In 1928, Alexander Fleming discovered the antibacterial properties of penicillin, which promised to eradicate infectious pathogens that killed millions every year. But the road to mass producing enough of the highly unstable drug from the mold was littered with seemingly insurmountable obstacles, and it remained a forgotten laboratory curiosity for over a decade. But Fleming never gave up, and penicillin's eventual rescue from obscurity was a landmark in scientific history.

In 1940, a group at Oxford University, funded in part by the Rockefeller Foundation, isolated enough penicillin to test it on twenty-five mice, which had been infected with lethal doses of streptococci. Its therapeutic effects were miraculous—the untreated mice died within hours, while the treated ones played merrily in their cages, undisturbed. Subsequent tests on a handful of patients, who were brought back from the brink of death, confirmed that penicillin was indeed a wonder drug. But Britain was then being ravaged by the German Luftwaffe during the Blitz, and there were simply no resources to devote to penicillin during the Nazi onslaught.

In June of 1941, two of the Oxford researchers, Howard Florey and Norman Heatley, embarked on a clandestine mission to enlist American aid, carrying samples of the temperamental mold in their coats. By October, the Roosevelt Administration had recruited four companies (Merck, Squibb, Pfizer and Lederle) to team up in a massive, top-secret development program. Merck, which had more experience with fermentation procedures, swiftly pulled away from the pack, and every milligram it produced was zealously hoarded.

After the nightclub fire, the government ordered Merck to dispatch to Boston whatever penicillin it could spare and to refine any crude penicillin broth brewing in its fermentation vats. After workers toiled in round-the-clock relays for three days, a refrigerated truck containing thirty-two liters of injectable penicillin left Merck's Rahway, New Jersey, plant on the evening of December 1, 1942. Accompanied by a convoy of police escorts through four states, it arrived at Massachusetts General Hospital in the pre-dawn hours. Dozens of people were rescued from near-certain death in the first public demonstration of the powers of the antibiotic, and the existence of penicillin could no longer be kept secret from inquisitive reporters and an exultant public. The next day, the Boston Globe called it "priceless" and Time magazine dubbed it a "wonder drug."

Within fourteen months, penicillin production escalated exponentially, churning out enough to save the lives of thousands of soldiers, including many from the Normandy invasion. And in October 1945, just weeks after the Japanese surrender ended World War II, Alexander Fleming, Howard Florey and Ernst Chain were awarded the Nobel Prize in Physiology or Medicine. But penicillin didn't just save lives; it helped build some of the most innovative medical and scientific companies in history, including Merck, Pfizer, Glaxo and Sandoz.

"Every war has given us a new medical advance," concludes Marshall. "And penicillin was the great scientific advance of World War II."

The latest tools, like whole genome sequencing, are allowing food outbreaks to be identified and stopped more quickly.

With the pandemic at the forefront of everyone's minds, many people have wondered if food could be a source of coronavirus transmission. Luckily, that "seems unlikely," according to the CDC, but foodborne illnesses still sicken a whopping 48 million people in the United States every year.

In normal times, when there isn't a historic global health crisis infecting millions and affecting the lives of billions, foodborne outbreaks are real and frightening, potentially deadly, and can cause widespread fear of particular foods. Think of romaine lettuce spreading E. coli last year (an outbreak that infected more than 500 people and killed eight) or peanut butter spreading salmonella in 2008, which infected 167 people.

The technologies available to detect and prevent the next foodborne disease outbreak have improved greatly over the past 30-plus years, particularly during the past decade, and better, more nimble technologies are being developed, according to experts in government, academia, and private industry. The key to advancing detection of harmful foodborne pathogens, they say, is increasing speed and portability of detection, and the precision of that detection.

Getting to Rapid Results

Researchers at Purdue University have recently developed a lateral flow assay that, with the help of a laser, can detect toxins and pathogenic E. coli. Lateral flow assays are cheap and easy to use; a good example is a home pregnancy test. You place a liquid or liquefied sample on a piece of paper designed to detect a single substance and soon after you get the results in the form of a colored line: yes or no.

"They're a great portable tool for us for food contaminant detection," says Carmen Gondhalekar, a fifth-year biomedical engineering graduate student at Purdue. "But one of the areas where paper-based lateral flow assays could use improvement is in multiplexing capability and their sensitivity."

J. Paul Robinson, a professor in Purdue's Colleges of Veterinary Medicine and Engineering, and Gondhalekar's advisor, agrees. "One of the fundamental problems that we have in detection is that it is hard to identify pathogens in complex samples," he says.

When it comes to foodborne disease outbreaks, you don't always know what substance you're looking for, so an assay made to detect only a single substance isn't always effective. The goal of the project at Purdue is to make assays that can detect multiple substances at once.

These assays would be more complex than a pregnancy test. As detailed in Gondhalekar's recent paper, a laser pulse helps create a spectral signal from the sample on the assay paper, and the spectral signal is then used to determine if any unique wavelengths associated with one of several toxins or pathogens are present in the sample. Though the handheld technology has yet to be built, the idea is that the results would be given on the spot. So someone in the field trying to track the source of a Salmonella infection could, for instance, put a suspected lettuce sample on the assay and see if it has the pathogen on it.
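The matching step described above can be illustrated with a short Python sketch. It assumes the instrument reports a list of peak wavelengths and that each target toxin or pathogen has a known set of characteristic peaks. The target names, wavelengths, and tolerance below are invented placeholders, not values from the Purdue research, and the real spectral analysis is likely far more sophisticated.

```python
# Hypothetical reference signatures: each target maps to the peak wavelengths
# (in nanometres) that would indicate its presence. These numbers are made up
# for illustration only.
REFERENCE_SIGNATURES = {
    "toxin_A": [532.0, 610.5],
    "pathogen_B": [480.2, 655.0],
    "pathogen_C": [701.3],
}


def detect_targets(measured_peaks, signatures=REFERENCE_SIGNATURES, tolerance_nm=1.0):
    """Return the sorted names of targets whose every reference peak appears
    among the measured peaks, within +/- tolerance_nm."""
    detected = []
    for name, ref_peaks in signatures.items():
        # A target counts as present only if each of its peaks has a match,
        # which is what lets one test screen for several substances at once.
        if all(any(abs(p - r) <= tolerance_nm for p in measured_peaks) for r in ref_peaks):
            detected.append(name)
    return sorted(detected)


sample_peaks = [532.4, 610.1, 701.0]
print(detect_targets(sample_peaks))  # ['pathogen_C', 'toxin_A']
```

Requiring every peak of a signature, rather than any one, is a simple way to reduce false positives when different targets happen to share a wavelength.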

"What our technology is designed to do is to give you a rapid assessment of the sample," says Robinson. "The goal here is speed."

Seeing the Pathogen in "High-Def"

"One in six Americans will get a foodborne illness every year," according to Dr. Heather Carleton, a microbiologist at the Centers for Disease Control and Prevention's Enteric Diseases Laboratory Branch. But not every foodborne outbreak makes the news. In 2017 alone, the CDC monitored between 18 and 37 foodborne illness clusters per week and investigated 200 multi-state clusters. Hard-boiled eggs, ground beef, chopped salad kits, raw oysters, frozen tuna, and pre-cut melon are just a taste of the foods that were investigated last year for different strains of listeria, salmonella, and E. coli.

At the heart of the CDC investigations is PulseNet, a national network of laboratories that uses DNA fingerprinting to detect outbreaks at local and regional levels. This is how it works: When a patient gets sick, with symptoms like vomiting and fever, for instance, they will go to a hospital or clinic for treatment. Since we're talking about foodborne illnesses, a clinician will likely take a stool sample from the patient and send it off to a laboratory to see if it contains a foodborne pathogen, like salmonella or E. coli. If it does contain a potentially harmful pathogen, then a bacterial isolate from the sample is sent to a regional public health lab so that whole genome sequencing can be performed.

Whole genome sequencing is a method for reading the entire genome of a bacterial isolate (or of any organism, for that matter). Instead of working with a couple dozen data points, now you're working with millions of base pairs. Carleton likes to describe it as "going from an eight-bit image, maybe like what you would see in Minecraft, to a high definition image." "It's really an evolution of how we detect foodborne illnesses and identify outbreaks," she says.

If the bacterial isolate matches another in the CDC's database, this means there could be a potential outbreak and an investigation may be started, with the goal of tracking the pathogen to its source.
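As a rough illustration of this matching step, the Python sketch below compares a new isolate's sequence against stored sequences by counting the positions where they differ, and flags any within a cutoff. The isolate IDs, the 16-letter toy sequences, and the threshold of two differences are all hypothetical; real comparisons span millions of base pairs, and this is not PulseNet's actual matching procedure.

```python
def snp_distance(seq_a, seq_b):
    """Count positions at which two aligned, equal-length sequences differ."""
    if len(seq_a) != len(seq_b):
        raise ValueError("sequences must be aligned to the same length")
    return sum(1 for a, b in zip(seq_a, seq_b) if a != b)


def find_matches(new_isolate, database, threshold=2):
    """Return IDs of stored isolates within `threshold` differences of the new one.
    The default threshold is an arbitrary illustrative choice."""
    return [iso_id for iso_id, seq in database.items()
            if snp_distance(new_isolate, seq) <= threshold]


# Hypothetical database of previously sequenced isolates (toy sequences).
database = {
    "isolate_001": "ACGTACGTACGTACGT",
    "isolate_002": "ACGTACGAACGTACGT",
    "isolate_003": "TTGTACCAACGAACTT",
}

new_isolate = "ACGTACGTACGTACGA"
print(find_matches(new_isolate, database))  # ['isolate_001', 'isolate_002']
```

A close match does not prove a common source on its own; as the article notes, it is a signal that an investigation may be warranted.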

Whole genome sequencing has been a relatively recent shift in foodborne disease detection. For more than 20 years, the standard technique for analyzing pathogens in foodborne disease outbreaks was pulsed-field gel electrophoresis. This method creates a DNA fingerprint for each sample in the form of a pattern of about 15 to 30 "bands," with each band representing a piece of DNA. Researchers like Carleton can use this fingerprint to see if two samples are from the same bacteria. The problem is that 15 to 30 bands are not enough to differentiate all isolates. Some isolates whose bands look very similar may actually come from different sources, and some whose bands look different may be from the same source. But if you can read the entire genome, then you don't have that issue. That's where whole genome sequencing comes in.
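The limitation of band patterns can be shown with a toy Python model. It treats a "genome" as a short string, cuts it wherever a six-letter site occurs (TCTAGA, the recognition sequence of the restriction enzyme XbaI, used here purely for illustration), and uses the sorted fragment lengths as a stand-in for gel bands. Two hypothetical strains that differ by a single base away from any cut site produce identical "bands," while a direct base-by-base comparison, the idea behind whole genome sequencing, reveals the difference.

```python
CUT_SITE = "TCTAGA"  # XbaI recognition sequence, chosen only as an example


def band_pattern(genome, site=CUT_SITE):
    """Split the genome at every cut site and return the sorted lengths of the
    pieces between sites (the site itself is dropped in this simplification)."""
    return sorted(len(piece) for piece in genome.split(site))


def differing_positions(a, b):
    """List the indices where two equal-length sequences differ."""
    return [i for i, (x, y) in enumerate(zip(a, b)) if x != y]


# Two hypothetical strains that differ only at index 3, far from any cut site.
strain_1 = "AAAA" + "TCTAGA" + "CCCCCC" + "TCTAGA" + "GGG"
strain_2 = "AAAT" + "TCTAGA" + "CCCCCC" + "TCTAGA" + "GGG"

print(band_pattern(strain_1), band_pattern(strain_2))  # [3, 4, 6] [3, 4, 6]
print(differing_positions(strain_1, strain_2))         # [3]
```

Real gel electrophoresis separates fragments hundreds of thousands of bases long by size, so this string-splitting model captures only the logic of the comparison, not the laboratory method.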

Although the PulseNet team had piloted whole genome sequencing as early as 2013, it wasn't until July of last year that the transition to using whole genome sequencing for all pathogens was complete. Though whole genome sequencing requires far more computing power to generate, analyze, and compare those millions of data points, the payoff is huge.

Stopping Outbreaks Sooner

The U.S. Food and Drug Administration (FDA) acquired its first whole genome sequencers in 2008, according to Dr. Eric Brown, the Director of the Division of Microbiology in the FDA's Office of Regulatory Science. Since then, through its GenomeTrakr program, a network of more than 60 domestic and international labs, the FDA has sequenced and publicly shared more than 400,000 isolates. "The impact of what whole genome sequencing could do to resolve a foodborne outbreak event was no less impactful than when NASA turned on the Hubble Telescope for the first time," says Brown.

Whole genome sequencing has helped identify strains of Salmonella that prior methods were unable to differentiate. In fact, whole genome sequencing can differentiate "virtually all" strains of foodborne pathogens, no matter the species, according to the FDA. This means it takes fewer clinical cases—fewer sick people—to detect and end an outbreak.

And perhaps the largest benefit of whole genome sequencing is that these detailed sequences—the millions of base pairs—can imply geographic location. The genomic information of bacterial strains can be different depending on the area of the country, helping these public health agencies eventually track the source of outbreaks—a restaurant, a farm, a food-processing center.

Coming Soon: "Lab in a Backpack"

Now that whole genome sequencing has become the go-to technology for analyzing foodborne pathogens, the next step is making the process nimbler and more portable: putting "the lab in a backpack," as Brown says.

The CDC's Carleton agrees. "Right now, the sequencer we use is a fairly big box that weighs about 60 pounds," she says. "We can't take it into the field."

A company called Oxford Nanopore Technologies is developing handheld sequencers. Their devices are meant to "enable the sequencing of anything by anyone anywhere," according to Dan Turner, the VP of Applications at Oxford Nanopore.

"Right now, sequencing is very much something that is done by people in white coats in laboratories that are set up for that purpose," says Turner. Oxford Nanopore would like to create a new, democratized paradigm.

The FDA is currently testing these types of portable sequencers. "We're very excited about it. We've done some pilots, to be able to do that sequencing in the field. To actually do it at a pond, at a river, at a canal. To do it on site right there," says Brown. "This, of course, is huge because it means we can have real-time sequencing capability to stay in step with an actual laboratory investigation in the field."

"The timeliness of this information is critical," says Marc Allard, a senior biomedical research officer and Brown's colleague at the FDA. "The sooner that we can see linkages…the sooner the FDA gets in action to mitigate the problem and put in some kind of preventative control."

At the moment, the world is rightly focused on COVID-19. But as the danger of one virus subsides, it's only a matter of time before another pathogen strikes. Hopefully, with new and advancing technology like whole genome sequencing, we can stop the next deadly outbreak before it really gets going.

What Will Make the Public Trust a COVID-19 Vaccine?

A successful deployment of an eventual vaccine will mean grappling with ongoing cultural tensions.

With a brighter future hanging on the hopes of an approved COVID-19 vaccine, is it possible to win over the minds of fearful citizens who challenge the value or safety of vaccination?

Vaccination is a centuries-old practice. With a dose injected into the arm of a healthy person, doctors aim to prevent illness by triggering the immune system to fight off a serious infection without the person getting the disease.

This week, in fact, the U.S. frontrunner vaccine candidate, developed by Moderna, safely produced an immune response in the first eight healthy volunteers, the company announced. A large efficacy trial is planned to start in July. But if positive signals for safety and efficacy result from that trial, will that be enough to convince the public to broadly embrace a new vaccine?

"Throughout the history of vaccines there has always been a small vocal minority who don't believe vaccines work or don't trust the science," says sociologist and researcher Jennifer Reich, a professor at the University of Colorado in Denver and author of Calling the Shots: Why Parents Reject Vaccines.

Research indicates that only about 2 percent of the population say vaccines aren't necessary under any circumstance. Remarkably, a quarter to one third of American parents delay or reject the shots, not because they are anti-vaccine, but because they disapprove of the recommended timing or administration, says Reich.

Additionally, addressing distrust about how vaccines come to market is key when talking to parents, workers or anyone targeted for a new vaccine, she says.

"When I talk to parents about why they reject vaccines for their kids, a lot of them say that they don't fully trust the process by which vaccines are regulated and tested," says Reich. "They don't trust that vaccine manufacturers, which are for-profit companies, are looking out for public health."

Balancing Act

Globally, nine COVID-19 vaccine candidates so far are being tested for safety in early phase human clinical trials and more than 100 are under development as scientists hustle to curtail the disease. Creating a new vaccine at a record pace requires a delicate balance of benefit and risk, says vaccinology expert Dr. Kathryn Edwards, professor of pediatrics in the division of infectious diseases at Vanderbilt University School of Medicine in Nashville, Tenn.

"We take safety very seriously," says Dr. Edwards. "We don't want something bad to happen, but we also realize that we have a terrible outbreak and we have a lot of people dying. We want to figure out how we can stop this."

In the U.S., all vaccine clinical trials have a data safety board of experts who monitor results for adverse reactions and red flags that should halt a study, notes Dr. Edwards. Any candidate that succeeds through safety and efficacy trials still requires review and approval by the Food and Drug Administration before a public launch.

Community vs. Individual

A major challenge to the deployment of a safe and effective coronavirus vaccine goes beyond the technical realm. A persistent all-out anti-vaccine sentiment has found a home and growing community on social media where conspiracies thrive. Main tenets of the movement are that vaccines are ineffective, unsafe and cause autism, despite abundant scientific evidence to the contrary.

In fact, widespread use of vaccines is considered by the U.S. Centers for Disease Control and Prevention to be one of the greatest public health achievements of the 20th century. The World Health Organization estimates that between two million and three million deaths are avoided each year through immunization campaigns that employ vaccination to control life-threatening infectious diseases.

Most people reluctant to give their children vaccines, however, don't oppose them for everyone, but believe that they are a personal choice, says Reich.

"They think that vaccines are one strategy in personal health optimization, but they shouldn't be mandated for participation in any part of civil society," she says.

Vaccine hesitancy, like the teeter-totter of social distancing acceptance, reflects the push and pull of individual versus community values, says Reich.

"A lot of people are saying, 'I take personal responsibility for my own health and I don't want a city or a county or state telling me what I should and shouldn't do,'" says Reich. "Then we also see calls for collective responsibility that says 'It's not your personal choice. This is about helping health systems function. This is about making sure vulnerable people are protected.'"

These same debates are likely to continue if a vaccine comes to market, she says.

Building Public Confidence

Reich offers solutions to address the conflict between embedded American norms and widespread embrace of an approved COVID-19 vaccine. Long-term goals: Stop blaming people when they get sick, treat illness as a community responsibility, make sick leave common for all workers, and improve public health systems.

"In the shorter run," says Reich, "health authorities and companies that might bring a vaccine to market need to work very hard to explain to the public why they should trust this vaccine and why they should use it."

The rush for a viable vaccine raises questions for consumers. To build public confidence, it's up to FDA reviewers, institutions and pharmaceutical companies to explain "what steps were skipped. What steps moved forward. How rigorous was safety testing. And to make that information clear to the public," says Reich.

Dr. Edwards says the accelerated timelines for testing vaccines in humans make every safeguard in the process all the more compelling and important.

"There's no question we need a vaccine," she says. "But we also have to make sure that we don't harm people."

The Road Ahead

Think of manufacturing and distribution as key pit stops to keep the race for a vaccine on the road to the finish line. Both elements require substantial effort and consideration.

The speed of getting a vaccine to those who need it could hinge on the type of technology used to create it. Best-case scenario, more than one successful vaccine emerges, using competing methods to achieve the same goal of preventing COVID-19 or lessening its severity.

Technological platforms fall into two basic camps, those that are proven and licensed for other viruses, and experimental approaches that may hold great promise but lack regulatory approval, says Maria Elena Bottazzi, co-director of Texas Children's Center for Vaccine Development at Baylor College of Medicine in Houston.

Moderna, for instance, employs an experimental technology called messenger RNA (mRNA) that produced the encouraging early results in human safety trials, although some researchers criticized the company for not making the data public. The mRNA vaccine instructs cells to make copies of the key spike protein of the coronavirus that causes COVID-19, with the goal of then triggering production of immune cells that can recognize and attack the virus if it ever invades the body.

Scientists always look for ways to incorporate new technologies into drug development, says Bottazzi. On the other hand, the more basic and generic the technology, theoretically, the faster production could ramp up if a vaccine proves successful through all phases of clinical trials, she says.

"I don't want to develop a vaccine in my lab, but then I don't have anybody to hand it off to because my process is not suitable" for manufacturing or scalability, says Bottazzi.

Researchers at the Baylor lab hope to repurpose a shelved vaccine developed for the genetically similar SARS virus, with a strategy to leverage what is already known instead of "starting from scratch" to develop a COVID-19 vaccine. A recombinant protein technology similar to that used for an approved hepatitis B vaccine lets scientists focus on identifying a suitable vaccine target without the added worry of a novel platform, says Bottazzi.

The Finish Line

If and when a COVID-19 vaccine is approved is anyone's guess. Announcing a plan to hasten vaccine development via a program dubbed Operation Warp Speed, President Trump said recently one could be available "hopefully" by the end of the year or early 2021.

Scientists urge caution, noting that safe vaccines can take 10 years or more to develop. If a rushed vaccine turns out to have safety and efficacy issues, that could add ammunition to the anti-vaccine lobby.

Emergence of a successful vaccine requires an "enormous effort" with many complex systems from the lab all the way to manufacturing enough capacity to handle a pandemic, says Bottazzi.

"At the same time you're developing it, you're really carefully assessing its safety and ability to be effective," she says, so it's important "not to get discouraged" if it takes a year or more.

To gauge if a vaccine works on a broad scale, it would have to be delivered into communities where the virus is active. There are examples in history of life-saving vaccines going first to people who could pay for them and not to those who needed them most, says Reich.

"Agencies are going to have to think about how those distribution decisions are going to be made and who is going to make them and that will go a certain way toward reassuring the public," says Reich.

A Gallup survey last year found that vaccine confidence, in general, remains high, with 86 percent of Americans believing that vaccines are safer than the diseases that they are designed to prevent. Still, recent news organization polls indicate that roughly 20 to 25 percent of Americans say they won't or are unlikely to get a COVID-19 vaccine if one becomes available.

Until the 1980s, every vaccine to hit the market was appreciated; a culture of questioning science didn't exist in the same way as today, notes Reich. Time passed and attitudes changed.

"We were already having robust arguments nationally about what counts as an expert, what's the role of the government in daily life," says Reich. "We were already seeing a lot of dissent around questions of individual freedoms and community responsibilities. COVID-19 did not create those conflicts, but they've definitely become more visible since we've moved into this pandemic."