How a Deadly Fire Gave Birth to Modern Medicine

The Cocoanut Grove fire in Boston in 1942 tragically claimed 490 lives, but was the catalyst for several important medical advances.

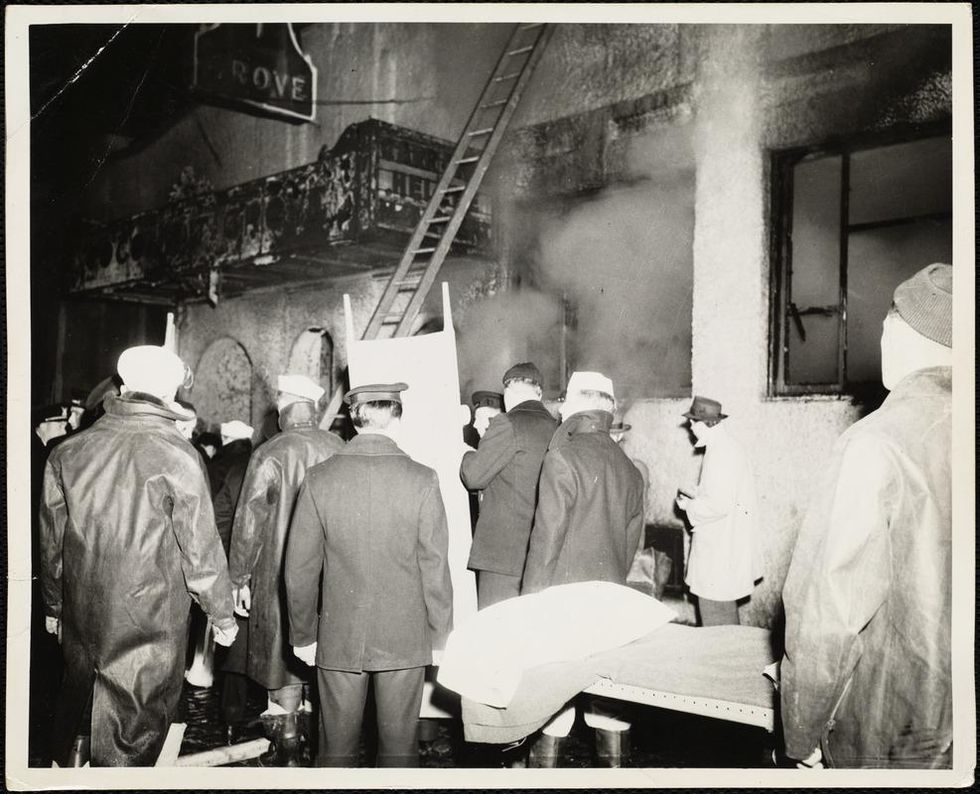

On the evening of November 28, 1942, more than 1,000 revelers from the Boston College-Holy Cross football game jammed into the Cocoanut Grove, Boston's oldest nightclub. When a spark from faulty wiring accidentally ignited an artificial palm tree, the packed nightspot, which was designed to accommodate only about 500 people, was quickly engulfed in flames. In the ensuing panic, hundreds of people were trapped inside, with most exit doors locked. Bodies piled up by the only open entrance, jamming the exits, and 490 people ultimately died in the worst fire in the country in forty years.

"People couldn't get out," says Dr. Kenneth Marshall, a retired plastic surgeon in Boston and president of the Cocoanut Grove Memorial Committee. "It was a tragedy of mammoth proportions."

Within half an hour of the start of the blaze, the Red Cross mobilized more than five hundred volunteers in what one newspaper called a "Rehearsal for Possible Blitz." The mayor of Boston imposed martial law. More than 300 victims, many of whom subsequently died, were taken to Boston City Hospital in one hour, averaging one victim every eleven seconds, while Massachusetts General Hospital admitted 114 victims in two hours. In the hospitals, 220 victims clung precariously to life, in agonizing pain from massive burns, their bodies ravaged by infection.

The scene of the fire.

Boston Public Library

Tragic Losses Prompted Revolutionary Leaps

But there is a silver lining: this horrific disaster prompted dramatic changes in safety regulations to prevent another catastrophe of this magnitude and led to the development of medical techniques that eventually saved millions of lives. It transformed burn care treatment and the use of plasma on burn victims, but most importantly, it introduced to the public a new wonder drug that revolutionized medicine, midwifed the birth of the modern pharmaceutical industry, and nearly doubled life expectancy, from 48 years at the turn of the 20th century to 78 years in the post-World War II years.

The devastating grief of the survivors also led to the first published study of post-traumatic stress disorder by pioneering psychiatrist Alexandra Adler, daughter of famed Viennese psychoanalyst Alfred Adler, who was a student of Freud. Dr. Adler studied the anxiety and depression that followed this catastrophe, according to the New York Times, and "later applied her findings to the treatment of World War II veterans."

Dr. Ken Marshall is intimately familiar with the lingering psychological trauma of enduring such a disaster. His mother, an Irish immigrant and a nurse in the surgical wards at Boston City Hospital, was on duty that cold Thanksgiving weekend night, and didn't come home for four days. "For years afterward, she'd wake up screaming in the middle of the night," recalls Dr. Marshall, who was four years old at the time. "Seeing all those bodies lined up in neat rows across the City Hospital's parking lot, still in their evening clothes. It was always on her mind and memories of the horrors plagued her for the rest of her life."

The sheer magnitude of casualties prompted overwhelmed physicians to try experimental new procedures that were later successfully used to treat thousands of battlefield casualties. Instead of cutting off blisters and using dyes and tannic acid to treat burned tissues, which can harden the skin, they applied gauze coated with petroleum jelly. Doctors also refined the formula for using plasma (the fluid portion of blood, and a medical technology that was just four years old) to replenish bodily liquids that evaporated because of the loss of the protective covering of skin.

"The initial insult with burns is a loss of fluids and patients can die of shock," says Dr. Ken Marshall. "The scientific progress that was made by the two institutions revolutionized fluid management and topical management of burn care forever."

Still, they could not halt the staph infections that kill most burn victims—which prompted the first civilian use of a miracle elixir that was being secretly developed in government-sponsored labs and that ultimately ushered in a new age in therapeutics. Military officials quickly realized this disaster could provide an excellent natural laboratory to test the effectiveness of this drug and see if it could be used to treat the acute traumas of combat in this unfortunate civilian approximation of battlefield conditions. At the time, the very existence of this wondrous medicine—penicillin—was a closely guarded military secret.

From Forgotten Lab Experiment to Wonder Drug

In 1928, Alexander Fleming discovered the curative powers of penicillin, which promised to eradicate infectious pathogens that killed millions every year. But the road to mass producing enough of the highly unstable mold was littered with seemingly insurmountable obstacles, and it remained a forgotten laboratory curiosity for over a decade. Fleming never gave up, however, and penicillin's eventual rescue from obscurity was a landmark in scientific history.

In 1940, a group at Oxford University, funded in part by the Rockefeller Foundation, isolated enough penicillin to test it on twenty-five mice, which had been infected with lethal doses of streptococci. Its therapeutic effects were miraculous—the untreated mice died within hours, while the treated ones played merrily in their cages, undisturbed. Subsequent tests on a handful of patients, who were brought back from the brink of death, confirmed that penicillin was indeed a wonder drug. But Britain was then being ravaged by the German Luftwaffe during the Blitz, and there were simply no resources to devote to penicillin during the Nazi onslaught.

In June of 1941, two of the Oxford researchers, Howard Florey and Ernst Chain, embarked on a clandestine mission to enlist American aid. Samples of the temperamental mold were stored in their coats. By October, the Roosevelt Administration had recruited four companies—Merck, Squibb, Pfizer and Lederle—to team up in a massive, top-secret development program. Merck, which had more experience with fermentation procedures, swiftly pulled away from the pack and every milligram they produced was zealously hoarded.

After the nightclub fire, the government ordered Merck to dispatch to Boston whatever supplies of penicillin it could spare and to refine any crude penicillin broth brewing in its fermentation vats. After workers labored in round-the-clock relays for three days, a refrigerated truck containing thirty-two liters of injectable penicillin left Merck's Rahway, New Jersey, plant on the evening of December 1st, 1942. It was accompanied by a convoy of police escorts through four states before arriving in the pre-dawn hours at Massachusetts General Hospital. Dozens of people were rescued from near-certain death in the first public demonstration of the powers of the antibiotic, and the existence of penicillin could no longer be kept secret from inquisitive reporters and an exultant public. The next day, the Boston Globe called it "priceless" and Time magazine dubbed it a "wonder drug."

Within fourteen months, penicillin production had escalated exponentially, yielding enough to save the lives of thousands of soldiers, including many from the Normandy invasion. And in October 1945, just weeks after the Japanese surrender ended World War II, Alexander Fleming, Howard Florey and Ernst Chain were awarded the Nobel Prize in medicine. But penicillin didn't just save lives; it helped build some of the most innovative medical and scientific companies in history, including Merck, Pfizer, Glaxo and Sandoz.

"Every war has given us a new medical advance," concludes Marshall. "And penicillin was the great scientific advance of World War II."

Who’s Responsible If a Scientist’s Work Is Used for Harm?

A face-off in medical ethics.

Are scientists morally responsible for the uses of their work? To some extent, yes. Scientists are responsible for both the uses that they intend with their work and for some of the uses they don't intend. This is because scientists bear the same moral responsibilities that we all bear, and we are all responsible for the ends we intend to help bring about and for some (but not all) of those we don't.

It should be obvious that the intended outcomes of our work are within our sphere of moral responsibility. If a scientist intends to help alleviate hunger (by, for example, breeding new drought-resistant crop strains), and they succeed in that goal, they are morally responsible for that success, and we would praise them accordingly. If a scientist intends to produce a new weapon of mass destruction (by, for example, developing a lethal strain of a virus), and they are unfortunately successful, they are morally responsible for that as well, and we would blame them accordingly. Intention matters a great deal, and we are most praised or blamed for what we intend to accomplish with our work.

But we are responsible for more than just the intended outcomes of our choices. We are also responsible for unintended but readily foreseeable uses of our work. This is in part because we are all responsible for thinking not just about what we intend, but also about what else might follow from our chosen course of action. In cases where severe and egregious harms are plausible, we should act in ways that strive to prevent such outcomes. To not think about plausible unintended effects is to be negligent; to recognize such effects but do nothing about them is to be reckless. To be negligent or reckless is to be morally irresponsible, and thus blameworthy. Each of us should think beyond what we intend to do, reflecting carefully on what our course of action could entail, and adjusting our choices accordingly.

It is this area, of unintended but readily foreseeable (and plausible) impacts, that often creates the most difficulty for scientists. Many scientists can become so focused on their work (which is often demanding) and so focused on achieving their intended goals, that they fail to stop and think about other possible implications.

Debates over "dual-use" research exemplify these concerns, where harmful potential uses of research might mean the work should not be pursued, or the full publication of results should be curtailed. When researchers perform gain-of-function research, pushing viruses to become more transmissible or more deadly, it is clear how dangerous such work could be in the wrong hands. In these cases, it is not enough to simply claim that such uses were not intended and that it is someone else's job to ensure that the materials remain secure. We know securing infectious materials can be error-prone (recall events at the CDC and the FDA).

Further, securing viral strains does nothing to secure the knowledge that could allow for reproducing the viral strain (particularly when the methodologies and/or genetic sequences are published after the fact, as was the case for H5N1 and horsepox). It is, in fact, the researcher's moral responsibility to be concerned not just about the biosafety controls in their own labs, but also which projects should be pursued (Will the gain in knowledge be worth the possible downsides?) and which results should be published (Will a result make it easier for a malicious actor to deploy a new bioweapon?).

We have not yet had (to my knowledge) a use of gain-of-function research to harm people. If that does happen, those who actually released the virus on the public will be most blameworthy; intentions do matter. But the scientists who developed the knowledge deployed by the malicious actors may also be held blameworthy, especially if the malicious use was easy to foresee, even if it was not pleasant to think about.

In some areas of research, scientists are already worrying about the unintended possible downsides of their work. Scientists investigating gene drives have thought beyond the immediate desired benefits of their work (e.g. reducing invasive species populations) and considered the possible spread of gene drives to untargeted populations. Modeling the impacts of such possibilities has led some researchers to pull back from particular deployment possibilities. It is precisely such thinking through both the intended and unintended possible outcomes that is needed for responsible work.

The world has gotten too small, too vulnerable for scientists to act as though they are not responsible for the uses of their work, intended or not. They must seek to ensure that, as the recent AAAS Statement on Scientific Freedom and Responsibility demands, their work is done "in the interest of humanity." This requires thinking beyond one's intentions, potentially drawing on the expertise of others, sometimes from other disciplines, to help explore implications. Such thinking does not guarantee good outcomes, but it will ensure that we are doing the best we can, and that is what being morally responsible is all about.

This Startup Uses Dust to Fight Sweatshops

Workers at an industrial textile factory.

"Dust thou art, and unto dust shalt thou return." Whoever wrote that famous line probably didn't realize that dust actually contains a secret weapon.

Far from being a collection of mere inanimate particles, dust is now recognized as a powerful tool filled with living sensors. Studying those sensors can reveal an object's location history, which can help brands fight unethical manufacturing.

"We have developed the capability to turn dust into data that can be used to trace problems in the supply chain," explains Jessica Green, the CEO of Phylagen, a San Francisco-based company that she co-founded in 2014.

So how does the technology work?

Dust gathers everywhere—on our bodies, on objects—and that dust contains microbes like bacteria and viruses. Just as we humans have our own unique microbiomes, research has shown that physical locations have their own identifiable patterns of microbes as well. Visiting a place means you may pick up its microbial fingerprint in the dust that settles on you. The DNA of those microbes can later be sequenced in a lab and matched back to the place of origin.

"Your environment is constantly imprinted on you and vice versa," says Justin Gallivan, the director of the Biotechnology Office at DARPA, the research and defense arm of the Pentagon, which is funding Phylagen. "If we have a microbial map of the world," he posits, "can we infer an object's transit history?"

So far, Phylagen has shown that it's possible to identify where a ship came from based on the unique microbial populations it picked up at different naval ports. In another experiment, the sampling technology allowed researchers to determine where a person had walked within 1 kilometer in San Francisco, because of the microbes picked up by their shoes.

One application of this technology is to help companies that make products abroad. Such companies are very interested in determining exactly where their products are coming from, especially if foreign subcontractors are involved.

"In retail and apparel, often the facilities performing the subcontracting are not up to the same code that the brands require their suppliers to be, so there could be poor working conditions," says Roxana Hickey, a data scientist at Phylagen. "A supplier might use a subcontractor to save on the bottom line, but unethical practices are very damaging to the brand."

Before this technology was developed, brands sometimes faced a challenge figuring out what was going on in their supply chain. But now a product can be tested upon arrival in the States; its microbial signature can theoretically be analyzed and matched against a reference database to help determine if its DNA pattern matches that of the place where the product was purported to have been made.

Phylagen declined to elaborate further about how their process works, such as how they are building a database of reference samples, and how consistent a microbial population remains across a given location.
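Purely as an illustration of the matching idea described above, and not a description of Phylagen's undisclosed process, here is a minimal Python sketch. It assumes each sample has already been reduced to read counts per microbial taxon, and it compares a product's profile with reference profiles using Bray-Curtis dissimilarity, a standard ecology measure swapped in here for whatever the company actually uses. The function names, the taxon and location labels, and the 0.3 threshold are all hypothetical.

```python
def relative_abundance(counts):
    """Convert raw read counts per taxon into fractions that sum to 1."""
    total = sum(counts.values())
    if total <= 0:
        raise ValueError("sample contains no reads")
    return {taxon: n / total for taxon, n in counts.items()}


def bray_curtis(counts_a, counts_b):
    """Bray-Curtis dissimilarity: 0 means identical profiles, 1 means no shared taxa."""
    a = relative_abundance(counts_a)
    b = relative_abundance(counts_b)
    shared = sum(min(a.get(t, 0.0), b.get(t, 0.0)) for t in set(a) | set(b))
    # Both profiles sum to 1, so 1 - 2*shared/(sum_a + sum_b) reduces to 1 - shared.
    return 1.0 - shared


def best_match(sample, reference):
    """Return (location, dissimilarity) for the closest reference profile."""
    scores = {loc: bray_curtis(sample, profile) for loc, profile in reference.items()}
    location = min(scores, key=scores.get)
    return location, scores[location]


def consistent_with_claim(sample, reference, claimed_location, threshold=0.3):
    """True if the sample is close enough to the claimed location's profile.

    The threshold is an arbitrary illustrative value, not a validated cutoff.
    """
    return bray_curtis(sample, reference[claimed_location]) <= threshold


if __name__ == "__main__":
    # Hypothetical reference database: location -> read counts per taxon.
    reference = {
        "factory_A": {"taxon_1": 60, "taxon_2": 30, "taxon_3": 10},
        "factory_B": {"taxon_1": 10, "taxon_3": 20, "taxon_4": 70},
    }
    # Hypothetical dust sample taken from a product on arrival.
    sample = {"taxon_1": 55, "taxon_2": 35, "taxon_3": 10}

    print(best_match(sample, reference))
    print(consistent_with_claim(sample, reference, "factory_B"))
```

In this toy data the sample sits far closer to the `factory_A` profile than to `factory_B`, so a claim that the product was made at `factory_B` would be flagged for follow-up. A real system would also have to handle the open questions the article raises, such as how stable a location's microbial population is over time.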

As the technology grows more robust, though, one could imagine numerous other applications, such as in police work and forensics. But today, Phylagen is solely focused on helping commercial entities bring greater transparency to their operations so they can root out unauthorized subcontracting.

Then those unethical suppliers can – shall we say – bite the dust.

Kira Peikoff was the editor-in-chief of Leaps.org from 2017 to 2021. As a journalist, her work has appeared in The New York Times, Newsweek, Nautilus, Popular Mechanics, The New York Academy of Sciences, and other outlets. She is also the author of four suspense novels that explore controversial issues arising from scientific innovation: Living Proof, No Time to Die, Die Again Tomorrow, and Mother Knows Best. Peikoff holds a B.A. in Journalism from New York University and an M.S. in Bioethics from Columbia University. She lives in New Jersey with her husband and two young sons. Follow her on Twitter @KiraPeikoff.