How a Deadly Fire Gave Birth to Modern Medicine

The Cocoanut Grove fire in Boston in 1942 tragically claimed 490 lives, but it was the catalyst for several important medical advances.

On the evening of November 28, 1942, more than 1,000 revelers from the Boston College-Holy Cross football game jammed into the Cocoanut Grove, Boston's oldest nightclub. When a spark from faulty wiring accidentally ignited an artificial palm tree, the packed nightspot, which was designed to accommodate only about 500 people, was quickly engulfed in flames. In the ensuing panic, hundreds of people were trapped inside because most of the exit doors were locked. Bodies piled up at the one open entrance, blocking the way out, and 490 people ultimately died in the country's worst fire in forty years.

"People couldn't get out," says Dr. Kenneth Marshall, a retired plastic surgeon in Boston and president of the Cocoanut Grove Memorial Committee. "It was a tragedy of mammoth proportions."

Within half an hour of the start of the blaze, the Red Cross mobilized more than five hundred volunteers in what one newspaper called a "Rehearsal for Possible Blitz." The mayor of Boston imposed martial law. More than 300 victims, many of whom later died, were taken to Boston City Hospital in one hour, an average of one victim every eleven seconds, while Massachusetts General Hospital admitted 114 victims in two hours. In the hospitals, 220 victims clung precariously to life, in agonizing pain from massive burns, their bodies ravaged by infection.

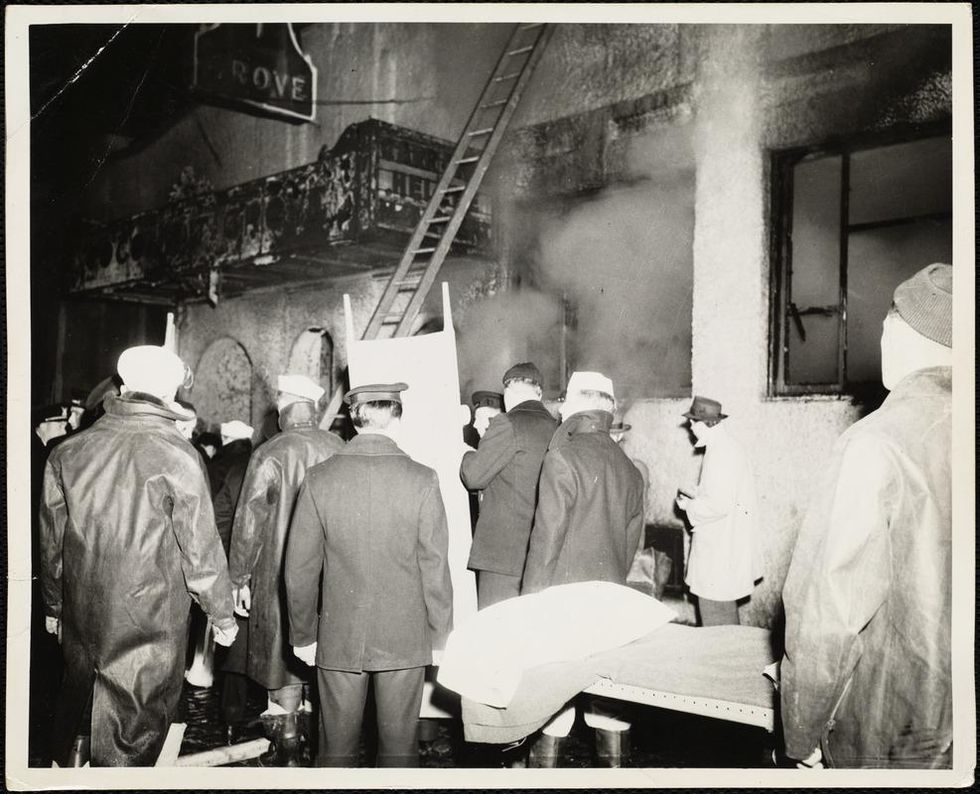

The scene of the fire.

Boston Public Library

Tragic Losses Prompted Revolutionary Leaps

But there is a silver lining: this horrific disaster prompted dramatic changes in safety regulations to prevent another catastrophe of this magnitude and led to the development of medical techniques that eventually saved millions of lives. It transformed burn care and the use of plasma on burn victims, but most importantly, it introduced the public to a new wonder drug that revolutionized medicine, midwifed the birth of the modern pharmaceutical industry, and helped nearly double life expectancy, from 48 years at the turn of the 20th century to 78 years in the decades after World War II.

The devastating grief of the survivors also led to the first published study of what would later be called post-traumatic stress disorder, by pioneering psychiatrist Alexandra Adler, daughter of famed Viennese psychoanalyst Alfred Adler, who was a student of Freud. Dr. Adler studied the anxiety and depression that followed this catastrophe, according to the New York Times, and "later applied her findings to the treatment [of] World War II veterans."

Dr. Ken Marshall is intimately familiar with the lingering psychological trauma of enduring such a disaster. His mother, an Irish immigrant and a nurse in the surgical wards at Boston City Hospital, was on duty that cold Thanksgiving weekend night, and didn't come home for four days. "For years afterward, she'd wake up screaming in the middle of the night," recalls Dr. Marshall, who was four years old at the time. "Seeing all those bodies lined up in neat rows across the City Hospital's parking lot, still in their evening clothes. It was always on her mind and memories of the horrors plagued her for the rest of her life."

The sheer magnitude of casualties prompted overwhelmed physicians to try experimental new procedures that were later used successfully to treat thousands of battlefield casualties. Instead of cutting off blisters and treating burned tissue with dyes and tannic acid, which can harden the skin, they applied gauze coated with petroleum jelly. Doctors also refined the formula for using plasma (the fluid portion of blood, and a medical technology that was then just four years old) to replenish the body fluids lost once the protective covering of skin was gone.

"Every war has given us a new medical advance. And penicillin was the great scientific advance of World War II."

"The initial insult with burns is a loss of fluids and patients can die of shock," says Dr. Ken Marshall. "The scientific progress that was made by the two institutions revolutionized fluid management and topical management of burn care forever."

Still, they could not halt the staph infections that killed many burn victims. That failure prompted the first civilian use of a miracle elixir that was being secretly developed in government-sponsored labs and that ultimately ushered in a new age in therapeutics. Military officials quickly realized that this disaster, an unfortunate civilian approximation of battlefield conditions, could serve as a natural laboratory for testing whether the drug could treat the acute traumas of combat. At the time, the very existence of this wondrous medicine, penicillin, was a closely guarded military secret.

From Forgotten Lab Experiment to Wonder Drug

In 1928, Alexander Fleming discovered the antibacterial powers of penicillin, which promised to eradicate infectious pathogens that killed millions every year. But the road to mass-producing enough of the highly unstable drug from its temperamental mold was littered with seemingly insurmountable obstacles, and penicillin remained a forgotten laboratory curiosity for more than a decade. Its eventual rescue from obscurity was a landmark in scientific history.

In 1940, a group at Oxford University, funded in part by the Rockefeller Foundation, isolated enough penicillin to test it on twenty-five mice, which had been infected with lethal doses of streptococci. Its therapeutic effects were miraculous—the untreated mice died within hours, while the treated ones played merrily in their cages, undisturbed. Subsequent tests on a handful of patients, who were brought back from the brink of death, confirmed that penicillin was indeed a wonder drug. But Britain was then being ravaged by the German Luftwaffe during the Blitz, and there were simply no resources to devote to penicillin during the Nazi onslaught.

In June of 1941, two of the Oxford researchers, Howard Florey and Norman Heatley, embarked on a clandestine mission to enlist American aid, carrying samples of the temperamental mold in their coats. By October, the Roosevelt Administration had recruited four companies (Merck, Squibb, Pfizer and Lederle) to team up in a massive, top-secret development program. Merck, which had more experience with fermentation, swiftly pulled away from the pack, and every milligram it produced was zealously hoarded.

After the nightclub fire, the government ordered Merck to send Boston whatever penicillin it could spare and to refine any crude penicillin broth brewing in its fermentation vats. After three days of round-the-clock relays, on the evening of December 1, 1942, a refrigerated truck carrying thirty-two liters of injectable penicillin left Merck's plant in Rahway, New Jersey. Escorted by police through four states, it arrived at Massachusetts General Hospital in the pre-dawn hours. Dozens of people were rescued from near-certain death in the first public demonstration of the antibiotic's powers, and the existence of penicillin could no longer be kept secret from inquisitive reporters and an exultant public. The next day, the Boston Globe called it "priceless," and Time magazine dubbed it a "wonder drug."

Within fourteen months, penicillin production had escalated exponentially, churning out enough to save the lives of thousands of soldiers, including many wounded in the Normandy invasion. In October 1945, just weeks after the Japanese surrender ended World War II, Alexander Fleming, Howard Florey and Ernst Chain were awarded the Nobel Prize in Physiology or Medicine. But penicillin didn't just save lives; it helped build some of the most innovative medical and scientific companies in history, including Merck, Pfizer, Glaxo and Sandoz.

"Every war has given us a new medical advance," concludes Marshall. "And penicillin was the great scientific advance of World War II."

Recent leaps in technology represent an important step forward in unlocking artificial photosynthesis.

For billions of years, plants and other photosynthetic organisms have been converting sunlight into chemical energy. A process that comes effortlessly to them has been anything but effortless for scientists, who have spent the last half-century trying to achieve artificial photosynthesis with the goal of creating a carbon-neutral fuel. Such a fuel could be a game-changer: rather than adding CO2 to the atmosphere as traditional fuels do, it would be made by taking CO2 out of the atmosphere and converting it into usable energy.

Given the choice at the gas station between a carbon-neutral fuel and one that produces carbon dioxide in spades, and assuming cost and performance were equal, who wouldn't choose the one that's better for the planet? That's the endgame scientists are after. A consumer switch to clean fuel could have a huge impact on global CO2 emissions.

Up until this point, the methods used to make liquid fuel from atmospheric CO2 have been expensive, not efficient enough to really get off the ground, and often resulted in unwanted byproducts. But now, a new technology may be the key to unlocking the full potential of artificial photosynthesis. At the very least, it's a step forward and could help make a dent in atmospheric CO2 reduction.

"It's an important breakthrough in artificial photosynthesis," says Qian Wang, a researcher in the Department of Chemistry at Cambridge University and lead author on a recent study published in Nature about an innovation she calls "photosheets."

These photosheets convert CO2, sunlight, and water into a carbon-neutral liquid fuel called formic acid without the aid of electricity. They're made of semiconductor powders that absorb sunlight. When in the presence of water and CO2, the electrons in the powders become excited and join with the CO2 and protons from the water molecules, reducing the CO2 in the process. The chemical reaction results in the production of formic acid, which can be used directly or converted to hydrogen, another clean energy fuel.

In the past, it's been difficult to reduce CO2 without creating a lot of unwanted byproducts. According to Wang, this new conversion process achieves the reduction and fuel creation with almost no byproducts.

The Cambridge team's photosheets may be a first, but the team is far from the only one to have produced fuel from CO2 using some form of artificial photosynthesis. More and more scientists are working to perfect the method in hopes of producing a truly sustainable photosynthetic fuel capable of lowering carbon emissions.

Thanks to advances in nanoscience, which have given researchers finer control over materials, more successes are emerging. A team at the University of Illinois at Urbana-Champaign, for example, used gold nanoparticles as the photocatalysts in its process.

"My group demonstrated that you could actually use gold nanoparticles both as a light absorber and a catalyst in the process of converting carbon dioxide to hydrocarbons such as methane, ethane and propane fuels," says professor Prashant Jain, co-author of the study. Not only are gold nanoparticles great at absorbing light, they don't degrade as quickly as other metals, which makes them more sustainable.

That said, Jain's team, like every other research team working on artificial photosynthesis including the Cambridge team, is grappling with efficiency issues. Jain says that all parts of the process need to be optimized so the reaction can happen as quickly as possible.

"You can't just improve one [aspect], because that can lead to a decrease in performance in some other aspects," Jain explains.

The Cambridge team is currently experimenting with a range of catalysts to improve their device's stability and efficiency. Virgil Andrei, who is working on an artificial leaf design that was developed at Cambridge in 2019, was recently able to improve the performance and selectivity of the device. Now the leaf's solar-to-CO2 energy conversion efficiency is 0.2%, twice its previous efficiency.

The latest version also directly produces liquid fuel, which is easier to transport and use commercially.

To judge how efficient a fuel-production method is, one must consider how sustainable it is at every stage, which means accounting for every step that requires additional energy. According to Jain, using CO2 for fuel production first requires concentrating the CO2, which takes energy. And on the fuel production side, once the chemical reaction has run, the fuel has to be separated from the byproducts, which also takes energy.

To be truly sustainable, each part of the conversion system also needs to be durable. If parts need to be replaced often or regularly maintained, that counts against it. Then you have to account for the system's reuse cycle. If you extract CO2 from the environment and convert it into fuel that's then put into a fuel cell, it's going to release CO2 at the other end. To create a fully green, carbon-neutral fuel source, that same amount of CO2 needs to be captured and fed back into the fuel conversion system.

"The cycle continues, and at each point, you will see a loss in efficiency, and depending on how much you [may also] see a loss in yield," says Jain. "And depending on what those efficiencies are at each one of those points will determine whether or not this process can be sustainable."

The science is at least a decade away from offering a competitive sustainable fuel option at scale. Streamlining a process to mimic what plants have perfected over billions of years is no small feat, but an ever-growing community of researchers using rapidly advancing technology is driving progress forward.

Genetic data sets skew too European, threatening to narrow who will benefit from future advances.

Genomics has begun its golden age. Just 20 years ago, sequencing a single genome cost nearly $3 billion and took over a decade. Today, the same feat can be achieved for a few hundred dollars in the better part of a day. Suddenly, the prospect of sequencing not just individuals but whole populations has become feasible.

The genetic differences between humans may seem meager, only around 0.1 percent of the genome on average, but this variation can have profound effects on an individual's risk of disease, responsiveness to medication, and even the dosage level that would work best.

Already, initiatives like the U.K.'s 100,000 Genomes Project (now expanding to 1 million genomes) and other similarly massive sequencing projects in Iceland and the U.S. have begun collecting population-scale data in order to capture and study this variation.

The resulting data sets are immensely valuable to researchers and drug developers working to design new 'precision' medicines and diagnostics, and to gain insights that may benefit patients. Yet, because the majority of this data comes from developed countries with well-established scientific and medical infrastructure, the data collected so far is heavily biased towards Western populations with largely European ancestry.

This presents a startling and fast-emerging problem: groups that are under-represented in these datasets are likely to benefit less from the new wave of therapeutics, diagnostics, and insights, simply because they were tailored for the genetic profiles of people with European ancestry.

We may indeed be approaching a golden age of genomics-enabled precision medicine. But if the data bias persists, then there is a risk, as with most golden ages throughout history, that the benefits will not be equally accessible to all and that existing inequalities will only be exacerbated.

To remedy the situation, a number of initiatives have sprung up to sequence genomes of under-represented groups, adding them to the datasets and ensuring that they too will benefit from the rapidly unfolding genomic revolution.

Global Gene Corp

The idea behind Global Gene Corp was born eight years ago at Harvard, when Sumit Jamuar, co-founder and CEO, met his two fellow co-founders, both experienced geneticists, for coffee.

"They were discussing the limitless applications of understanding your genetic code," said Jamuar, a business executive from New Delhi.

"And so, being a technology enthusiast type, I was excited and I turned to them and said hey, this is incredible! Could you sequence me and give me some insights? And they actually just turned around and said no, because it's not going to be useful for you - there's not enough reference for what a good Sumit looks like."

What started as a curiosity-driven conversation about the power of genomics ended with a commitment to tackle one of the field's biggest roadblocks: its lack of global representation.

Jamuar decided to start with India, which is home to about 20 percent of the world's population, including over 4,000 different ethnicities, but contributes less than 2 percent of genomic data, he told Leaps.org.

Eight years later, Global Gene Corp's sequencing initiative is well underway, and is the largest in the history of the Indian subcontinent. The program is being carried out in collaboration with biotech giant Regeneron, with support from the Indian government, local communities, and the Indian healthcare ecosystem. In August 2020, Global Gene Corp's work was recognized through the $1 million 2020 Roddenberry award for organizations that advance the vision of 'Star Trek' creator Gene Roddenberry to better humanity.

Global Gene Corp also focuses on developing and implementing AI and machine learning tools to make sense of the deluge of genomic data. These tools are increasingly used by both industry and academia to guide future research by identifying particularly promising or clinically interesting genetic variants. But if the underlying data is skewed toward Europeans, then the effectiveness of the computational analysis, along with the future advances and avenues of research that emerge from it, will be skewed toward Europeans too.

This problem has already begun to manifest itself in, for example, much higher rates of genetic misdiagnosis among non-Europeans tested for their risk of certain diseases, such as hypertrophic cardiomyopathy, an inherited disease of the heart muscle. Most of the genetic variants used in these tests were identified as causal for the disease in studies of European genomes. However, many of these variants differ in both their distribution and their clinical significance across populations, so many patients of non-European ancestry received false-positive test results because their benign genetic variants were misclassified as pathogenic. Had even a small number of genomes from other ethnicities been included in the initial studies, many of these misdiagnoses could have been avoided.

"Unless we have a data set which is unbiased and representative, we're never going to achieve the success that we want," Jamuar says.

"When Siri was first launched, she could hardly recognize an accent which was not of a certain type, so if I was trying to speak to Siri, I would have to repeat myself multiple times and try to mimic an accent which wasn't my accent so that she could understand it.

"But over time the voice recognition technology improved tremendously because the training data was expanded to include people of very diverse backgrounds and their accents, so the algorithms were trained to be able to pick that up and it dramatically improved the technology. That's the way we have to think about it - without that good-quality diverse data, we will never be able to achieve the full potential of the computational tools."

While mapping India's rich genetic diversity has been the organization's primary focus so far, it plans in time to expand its work to other under-represented groups in Asia, the Middle East, Africa, and Latin America.

"As other like-minded people and partners join the mission, it just accelerates the achievement of what we have set out to do, which is to map out and organize the world's genomic diversity so that we can enable high-quality life and longevity benefits for everyone, everywhere," Jamuar says.

Empowering African Genomics

Africa is the birthplace of our species and still retains a disproportionate share of total human genetic diversity. The groups that left Africa and went on to populate the rest of the world, some 50,000 to 100,000 years ago, were likely small in number and took only a fraction of that diversity with them. This ancient bottleneck means that no other group in the world can match the level of genetic diversity seen in modern African populations.

Despite Africa's central importance in understanding the history and extent of human genetic diversity, the genomics of African populations remains wildly understudied. Addressing this disparity has become a central focus of the H3Africa Consortium, an initiative formally launched in 2012 with support from the African Academy of Sciences, the U.S. National Institutes of Health, and the UK's Wellcome Trust. Today, H3Africa supports over 50 projects across the continent, on an array of different research areas in genetics relevant to the health and heredity of Africans.

"Africa is the cradle of Humankind. So what that really means is that the populations that are currently living in Africa are among some of the oldest populations on the globe, and we know that the longer populations have had to go through evolutionary phases, the more variation there is in the genomes of people who live presently," says Zane Lombard, a principal investigator at H3Africa and Associate Professor of Human Genetics at the University of the Witwatersrand in Johannesburg, South Africa.

"So for that reason, African populations carry a huge amount of genetic variation and diversity, which is pretty much uncaptured. There's still a lot to learn as far as novel variation is concerned by looking at and studying African genomes."

A recent landmark H3Africa study, led by Lombard and published in Nature in October, sequenced the genomes of over 400 African individuals from 50 ethno-linguistic groups, many of which had never been sampled before.

Despite the relatively modest number of individuals sequenced in the study, over three million previously undescribed genetic variants were found, and complex patterns of ancestral migration were uncovered.

"In some of these ethno-linguistic groups they don't have a word for DNA, so we've had to really think about how to make sure that we communicate the purposes of different studies to participants so that you have true informed consent," says Lombard.

"The objective," she explained, "was to try and fill some of the gaps for many of these populations for which we didn't have any whole genome sequences or any genetic variation data...because if we're thinking about the future of precision medicine, if the patient is a member of a specific group where we don't know a lot about the genomic variation that exists in that group, it makes it really difficult to start thinking about clinical interpretation of their data."

From H3Africa's conception, the consortium's goal has not only been to better represent Africa's staggering genetic diversity in genomic data sets, but also to build Africa's domestic genomics capabilities and empower a new generation of African researchers. By doing so, the hope is that Africans will be able to set their own genomics agenda, and leapfrog to new and better ways of doing the work.

"The training that has happened on the continent and the number of new scientists, new students, and fellows that have come through the process and are now enabled to start their own research groups, to grow their own research in their countries, to be a spokesperson for genomics research in their countries, and to build that political will to do these larger types of sequencing initiatives - that is really a significant outcome from H3Africa as well. Over and above all the science that's coming out," Lombard says.

"What has been created through H3Africa is just this locus of researchers and scientists and bioethicists who have the same goal at heart - to work towards adjusting the data bias and making sure that all global populations are represented in genomics."