How a Deadly Fire Gave Birth to Modern Medicine

The Cocoanut Grove fire in Boston in 1942 tragically claimed 490 lives, but was the catalyst for several important medical advances.

On the evening of November 28, 1942, more than 1,000 revelers from the Boston College-Holy Cross football game jammed into the Cocoanut Grove, Boston's oldest nightclub. When a spark from faulty wiring accidentally ignited an artificial palm tree, the packed nightspot, which was designed to accommodate only about 500 people, was quickly engulfed in flames. In the ensuing panic, hundreds of people were trapped inside, with most exit doors locked. Bodies piled up by the only open entrance, jamming the exits, and 490 people ultimately died in the worst fire in the country in forty years.

"People couldn't get out," says Dr. Kenneth Marshall, a retired plastic surgeon in Boston and president of the Cocoanut Grove Memorial Committee. "It was a tragedy of mammoth proportions."

Within half an hour of the start of the blaze, the Red Cross mobilized more than five hundred volunteers in what one newspaper called a "Rehearsal for Possible Blitz." The mayor of Boston imposed martial law. More than 300 victims, many of whom subsequently died, were taken to Boston City Hospital in one hour, averaging one victim every eleven seconds, while Massachusetts General Hospital admitted 114 victims in two hours. In the hospitals, 220 victims clung precariously to life, in agonizing pain from massive burns, their bodies ravaged by infection.

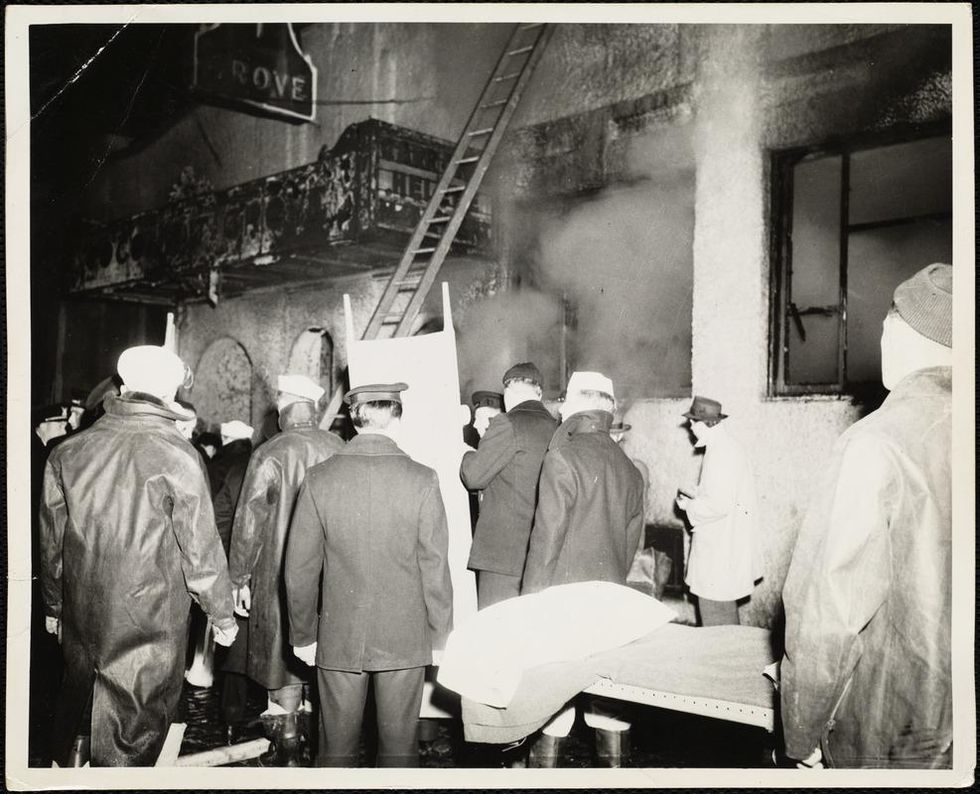

The scene of the fire.

Boston Public Library

Tragic Losses Prompted Revolutionary Leaps

But there is a silver lining: this horrific disaster prompted dramatic changes in safety regulations to prevent another catastrophe of this magnitude and led to the development of medical techniques that eventually saved millions of lives. It transformed burn care treatment and the use of plasma on burn victims, but most importantly, it introduced to the public a new wonder drug that revolutionized medicine, midwifed the birth of the modern pharmaceutical industry, and nearly doubled life expectancy, from 48 years at the turn of the 20th century to 78 years in the post-World War II years.

The devastating grief of the survivors also led to the first published study of post-traumatic stress disorder by pioneering psychiatrist Alexandra Adler, daughter of famed Viennese psychoanalyst Alfred Adler, who was a student of Freud. Dr. Adler studied the anxiety and depression that followed this catastrophe, according to the New York Times, and "later applied her findings to the treatment of World War II veterans."

Dr. Ken Marshall is intimately familiar with the lingering psychological trauma of enduring such a disaster. His mother, an Irish immigrant and a nurse in the surgical wards at Boston City Hospital, was on duty that cold Thanksgiving weekend night, and didn't come home for four days. "For years afterward, she'd wake up screaming in the middle of the night," recalls Dr. Marshall, who was four years old at the time. "Seeing all those bodies lined up in neat rows across the City Hospital's parking lot, still in their evening clothes. It was always on her mind and memories of the horrors plagued her for the rest of her life."

The sheer magnitude of casualties prompted overwhelmed physicians to try experimental new procedures that were later successfully used to treat thousands of battlefield casualties. Instead of cutting off blisters and using dyes and tannic acid to treat burned tissues, which can harden the skin, they applied gauze coated with petroleum jelly. Doctors also refined the formula for using plasma (the fluid portion of blood, and a medical technology that was just four years old) to replenish bodily fluids that evaporated because of the loss of the protective covering of skin.

"The initial insult with burns is a loss of fluids and patients can die of shock," says Dr. Ken Marshall. "The scientific progress that was made by the two institutions revolutionized fluid management and topical management of burn care forever."

Still, they could not halt the staph infections that kill most burn victims—which prompted the first civilian use of a miracle elixir that was being secretly developed in government-sponsored labs and that ultimately ushered in a new age in therapeutics. Military officials quickly realized this disaster could provide an excellent natural laboratory to test the effectiveness of this drug and see if it could be used to treat the acute traumas of combat in this unfortunate civilian approximation of battlefield conditions. At the time, the very existence of this wondrous medicine—penicillin—was a closely guarded military secret.

From Forgotten Lab Experiment to Wonder Drug

In 1928, Alexander Fleming discovered the curative powers of penicillin, which promised to eradicate infectious pathogens that killed millions every year. But the road to mass-producing enough of the highly unstable mold was littered with seemingly insurmountable obstacles, and it remained a forgotten laboratory curiosity for over a decade. Fleming never gave up, however, and penicillin's eventual rescue from obscurity was a landmark in scientific history.

In 1940, a group at Oxford University, funded in part by the Rockefeller Foundation, isolated enough penicillin to test it on twenty-five mice, which had been infected with lethal doses of streptococci. Its therapeutic effects were miraculous—the untreated mice died within hours, while the treated ones played merrily in their cages, undisturbed. Subsequent tests on a handful of patients, who were brought back from the brink of death, confirmed that penicillin was indeed a wonder drug. But Britain was then being ravaged by the German Luftwaffe during the Blitz, and there were simply no resources to devote to penicillin during the Nazi onslaught.

In June of 1941, two of the Oxford researchers, Howard Florey and Ernst Chain, embarked on a clandestine mission to enlist American aid. Samples of the temperamental mold were stored in their coats. By October, the Roosevelt Administration had recruited four companies—Merck, Squibb, Pfizer and Lederle—to team up in a massive, top-secret development program. Merck, which had more experience with fermentation procedures, swiftly pulled away from the pack and every milligram they produced was zealously hoarded.

After the nightclub fire, the government ordered Merck to dispatch to Boston whatever supplies of penicillin it could spare and to refine any crude penicillin broth brewing in its fermentation vats. After Merck staff worked in round-the-clock relays over the course of three days, a refrigerated truck containing thirty-two liters of injectable penicillin left the company's Rahway, New Jersey, plant on the evening of December 1, 1942. It was accompanied by a convoy of police escorts through four states before arriving in the pre-dawn hours at Massachusetts General Hospital. Dozens of people were rescued from near-certain death in the first public demonstration of the powers of the antibiotic, and the existence of penicillin could no longer be kept secret from inquisitive reporters and an exultant public. The next day, the Boston Globe called it "priceless" and Time magazine dubbed it a "wonder drug."

Within fourteen months, penicillin production escalated exponentially, churning out enough to save the lives of thousands of soldiers, including many from the Normandy invasion. And in October 1945, just weeks after the Japanese surrender ended World War II, Alexander Fleming, Howard Florey and Ernst Chain were awarded the Nobel Prize in medicine. But penicillin didn't just save lives—it helped build some of the most innovative medical and scientific companies in history, including Merck, Pfizer, Glaxo and Sandoz.

"Every war has given us a new medical advance," concludes Marshall. "And penicillin was the great scientific advance of World War II."

Lab-grown insect meat could be the protein source of the future.

Agriculture in the 21st century is not as simple as it once was. With a population seven billion strong, a climate in crisis, and sustainability in farming practices on everyone's radar, figuring out how to feed the masses without destroying the Earth is a pressing concern.

In addition to low-emission cows and drone pollinators, there's a promising new solution on the table. How does "lab-grown insect meat" grab you?

Writing in Frontiers in Sustainable Food Systems, researchers at Tufts University say insects that are fed plants and genetically modified for maximum growth, nutrition, and flavor could be the best, greenest alternative to our current livestock farming practices. This lab-grown protein source could produce high volume, nutritious food without the massive resources required for traditional animal agriculture.

"Due to the environmental, public health, and animal welfare concerns associated with our current livestock system, it is vital to develop more sustainable food production methods," says lead author Natalie Rubio. Could insect meat be the key?

Next Up

New sustainable food production includes what's called "cellular agriculture," an emerging industry and field of study in which meat and dairy are produced via cells in a lab instead of whole animals. So far, scientists have primarily focused on bovine, porcine, and avian cells to create this "cultured meat."

But the Tufts scientists argue that insect cells may be better suited to lab-created meat protein than traditional farm animal cells.

"Compared to cultured mammalian, avian, and other vertebrate cells, insect cell cultures require fewer resources and less energy-intensive environmental control, as they have lower glucose requirements and can thrive in a wider range of temperature, pH, oxygen, and osmolarity conditions," reports Rubio.

"Alterations necessary for large-scale production are also simpler to achieve with insect cells, which are currently used for biomanufacturing of insecticides, drugs, and vaccines," she adds.

They still have some details to hash out, however, including how to make cultured insect meat more like the steak and chicken we're all familiar with.

"Despite this immense potential, cultured insect meat isn't ready for consumption," says Rubio. "Research is ongoing to master two key processes: controlling development of insect cells into muscle and fat, and combining these in 3D cultures with a meat-like texture." They are currently experimenting with mushroom-derived fiber to tackle the latter.

Open Questions

As the report points out, one thing that makes cellular agriculture an attractive alternative to high-density animal farming is that it doesn't require consumers to change their behaviors. People would still be able to eat meat—it would just come from a different source.

But the big question remains: How will lab-grown insect meat taste? Will the buggers really taste as good as burgers?

And, of course, there's the "ew" factor. Meat alternatives have proven to work for some people—Tofurky is still in business, after all—but it may be a hard sell to get the masses to jump on board with eating bugs. Consuming creepy crawlies sounds simply unpalatable to many, and the term "lab-grown, cellular insect meat" doesn't help much. Perhaps an entirely new nomenclature is in order.

Another question is whether or not folks will trust such scientifically created food. People already use the term "frankenfood" to refer to genetic modification, even though the vast majority of the corn and soybeans planted in the U.S. today are genetically engineered, and other major crops with GM varieties include potatoes, apples, squash, and papayas. Still, combining GM technology with eating insects may be a hard sell.

However, we're all going to have to get used to trying new things if we want to leave a habitable home for our children. If a lab-grown bug burger can save the planet, maybe it's worth a shot.

Six Reasons Why Humans Should Return to the Moon

An astronaut does a spacewalk on the Moon.

"That's one small step for man; one giant leap for mankind."

This July 20th marks fifty years since Neil Armstrong, mission commander of NASA's Apollo 11, uttered those famous words. Much less discussed is how Project Apollo shifted lunar science into high gear, ultimately teaching scientists just how valuable the Moon could become.

During the six missions that landed humans on the lunar surface from 1969 to 1972, Apollo astronauts collected some 842 pounds of lunar rocks and dirt. Analysis of these materials has not only provided us with major clues about the origin of Earth's celestial companion 4.51 billion years ago but has also revealed that the Moon is a treasure trove. Lunar rock contains a plethora of minerals with high industrial value. So let's take a look at some prime examples of how humanity's expected return to the lunar surface in the years to come could help life here on Earth.

24/7 solar energy for Earth

During the 1970s, scientists began examining the Apollo lunar samples to study how the lunar surface could be used as a resource. One such scientist was physicist David Criswell, who has since shown that a lunar-based solar power system would actually be cheaper than Earth-based solar power implemented on a global scale. Whoa! How is that possible, given the high cost of launching people and machines into space?

The key is that it would be enormously expensive to scale up enough Earth-based solar power to supply all of humanity's electrical needs, since solar power on such a scale would require a lot of metal, glass, and cement.

But the Moon's lack of atmosphere and weather means that photovoltaic cells built by robots from lunar materials can be paper thin, in contrast with the heavy structures needed in Earth-based solar arrays. Ringing the Moon, such a system would be in perpetual sunlight, making it cheaper to collect solar power there and beam it down to Earth in the form of microwaves.

A source of helium-3 for clean, safe nuclear fusion power and other uses

The gas helium-3 is extremely rare on Earth, but plentiful on the Moon, and could be used in advanced nuclear fusion reactors. Helium-3 also has anti-terrorism and medical uses, especially in the diagnosis of various pulmonary diseases.

A place to offload industrial pollution

Since there are minerals and oxygen in lunar rocks and dust, and frozen water in certain locations, the Moon is an ideal home for factories. Thus, billionaire Jeff Bezos has proposed relocating large segments of heavy industry there, reducing the amount of pollution that is produced on Earth.

Radio astronomy without interference from Earth

Constructed on the Moon's far side (the side of the Moon that always faces away from Earth), radio telescopes advancing human knowledge of the Cosmos, and searching for signals from extraterrestrial civilizations, could operate with increased sensitivity and efficiency.

Lunar Tourism

Using the Moon as a destination for tourists may not sound helpful initially, given that only the very wealthy would be able to afford such journeys in the foreseeable future. However, the economic payoff could be substantial in terms of jobs that lunar tourism could provide on Earth. Furthermore, short of actual tourism, companies are gearing up to provide lunar entertainment to fun-seekers here on Earth in the form of mini lunar rovers that people could control from their living rooms, just for fun.

Lunar Colonies

Similar to lunar tourism, lunar colonization sounds initially like a development that would help only those people who go. But, located just three days' travel from Earth, the Moon would be an excellent place for humanity to become a multi-planet species. The Moon could be a place for colonists to get their space legs before humans put down roots on more distant locations like Mars. With hundreds or thousands of humans thriving on the Moon, Earthlings might find some level of peace of mind knowing that humanity is in a position to outlive a planetary catastrophe.