How a Deadly Fire Gave Birth to Modern Medicine

The Cocoanut Grove fire in Boston in 1942 tragically claimed 490 lives, but was the catalyst for several important medical advances.

On the evening of November 28, 1942, more than 1,000 revelers from the Boston College-Holy Cross football game jammed into the Cocoanut Grove, Boston's oldest nightclub. When a spark from faulty wiring accidentally ignited an artificial palm tree, the packed nightspot, which was designed to accommodate only about 500 people, was quickly engulfed in flames. In the ensuing panic, hundreds of people were trapped inside, with most exit doors locked. Bodies piled up by the only open entrance, jamming the exits, and 490 people ultimately died in the worst fire in the country in forty years.

"People couldn't get out," says Dr. Kenneth Marshall, a retired plastic surgeon in Boston and president of the Cocoanut Grove Memorial Committee. "It was a tragedy of mammoth proportions."

Within half an hour of the start of the blaze, the Red Cross mobilized more than five hundred volunteers in what one newspaper called a "Rehearsal for Possible Blitz." The mayor of Boston imposed martial law. More than 300 victims, many of whom subsequently died, were taken to Boston City Hospital in one hour, averaging one victim every eleven seconds, while Massachusetts General Hospital admitted 114 victims in two hours. In the hospitals, 220 victims clung precariously to life, in agonizing pain from massive burns, their bodies ravaged by infection.

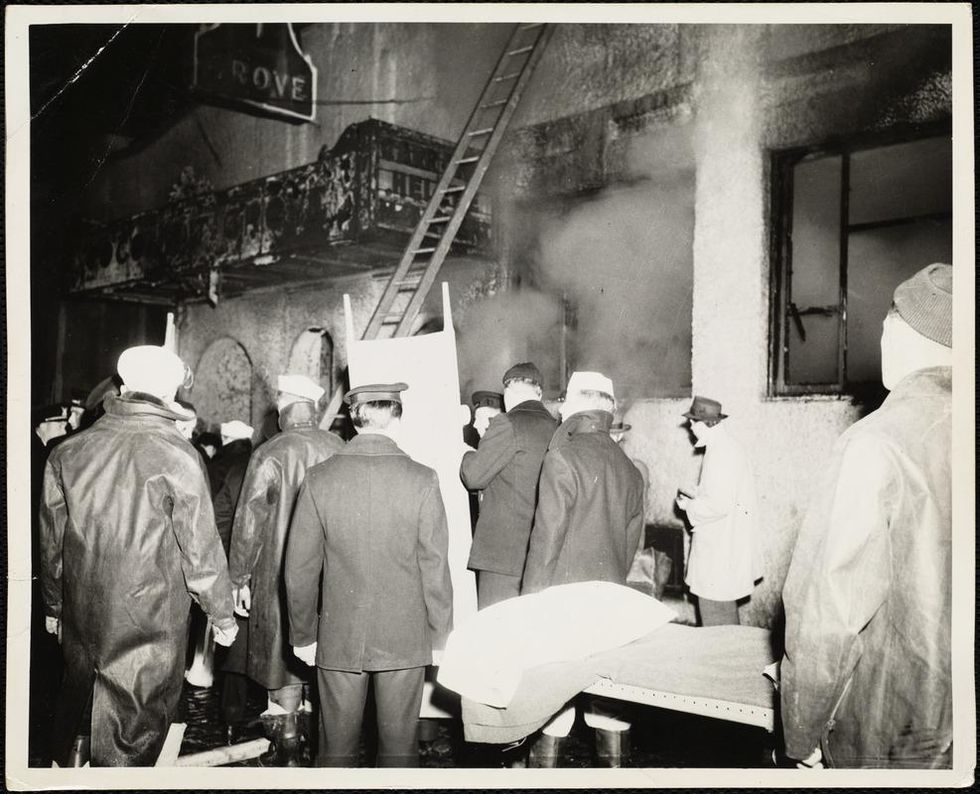

The scene of the fire.

Boston Public Library

Tragic Losses Prompted Revolutionary Leaps

But there is a silver lining: this horrific disaster prompted dramatic changes in safety regulations to prevent another catastrophe of this magnitude and led to the development of medical techniques that eventually saved millions of lives. It transformed burn care treatment and the use of plasma on burn victims, but most importantly, it introduced to the public a new wonder drug that revolutionized medicine, midwifed the birth of the modern pharmaceutical industry, and nearly doubled life expectancy, from 48 years at the turn of the 20th century to 78 years in the post-World War II years.

The devastating grief of the survivors also led to the first published study of post-traumatic stress disorder by pioneering psychiatrist Alexandra Adler, daughter of famed Viennese psychoanalyst Alfred Adler, who was a student of Freud. Dr. Adler studied the anxiety and depression that followed this catastrophe, according to the New York Times, and "later applied her findings to the treatment of World War II veterans."

Dr. Ken Marshall is intimately familiar with the lingering psychological trauma of enduring such a disaster. His mother, an Irish immigrant and a nurse in the surgical wards at Boston City Hospital, was on duty that cold Thanksgiving weekend night, and didn't come home for four days. "For years afterward, she'd wake up screaming in the middle of the night," recalls Dr. Marshall, who was four years old at the time. "Seeing all those bodies lined up in neat rows across the City Hospital's parking lot, still in their evening clothes. It was always on her mind and memories of the horrors plagued her for the rest of her life."

The sheer magnitude of casualties prompted overwhelmed physicians to try experimental new procedures that were later successfully used to treat thousands of battlefield casualties. Instead of cutting off blisters and using dyes and tannic acid to treat burned tissues, which can harden the skin, they applied gauze coated with petroleum jelly. Doctors also refined the formula for using plasma (the fluid portion of blood, and a medical technology that was just four years old) to replenish bodily fluids lost through evaporation once the protective covering of skin was gone.

"The initial insult with burns is a loss of fluids and patients can die of shock," says Dr. Ken Marshall. "The scientific progress that was made by the two institutions revolutionized fluid management and topical management of burn care forever."

Still, they could not halt the staph infections that kill most burn victims—which prompted the first civilian use of a miracle elixir that was being secretly developed in government-sponsored labs and that ultimately ushered in a new age in therapeutics. Military officials quickly realized this disaster could provide an excellent natural laboratory to test the effectiveness of this drug and see if it could be used to treat the acute traumas of combat in this unfortunate civilian approximation of battlefield conditions. At the time, the very existence of this wondrous medicine—penicillin—was a closely guarded military secret.

From Forgotten Lab Experiment to Wonder Drug

In 1928, Alexander Fleming discovered the curative powers of penicillin, which promised to eradicate infectious pathogens that killed millions every year. But the road to mass producing enough of the highly unstable mold was littered with seemingly insurmountable obstacles, and it remained a forgotten laboratory curiosity for over a decade. Fleming never gave up, however, and penicillin's eventual rescue from obscurity was a landmark in scientific history.

In 1940, a group at Oxford University, funded in part by the Rockefeller Foundation, isolated enough penicillin to test it on twenty-five mice, which had been infected with lethal doses of streptococci. Its therapeutic effects were miraculous—the untreated mice died within hours, while the treated ones played merrily in their cages, undisturbed. Subsequent tests on a handful of patients, who were brought back from the brink of death, confirmed that penicillin was indeed a wonder drug. But Britain was then being ravaged by the German Luftwaffe during the Blitz, and there were simply no resources to devote to penicillin during the Nazi onslaught.

In June of 1941, two of the Oxford researchers, Howard Florey and Ernst Chain, embarked on a clandestine mission to enlist American aid. Samples of the temperamental mold were stored in their coats. By October, the Roosevelt Administration had recruited four companies—Merck, Squibb, Pfizer and Lederle—to team up in a massive, top-secret development program. Merck, which had more experience with fermentation procedures, swiftly pulled away from the pack and every milligram they produced was zealously hoarded.

After the nightclub fire, the government ordered Merck to dispatch to Boston whatever supplies of penicillin it could spare and to refine any crude penicillin broth brewing in its fermentation vats. After Merck's staff worked in round-the-clock relays over the course of three days, a refrigerated truck containing thirty-two liters of injectable penicillin left the company's Rahway, New Jersey plant on the evening of December 1st, 1942. It was accompanied by a convoy of police escorts through four states before arriving in the pre-dawn hours at Massachusetts General Hospital. Dozens of people were rescued from near-certain death in the first public demonstration of the powers of the antibiotic, and the existence of penicillin could no longer be kept secret from inquisitive reporters and an exultant public. The next day, the Boston Globe called it "priceless" and Time magazine dubbed it a "wonder drug."

Within fourteen months, penicillin production escalated exponentially, churning out enough to save the lives of thousands of soldiers, including many from the Normandy invasion. And in October 1945, just weeks after the Japanese surrender ended World War II, Alexander Fleming, Howard Florey and Ernst Chain were awarded the Nobel Prize in medicine. But penicillin didn't just save lives—it helped build some of the most innovative medical and scientific companies in history, including Merck, Pfizer, Glaxo and Sandoz.

"Every war has given us a new medical advance," concludes Marshall. "And penicillin was the great scientific advance of World War II."

Can Biotechnology Take the Allergies Out of Cats?

From a special food to a vaccine and gene editing, new technologies may offer solutions for cat lovers with allergies.

Amy Bitterman, who teaches at Rutgers Law School in Newark, gets enormous pleasure from her three mixed-breed rescue cats, Spike, Dee, and Lucy. To manage her chronically stuffy nose, three times a week she takes Allegra D, which combines the antihistamine fexofenadine with the decongestant pseudoephedrine. Amy's dog allergy is rougher, so severe that when her sister launched a business, Pet Care By Susan, from their home in Edison, New Jersey, they knew Susan would have to move elsewhere before she could board dogs. Amy has tried to visit their brother, who owns a Labrador Retriever, taking Allegra D beforehand. But she began sneezing, and then developed watery eyes and phlegm in her chest.

"It gets harder and harder to breathe," she says.

Animal lovers have long dreamed of "hypo-allergenic" cats and dogs. Although there is no such thing to date, biotechnology is beginning to provide solutions for cat-lovers. Cats are a simpler challenge than dogs. Dog allergies involve as many as seven proteins. But up to 95 percent of people who have cat allergies (estimated at 10 to 30 percent of the population in North America and Europe) react to one protein, Fel d1. Interestingly, cats don't seem to need Fel d1. There are cats who don't produce much Fel d1 and have no known health problems.

The current technologies fight Fel d1 in ingenious ways. Nestle Purina reached the market first with a cat food, Pro Plan LiveClear, launched in the U.S. a year and a half ago. It contains Fel d1 antibodies from eggs that in effect neutralize the protein. HypoCat, a vaccine for cats, induces them to create neutralizing antibodies to their own Fel d1. It may be available in the United States by 2024, says Gary Jennings, chief executive officer of Saiba Animal Health, a University of Zurich spin-off. Another approach, using the gene-editing tool CRISPR to create a medication that would splice out Fel d1 genes in particular tissues, is the furthest from fruition.

Customer demand is high. "We already have a steady stream of allergic cat owners contacting us desperate to have access to the vaccine or participate in the testing program," Jennings said. "There is a major unmet medical need."

More than a third of Americans own a cat (while half own a dog), and pet ownership is rising. With more Americans living alone, pets may be just the right amount of company. But the number of Americans with asthma increases every year. Of that group, some 20 to 30 percent have pet allergies that could trigger a possibly deadly attack. It is not clear how many pets end up in shelters because their owners could no longer manage allergies. Instead, allergists commonly report that their patients won't give up a beloved companion.

No one can completely avoid Fel d1, which clings to clothing and lands everywhere cat-owners go, even in schools and new homes never occupied by cats. Myths among cat-lovers may lead them to underestimate their own level of risk. Short hair doesn't help: the length of cat hair doesn't affect the production of Fel d1. Bathing your cat will likely upset it and accomplish little. Washing cuts the amount on its skin and fur only for two days. In one study, researchers measured the Fel d1 in the ambient air in a small chamber occupied by a cat—and then washed the cat. Three hours later, with the cat in the chamber again, the measurable Fel d1 in the air was lower. But this benefit was gone after 24 hours.

For years, the best option has been shots for people that prompt protective antibodies. Bitterman received dog and cat allergy injections twice a week as a child. However, these treatments require up to 100 injections over three to five years, and, as in her case, the effect may be partial or wear off. Even if you do opt for shots, treating the cat also makes sense, since you could protect more than one allergic member of your household and any allergic visitors as well.

An Allergy-Neutralizing Diet

Cats produce much of their Fel d1 in their saliva, which then spreads to their fur when they groom, observed Nestle Purina immunologist Ebenezer Satyaraj. He realized that this made saliva, and therefore a cat's mouth, an unusually effective site for change. Hens exposed to Fel d1 produce their own antibodies, which survive in their eggs. The team coated LiveClear food with a powder form of these eggs; once in a cat's mouth, the chicken antibody binds to the Fel d1 in the cat's saliva, neutralizing it.

The results are partial: In a study with 105 cats, the level of active Fel d1 in their fur had dropped on average by 47 percent after ten weeks eating LiveClear. Cats that produced more Fel d1 at baseline had a more robust response, with a drop of up to 71 percent. A safety study found no effects on cats after six months on the diet. "Our goal was to ensure that whatever we do has no negative impact on the cat," Satyaraj said. Might a dog food that minimizes dog allergens be on the way? "There is some early work," he said.

A Vaccine

This is a year when vaccines changed the lives of billions. Saiba's vaccine, HypoCat, delivers recombinant Fel d1 and the coat from a plant virus (the Cucumber mosaic virus) without any vital genetic information. The viral coat serves as a carrier. A cat would need shots once or twice a year to produce antibodies that neutralize Fel d1.

HypoCat works much like any vaccine, with the twist that the enemy is the cat's own protein. Is that safe? Saiba's team has followed 70 cats treated with the vaccine over two years and they remain healthy. Again the active Fel d1 doesn't disappear but diminishes. The team asked 10 people with cat allergies to report on their symptoms when they pet their vaccinated cats. Eight of them could pet their cat for nearly a half hour before their symptoms began, compared with an average of 17 minutes before the vaccine.

Jennings hopes to develop a HypoDog shot with a similar approach. However, the goal would be to target four or five proteins in one vaccine, and that increases the risk of hurting the dog. In the meantime, allergic dog-lovers considering an expensive breeder dog might think again: Independent research does not support the idea that any breed of dog produces less dander in the home. In fact, one well-designed study found that Spanish water dogs, Airedales, poodles and Labradoodles, all breeds touted as hypo-allergenic, had significantly more of the most common allergen on their coat than an ordinary Lab and the control group.

Gene Editing

One day you might be able to bring your cat to the vet once a year for an injection that would modify specific tissues so they wouldn't produce Fel d1.

Nicole Brackett, a postdoctoral scientist at Virginia-based Indoor Biotechnologies, which specializes in manufacturing biologics for allergy and asthma, most recently has used CRISPR to identify Fel d1 genetic sequences in cells from 50 domestic cats and 24 exotic ones. She learned that the sequences vary substantially from one cat to the next. This discovery, she says, backs up the observations that Fel d1 doesn't have a vital purpose.

The next step will be a CRISPR knockout of the relevant genes in cells from feline salivary glands, a prime source of Fel d1. Although the company is considering using CRISPR to edit the genes in a cat embryo and possibly produce a Fel d1-free cat, designer cats won't be its ultimate product. Instead, the company aims to produce injections that could treat any cat.

Reducing pet allergens at home could have a compound benefit, Indoor Biotechnologies founder Martin Chapman, an immunologist, notes: "When you dampen down the response to one allergen, you could also dampen it down to multiple allergens." As allergies become more common around the world, that's especially good news.

The large genetic studies underlying certain disease risk tests have primarily been done in populations of European ancestry, limiting their accuracy.

Earlier this year, California-based Ambry Genetics announced that it was discontinuing a test meant to estimate a person's risk of developing prostate or breast cancer. The test looks for variations in a person's DNA that are known to be associated with these cancers.

Known as a polygenic risk score, this type of test adds up the effects of variants in many genes — often in the dozens or hundreds — and calculates a person's risk of developing a particular health condition compared to other people. In this way, polygenic risk scores are different from traditional genetic tests that look for mutations in single genes, such as BRCA1 and BRCA2, which raise the risk of breast cancer.

Traditional genetic tests look for mutations that are relatively rare in the general population but have a large impact on a person's disease risk, like BRCA1 and BRCA2. By contrast, polygenic risk scores scan for more common genetic variants that, on their own, have a small effect on risk. Added together, however, they can raise a person's risk for developing disease.
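The adding-up described above can be sketched in a few lines of Python. This is a minimal illustration only: the variant names and effect sizes below are invented for the example, and it assumes each variant's weight is a log odds ratio multiplied by the number of risk alleles a person carries. Real scores draw their weights from large genetic studies and typically cover dozens or hundreds of variants.

```python
import math

# Hypothetical per-variant effect sizes (log odds ratios), invented for
# illustration. Each one is small on its own.
EFFECT_SIZES = {
    "variant_a": 0.10,
    "variant_b": 0.05,
    "variant_c": -0.03,
}


def polygenic_score(genotype):
    """Sum each variant's effect size times its risk-allele count (0, 1 or 2)."""
    return sum(EFFECT_SIZES[v] * genotype.get(v, 0) for v in EFFECT_SIZES)


# A hypothetical person carrying two copies of variant_a and one of variant_b.
person = {"variant_a": 2, "variant_b": 1, "variant_c": 0}
score = polygenic_score(person)

# Under the log-odds assumption, exponentiating gives the odds relative to
# someone who carries none of the risk alleles.
relative_odds = math.exp(score)
print(f"score={score:.2f}, relative odds={relative_odds:.2f}")
```

No single weight here moves the result much; it is the sum across variants that shifts a person's estimated risk, which is why the quality of the underlying weights matters so much.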

These scores could become a part of routine healthcare in the next few years. Researchers are developing polygenic risk scores for cancer, heart disease, diabetes and even depression. Before they can be rolled out widely, they'll have to overcome a key limitation: racial bias.

"The issue with these polygenic risk scores is that the scientific studies which they're based on have primarily been done in individuals of European ancestry," says Sara Riordan, president of the National Society of Genetic Counselors. These scores are calculated by comparing the genetic data of people with and without a particular disease. To make these scores accurate, researchers need genetic data from tens or hundreds of thousands of people.

A 2018 analysis found that 78% of participants included in such large genetic studies, known as genome-wide association studies, were of European descent. That's a problem, because certain disease-associated genetic variants don't appear equally across different racial and ethnic groups. For example, a particular variant in the TTR gene, known as V122I, occurs more frequently in people of African descent. In recent years, the variant has been found in 3 to 4 percent of individuals of African ancestry in the United States. Mutations in this gene can cause protein to build up in the heart, leading to a higher risk of heart failure. A polygenic risk score for heart disease based on genetic data from mostly white people likely wouldn't give accurate risk information to African Americans.

Accuracy in genetic testing matters because such polygenic risk scores could help patients and their doctors make better decisions about their healthcare.

For instance, if a polygenic risk score determines that a woman is at higher-than-average risk of breast cancer, her doctor might recommend more frequent mammograms — X-rays that take a picture of the breast. Or, if a risk score reveals that a patient is more predisposed to heart attack, a doctor might prescribe preventive statins, a type of cholesterol-lowering drug.

"Let's be clear, these are not diagnostic tools," says Alicia Martin, a population and statistical geneticist at the Broad Institute of MIT and Harvard. "We can't use a polygenic score to say you will or will not get breast cancer or have a heart attack."

But combining a patient's polygenic risk score with other factors that affect disease risk, such as age, weight, medication use or smoking status, may provide a better sense of how likely they are to develop a specific health condition than considering any one risk factor on its own. The accuracy of polygenic risk scores becomes even more important when considering that these scores may be used to guide medication prescription or help patients make decisions about preventive surgery, such as a mastectomy.

In a study published in September, researchers used results from large genetics studies of people with European ancestry and data from the UK Biobank to calculate polygenic risk scores for breast and prostate cancer for people with African, East Asian, European and South Asian ancestry. They found that they could identify individuals at higher risk of breast and prostate cancer when they scaled the risk scores within each group, but the authors say this is only a temporary solution. Recruiting more diverse participants for genetics studies will lead to better cancer detection and prevention, they conclude.
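One way to picture scaling scores within each group is shown below. The article does not describe the study's exact method, so this sketch assumes a simple approach: converting each raw score to a z-score using the mean and standard deviation of that person's own ancestry group. The function name, group labels and numbers are all hypothetical.

```python
from statistics import mean, pstdev


def scale_within_groups(raw_scores, groups):
    """Return z-scores computed separately within each ancestry group."""
    by_group = {}
    for score, group in zip(raw_scores, groups):
        by_group.setdefault(group, []).append(score)

    # Mean and population standard deviation for each group.
    stats = {g: (mean(v), pstdev(v)) for g, v in by_group.items()}

    scaled = []
    for score, group in zip(raw_scores, groups):
        mu, sigma = stats[group]
        # Guard against a group whose scores are all identical.
        scaled.append((score - mu) / sigma if sigma > 0 else 0.0)
    return scaled


# Invented data: group B's raw scores sit far above group A's, even though
# the spread of scores inside each group is the same.
raw = [1.0, 2.0, 3.0, 11.0, 12.0, 13.0]
groups = ["A", "A", "A", "B", "B", "B"]
scaled = scale_within_groups(raw, groups)
print([round(z, 2) for z in scaled])
```

After scaling, a person is compared only with others of similar ancestry, so a group-wide shift in raw scores no longer marks everyone in that group as high risk. It does not fix weights that were estimated mostly in one population, which fits the authors' view that this is a stopgap.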

Recent efforts to do just that are expected to make these scores more accurate in the future. Until then, some genetics companies are struggling to overcome the European bias in their tests.

Acknowledging the limitations of its polygenic risk score, Ambry Genetics said in April that it would stop offering the test until it could be recalibrated. The company launched the test, known as AmbryScore, in 2018.

"After careful consideration, we have decided to discontinue AmbryScore to help reduce disparities in access to genetic testing and to stay aligned with current guidelines," the company said in an email to customers. "Due to limited data across ethnic populations, most polygenic risk scores, including AmbryScore, have not been validated for use in patients of diverse backgrounds." (The company did not make a spokesperson available for an interview for this story.)

In September 2020, the National Comprehensive Cancer Network updated its guidelines to advise against the use of polygenic risk scores in routine patient care because of "significant limitations in interpretation." The nonprofit, which represents 31 major cancer centers across the United States, said such scores could continue to be used experimentally in clinical trials, however.

Holly Pederson, director of Medical Breast Services at the Cleveland Clinic, says the realization that polygenic risk scores may not be accurate for all races and ethnicities is relatively recent. Pederson worked with Salt Lake City-based Myriad Genetics, a leading provider of genetic tests, to improve the accuracy of its polygenic risk score for breast cancer.

The company announced in August that it had recalibrated the test, called RiskScore, for women of all ancestries. Previously, Myriad did not offer its polygenic risk score to women who self-reported any ancestry other than sole European or Ashkenazi ancestry.

"Black women, while they have a similar rate of breast cancer to white women, if not lower, had twice as high of a polygenic risk score because the development and validation of the model was done in white populations," Pederson said of the old test. In other words, Myriad's old test would have shown that a Black woman had twice as high of a risk for breast cancer compared to the average woman even if she was at low or average risk.

To develop and validate the new score, Pederson and other researchers assessed data from more than 275,000 women, including more than 31,000 African American women and nearly 50,000 women of East Asian descent. They looked at 56 different genetic variants associated with ancestry and 93 associated with breast cancer. Interestingly, they found that at least 95% of the breast cancer variants were similar amongst the different ancestries.

The company says the resulting test is now more accurate for all women across the board, but Pederson cautions that it's still slightly less accurate for Black women.

"It's not only the lack of data from Black women that leads to inaccuracies and a lack of validation in these types of risk models, it's also the pure genomic diversity of Africa," she says, noting that Africa is the most genetically diverse continent on the planet. "We just need more data, not only in American Black women but in African women to really further characterize that continent."

Martin says it's problematic that such scores are most accurate for white people because they could further exacerbate health disparities in traditionally underserved groups, such as Black Americans. "If we were to set up really representative massive genetic studies, we would do a much better job at predicting genetic risk for everybody," she says.

Earlier this year, the National Institutes of Health awarded $38 million to researchers to improve the accuracy of polygenic risk scores in diverse populations. Researchers will create new genome datasets and pool information from existing ones in an effort to diversify the data that polygenic scores rely on. They plan to make these datasets available to other scientists to use.

"By having adequate representation, we can ensure that the results of a genetic test are widely applicable," Riordan says.