How thousands of first- and second-graders saved the world from a deadly disease

Although Jonas Salk has gone down in history for helping rid the world (almost) of polio, his revolutionary vaccine wouldn't have been possible without the world’s largest clinical trial – and the bravery of thousands of kids.

Exactly 67 years ago, in 1955, a group of scientists and reporters gathered at the University of Michigan and waited with bated breath for Dr. Thomas Francis Jr., director of the school’s Poliomyelitis Vaccine Evaluation Center, to approach the podium. The group had gathered to hear the news that seemingly everyone in the country had been anticipating for the past two years – whether the vaccine for poliomyelitis, developed by Francis’s former student Jonas Salk, was effective in preventing the disease.

Polio, at that point, had become a household name. As the highly contagious virus swept through the United States, cities closed their schools, movie theaters, swimming pools, and even churches to stop the spread. For most people, an infection was mild or completely asymptomatic – but for an unlucky few, the virus took hold of the central nervous system and caused permanent paralysis of muscles in the legs, arms, and even the diaphragm, leaving the person unable to walk or breathe unaided. It wasn’t uncommon to hear reports of people – mostly children – who fell sick with a flu-like illness and then, just days later, were confined to an iron lung for the rest of their lives.

For two years, researchers had been testing a vaccine that would hopefully be able to stop the spread of the virus and prevent the 45,000 infections each year that were keeping the nation in a chokehold. At the podium, Francis greeted the crowd and then proceeded to change the course of human history: The vaccine, he reported, was “safe, effective, and potent.” Widespread vaccination could begin in just a few weeks. The nightmare was over.

The Road to Success

Jonas Salk, a medical researcher and virologist who developed the vaccine with his own research team, would rightfully go down in history as the man who eradicated polio. (Today, wild poliovirus circulates in just two countries, Afghanistan and Pakistan – with only 140 cases reported in 2020.) But many people today forget that the widespread vaccination campaign that effectively ended wild polio across the globe would have never been possible without the human clinical trials that preceded it.

The road to human clinical trials – and the resulting vaccine – was a long one. In 1938, President Franklin Delano Roosevelt launched the National Foundation for Infantile Paralysis in order to raise funding for research and development of a polio vaccine. (Today, we know this organization as the March of Dimes.) A polio survivor himself, Roosevelt elevated awareness and prevention into the national spotlight, even more so than it had been previously. Raising funds for a safe and effective polio vaccine became a cornerstone of his presidency – and the funds raked in by his foundation went primarily to Salk to fund his research.

The Trials Begin

Salk’s vaccine, which included an inactivated (killed) polio virus, was promising – but now the researchers needed test subjects to make global vaccination a possibility. Because the aim of the vaccine was to prevent paralytic polio, researchers decided that they had to test the vaccine in the population that was most vulnerable to paralysis – young children. And, because the rate of paralysis was so low even among children, the team required many children to collect enough data. Francis, who led the trial to evaluate Salk’s vaccine, began the process of recruiting more than one million school-aged children between the ages of six and nine in 272 counties that had the highest incidence of the disease. The participants were nicknamed the “Polio Pioneers.”

Double-blind, placebo-based trials were considered the “gold standard” of epidemiological research back in Francis’s day – and they remain the best approach we have today. These rigorous scientific studies are designed with two participant groups in mind. One group, called the test group, receives the experimental treatment (such as a vaccine); the other group, called the control, receives an inactive treatment known as a placebo. The researchers then compare the effects of the active treatment against the effects of the placebo, and both the participants and the researchers are “blinded” as to which participants receive which treatment. That way, the results aren’t tainted by any possible biases.

But the study was controversial in that only some of the individual field trials at the county and state levels had a placebo group. Researchers described this as a “calculated risk,” meaning that while there were risks involved in giving the vaccine to a large number of children, the bigger risk was the potential paralysis or death that could come with being infected by polio. In all, just 200,000 children across the US received a placebo treatment, while an additional 725,000 children acted as observational controls – in other words, researchers monitored them for signs of infection, but did not give them any treatment.

As with the COVID-19 vaccine, skepticism and misinformation around the polio vaccine abounded. But even more pervasive than the skepticism was fear. President Roosevelt, who had made many public and televised appearances in a wheelchair, served as a perpetual reminder of the consequences of polio, as an infection at age 39 had rendered him permanently unable to walk. The consequences of polio had arguably never been more visible, and parents signed up their children in droves to participate in the study and offer them protection.

The Polio Pioneer Legacy

In a little less than a year, roughly half a million children received a dose of Salk’s polio vaccine. While plenty of children were hesitant to get the shot, many former participants still remember the fear surrounding the disease. One former participant, a Polio Pioneer named Debbie LaCrosse, writes of her experience: “There was no discussion, no listing of pros and cons. No amount of concern over possible side effects or other unknowns associated with a new vaccine could compare to the terrifying threat of polio.” For their participation, each kid received a certificate – and sometimes a pin – with the words “Polio Pioneer” emblazoned across the front.

When Francis announced the results of the trial on April 12, 1955, people did more than just breathe a sigh of relief – they openly celebrated, ringing church bells and flooding into the streets to embrace. Salk, who had become the face of the vaccine at that point, was instantly hailed as a national hero – and teachers around the country had their students write him ‘thank you’ notes for his years of diligent work.

But while Salk went on to win national acclaim – even accepting the Presidential Medal of Freedom for his work on the polio vaccine in 1977 – his success was due in no small part to the children (and their parents) who took a risk in order to advance medical science. And that risk paid off: By the early 1960s, the yearly cases of polio in the United States had gone down to just 910. Where before the vaccine polio had caused around 15,000 cases of paralysis each year, only ten cases of paralysis were recorded in the entire country throughout the 1970s. And in 1979, the virus that once shuttered entire towns was declared officially eradicated in this country. Thanks to the efforts of these brave pioneers, the nation – along with the majority of the world – remains free of polio even today.

New Podcast: George Church on Woolly Mammoths, Organ Transplants, and Covid Vaccines

Dr. George Church, a leading pioneer of gene editing, updates our listeners on several of his noteworthy projects.

The "Making Sense of Science" podcast features interviews with leading medical and scientific experts about the latest developments and the big ethical and societal questions they raise. This monthly podcast is hosted by journalist Kira Peikoff, founding editor of the award-winning science outlet Leaps.org.

This month, our guest is notable genetics pioneer Dr. George Church of Harvard Medical School. Dr. Church has remarkably bold visions for how innovation in science can fundamentally transform the future of humanity and our planet. His current moonshot projects include: de-extincting some of the woolly mammoth's genes to create a hybrid Asian elephant with the cold-tolerance traits of the woolly mammoth, so that this animal can re-populate the Arctic and help stave off climate change; reversing chronic diseases of aging through gene therapy, which he and colleagues are now testing in dogs; and transplanting genetically engineered pig organs to humans to eliminate the tragically long waiting lists for organs. Hear Dr. Church discuss all this and more on our latest episode.

Kira Peikoff was the editor-in-chief of Leaps.org from 2017 to 2021. As a journalist, her work has appeared in The New York Times, Newsweek, Nautilus, Popular Mechanics, The New York Academy of Sciences, and other outlets. She is also the author of four suspense novels that explore controversial issues arising from scientific innovation: Living Proof, No Time to Die, Die Again Tomorrow, and Mother Knows Best. Peikoff holds a B.A. in Journalism from New York University and an M.S. in Bioethics from Columbia University. She lives in New Jersey with her husband and two young sons. Follow her on Twitter @KiraPeikoff.

Beyond Henrietta Lacks: How the Law Has Denied Every American Ownership Rights to Their Own Cells

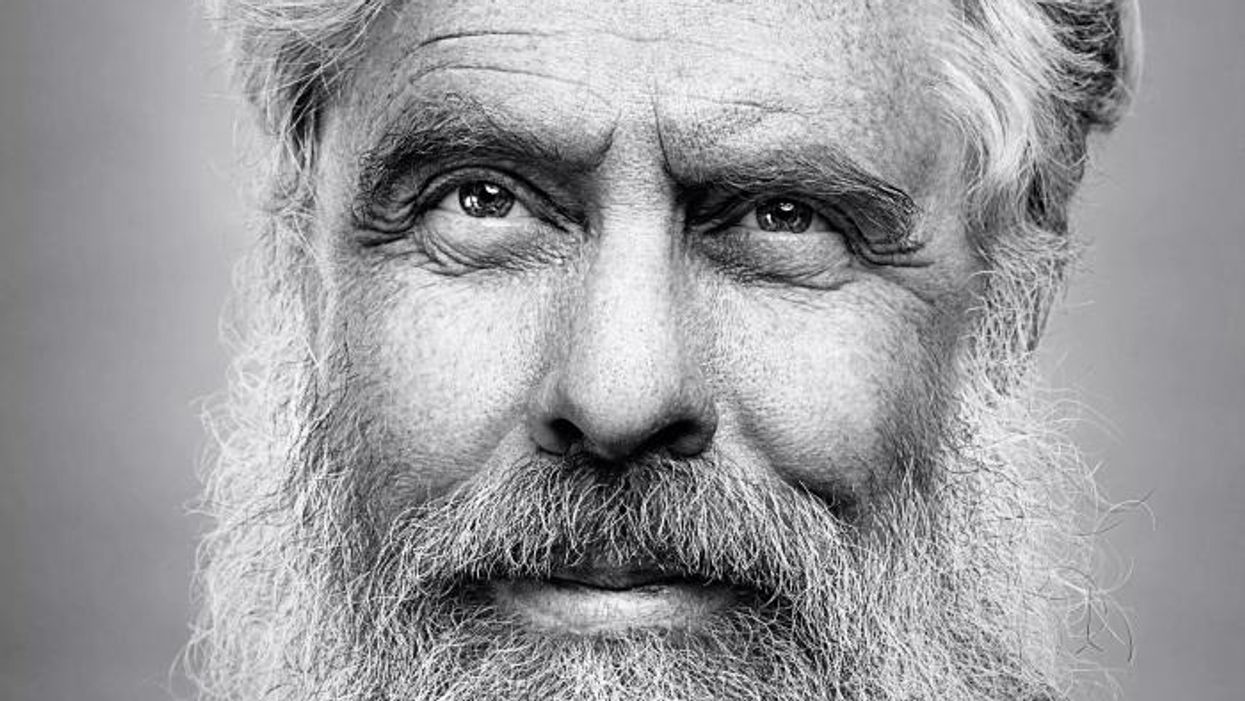

A 2017 portrait of Henrietta Lacks.

The common perception is that Henrietta Lacks was a victim of poverty and racism when in 1951 doctors took samples of her cervical cancer without her knowledge or permission and turned them into the world's first immortalized cell line, which they called HeLa. The cell line became a workhorse of biomedical research and facilitated the creation of medical treatments and cures worth untold billions of dollars. Neither Lacks nor her family ever received a penny of those riches.

But racism and poverty are not to blame for Lacks' exploitation – the reality is even worse. In fact, all patients, then and now, regardless of social or economic status, have absolutely no right to cells that are taken from their bodies. Some have called this biological slavery.

How We Got Here

The case that established this legal precedent is Moore v. Regents of the University of California.

John Moore was diagnosed with hairy-cell leukemia in 1976 and his spleen was removed as part of standard treatment at the UCLA Medical Center. On initial examination his physician, David W. Golde, had discovered some unusual qualities to Moore's cells and made plans prior to the surgery to have the tissue saved for research rather than discarded as waste. That research began almost immediately.

Even after Moore moved to Seattle, Golde kept bringing him back to Los Angeles to collect additional samples of blood and tissue, saying it was part of his treatment. When Moore asked if the work could be done in Seattle, he was told no. Golde's charade even went so far as claiming to have found a low-income subsidy that would pay for Moore's flights and put him up in a ritzy hotel in Los Angeles, when in fact Golde was paying for those out of his own pocket.

Moore became suspicious when he was asked to sign new consent forms giving up all rights to his biological samples, and he hired an attorney to look into the matter. It turned out that Golde had been lying to his patient all along; he had been collecting samples unnecessary to Moore's treatment and had turned them into a cell line that he and UCLA had patented and from which they had already collected millions of dollars. The market for the cell lines was estimated at $3 billion by 1990.

Moore felt he had been taken advantage of and filed suit to claim a share of the money that had been made off of his body. "On both sides of the case, legal experts and cultural observers cautioned that ownership of a human body was the first step on the slippery slope to 'bioslavery,'" wrote Priscilla Wald, a professor at Duke University whose career has focused on issues of medicine and culture. "Moore could be viewed as asking to commodify his own body part or be seen as the victim of the theft of his most private and inalienable information."

The case bounced around different levels of the court system with conflicting verdicts for nearly six years until the California Supreme Court ruled on July 9, 1990, that Moore had no legal rights to cells and tissue once they were removed from his body.

The court made a utilitarian argument that the cells had no value until scientists manipulated them in the lab. And it would be too burdensome for researchers to track individual donations and subsequent cell lines to assure that they had been ethically gathered and used. It would impinge on the free sharing of materials between scientists, slow research, and harm the public good that arose from such research.

"In effect, what Moore is asking us to do is impose a tort duty on scientists to investigate the consensual pedigree of each human cell sample used in research," the majority wrote. In other words, researchers don't need to ask any questions about the materials they are using.

One member of the court did not see it that way. In his dissent, Stanley Mosk raised the specter of slavery that "arises wherever scientists or industrialists claim, as defendants have here, the right to appropriate and exploit a patient's tissue for their sole economic benefit—the right, in other words, to freely mine or harvest valuable physical properties of the patient's body. … This is particularly true when, as here, the parties are not in equal bargaining positions."

Mosk also cited the appeals court decision that the majority overturned: "If this science has become for profit, then we fail to see any justification for excluding the patient from participation in those profits."

But the majority bought the arguments that Golde, UCLA, and the nascent biotechnology industry in California had made in amici briefs filed throughout the legal proceedings. The road was now cleared for them to develop products worth billions without having to worry about or share with the persons who provided the raw materials upon which their research was based.

Critical Views

Biomedical research requires a continuous and ever-growing supply of human materials for the foundation of its ongoing work. If an increasing number of patients come to feel as John Moore did, that the system is ripping them off, then they become much less likely to consent to use of their materials in future research.

Some legal and ethical scholars say that donors should be able to limit the types of research allowed for their tissues and researchers should be monitored to assure compliance with those agreements. For example, today it is commonplace for companies to certify that their clothing is not made by child labor, that their coffee is grown under fair trade conditions, and that food labeled kosher is properly handled. Should we ask any less of our pharmaceuticals than that the donors whose cells made such products possible have been treated honestly and fairly, and share in the financial bounty that comes from such drugs?

Protection of individual rights is a hallmark of the American legal system, says Lisa Ikemoto, a law professor at the University of California, Davis. "Putting the needs of a generalized public over the interests of a few often rests on devaluation of the humanity of the few," she writes in a reimagined version of the Moore decision that upholds Moore's property claims to his excised cells. The commentary is in a chapter of a forthcoming book in the Feminist Judgment series, where authors may only use legal precedent in effect at the time of the original decision.

"Why is the law willing to confer property rights upon some while denying the same rights to others?" asks Radhika Rao, a professor at the University of California, Hastings College of the Law. "The researchers who invest intellectual capital and the companies and universities that invest financial capital are permitted to reap profits from human research, so why not those who provide the human capital in the form of their own bodies?" It might be seen as a kind of sweat equity where cash-strapped patients make a valuable in-kind contribution to the enterprise.

The Moore court also made a big deal about inhibiting the free exchange of samples between scientists. Such free exchange has become far less common in the more than three decades since the decision was handed down. Ironically, this decision, along with other laws and regulations, has since strengthened the power of patents in biomedicine and by doing so has increased secrecy and limited sharing.

"Although the research community theoretically endorses the sharing of research, in reality sharing is commonly compromised by the aggressive pursuit and defense of patents and by the use of licensing fees that hinder collaboration and development," Robert D. Truog, a Harvard Medical School ethicist, and colleagues wrote in 2012 in the journal Science. "We believe that measures are required to ensure that patients not bear all of the altruistic burden of promoting medical research."

Additionally, the increased complexity of research and the need for exacting standardization of materials have given rise to an industry that supplies certified chemical reagents, cell lines, and whole animals bred to have specific genetic traits to meet research needs. This has been more efficient for research and has helped to ensure that results from one lab can be reproduced in another.

The Court's rationale of fostering collaboration and free exchange of materials between researchers also has been undercut by the changing structure of that research. Big pharma has shrunk the size of its own research labs and over the last decade has worked out cooperative agreements with major research universities where the companies contribute to the research budget and in return have first dibs on any findings (and sometimes a share of patent rights) that come out of those university labs. These arrangements have had a chilling effect on the exchange of materials between universities.

Perhaps tracking cell line donors and use restrictions on those donations might have been burdensome to researchers when Moore was being litigated. Some labs probably still kept their cell line records on 3x5 index cards, computers were primarily expensive room-size behemoths with limited capacity, the internet barely existed, and there was no cloud storage.

But that was the dawn of a new technological age and standards have changed. Now cell lines are kept in state-of-the-art sub-zero storage units, tagged with the source, type of tissue, date gathered, and often other information. Adding a few more data fields and contacting the donor if and when appropriate does not seem likely to disrupt the research process, as the court asserted it would.

Forging the Future

"U.S. universities are awarded almost 3,000 patents each year. They earn more than $2 billion each year from patent royalties. Sharing a modest portion of these profits is a novel method for creating a greater sense of fairness in research relationships that we think is worth exploring," wrote Mark Yarborough, a bioethicist at the University of California Davis Medical School, and colleagues. That was penned nearly a decade ago and those numbers have only grown.

The Michigan BioTrust for Health might serve as a useful model in tackling some of these issues. Dried blood spots have been collected from all newborns for half a century to be tested for certain genetic diseases, but controversy arose when the huge archive of dried spots was used for other research projects. As a result, the state created a nonprofit organization to in essence become a biobank and manage access to these spots only for specific purposes, and also to share any revenue that might arise from that research.

"If there can be no property in a whole living person, does it stand to reason that there can be no property in any part of a living person? If there were, can it be said that this could equate to some sort of 'biological slavery'?" Irish ethicist Asim A. Sheikh wrote several years ago. "Any amount of effort spent pondering the issue of 'ownership' in human biological materials with existing law leaves more questions than answers."

Perhaps the biggest question will arise when – not if, but when – it becomes possible to clone a human being. Would a human clone be a legal person or the property of those who created it? Current legal precedent points to it being the latter.

Today, October 4, is the 70th anniversary of Henrietta Lacks' death from cancer. Over those decades her immortalized cells have helped make possible miraculous advances in medicine and have had a role in generating billions of dollars in profits. Surviving family members have spoken many times about seeking a share of those profits in the name of social justice; they intend to file lawsuits today. Such cases will succeed or fail on their own merits. But regardless of their specific outcomes, one can hope that they spark a larger public discussion of the role of patients in the biomedical research enterprise and lead to establishing a legal and financial claim for their contributions toward the next generation of biomedical research.