Regenerative medicine has come a long way, baby

After a cloned baby sheep, what started as one of the most controversial areas in medicine is now promising to transform it.

The field of regenerative medicine had a shaky start. In 1997, when news spread about Dolly the sheep, the first mammal cloned from an adult cell, a raucous debate ensued. Scary headlines and organized opposition groups put pressure on government leaders, who responded by tightening restrictions on this type of research.

Fast forward to today, and regenerative medicine, which focuses on making unhealthy tissues and organs healthy again, is rewriting the code for healing many disorders, though it’s still young enough to be considered nascent. What started as one of the most controversial areas in medicine is now promising to transform it.

Progress in the lab has addressed previous concerns. Back in the early 2000s, some of the most fervent controversy centered around somatic cell nuclear transfer (SCNT), the process used by scientists to produce Dolly. There was fear that this technique could be used in humans, with possibly adverse effects, considering the many medical problems of the animals who had been cloned.

But today, scientists have discovered better approaches with fewer risks. Pioneers in the field are embracing new possibilities for cellular reprogramming, 3D organ printing, AI collaboration, and even growing organs in space. These advances could bring a new era of personalized medicine for longer, healthier lives, while potentially sparking new controversies.

Engineering tissues from amniotic fluids

Work in regenerative medicine seeks to reverse damage to organs and tissues by culling, modifying and replacing cells in the human body. Scientists in this field reach deep into the mechanisms of diseases and the breakdowns of cells, the little workhorses that perform all life-giving processes. If cells can’t do their jobs, they take whole organs and systems down with them. Regenerative medicine seeks to harness the power of healthy cells derived from stem cells to do the work that can literally restore patients to a state of health—by giving them healthy, functioning tissues and organs.

Modern-day regenerative medicine takes its origin from the 1998 isolation of human embryonic stem cells, first achieved by John Gearhart at Johns Hopkins University. Gearhart isolated the pluripotent cells that can differentiate into virtually every kind of cell in the human body. There was a raging controversy about the use of these cells in research because at that time they came exclusively from early-stage embryos or fetal tissue.

Back then, the highly controversial SCNT cells were the only way to produce genetically matched stem cells to treat patients. Since then, the picture has changed radically because other sources of highly versatile stem cells have been developed. Today, scientists can derive stem cells from amniotic fluid or reprogram patients’ skin cells back to an immature state, so they can differentiate into whatever types of cells the patient needs.

The ethical debate has been dialed back and, in the last few decades, the field has produced important innovations, spurring the development of whole new FDA processes and categories, says Anthony Atala, a bioengineer and director of the Wake Forest Institute for Regenerative Medicine. Atala and a large team of researchers have pioneered many of the first applications of 3D printed tissues and organs using cells developed from patients or those obtained from amniotic fluid or placentas.

His lab, considered to be the largest devoted to translational regenerative medicine, is currently working with 40 different engineered human tissues. Sixteen of them have been transplanted into patients. That includes skin, bladders, urethras, muscles, kidneys and vaginal organs, to name just a few.

These achievements are made possible by converging disciplines and technologies, such as cell therapies, bioengineering, gene editing, nanotechnology and 3D printing, to create living tissues and organs for human transplants. Atala is currently overseeing clinical trials to test the safety of tissues and organs engineered in the Wake Forest lab, a significant step toward FDA approval.

In the context of medical history, the field of regenerative medicine is progressing at a dizzying speed. But for those living with aggressive or chronic illnesses, it can seem that the wheels of medical progress grind slowly.

“It’s never fast enough,” Atala says. “We want to get new treatments into the clinic faster, but the reality is that you have to dot all your i’s and cross all your t’s—and rightly so, for the sake of patient safety. People want predictions, but you can never predict how much work it will take to go from conceptualization to utilization.”

As a surgeon, he also treats patients and is able to follow transplant recipients. “At the end of the day, the goal is to get these technologies into patients, and working with the patients is a very rewarding experience,” he says. Will the 3D printed organs ever outrun the shortage of donated organs? “That’s the hope,” Atala says, “but this technology won’t eliminate the need for them in our lifetime.”

New methods are out of this world

Jeanne Loring, another pioneer in the field and director of the Center for Regenerative Medicine at Scripps Research Institute in San Diego, says that investment in regenerative medicine is not only paying off, but is leading to truly personalized medicine, one of the holy grails of modern science.

This is because a patient’s own skin cells can be reprogrammed to become replacements for various malfunctioning cells causing incurable diseases, such as diabetes, heart disease, macular degeneration and Parkinson’s. If the cells are obtained from a source other than the patient, they can be rejected by the immune system. This means that patients need lifelong immunosuppression, which isn’t ideal. “With Covid,” says Loring, “I became acutely aware of the dangers of immunosuppression.” Using the patient’s own cells eliminates that problem.

Loring has a special interest in neurons, or brain cells that can be developed by manipulating cells found in the skin. She is looking to eventually treat Parkinson’s disease using them. The manipulated cells produce dopamine, the critical hormone or neurotransmitter lacking in the brains of patients. A company she founded plans to start a Phase I clinical trial using cell therapies for Parkinson’s soon, she says.

This is the culmination of many years of basic research on her part, some of it on her own cells. In 2007, Loring had her own cells reprogrammed, so there’s a cell line that carries her DNA. “They’re just like embryonic stem cells, but personal,” she said.

Loring has another special interest—sending immature cells into space to be studied at the International Space Station. There, microgravity conditions make it easier for the cells to form three-dimensional structures, which could more easily lead to the growing of whole organs. In fact, her own cells have been sent to the ISS for study. “My colleagues and I have completed four missions at the space station,” she says. “The last cells came down last August. They were my own cells reprogrammed into pluripotent cells in 2009. No one else can say that,” she adds.

Future controversies and tipping points

Although the original SCNT debate has calmed down, more controversies may arise, Loring thinks.

One of them could concern growing synthetic embryos. The embryos are ultimately derived from embryonic stem cells, and it’s not clear to what stage these embryos can or will be grown in an artificial uterus—another recent invention. The science, so far done only in animals, is still new and has not been widely publicized but, eventually, “People will notice the production of synthetic embryos and growing them in an artificial uterus,” Loring says. It’s likely to incite many of the same reactions as the use of embryonic stem cells.

Bernard Siegel, the founder and director of the Regenerative Medicine Foundation and executive director of the newly formed Healthspan Action Coalition (HSAC), believes that stem cell science is rapidly approaching a tipping point and changing all of medical science. (For disclosure, I do consulting work for HSAC.) Siegel says that regenerative medicine has become a new pillar of medicine that has recently been fast-tracked by new technology.

Artificial intelligence is speeding up discoveries and the convergence of key disciplines, as demonstrated in Atala’s lab, which is creating complex new medical products that replace the body’s natural parts. Just as importantly, those parts are genetically matched and pose no risk of rejection.

These new technologies must be regulated, which can be a challenge, Siegel notes. “Cell therapies represent a challenge to the existing regulatory structure, including payment, reimbursement and infrastructure issues that 20 years ago, didn’t exist.” Now the FDA and other agencies are faced with this revolution, and they’re just beginning to adapt.

Siegel cited the 2021 FDA Modernization Act as a major step. The Act allows drug developers to use alternatives to animal testing in investigating the safety and efficacy of new compounds, loosening the agency’s requirement for extensive animal testing before a new drug can move into clinical trials. The Act is a recognition of the profound effect that cultured human cells are having on research. Being able to test drugs using actual human cells promises to be far safer and more accurate in predicting how they will act in the human body, and could accelerate drug development.

Siegel, a longtime veteran and founding father of several health advocacy organizations, believes this work helped bring cell therapies to people sooner rather than later. His new focus, through the HSAC, is to leverage regenerative medicine into extending not just the lifespan but the worldwide human healthspan, the period of life lived with health and vigor. “When you look at the HSAC as a tree,” asks Siegel, “what are the roots of that tree? Stem cell science and the huge ecosystem it has created.” The study of human aging is another root of the tree, one with the potential to lengthen healthspans.

The revolutionary science underlying the extension of the healthspan needs to be available to the whole world, Siegel says. “We need to take all these roots and come up with a way to improve the life of all mankind,” he says. “Everyone should be able to take advantage of this promising new world.”

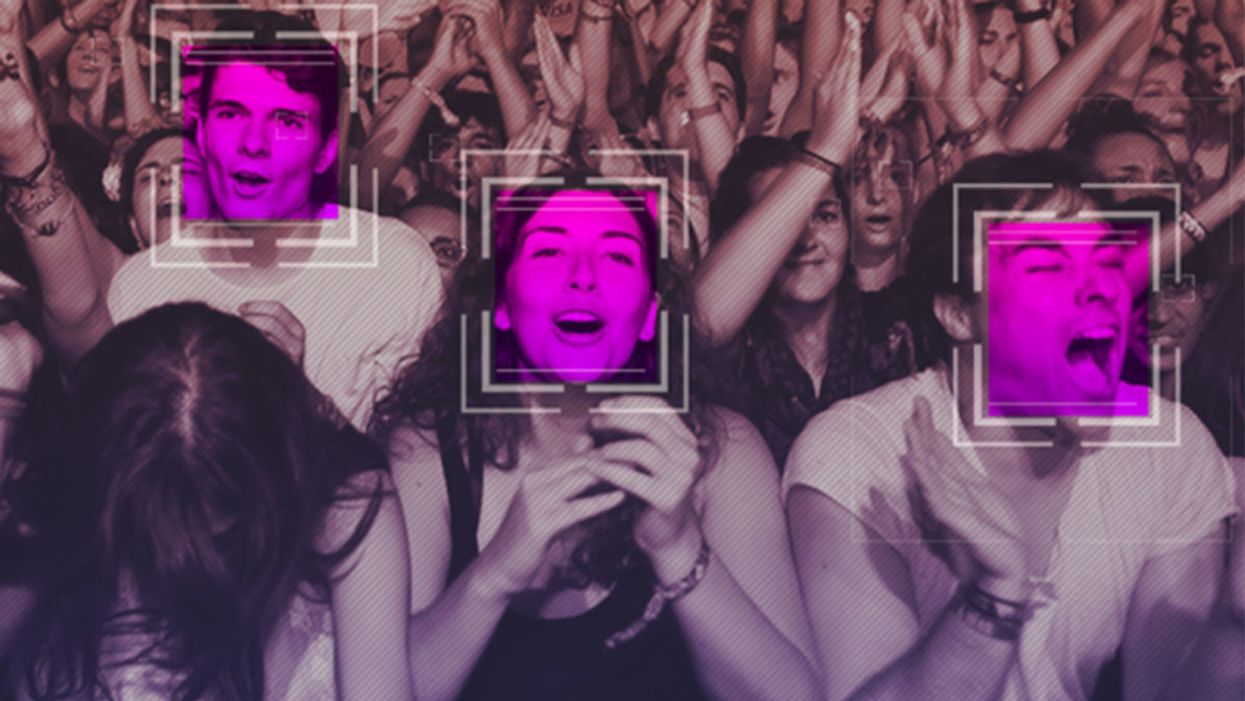

The Case for an Outright Ban on Facial Recognition Technology

Some experts worry that facial recognition technology is a dangerous enough threat to our basic rights that it should be entirely banned from police and government use.

[Editor's Note: This essay is in response to our current Big Question, which we posed to experts with different perspectives: "Do you think the use of facial recognition technology by the police or government should be banned? If so, why? If not, what limits, if any, should be placed on its use?"]

In a surprise appearance at the tail end of Amazon's much-hyped annual product event last month, CEO Jeff Bezos casually told reporters that his company is writing its own facial recognition legislation.

It seems that when you're the wealthiest human alive, there's nothing strange about your company, the largest in the world to profit from the spread of face surveillance technology, writing the rules that govern it.

But if lawmakers and advocates fall into Silicon Valley's trap of "regulating" facial recognition and other forms of invasive biometric surveillance, that's exactly what will happen.

Industry-friendly regulations won't fix the dangers inherent in widespread use of face scanning software, whether it's deployed by governments or for commercial purposes. The use of this technology in public places and for surveillance purposes should be banned outright, and its use by private companies and individuals should be severely restricted. As artificial intelligence expert Luke Stark wrote, it's dangerous enough that it should be outlawed for "almost all practical purposes."

Like biological or nuclear weapons, facial recognition poses such a profound threat to the future of humanity and our basic rights that any potential benefits are far outweighed by the inevitable harms.

We live in cities and towns with an exponentially growing number of always-on cameras, installed in everything from cars to children's toys to Amazon's police-friendly doorbells. The use of computer algorithms to analyze massive databases of footage and photographs could render human privacy extinct. It's a world where nearly everything we do, everywhere we go, everyone we associate with, and everything we buy — or look at and even think of buying — is recorded and can be tracked and analyzed at a mass scale for unimaginably awful purposes.

Biometric tracking enables the automated and pervasive monitoring of an entire population. There's ample evidence that this type of dragnet mass data collection and analysis is not useful for public safety, but it's perfect for oppression and social control.

Law enforcement defenders of facial recognition often state that the technology simply lets them do what they would be doing anyway: compare footage or photos against mug shots, driver's licenses, or other databases, but faster. And they're not wrong. But the speed and automation enabled by artificial intelligence-powered surveillance fundamentally change the impact of that surveillance on our society. Being able to do something exponentially faster, and using significantly fewer human and financial resources, alters the nature of that thing. The Fourth Amendment becomes meaningless in a world where private companies record everything we do and provide governments with easy tools to request and analyze footage from a growing, privately owned panopticon.

Tech giants like Microsoft and Amazon insist that facial recognition will be a lucrative boon for humanity, as long as there are proper safeguards in place. This disingenuous call for regulation is straight out of the same lobbying playbook that telecom companies have used to attack net neutrality and Silicon Valley has used to scuttle meaningful data privacy legislation. Companies are calling for regulation because they want their corporate lawyers and lobbyists to help write the rules of the road, to ensure those rules are friendly to their business models. They're trying to skip the debate about what role, if any, technology this uniquely dangerous should play in a free and open society. They want to rush ahead to the discussion about how we roll it out.

Facial recognition is spreading very quickly. But backlash is growing too. Several cities have already banned government entities, including police and schools, from using biometric surveillance. Others have local ordinances in the works, and there's state legislation brewing in Michigan, Massachusetts, Utah, and California. Meanwhile, there is growing bipartisan agreement in the U.S. Congress to rein in government use of facial recognition. We've also seen significant backlash to facial recognition growing in the U.K., within the European Parliament, and in Sweden, which recently banned its use in schools following a fine under the General Data Protection Regulation (GDPR).

At least two frontrunners in the 2020 presidential campaign have backed a ban on law enforcement use of facial recognition. Many of the largest music festivals in the world responded to Fight for the Future's campaign and committed to not use facial recognition technology on music fans.

There has been widespread reporting on the fact that existing facial recognition algorithms exhibit systemic racial and gender bias, and are more likely to misidentify people with darker skin, or anyone a computer does not perceive to be a white man. Critics are right to highlight this algorithmic bias. Facial recognition is being used by law enforcement in cities like Detroit right now, and the racial bias baked into that software is doing harm. It's exacerbating existing forms of racial profiling and discrimination in everything from public housing to the criminal justice system.

But the companies that make facial recognition assure us this bias is a bug, not a feature, and that they can fix it. And they might be right. Face scanning algorithms for many purposes will improve over time. But facial recognition becoming more accurate doesn't make it less of a threat to human rights. This technology is dangerous when it's broken, but at a mass scale, it's even more dangerous when it works. And it will still disproportionately harm our society's most vulnerable members.

We need spaces that are free from government and societal intrusion in order to advance as a civilization. If technology makes it so that laws can be enforced 100 percent of the time, there is no room to test whether those laws are just. If the U.S. government had ubiquitous facial recognition surveillance 50 years ago when homosexuality was still criminalized, would the LGBTQ rights movement ever have formed? In a world where private spaces don't exist, would people have felt safe enough to leave the closet and gather, build community, and form a movement? Freedom from surveillance is necessary for deviation from social norms as well as to dissent from authority, without which societal progress halts.

Persistent monitoring and policing of our behavior breeds conformity, benefits tyrants, and enriches elites. Drawing a line in the sand around tech-enhanced surveillance is the fundamental fight of this generation. Lining up to get our faces scanned to participate in society doesn't just threaten our privacy, it threatens our humanity, and our ability to be ourselves.

Scientists Are Building an “AccuWeather” for Germs to Predict Your Risk of Getting the Flu

A future app may help you avoid getting the flu by informing you of your local risk on a given day.

Applied mathematician Sara del Valle works at the U.S.'s foremost nuclear weapons lab: Los Alamos. Once colloquially called Atomic City, it's a hidden place 45 minutes into the mountains northwest of Santa Fe. Here, engineers developed the first atomic bomb.

Today, Los Alamos is still a small science town, though no longer a secret, nor in the business of building new bombs. Instead, it's tasked with, among other things, keeping the stockpile of nuclear weapons safe and stable: not exploding when they're not supposed to (yes, please) and exploding if someone presses that red button (please, no).

Del Valle, though, doesn't work on any of that. Los Alamos is also interested in other kinds of booms—like the explosion of a contagious disease that could take down a city. Predicting (and, ideally, preventing) such epidemics is del Valle's passion. She hopes to develop an app that's like AccuWeather for germs: It would tell you your chance of getting the flu, or dengue or Zika, in your city on a given day. And like AccuWeather, it could help people alter their behavior to live better lives, whether that means staying home on a snowy morning or washing their hands on a sickness-heavy commute.

Sara del Valle of Los Alamos is working to predict and prevent epidemics using data and machine learning.

Since the beginning of del Valle's career, she's been driven by one thing: using data and predictions to help people behave practically around pathogens. As a kid, she'd always been good at math, but when she found out she could use it to capture the tentacular spread of disease, and not just manipulate abstractions, she was hooked.

When she made her way to Los Alamos, she started looking at what people were doing during outbreaks. Using social media like Twitter, Google search data, and Wikipedia, the team started to sift for trends. Were people talking about hygiene, like hand-washing? Or about being sick? Were they Googling information about mosquitoes? Searching Wikipedia for symptoms? And how did those things correlate with the spread of disease?

It was a new, faster way to think about how pathogens propagate in the real world. Usually, there's a 10- to 14-day lag in the U.S. between when doctors tap numbers into spreadsheets and when that information becomes public. By then, the world has moved on, and so has the disease—to other villages, other victims.

"We found there was a correlation between actual flu incidents in a community and the number of searches online and the number of tweets online," says del Valle. That was when she first let herself dream about a real-time forecast, not a 10-days-later backcast. Del Valle's group—computer scientists, mathematicians, statisticians, economists, public health professionals, epidemiologists, satellite analysis experts—has continued to work on the problem ever since their first Twitter parsing, in 2011.

They've had their share of outbreaks to track. Looking back at the 2009 swine flu pandemic, they saw people buying face masks and paying attention to the cleanliness of their hands. "People were talking about whether or not they needed to cancel their vacation," she says, and also whether pork products—which have nothing to do with swine flu—were safe to buy.

They watched internet conversations during the measles outbreak in California. "There's a lot of online discussion about anti-vax sentiment, and people trying to convince people to vaccinate children and vice versa," she says.

Today, they work on predicting the spread of Zika, chikungunya, and dengue fever, as well as the plain old flu. And according to the CDC, the latter effort is going well.

Since 2015, the CDC has run the Epidemic Prediction Initiative, a competition in which teams like del Valle's submit weekly predictions of how raging the flu will be in particular locations, and occasionally predictions for other ailments. Michael Johannson is co-founder and leader of the program, which began with the Dengue Forecasting Project. Its goal, he says, was to predict when dengue cases would blow up, when previously an area just had a low-level baseline of sick people. "You'll get this massive epidemic where all of a sudden, instead of 3,000 to 4,000 cases, you have 20,000 cases," he says. "They kind of come out of nowhere."

But the "kind of" is key: The outbreaks surely come out of somewhere and, if scientists applied research and data the right way, they could forecast the upswing and perhaps dodge a bomb before it hit big-time. Questions about how big, when, and where are also key to the flu.

A big part of these projects is the CDC giving the right researchers access to the right information, and the structure both to forecast useful public-health outcomes and to compare how well the models are doing. The extra information has been great for the Los Alamos effort. "We don't have to call departments and beg for data," says del Valle.

When data isn't available, "proxies"—things like symptom searches, tweets about empty offices, satellite images showing a green, wet, mosquito-friendly landscape—are helpful: You don't have to rely on anyone's health department.

At the latest meeting with all the prediction groups, del Valle's flu models took first and second place. But del Valle wants more than weekly numbers on a government website; she wants that weather-app-inspired fortune-teller, incorporating the many diseases you could get today, standing right where you are. "That's our dream," she says.

This plot shows the correlations between the online data stream, from Wikipedia, and various infectious diseases in different countries. The results of del Valle's predictive models are shown in brown, while the actual number of cases or illness rates are shown in blue.

(Courtesy del Valle)

The goal isn't to turn you into a germophobic agoraphobe. It's to make you more aware when you do go out. "If you know it's going to rain today, you're more likely to bring an umbrella," del Valle says. "When you go on vacation, you always look at the weather and make sure you bring the appropriate clothing. If you do the same thing for diseases, you think, 'There's Zika spreading in Sao Paulo, so maybe I should bring even more mosquito repellent and bring more long sleeves and pants.'"

They're not there yet (don't hold your breath, but do stop touching your mouth). She estimates it's at least a decade away, but advances in machine learning could accelerate that hypothetical timeline. "We're doing baby steps," says del Valle, starting with the flu in the U.S., dengue in Brazil, and other efforts in Colombia, Ecuador, and Canada. "Going from there to forecasting all diseases around the globe is a long way," she says.

But even AccuWeather started small: One man began predicting weather for a utility company, then helping ski resorts optimize their snowmaking. His influence snowballed, and now private forecasting apps, including AccuWeather's, populate phones across the planet. The company's progression hasn't been without controversy—privacy incursions, inaccuracy of long-term forecasts, fights with the government—but it has continued, for better and for worse.

Disease apps, perhaps spun out of a small, unlikely team at a nuclear-weapons lab, could grow and breed in a similar way. And both the controversies and public-health benefits that may someday spin out of them lie in the future, impossible to predict with certainty.