Researchers Behaving Badly: Known Frauds Are "the Tip of the Iceberg"

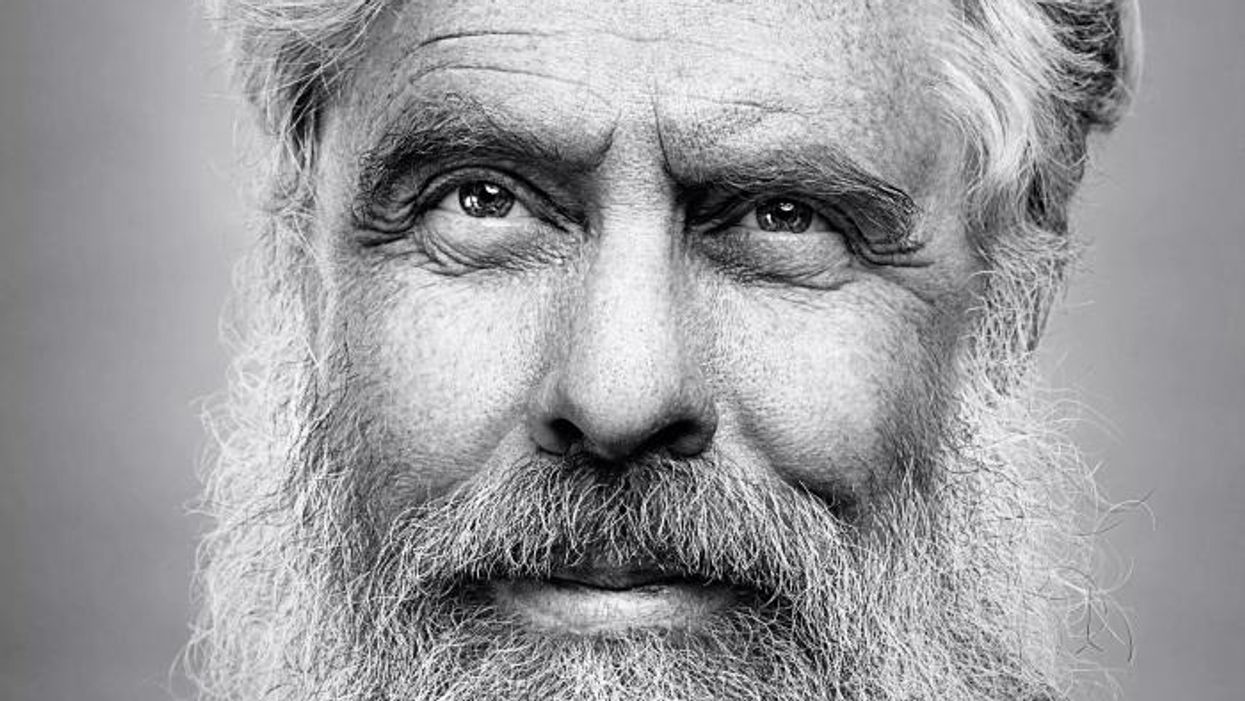

Disgraced stem cell researcher and celebrity surgeon Paolo Macchiarini.

Last week, the whistleblowers in the Paolo Macchiarini affair at Sweden's Karolinska Institutet went on the record here to detail the retaliation they suffered for trying to expose a star surgeon's appalling research misconduct.

The whistleblowers had discovered that in six published papers, Macchiarini falsified data, lied about the condition of patients and circumvented ethical approvals. As a result, multiple patients suffered and died. But Karolinska turned a blind eye for years.

Scientific fraud of the type committed by Macchiarini is rare, but studies suggest that it's on the rise. Just this week, for example, Retraction Watch and STAT together broke the news that a Harvard Medical School cardiologist and stem cell researcher, Piero Anversa, falsified data in a whopping 31 papers, which now have to be retracted. Anversa had claimed that he could regenerate heart muscle by injecting bone marrow cells into damaged hearts, a result that no one has been able to duplicate.

A 2009 study published in the journal PLOS ONE found that about two percent of scientists admitted to committing fabrication, falsification or plagiarism in their work. That's a small number, but up to one third of scientists admit to committing "questionable research practices" that fall into a gray area between rigorous accuracy and outright fraud.

These dubious practices may include misrepresentations, research bias, and inaccurate interpretations of data. One common questionable research practice entails formulating a hypothesis after the research is done in order to claim a successful premise. Another highly questionable practice that can shape research is ghost-authoring by representatives of the pharmaceutical industry and other for-profit fields. Still another is gifting co-authorship to unqualified but powerful individuals who can advance one's career. Such practices can unfairly bolster a scientist's reputation and increase the likelihood of getting the work published.

The above percentages represent what scientists admit to doing themselves; when they evaluate the practices of their colleagues, the numbers jump dramatically. In a 2012 study published in the Journal of Research in Medical Sciences, researchers estimated that 14 percent of other scientists commit serious misconduct, while up to 72 percent engage in questionable practices. While these are only estimates, the problem is clearly not one of just a few bad apples.

In the PLOS study, Daniele Fanelli says that increasing evidence suggests the known frauds are "just the 'tip of the iceberg,' and that many cases are never discovered" because fraud is extremely hard to detect.

In addition, it's likely that most cases of scientific misconduct go unreported because of the high price of whistleblowing. Those in the Macchiarini case showed extraordinary persistence in their multi-year campaign to stop his deadly trachea implants, while suffering serious damage to their careers. Such heroic efforts to unmask fraud are probably rare.

To make matters worse, there are numerous players in the scientific world who may be complicit in either committing misconduct or covering it up. These include not only primary researchers but co-authors, institutional executives, journal editors, and industry leaders. Essentially everyone wants to be associated with big breakthroughs, and they may overlook scientifically shaky foundations when a major advance is claimed.

Another part of the problem is that it's rare for students in science and medicine to receive an education in ethics. And studies have shown that older, more experienced and possibly jaded researchers are more likely to fudge results than their younger, more idealistic colleagues.

So, given the steep price that individuals and institutions pay for scientific misconduct, what compels them to go down that road in the first place? According to the JRMS study, individuals face intense pressures to publish and to attract grant money in order to secure teaching positions at universities. Once they have acquired positions, the pressure is on to keep the grants and publishing credits coming in order to obtain tenure, be appointed to positions on boards, and recruit flocks of graduate students to assist in research. And not to be underestimated is the human ego.

Paolo Macchiarini is an especially vivid example of a scientist seeking not only fortune, but fame. He liberally (and falsely) claimed powerful politicians and celebrities, even the Pope, as patients or admirers. He may be an extreme example, but we live in an age of celebrity scientists who bring huge amounts of grant money and high prestige to the institutions that employ them.

The media plays a significant role in both glorifying stars and unmasking frauds. In the Macchiarini scandal, the media first lifted him up, as in NBC's laudatory documentary, "A Leap of Faith," which painted him as a kind of miracle-worker, and then brought him down, as in the January 2016 documentary, "The Experiments," which chronicled the agonizing death of one of his patients.

Institutions can also play a crucial role in scientific fraud by putting more emphasis on the number and frequency of papers published than on their quality. The whole course of a scientist's career is profoundly affected by something called the h-index. This is a number based on both how many papers a scientist has published and how many times those papers are cited by other researchers. Raising one's h-index becomes an overriding goal, sometimes eclipsing the kind of patient, time-consuming research that leads to true breakthroughs based on reliable results.
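For readers unfamiliar with the metric, the h-index is conventionally defined as the largest number h such that a researcher has h papers that have each been cited at least h times. The short Python sketch below follows that standard definition. The function name and the citation counts are made up for illustration and do not describe any real researcher.

```python
def h_index(citations):
    """Return the largest h such that h papers have at least h citations each."""
    # Rank papers from most cited to least cited.
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        # The paper in position `rank` needs at least `rank` citations.
        if count >= rank:
            h = rank
        else:
            break
    return h


# Hypothetical citation counts for two imaginary researchers.
few_strong_papers = [120, 95, 60]
many_modest_papers = [9, 8, 7, 6, 5, 5, 4, 3, 2, 1]

print(h_index(few_strong_papers))   # 3
print(h_index(many_modest_papers))  # 5
```

With these invented numbers, the researcher with ten modestly cited papers scores higher than the one with three heavily cited papers, which suggests how the metric can reward volume.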

Universities also create a high-pressured environment that encourages scientists to cut corners. They, too, place a heavy emphasis on attracting large monetary grants and accruing fame and prestige. This can lead them, just as it led Karolinska, to protect a star scientist's sloppy or questionable research. According to Dr. Andrew Rosenberg, who is director of the Center for Science and Democracy at the U.S.-based Union of Concerned Scientists, "Karolinska defended its investment in an individual as opposed to the long-term health of the institution. People were dying, and they should have outsourced the investigation from the very beginning."

Having institutions investigate their own practices is a conflict of interest from the get-go, says Rosenberg.

Scientists, universities, and research institutions are also not immune to fads. "Hot" subjects attract grant money and confer prestige, incentivizing scientists to shift their research priorities in a direction that garners more grants. This can mean neglecting the scientist's true area of expertise and interests in favor of a subject that's more likely to attract grant money. In Macchiarini's case, he was allegedly at the forefront of the currently sexy field of regenerative medicine -- a field in which Karolinska was making a huge investment.

The relative scarcity of resources intensifies the already significant pressure on scientists. They may want to publish results rapidly, since they face many competitors for limited grant money, academic positions, students, and influence. The scarcity means that a great many researchers will fail while only a few succeed. Once again, the temptation may be to rush research and to show it in the most positive light possible, even if it means fudging or exaggerating results.

Intense competition can have a perverse effect on researchers, according to a 2007 study in the journal Science and Engineering Ethics. Not only does it place undue pressure on scientists to succeed, it frequently leads to the withholding of information from colleagues, which undermines a system in which new discoveries build on the previous work of others. Researchers may feel compelled to withhold their results because of the pressure to be the first to publish. The study's authors propose that more investment in basic research from governments could alleviate some of these competitive pressures.

Scientific journals, although they play a part in publishing flawed science, can't be expected to investigate cases of suspected fraud, says the German science blogger Leonid Schneider. Schneider's writings helped to expose the Macchiarini affair.

"They just basically wait for someone to retract problematic papers," he says.

He also notes that, while American scientists can go to the Office of Research Integrity to report misconduct, whistleblowers in Europe have no external authority to whom they can appeal to investigate cases of fraud.

"They have to go to their employer, who has a vested interest in covering up cases of misconduct," he says.

Science is increasingly international. Major studies can include collaborators from several different countries, and Schneider suggests there should be an international body, accessible to all researchers, that will investigate suspected fraud.

Ultimately, says Rosenberg, the scientific system must incorporate trust. "You trust co-authors when you write a paper, and peer reviewers at journals trust that scientists at research institutions like Karolinska are acting with integrity."

Without trust, the whole system falls apart. It's the trust of the public, an elusive asset once it has been betrayed, that science depends upon for its very existence. Scientific research is overwhelmingly financed by tax dollars, and the need for the goodwill of the public is more than an abstraction.

The Macchiarini affair raises a profound question of trust and responsibility: Should multiple co-authors be held responsible for a lead author's misconduct?

Karolinska apparently believes so. When the institution at last owned up to the scandal, it vindictively found Karl-Henrik Grinnemo, one of the whistleblowers, guilty of scientific misconduct as well. It also designated two other whistleblowers as "blameworthy" for their roles as co-authors of the papers on which Macchiarini was the lead author.

As a result, the whistleblowers' reputations and employment prospects have become collateral damage. Accusations of research misconduct can be a career killer. Research grants dry up, employment opportunities evaporate, publishing becomes next to impossible, and collaborators vanish into thin air.

Grinnemo contends that co-authors should only be responsible for their discrete contributions, not for the data supplied by others.

"Different aspects of a paper are highly specialized," he says, "and that's why you have multiple authors. You cannot go through every single bit of data because you don't understand all the parts of the article."

This is especially true in multidisciplinary, translational research, where there are sometimes 20 or more authors. "You have to trust co-authors, and if you find something wrong you have to notify all co-authors. But you couldn't go through everything or it would take years to publish an article," says Grinnemo.

Though the pressures facing scientists are very real, the problem of misconduct is not inevitable. Along with increased support from governments and industry, a change in academic culture that emphasizes quality over quantity of published studies could help encourage meritorious research.

But beyond that, trust will always play a role when numerous specialists unite to achieve a common goal: the accumulation of knowledge that will promote human health, wealth, and well-being.

[Correction: An earlier version of this story mistakenly credited The New York Times with breaking the news of the Anversa retractions, rather than Retraction Watch and STAT, which jointly published the exclusive on October 14th. The piece in the Times ran on October 15th. We regret the error.]

The large genetic studies underlying certain disease risk tests have primarily been done in populations of European ancestry, limiting their accuracy.

Earlier this year, California-based Ambry Genetics announced that it was discontinuing a test meant to estimate a person's risk of developing prostate or breast cancer. The test looks for variations in a person's DNA that are known to be associated with these cancers.

Known as a polygenic risk score, this type of test adds up the effects of variants in many genes — often in the dozens or hundreds — and calculates a person's risk of developing a particular health condition compared to other people. In this way, polygenic risk scores are different from traditional genetic tests that look for mutations in single genes, such as BRCA1 and BRCA2, which raise the risk of breast cancer.

Traditional genetic tests look for mutations that are relatively rare in the general population but have a large impact on a person's disease risk, like BRCA1 and BRCA2. By contrast, polygenic risk scores scan for more common genetic variants that, on their own, have a small effect on risk. Added together, however, they can raise a person's risk for developing disease.
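The additive idea can be sketched in a few lines of Python. This is a simplified illustration, assuming the common formulation in which a score is a weighted sum of how many copies of each risk variant a person carries. The variant names and effect weights are invented for the example and do not come from any real test.

```python
def polygenic_score(genotype, weights):
    """Weighted sum of risk-variant counts (0, 1 or 2 copies per variant)."""
    # Variants missing from the genotype are treated as 0 copies.
    return sum(weight * genotype.get(variant, 0)
               for variant, weight in weights.items())


# Hypothetical effect weights: each variant adds only a little on its own.
weights = {"variant_a": 0.05, "variant_b": 0.02, "variant_c": 0.08}

# Hypothetical person: two copies of variant_a, one of variant_b, none of variant_c.
person = {"variant_a": 2, "variant_b": 1, "variant_c": 0}

score = polygenic_score(person, weights)
print(round(score, 2))  # 0.12
```

A real score would sum over dozens or hundreds of variants, and the result only becomes meaningful when compared with the scores of other people.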

These scores could become a part of routine healthcare in the next few years. Researchers are developing polygenic risk scores for cancer, heart disease, diabetes and even depression. Before they can be rolled out widely, they'll have to overcome a key limitation: racial bias.

"The issue with these polygenic risk scores is that the scientific studies which they're based on have primarily been done in individuals of European ancestry," says Sara Riordan, president of the National Society of Genetic Counselors. These scores are calculated by comparing the genetic data of people with and without a particular disease. To make these scores accurate, researchers need genetic data from tens or hundreds of thousands of people.

A 2018 analysis found that 78% of participants included in such large genetic studies, known as genome-wide association studies, were of European descent. That's a problem, because certain disease-associated genetic variants don't appear equally across different racial and ethnic groups. For example, a particular variant in the TTR gene, known as V122I, occurs more frequently in people of African descent. In recent years, the variant has been found in 3 to 4 percent of individuals of African ancestry in the United States. Mutations in this gene can cause protein to build up in the heart, leading to a higher risk of heart failure. A polygenic risk score for heart disease based on genetic data from mostly white people likely wouldn't give accurate risk information to African Americans.

Accuracy in genetic testing matters because such polygenic risk scores could help patients and their doctors make better decisions about their healthcare.

For instance, if a polygenic risk score determines that a woman is at higher-than-average risk of breast cancer, her doctor might recommend more frequent mammograms — X-rays that take a picture of the breast. Or, if a risk score reveals that a patient is more predisposed to heart attack, a doctor might prescribe preventive statins, a type of cholesterol-lowering drug.

"Let's be clear, these are not diagnostic tools," says Alicia Martin, a population and statistical geneticist at the Broad Institute of MIT and Harvard. "We can't use a polygenic score to say you will or will not get breast cancer or have a heart attack."

But combining a patient's polygenic risk score with other factors that affect disease risk (like age, weight, medication use or smoking status) may provide a better sense of how likely they are to develop a specific health condition than considering any one risk factor on its own. The accuracy of polygenic risk scores becomes even more important when considering that these scores may be used to guide medication prescription or help patients make decisions about preventive surgery, such as a mastectomy.

In a study published in September, researchers used results from large genetics studies of people with European ancestry and data from the UK Biobank to calculate polygenic risk scores for breast and prostate cancer for people with African, East Asian, European and South Asian ancestry. They found that they could identify individuals at higher risk of breast and prostate cancer when they scaled the risk scores within each group, but the authors say this is only a temporary solution. Recruiting more diverse participants for genetics studies will lead to better cancer detection and prevention, they conclude.
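One plausible reading of scaling scores within each group is to standardize each raw score against the average and spread of that person's own ancestry group, so that "higher risk" means higher relative to similar people. The Python sketch below assumes that interpretation, which may differ from the study's actual method, and uses invented group labels and scores.

```python
from statistics import mean, pstdev


def standardize_within_groups(scores_by_group):
    """Convert raw scores to z-scores, computed separately for each group."""
    scaled = {}
    for group, scores in scores_by_group.items():
        mu = mean(scores)
        sigma = pstdev(scores)
        # If every score in a group is identical, report 0.0 for all of them.
        scaled[group] = [(s - mu) / sigma if sigma > 0 else 0.0
                         for s in scores]
    return scaled


# Hypothetical raw scores: group_2 runs uniformly higher than group_1.
raw = {"group_1": [1.0, 2.0, 3.0], "group_2": [4.0, 5.0, 6.0]}

scaled = standardize_within_groups(raw)
for group, values in scaled.items():
    print(group, [round(v, 2) for v in values])
```

In this toy example both groups end up with the same scaled values, even though one group's raw scores are uniformly higher. That is the sense in which a shift between groups stops being mistaken for elevated individual risk.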

Recent efforts to do just that are expected to make these scores more accurate in the future. Until then, some genetics companies are struggling to overcome the European bias in their tests.

Acknowledging the limitations of its polygenic risk score, Ambry Genetics said in April that it would stop offering the test until it could be recalibrated. The company launched the test, known as AmbryScore, in 2018.

"After careful consideration, we have decided to discontinue AmbryScore to help reduce disparities in access to genetic testing and to stay aligned with current guidelines," the company said in an email to customers. "Due to limited data across ethnic populations, most polygenic risk scores, including AmbryScore, have not been validated for use in patients of diverse backgrounds." (The company did not make a spokesperson available for an interview for this story.)

In September 2020, the National Comprehensive Cancer Network updated its guidelines to advise against the use of polygenic risk scores in routine patient care because of "significant limitations in interpretation." The nonprofit, which represents 31 major cancer centers across the United States, said such scores could continue to be used experimentally in clinical trials, however.

Holly Pederson, director of Medical Breast Services at the Cleveland Clinic, says the realization that polygenic risk scores may not be accurate for all races and ethnicities is relatively recent. Pederson worked with Salt Lake City-based Myriad Genetics, a leading provider of genetic tests, to improve the accuracy of its polygenic risk score for breast cancer.

The company announced in August that it had recalibrated the test, called RiskScore, for women of all ancestries. Previously, Myriad did not offer its polygenic risk score to women who self-reported any ancestry other than sole European or Ashkenazi ancestry.

"Black women, while they have a similar rate of breast cancer to white women, if not lower, had twice as high of a polygenic risk score because the development and validation of the model was done in white populations," Pederson said of the old test. In other words, Myriad's old test would have shown that a Black woman had twice as high a risk of breast cancer as the average woman, even if she was at low or average risk.

To develop and validate the new score, Pederson and other researchers assessed data from more than 275,000 women, including more than 31,000 African American women and nearly 50,000 women of East Asian descent. They looked at 56 different genetic variants associated with ancestry and 93 associated with breast cancer. Interestingly, they found that at least 95% of the breast cancer variants were similar amongst the different ancestries.

The company says the resulting test is now more accurate for all women across the board, but Pederson cautions that it's still slightly less accurate for Black women.

"It's not only the lack of data from Black women that leads to inaccuracies and a lack of validation in these types of risk models, it's also the pure genomic diversity of Africa," she says, noting that Africa is the most genetically diverse continent on the planet. "We just need more data, not only in American Black women but in African women to really further characterize that continent."

Martin says it's problematic that such scores are most accurate for white people because they could further exacerbate health disparities in traditionally underserved groups, such as Black Americans. "If we were to set up really representative massive genetic studies, we would do a much better job at predicting genetic risk for everybody," she says.

Earlier this year, the National Institutes of Health awarded $38 million to researchers to improve the accuracy of polygenic risk scores in diverse populations. Researchers will create new genome datasets and pool information from existing ones in an effort to diversify the data that polygenic scores rely on. They plan to make these datasets available to other scientists to use.

"By having adequate representation, we can ensure that the results of a genetic test are widely applicable," Riordan says.

New Podcast: George Church on Woolly Mammoths, Organ Transplants, and Covid Vaccines

Dr. George Church, a leading pioneer of gene editing, updates our listeners on several of his noteworthy projects.

The "Making Sense of Science" podcast features interviews with leading medical and scientific experts about the latest developments and the big ethical and societal questions they raise. This monthly podcast is hosted by journalist Kira Peikoff, founding editor of the award-winning science outlet Leaps.org.

This month, our guest is notable genetics pioneer Dr. George Church of Harvard Medical School. Dr. Church has remarkably bold visions for how innovation in science can fundamentally transform the future of humanity and our planet. His current moonshot projects include: de-extincting some of the woolly mammoth's genes to create a hybrid Asian elephant with the cold-tolerance traits of the woolly mammoth, so that this animal can re-populate the Arctic and help stave off climate change; reversing chronic diseases of aging through gene therapy, which he and colleagues are now testing in dogs; and transplanting genetically engineered pig organs to humans to eliminate the tragically long waiting lists for organs. Hear Dr. Church discuss all this and more on our latest episode.

Kira Peikoff was the editor-in-chief of Leaps.org from 2017 to 2021. As a journalist, her work has appeared in The New York Times, Newsweek, Nautilus, Popular Mechanics, The New York Academy of Sciences, and other outlets. She is also the author of four suspense novels that explore controversial issues arising from scientific innovation: Living Proof, No Time to Die, Die Again Tomorrow, and Mother Knows Best. Peikoff holds a B.A. in Journalism from New York University and an M.S. in Bioethics from Columbia University. She lives in New Jersey with her husband and two young sons. Follow her on Twitter @KiraPeikoff.