SCOOP: Largest Cryobank in the U.S. to Offer Ancestry Testing

Vanessa Colimorio (left) and Sharon Kochlany (right) at a farm with their four-year-old twin daughters and one-year-old son. The kids share the same sperm donor.

Sharon Kochlany and Vanessa Colimorio's four-year-old twin girls had a classic school assignment recently: make a family tree. They drew themselves and their one-year-old brother branching off from their moms, with aunts, uncles, and grandparents forking off to the sides.

What you don't see in the invisible space between Kochlany and Colimorio, however, is the sperm donor they used to conceive all three children.

To look at a family tree like this is to see in its purest form that kinship can supersede biology—the boundaries of where this family starts and stops are clear to everyone in it, in spite of a third party's genetic involvement. This kind of self-definition has always been synonymous with LGBTQ families, especially those that rely on donor gametes (sperm or eggs) to exist.

But the world around them has changed quite suddenly: The recent consumer DNA testing boom has made it more complicated than ever for families built through reproductive technology—openly, not secretively—to maintain the strong sense of autonomy and privacy that can be crucial for their emotional security. Prospective parents and cryobanks are now mulling how best to bring a new generation of donor-conceived people into this world in a way that leaves open the choice to know more about their ancestry without obliterating an equally important choice: the right not to know about biological relatives.

For queer parents who have long fought for social acceptance, having a biological relationship to their children has been revolutionary, and using an unknown donor as a means to this end especially so. Getting help from a friend often comes with the expectation that the friend will also be socially involved in the family, which some people are comfortable with. But being able to access sperm from an unknown donor, which queer parents have only been able to do openly since the early 1980s, grants them the reproductive autonomy to create families seemingly on their own. That recently gained sovereignty stands to be lost if a consumer DNA test brings a stranger's identity out of the woodwork.

At the same time, it's natural for donor-conceived people to want to know more about where they come from ethnically, even if they don't want to know the identity of their donor. As a donor-conceived person myself, I know my donor's self-reported ethnicity, but have often wondered how accurate it is.

Opening the Pandora's box of a consumer DNA test as a way to find out has always felt profoundly unappealing to me, however. Many people have accidentally learned they're donor-conceived by unwittingly using these tools, but I already know that about myself going in, and consequently know I'll be connected to a large web of people whose existence I'm not interested in learning about. In addition to possibly identifying my anonymous donor, a test could also turn up his family, along with any donor siblings: other people with whom I share a donor. My single lesbian mom is enough for me, and the trade-off to learn more about my ethnic ancestry has never seemed worth it.

In 1992, when I was born, no one was planning for how consumer DNA tests might upend or illuminate one's sense of self. But the donor community has always had to stay nimble with balancing privacy concerns and psychological well-being, so it should come as no surprise that figuring out how to do so in 2020 includes finding a way to offer ancestry insight while circumventing consumer DNA tests.

A New Paradigm

This is the rationale behind unprecedented industry news that LeapsMag can exclusively break: Within the next few weeks, California Cryobank, the largest cryobank in the country, will begin offering genetically verified ancestry information on the free public part of every donor's anonymous profile in its database, something no other cryobank yet offers (an exact launch date was not available at the time of publication). Currently, California Cryobank's donor profiles include a short self-reported list that might merely say, "Ancestry: German, Lebanese, Scottish."

The new information will be a report in pie chart form that details exactly what percentages of a donor's DNA come from up to 26 ethnicities—it's analogous to, but on a smaller scale than, the format offered by consumer DNA testing companies, and uses the same base technology that looks for single nucleotide polymorphisms in DNA that are associated with specific ethnicities. But crucially, because the donor takes the DNA test through California Cryobank, not a consumer-facing service, the information is not connected in a network to anyone else's DNA test. It's also taken before any offspring exist so there's no chance of revealing a donor-conceived person's identity this way.

Later, when a donor-conceived person is born, grows up, and wants information about their ethnicity from the donor side, all they need is their donor's anonymous ID number to look it up. The donor-conceived person never takes a genetic test, and therefore also can't accidentally find donor siblings this way. People who want to be connected to donor siblings can use a sibling registry where other people who want to be found share donor ID numbers and look for matches (this is something that's been available for decades, and remains so).

California Cryobank will require all new donors to consent to this extra level of genetic testing, setting a new standard for what information prospective parents and donor-conceived people can expect to have. In the immediate term, this information will be most useful for prospective parents looking for donors with specific backgrounds, possibly ones similar to their own.

It's a solution that was actually hiding in plain sight. Two years ago, California Cryobank's partner Sema4, the company handling the genetic carrier testing that's used to screen for heritable diseases, started analyzing ethnic data in its samples. That extra information was being collected because it allows a more accurate assessment of genetic risks that run in certain populations, like Tay-Sachs disease among Ashkenazi Jews, than relying on oral family histories does. Shortly after the companies began planning to collect these extra data, Jamie Shamonki, chief medical officer of California Cryobank, realized they would be sitting on a goldmine for a different reason.

"I didn't want to use one of these genetic testing companies like Ancestry to accomplish this," says Shamonki. "The whole thing we're trying to accomplish is also privacy."

Consumer-facing DNA testing companies are not HIPAA compliant (whereas Sema4, which isn't direct-to-consumer, is HIPAA compliant), which means there are no legal privacy protections covering people who add their DNA to these databases. Although some companies, like 23andMe, allow users to opt out of being connected with genetic relatives, the language can be confusing to navigate, requires a high level of knowledge and self-advocacy on the user's part, and, as an opt-out system, is not set up to protect the user from unwanted information by default; many unwittingly walk right into such information as a result.

Additionally, because consumer-facing DNA testing companies operate outside the legal purview that applies to other health care entities, like hospitals, even a person who does opt out of being linked to genetic relatives is not protected in perpetuity from being re-identified in the future by a change in company policy. The safest option for people with privacy concerns is to stay out of these databases altogether.

For California Cryobank, the new information about donor heritage won't retroactively be added to older profiles in the system, so donor-conceived people who already exist won't benefit from the ancestry tool, but it'll be the new standard going forward. The company has about 500 available donors right now, many of whom have been in its registry for a while; about 100 of those donors, all new, will have this ancestry data on their profiles.

Shamonki says it has taken about two years to get to the point of publicly including ancestry information on a donor's profile because it takes about nine months of medical and psychological screening for a donor to go from walking through the door to being added to their registry. The company wanted to wait to launch until it could offer this information for a significant number of donors. As more new donors come online under the new protocol, the number with ancestry information on their profiles will go up.

For Parents: An Unexpected Complication

While this change will no doubt be welcome progress for LGBTQ families contemplating parenthood, it'll never be possible to put this entire new order back in the box. What are such families who already have donor-conceived children losing in today's world of widespread consumer genetic testing?

Kochlany and Colimorio's twins aren't themselves much older than the moment at-home DNA testing really started to take off. They were born in 2015, and two years later the industry saw its most significant spike. By now, more than 26 million people's DNA is in databases like 23andMe and Ancestry; as a result, it's estimated that within a year, 90 percent of Americans of European descent will be identifiable through these consumer databases, by way of genetic third cousins, even if they didn't want to be found and never took the test themselves. This was the principle behind solving the Golden State Killer cold case.

The waning of privacy through consumer DNA testing fundamentally clashes with the priorities of the cryobank industry, which has long sought to protect the privacy of donor-conceived people, even as open identification became standard. Since the 1980s, donors have been able to allow their identity to be released to any offspring who is at least 18 and wants the information. Lesbian moms pushed for this option early on so their children, who would obviously know they couldn't possibly be the biological product of both parents, would never feel cut off from the chance to know more about themselves. But importantly, the openness is not a two-way street: the donors can't ever ask for the identities of their offspring. It's the latter that consumer DNA testing really puts at stake.

"23andMe basically created the possibility that there will be donors who will have contact with their donor-conceived children, and that's not something that I think the donor community is comfortable with," says I. Glenn Cohen, director of Harvard Law School's Center for Health Law Policy, Biotechnology & Bioethics. "That's about the donor's autonomy, not the rearing parents' autonomy, or the donor-conceived child's autonomy."

Kochlany and Colimorio have an open identification donor and fully support their children reaching out to California Cryobank to get more information about him if they want to when they're 18, but having a singular name revealed isn't the same thing as having contact, nor is it the same thing as revealing a web of dozens of extended genetic relations. Their concern now is that if their kids participate in genetic testing, a stranger—someone they're careful to refer to as only "the donor" and never "dad"—will reach out to the children to begin some kind of relationship. They know other people who are contemplating giving their children DNA tests, and feel staunchly that it wouldn't be right for their family.

"With genetic testing, you have no control over who reaches out to you, and at what point in your life," Kochlany says. "[People] reaching out and trying to say, 'Hey I know who your dad is' throws a curveball. It's like, 'Wait, I never thought I had a dad.' It might put insecurities in their minds."

"We want them to have the opportunity to choose whether or not they want to reach out," Colimorio adds.

Kochlany says that when their twins are old enough to start asking questions, she and Colimorio plan to frame it like this: "The donor was kind of like a technology that helped us make you a person, and make sure that you exist," she says, role-playing a conversation with their kids. "But it's not necessarily that you're looking to this person [for] support or love, or because you're missing a piece."

It's a line in the sand that's present even for couples still far off from conceiving. When Mallory Schwartz, a film and TV producer in Los Angeles, and Lauren Pietra, a marriage and family therapy associate (and Shamonki's step-daughter), talk about getting married someday, it's a package deal with talking about how they'll approach having kids. They feel there are too many variables and choices to make around family planning as a same-sex couple these days to not have those conversations simultaneously. Consumer DNA databases are already on their minds.

"It frustrates me that the DNA databases are just totally unregulated," says Schwartz. "I hope they are by the time we do this. I think everyone deserves a right to privacy when making your family [using a sperm donor]."

On the prospect of having a donor relation pop up non-consensually for a future child, Pietra says, "I don't like it. It would be really disappointing if the child didn't want [contact], and unfortunately they're on the receiving end."

You can see how important preserving the right to keep this door closed is when you look at what's going on at The Sperm Bank of California. This pioneering cryobank was the first in the world to openly serve LGBTQ people and single women, and also the first to offer the open identification option when it opened in 1982, but not as many people are asking for their donor's identity as expected.

"We're finding a third of young people are coming forward for their donor's identity," says Alice Ruby, executive director. "We thought it would be a higher number." Viewed the other way, two-thirds of the donor-conceived people who could ethically get their donor's identity through The Sperm Bank of California are not asking the cryobank for it.

Ruby says that part of what historically made an open identification program appealing, rather than invasive or nerve-wracking, is how rigidly it's always been formatted around mutual consent, and how it protects against surprises for all parties. Those [donor-conceived people] who wanted more information were never barred from it, while those who wanted to remain in the dark could. No one group's wish eclipsed the other's. The potential breakdown of a system built around consent, expectations, and respect for privacy is why unregulated consumer DNA testing is most concerning to her as a path for connecting with genetic relatives.

For the last few decades in cryobanks around the world, the largest cohort of people seeking out donor sperm has been lesbian couples, followed by single women. For infertile heterosexual couples, the smallest client demographic, Ruby says donor sperm offers a solution to a medical problem, but in contrast, it historically "provided the ability for [lesbian] couples and single moms to have some reproductive autonomy." Yes, it was still a solution to a biological problem, but it was also a solution to a social one.

The Sperm Bank of California updated its registration forms to include language urging parents, donor-conceived people, and donors not to use consumer DNA tests, and to go through the cryobank if they, understandably, want to learn more about who they're connected to. But truthfully, there's not much else cryobanks can do to protect clients on any side of the donor transaction from surprise contact right now—especially not from relatives of the donor who may not even know someone in their family has donated sperm.

A Tricky Position

Personally, I've known I was donor-conceived from day one. It has never been a source of confusion, angst, or curiosity, and in fact has never loomed particularly large for me in any way. I see it merely as a type of reproductive technology—on par with in vitro fertilization—that enabled me to exist, and, now that I do exist, is irrelevant. Being confronted with my donor's identity or any donor siblings would make this fact of my conception bigger than I need it to be, as an adult with a full-blown identity derived from all of my other life experiences. But I still wonder about the minutiae of my ethnicity in much the same way as anyone else who wonders, and feel there's no safe way for me to find out without relinquishing some of my existential independence.

"People obviously want to participate in 23andMe and Ancestry because they're interested in knowing more about themselves," says Shamonki. "I wouldn't want to create a world where people who are donor-conceived feel like they can't participate in this technology because they're trying to shut out [other] information."

After all, it was the allure of that exact conceit—knowing more about oneself—that seemed to magnetically draw in millions of people to these tools in the first place. It's an experience that clearly taps into a population-wide psychic need, even—perhaps especially—if one's origins are a mystery.

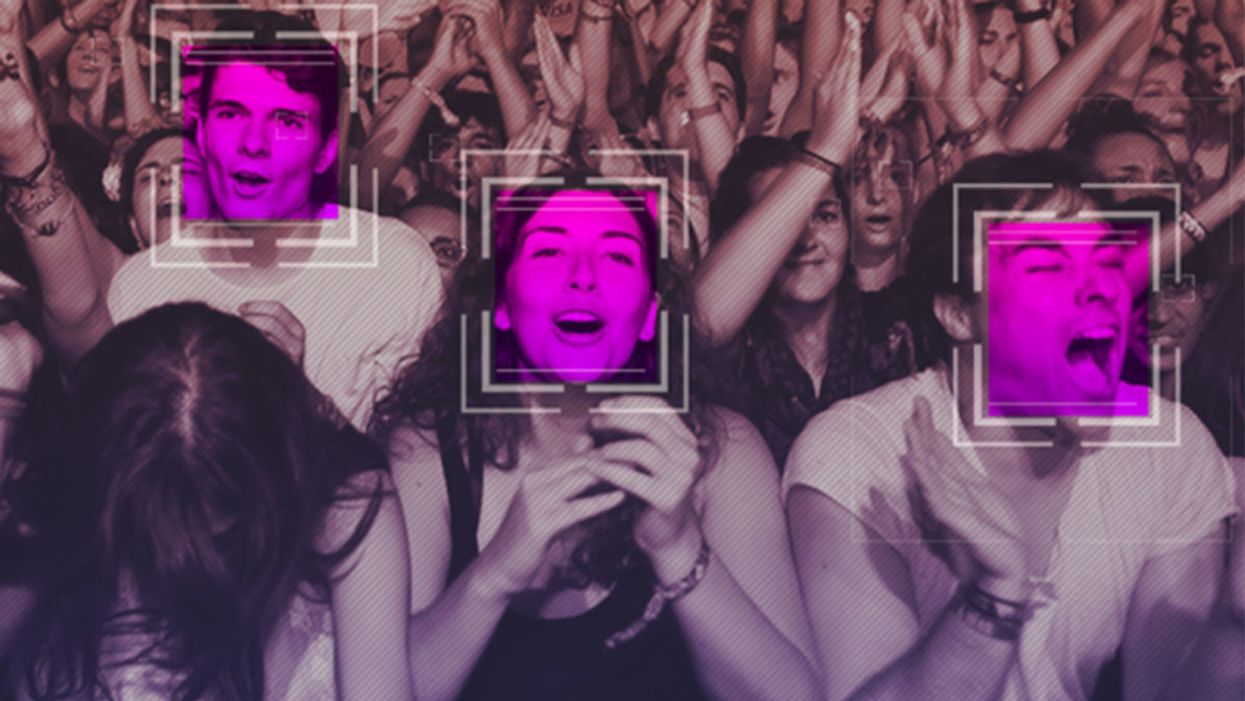

The Case for an Outright Ban on Facial Recognition Technology

Some experts worry that facial recognition technology is a dangerous enough threat to our basic rights that it should be entirely banned from police and government use.

[Editor's Note: This essay is in response to our current Big Question, which we posed to experts with different perspectives: "Do you think the use of facial recognition technology by the police or government should be banned? If so, why? If not, what limits, if any, should be placed on its use?"]

In a surprise appearance at the tail end of Amazon's much-hyped annual product event last month, CEO Jeff Bezos casually told reporters that his company is writing its own facial recognition legislation.

It seems that when you're the wealthiest human alive, there's nothing strange about your company (the largest in the world profiting from the spread of face surveillance technology) writing the rules that govern it.

But if lawmakers and advocates fall into Silicon Valley's trap of "regulating" facial recognition and other forms of invasive biometric surveillance, that's exactly what will happen.

Industry-friendly regulations won't fix the dangers inherent in widespread use of face scanning software, whether it's deployed by governments or for commercial purposes. The use of this technology in public places and for surveillance purposes should be banned outright, and its use by private companies and individuals should be severely restricted. As artificial intelligence expert Luke Stark wrote, it's dangerous enough that it should be outlawed for "almost all practical purposes."

Like biological or nuclear weapons, facial recognition poses such a profound threat to the future of humanity and our basic rights that any potential benefits are far outweighed by the inevitable harms.

We live in cities and towns with an exponentially growing number of always-on cameras, installed in everything from cars to children's toys to Amazon's police-friendly doorbells. The use of computer algorithms to analyze massive databases of footage and photographs could render human privacy extinct. It's a world where nearly everything we do, everywhere we go, everyone we associate with, and everything we buy — or look at and even think of buying — is recorded and can be tracked and analyzed at a mass scale for unimaginably awful purposes.

Biometric tracking enables the automated and pervasive monitoring of an entire population. There's ample evidence that this type of dragnet mass data collection and analysis is not useful for public safety, but it's perfect for oppression and social control.

Law enforcement defenders of facial recognition often state that the technology simply lets them do what they would be doing anyway: compare footage or photos against mug shots, driver's licenses, or other databases, but faster. And they're not wrong. But the speed and automation enabled by artificial intelligence-powered surveillance fundamentally change the impact of that surveillance on our society. Being able to do something exponentially faster, and with significantly fewer human and financial resources, alters the nature of that thing. The Fourth Amendment becomes meaningless in a world where private companies record everything we do and provide governments with easy tools to request and analyze footage from a growing, privately owned panopticon.

Tech giants like Microsoft and Amazon insist that facial recognition will be a lucrative boon for humanity, as long as there are proper safeguards in place. This disingenuous call for regulation is straight out of the same lobbying playbook that telecom companies have used to attack net neutrality and Silicon Valley has used to scuttle meaningful data privacy legislation. Companies are calling for regulation because they want their corporate lawyers and lobbyists to help write the rules of the road, to ensure those rules are friendly to their business models. They're trying to skip the debate about what role, if any, technology this uniquely dangerous should play in a free and open society. They want to rush ahead to the discussion about how we roll it out.

Facial recognition is spreading very quickly. But backlash is growing too. Several cities have already banned government entities, including police and schools, from using biometric surveillance. Others have local ordinances in the works, and there's state legislation brewing in Michigan, Massachusetts, Utah, and California. Meanwhile, there is growing bipartisan agreement in the U.S. Congress to rein in government use of facial recognition. We've also seen significant backlash to facial recognition growing in the U.K., within the European Parliament, and in Sweden, which recently banned its use in schools following a fine under the General Data Protection Regulation (GDPR).

At least two frontrunners in the 2020 presidential campaign have backed a ban on law enforcement use of facial recognition. Many of the largest music festivals in the world responded to Fight for the Future's campaign and committed to not use facial recognition technology on music fans.

There has been widespread reporting on the fact that existing facial recognition algorithms exhibit systemic racial and gender bias, and are more likely to misidentify people with darker skin, or who are not perceived by a computer to be a white man. Critics are right to highlight this algorithmic bias. Facial recognition is being used by law enforcement in cities like Detroit right now, and the racial bias baked into that software is doing harm. It's exacerbating existing forms of racial profiling and discrimination in everything from public housing to the criminal justice system.

But the companies that make facial recognition assure us this bias is a bug, not a feature, and that they can fix it. And they might be right. Face scanning algorithms for many purposes will improve over time. But facial recognition becoming more accurate doesn't make it less of a threat to human rights. This technology is dangerous when it's broken, but at a mass scale, it's even more dangerous when it works. And it will still disproportionately harm our society's most vulnerable members.

We need spaces that are free from government and societal intrusion in order to advance as a civilization. If technology makes it so that laws can be enforced 100 percent of the time, there is no room to test whether those laws are just. If the U.S. government had ubiquitous facial recognition surveillance 50 years ago when homosexuality was still criminalized, would the LGBTQ rights movement ever have formed? In a world where private spaces don't exist, would people have felt safe enough to leave the closet and gather, build community, and form a movement? Freedom from surveillance is necessary for deviation from social norms as well as to dissent from authority, without which societal progress halts.

Persistent monitoring and policing of our behavior breeds conformity, benefits tyrants, and enriches elites. Drawing a line in the sand around tech-enhanced surveillance is the fundamental fight of this generation. Lining up to get our faces scanned to participate in society doesn't just threaten our privacy, it threatens our humanity, and our ability to be ourselves.

[Editor's Note: Read the opposite perspective here.]

Scientists Are Building an “AccuWeather” for Germs to Predict Your Risk of Getting the Flu

A future app may help you avoid getting the flu by informing you of your local risk on a given day.

Applied mathematician Sara del Valle works at the U.S.'s foremost nuclear weapons lab: Los Alamos. Once colloquially called Atomic City, it's a hidden place 45 minutes into the mountains northwest of Santa Fe. Here, engineers developed the first atomic bomb.

Today, Los Alamos is still a small science town, though no longer a secret, nor in the business of building new bombs. Instead, it's tasked with, among other things, keeping the stockpile of nuclear weapons safe and stable: not exploding when they're not supposed to (yes, please) and exploding if someone presses that red button (please, no).

Del Valle, though, doesn't work on any of that. Los Alamos is also interested in other kinds of booms—like the explosion of a contagious disease that could take down a city. Predicting (and, ideally, preventing) such epidemics is del Valle's passion. She hopes to develop an app that's like AccuWeather for germs: It would tell you your chance of getting the flu, or dengue or Zika, in your city on a given day. And like AccuWeather, it could help people alter their behavior to live better lives, whether that means staying home on a snowy morning or washing their hands on a sickness-heavy commute.

Sara del Valle of Los Alamos is working to predict and prevent epidemics using data and machine learning.

Since the beginning of del Valle's career, she's been driven by one thing: using data and predictions to help people behave practically around pathogens. As a kid, she'd always been good at math, but when she found out she could use it to capture the tentacular spread of disease, and not just manipulate abstractions, she was hooked.

When she made her way to Los Alamos, she started looking at what people were doing during outbreaks. Using data from Twitter, Google searches, and Wikipedia, she and her team started to sift for trends. Were people talking about hygiene, like hand-washing? Or about being sick? Were they Googling information about mosquitoes? Searching Wikipedia for symptoms? And how did those things correlate with the spread of disease?

It was a new, faster way to think about how pathogens propagate in the real world. Usually, there's a 10- to 14-day lag in the U.S. between when doctors tap numbers into spreadsheets and when that information becomes public. By then, the world has moved on, and so has the disease—to other villages, other victims.

"We found there was a correlation between actual flu incidents in a community and the number of searches online and the number of tweets online," says del Valle. That was when she first let herself dream about a real-time forecast, not a 10-days-later backcast. Del Valle's group—computer scientists, mathematicians, statisticians, economists, public health professionals, epidemiologists, satellite analysis experts—has continued to work on the problem ever since their first Twitter parsing, in 2011.

They've had their share of outbreaks to track. Looking back at the 2009 swine flu pandemic, they saw people buying face masks and paying attention to the cleanliness of their hands. "People were talking about whether or not they needed to cancel their vacation," she says, and also whether pork products—which have nothing to do with swine flu—were safe to buy.

They watched internet conversations during the measles outbreak in California. "There's a lot of online discussion about anti-vax sentiment, and people trying to convince people to vaccinate children and vice versa," she says.

Today, they work on predicting the spread of Zika, Chikungunya, and dengue fever, as well as the plain old flu. And according to the CDC, that latter effort is going well.

Since 2015, the CDC has run the Epidemic Prediction Initiative, a competition in which teams like del Valle's submit weekly predictions of how severe the flu will be in particular locations, and occasionally of other ailments. Michael Johansson is co-founder and leader of the program, which began with the Dengue Forecasting Project. Its goal, he says, was to predict when dengue cases would blow up in an area that previously had just a low-level baseline of sick people. "You'll get this massive epidemic where all of a sudden, instead of 3,000 to 4,000 cases, you have 20,000 cases," he says. "They kind of come out of nowhere."

But the "kind of" is key: The outbreaks surely come out of somewhere and, if scientists applied research and data the right way, they could forecast the upswing and perhaps dodge a bomb before it hit big-time. Questions about how big, when, and where are also key to the flu.

A big part of these projects is the CDC giving the right researchers access to the right information, and the structure to both forecast useful public-health outcomes and to compare how well the models are doing. The extra information has been great for the Los Alamos effort. "We don't have to call departments and beg for data," says del Valle.

When data isn't available, "proxies"—things like symptom searches, tweets about empty offices, satellite images showing a green, wet, mosquito-friendly landscape—are helpful: You don't have to rely on anyone's health department.

At the latest meeting with all the prediction groups, del Valle's flu models took first and second place. But del Valle wants more than weekly numbers on a government website; she wants that weather-app-inspired fortune-teller, incorporating the many diseases you could get today, standing right where you are. "That's our dream," she says.

This plot shows the correlations between an online data stream, from Wikipedia, and various infectious diseases in different countries. The results of del Valle's predictive models are shown in brown, while the actual number of cases or illness rates are shown in blue.

(Courtesy del Valle)

The goal isn't to turn you into a germophobic agoraphobe. It's to make you more aware when you do go out. "If you know it's going to rain today, you're more likely to bring an umbrella," del Valle says. "When you go on vacation, you always look at the weather and make sure you bring the appropriate clothing. If you do the same thing for diseases, you think, 'There's Zika spreading in Sao Paulo, so maybe I should bring even more mosquito repellent and bring more long sleeves and pants.'"

They're not there yet (don't hold your breath, but do stop touching your mouth). She estimates it's at least a decade away, but advances in machine learning could accelerate that hypothetical timeline. "We're doing baby steps," says del Valle, starting with the flu in the U.S., dengue in Brazil, and other efforts in Colombia, Ecuador, and Canada. "Going from there to forecasting all diseases around the globe is a long way," she says.

But even AccuWeather started small: One man began predicting weather for a utility company, then helping ski resorts optimize their snowmaking. His influence snowballed, and now private forecasting apps, including AccuWeather's, populate phones across the planet. The company's progression hasn't been without controversy—privacy incursions, inaccuracy of long-term forecasts, fights with the government—but it has continued, for better and for worse.

Disease apps, perhaps spun out of a small, unlikely team at a nuclear-weapons lab, could grow and breed in a similar way. And both the controversies and public-health benefits that may someday spin out of them lie in the future, impossible to predict with certainty.