Scientists Want to Make Robots with Genomes that Help Grow their Minds

Lina Zeldovich has written about science, medicine and technology for Popular Science, Smithsonian, National Geographic, Scientific American, Reader’s Digest, the New York Times and other major national and international publications. A Columbia J-School alumna, she has won several awards for her stories, including the ASJA Crisis Coverage Award for Covid reporting, and has been a contributing editor at Nautilus Magazine. In 2021, Zeldovich released her first book, The Other Dark Matter, published by the University of Chicago Press, about the science and business of turning waste into wealth and health. You can find her on http://linazeldovich.com/ and @linazeldovich.

Giving robots self-awareness as they move through space - and maybe even providing them with gene-like methods for storing rules of behavior - could be important steps toward creating more intelligent machines.

One day in the recent past, scientists at Columbia University’s Creative Machines Lab set up a robotic arm inside a circle of five streaming video cameras and let the robot watch itself move, turn and twist. For about three hours the robot did exactly that: it looked at itself this way and that, like a toddler exploring itself in a room full of mirrors. By the time the robot stopped, its internal neural network had finished learning the relationship between the robot’s motor actions and the volume it occupied in its environment. In other words, the robot had built a spatial self-awareness, just like humans do. “We trained its deep neural network to understand how it moved in space,” says Boyuan Chen, one of the scientists who worked on it.

For decades robots have been doing helpful tasks that are too hard, too dangerous, or physically impossible for humans to carry out themselves. Robots are ultimately superior to humans in complex calculations, following rules to a tee and repeating the same steps perfectly. But even the biggest successes for human-robot collaborations—those in manufacturing and automotive industries—still require separating the two for safety reasons. Hardwired for a limited set of tasks, industrial robots don't have the intelligence to know where their robo-parts are in space, how fast they’re moving and when they can endanger a human.

Over the past decade or so, humans have begun to expect more from robots. Engineers have been building smarter versions that can avoid obstacles, follow voice commands, respond to human speech and make simple decisions. Some of them proved invaluable in many natural and man-made disasters like earthquakes, forest fires, nuclear accidents and chemical spills. These disaster recovery robots helped clean up dangerous chemicals, looked for survivors in crumbled buildings, and ventured into radioactive areas to assess damage.

Now roboticists are going a step further, training their creations to do even better: understand their own image in space and interact with humans like humans do. Today, there are already robot-teachers like KeeKo, robot-pets like Moffin, robot-babysitters like iPal, and robotic companions for the elderly like Pepper.

But even these reasonably intelligent creations still have huge limitations, some scientists think. “There are niche applications for the current generations of robots,” says professor Anthony Zador at Cold Spring Harbor Laboratory—but they are not “generalists” who can do varied tasks all on their own, as they mostly lack the abilities to improvise, make decisions based on a multitude of facts or emotions, and adjust to rapidly changing circumstances. “We don’t have general purpose robots that can interact with the world. We’re ages away from that.”

Robotic spatial self-awareness, the achievement of the team at Columbia, is an important step toward creating more intelligent machines. Hod Lipson, a professor of mechanical engineering who runs the Columbia lab, says that future robots will need this ability to assist humans better. Knowing how you look and where in space your parts are decreases the need for human oversight. It also helps the robot detect and compensate for damage and keep up with its own wear and tear. And it allows robots to realize when something is wrong with them or their parts. “We want our robots to learn and continue to grow their minds and bodies on their own,” Chen says. That’s what Zador wants too, and on a much grander level. “I want a robot who can drive my car, take my dog for a walk and have a conversation with me.”

Columbia scientists have trained a robot to become aware of its own "body," so it can map the right path to touch a ball without running into an obstacle, in this case a square.

Jane Nisselson and Yinuo Qin/ Columbia Engineering

Today’s technological advances are making some of these leaps of progress possible. One of them is deep learning, a method that trains artificial intelligence systems to learn and use information in a way similar to how humans do. Described as a machine learning method based on neural network architectures with multiple layers of processing units, deep learning has been used to successfully teach machines to recognize images, understand speech and even write text.

One of these machine learning language whizzes, BERT, trained by Google, can finish sentences. Another, called GPT-3 and designed by the San Francisco-based company OpenAI, can write little stories. Yet both still make funny mistakes in their linguistic exercises that even a child wouldn’t. According to a paper published by Stanford’s Center for Research on Foundation Models, BERT seems not to understand the word “not.” When asked to fill in the word after “A robin is a __” it correctly answers “bird.” But try inserting the word “not” into that sentence (“A robin is not a __”) and BERT still completes it the same way. Similarly, in one of its stories, GPT-3 wrote that if you mix a spoonful of grape juice into your cranberry juice and drink the concoction, you die. It seems that robots, and artificial intelligence systems in general, are still missing some rudimentary facts of life that humans and animals grasp naturally and effortlessly.

It's not exactly the robots’ fault. Compared to humans, and all other organisms that have been around for thousands or millions of years, robots are very new. They are missing out on eons of evolutionary data-building. Animals and humans are born with the ability to do certain things because those abilities are pre-wired in them. Flies know how to fly, fish know how to swim, cats know how to meow, and babies know how to cry. Yet flies don’t really learn to fly, fish don’t learn to swim, cats don’t learn to meow, and babies don’t learn to cry; they are born able to execute such behaviors because they’re preprogrammed to do so. All that happens thanks to the millions of years of evolution wired into their respective genomes, which give rise to the brain’s neural networks responsible for these behaviors. Robots are the newbies, missing out on that trove of information, Zador argues.

A neuroscience professor who studies how brain circuitry generates various behaviors, Zador has a different approach to developing the robotic mind. Until their creators figure out a way to imbue the bots with that information, robots will remain quite limited in their abilities. Each model will only be able to do certain things it was programmed to do, but it will never go above and beyond its original code. So Zador argues that we have to start giving robots a genome.

How does one do that? Zador has an idea. We can’t really equip machines with real biological nucleotide-based genes, but we can mimic the neuronal blueprint those genes create. Genomes lay out rules for brain development. Specifically, the genome encodes blueprints for wiring up our nervous system—the details of which neurons are connected, the strength of those connections and other specs that will later hold the information learned throughout life. “Our genomes serve as blueprints for building our nervous system and these blueprints give rise to a human brain, which contains about 100 billion neurons,” Zador says.

If you think about what a genome is, he explains, it is essentially a very compact and compressed form of information storage. Conceptually, genomes are similar to CliffsNotes and other study guides. When students read these short summaries, they learn what happened in a book without actually reading that book. And that’s how we should be designing the next generation of robots if we ever want them to act like humans, Zador says. “We should give them a set of behavioral CliffsNotes, which they can then unwrap into brain-like structures.” Robots that have such brain-like structures will acquire a set of basic rules to generate basic behaviors and use them to learn more complex ones.
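To make the compression idea concrete, here is a toy sketch in Python. It is not Zador's algorithm: the `expand_genome` function, the two "genome" values and the wiring rule (connect each neuron to its near neighbors, more weakly with distance) are all assumptions invented for illustration. The point is only that a handful of numbers can unwrap into a much larger wiring diagram.

```python
def expand_genome(genome, n_neurons):
    """Unpack a compact 'genome' into a full wiring matrix.

    `genome` is a hypothetical dict with two numbers. The rule used here
    (link neurons that sit close together, weaker with distance) is an
    illustrative assumption, not a rule from any published model.
    """
    reach = genome["reach"]        # how far a neuron's connections extend
    strength = genome["strength"]  # weight of a connection at distance 1
    weights = [[0.0] * n_neurons for _ in range(n_neurons)]
    for i in range(n_neurons):
        for j in range(n_neurons):
            distance = abs(i - j)
            if 0 < distance <= reach:
                # Closer neurons get stronger connections.
                weights[i][j] = strength / distance
    return weights


genome = {"reach": 3, "strength": 0.5}
wiring = expand_genome(genome, n_neurons=100)
n_connections = sum(1 for row in wiring for w in row if w != 0.0)
print(len(genome), "genome values unpack into", n_connections, "connections")
```

In this toy, two stored values specify several hundred connections, which is the sense in which a blueprint can be far smaller than the structure it builds.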

Currently Zador is in the process of developing algorithms that function like simple rules that generate such behaviors. “My algorithms would write these CliffsNotes, outlining how to solve a particular problem,” he explains. “And then, the neural networks will use these CliffsNotes to figure out which ones are useful and use them in their behaviors.” That’s how all living beings operate. They use the pre-programmed info from their genetics to adapt to their changing environments and learn what’s necessary to survive and thrive in these settings.

For example, a robot’s neural network could draw from CliffsNotes with “genetic” instructions for how to be aware of its own body or learn to adjust its movements. And other, different sets of CliffsNotes may imbue it with the basics of physical safety or the fundamentals of speech.

At the moment, Zador is working on algorithms that try to mimic neuronal blueprints for very simple organisms, such as the roundworm C. elegans, which has only 302 neurons and about 7,000 synapses, compared to the billions of neurons we have. That’s how evolution worked, too, expanding brains from simple creatures to more complex ones and eventually to Homo sapiens. But if it took millions of years to arrive at modern humans, how long would it take scientists to forge a robot with human intelligence? That’s a billion-dollar question. Yet Zador is optimistic. “My hypothesis is that if you can build simple organisms that can interact with the world, then the higher level functions will not be nearly as challenging as they currently are.”

DNA- and RNA-based electronic implants may revolutionize healthcare

The test tubes contain tiny DNA/enzyme-based circuits, which comprise TRUMPET, a new type of electronic device, smaller than a cell.

Implantable electronic devices can significantly improve patients’ quality of life. A pacemaker can encourage the heart to beat more regularly. A neural implant, usually placed at the back of the skull, can help brain function and encourage higher neural activity. Current research on neural implants finds them helpful to patients with Parkinson’s disease, vision loss, hearing loss, and other nerve damage problems. Several of these implants have already been approved by the FDA for human use, and others, such as Elon Musk’s Neuralink, have been cleared for human trials.

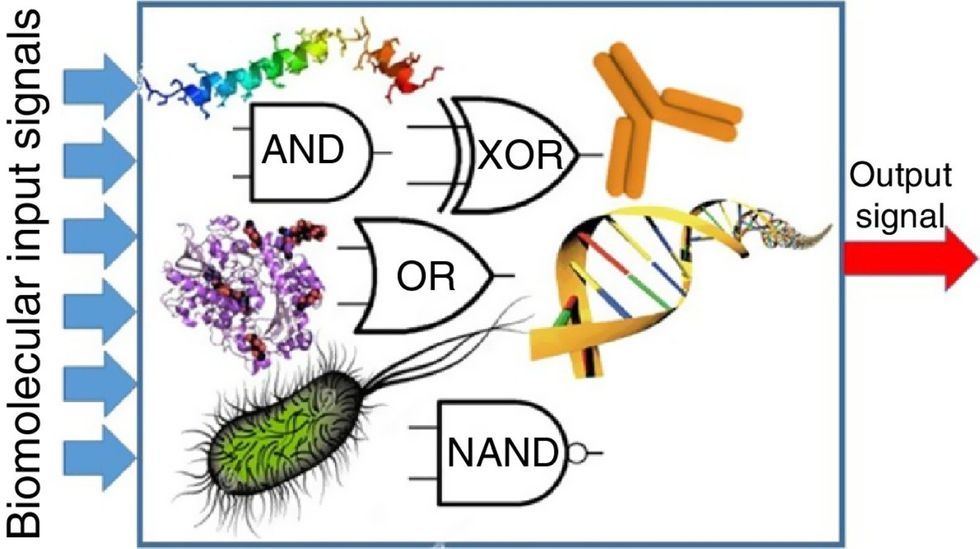

Yet pacemakers, neural implants, and other such electronic devices are not without problems. They require a constant supply of electricity, which is limited by batteries that need replacing. They also cause scarring. “The problem with doing this with electronics is that scar tissue forms,” explains Kate Adamala, an assistant professor of cell biology at the University of Minnesota Twin Cities. “Anytime you have something hard interacting with something soft [like muscle, skin, or tissue], the soft thing will scar. That's why there are no long-term neural implants right now.” To overcome these challenges, scientists are turning to biocomputing processes that use organic materials like DNA and RNA. Other promised benefits include “diagnostics and possibly therapeutic action, operating as nanorobots in living organisms,” writes Evgeny Katz, a professor of bioelectronics at Clarkson University, in his book DNA- And RNA-Based Computing Systems.

Adamala’s research focuses on developing such biocomputing systems using DNA, RNA, proteins, and lipids. Building the systems from these molecules makes them biocompatible with the human body, so they can work with its natural healing processes rather than against them. In a recent Nature Communications study, Adamala and her team created a new biocomputing platform called TRUMPET (Transcriptional RNA Universal Multi-Purpose GatE PlaTform), which acts like a DNA-powered computer chip. “These biological systems can heal if you design them correctly,” adds Adamala. “So you can imagine a computer that will eventually heal itself.”

The basics of biocomputing

Biocomputing and regular computing have many similarities. Like regular computing, biocomputing works by running information through a series of gates, usually logic gates. A logic gate works as a fork in the road for an electronic circuit. The input will travel one way or another, giving two different outputs. An example logic gate is the AND gate, which has two inputs (A and B) and two possible results. If both A and B are 1, the AND gate output will be 1. If A is 1 and B is 0, or vice versa, the output will be 0. If both A and B are 0, the result will be 0. While a computer takes these inputs in binary code or "bits," such as a 0 or 1, biocomputing uses DNA strands as inputs, whether double or single-stranded, and often uses fluorescent RNA as an output.
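For readers who want to see the AND gate spelled out, here is a minimal Python sketch. The function name `and_gate` is just an illustrative choice; the loop prints the gate's full truth table for inputs A and B.

```python
def and_gate(a: int, b: int) -> int:
    """Return 1 only when both inputs are 1, otherwise 0."""
    return 1 if a == 1 and b == 1 else 0


# Walk through every combination of the two binary inputs.
for a in (0, 1):
    for b in (0, 1):
        print(f"A={a} B={b} -> output {and_gate(a, b)}")
```

Only the last row, where both A and B are 1, produces an output of 1.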

If the DNA is double-stranded, the system “digests” the DNA or destroys it, which results in non-fluorescence or a “0” output. Conversely, if the DNA is single-stranded, it won’t be digested and instead will be copied by several enzymes in the biocomputing system, resulting in fluorescent RNA or a “1” output. And the output for this type of binary system can be expanded beyond fluorescence or no fluorescence. For example, a “1” output might be the production of the hormone insulin, while a “0” may be that no insulin is produced. “This kind of synergy between biology and computation is the essence of biocomputing,” says Stephanie Forrest, a professor and the director of the Biodesign Center for Biocomputing, Security and Society at Arizona State University.

Biocomputing circuits are made of DNA, RNA, proteins and even bacteria.

Evgeny Katz

The TRUMPET’s promise

Depending on whether the biocomputing system is placed directly inside a cell within the human body or run in a test tube, different environmental factors play a role. When an output is produced inside a cell, the cell's natural processes can amplify this output (for example, a specific protein or DNA strand), creating a solid signal. However, these cells can also be very leaky. “You want the cells to do the thing you ask them to do before they finish whatever their business is, which is to grow, replicate, metabolize,” Adamala explains. “However, often the gate may be triggered without the right inputs, creating a false positive signal. So that's why natural logic gates are often leaky.” While biocomputing outside a cell in a test tube can allow for tighter control over the logic gates, the outputs or signals cannot be amplified by a cell and are less potent.

TRUMPET, which is smaller than a cell, taps into both cellular and non-cellular biocomputing benefits. “At its core, it is a nonliving logic gate system,” Adamala states. “It's a DNA-based logic gate system. But because we use enzymes, and the readout is enzymatic [where an enzyme replicates the fluorescent RNA], we end up with signal amplification.” This readout means that the output from the TRUMPET system, a fluorescent RNA strand, can be replicated by nearby enzymes in the platform, making the light signal stronger. “So it combines the best of both worlds,” Adamala adds.

The TRUMPET biocomputing process is relatively straightforward. “If the DNA [input] shows up as single-stranded, it will not be digested [by the logic gate], and you get this nice fluorescent output as the RNA is made from the single-stranded DNA, and that's a 1,” Adamala explains. “And if the DNA input is double-stranded, it gets digested by the enzymes in the logic gate, and there is no RNA created from the DNA, so there is no fluorescence, and the output is 0.” In the story's lead image above, a tube that is “lit” with a purple color represents a binary 1 signal for computing. A tube that is “off” represents a 0.
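The input-to-output rule Adamala describes can be written down as a few lines of Python. This is a simplified sketch of the logic only, not a model of the chemistry; the names `Strand`, `gate_output` and `readout` are hypothetical and chosen for illustration.

```python
from enum import Enum


class Strand(Enum):
    SINGLE = "single-stranded"
    DOUBLE = "double-stranded"


def gate_output(dna: Strand) -> int:
    """Map a DNA input to a binary output, following the rule in the text.

    Double-stranded DNA is digested, so no RNA is made and the output is 0.
    Single-stranded DNA survives and is copied into fluorescent RNA, a 1.
    """
    if dna is Strand.DOUBLE:
        return 0
    return 1


def readout(bit: int) -> str:
    """Describe what the tube would look like for a given output bit."""
    return "lit (fluorescent)" if bit == 1 else "off (no fluorescence)"


for dna in Strand:
    bit = gate_output(dna)
    print(f"{dna.value} input -> output {bit}, tube is {readout(bit)}")
```

Swapping the body of `readout` for another response, such as releasing a molecule, is the same idea as expanding the gate's output beyond fluorescence.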

While still in the research stage, TRUMPET and other biocomputing systems promise significant benefits to personalized healthcare and medicine. These organic-based systems could detect cancer cells or low insulin levels inside a patient’s body. The study’s lead author, graduate student Judee Sharon, is already beginning to research TRUMPET's potential for earlier cancer diagnosis. Because the inputs for TRUMPET are single or double-stranded DNA, any mutated or cancerous DNA could theoretically be detected by the platform through the biocomputing process, which means devices like TRUMPET could help catch cancer and other diseases earlier.

Adamala sees TRUMPET not only as a detection system but also as a potential cancer drug delivery system. “Ideally, you would like the drug only to turn on when it senses the presence of a cancer cell. And that's how we use the logic gates, which work in response to inputs like cancerous DNA. Then the output can be the production of a small molecule or the release of a small molecule that can then go and kill what needs killing, in this case, a cancer cell. So we would like to develop applications that use this technology to control the logic gate response of a drug’s delivery to a cell.”

Although platforms like TRUMPET are making progress, a lot more work must be done before they can be used commercially. “The process of translating mechanisms and architecture from biology to computing and vice versa is still an art rather than a science,” says Forrest. “It requires deep computer science and biology knowledge,” she adds. “Some people have compared interdisciplinary science to fusion restaurants—not all combinations are successful, but when they are, the results are remarkable.”

Crickets are low in fat, high in protein, and can be farmed sustainably. They are also crunchy.

In today’s podcast episode, Leaps.org Deputy Editor Lina Zeldovich speaks about the health and ecological benefits of farming crickets for human consumption with Bicky Nguyen, who joins Lina from Vietnam. Bicky and her business partner Nam Dang operate an insect farm named CricketOne. Motivated by the idea of sustainable and healthy protein production, they started their unconventional endeavor a few years ago, despite numerous naysayers who didn’t believe that humans would ever consider munching on bugs.

Yet, making creepy crawlers part of our diet offers many health and planetary advantages. Food production needs to match the rise in global population, estimated to reach 10 billion by 2050. One challenge is that some of our current practices are inefficient, polluting and wasteful. According to nonprofit EarthSave.org, it takes 2,500 gallons of water, 12 pounds of grain, 35 pounds of topsoil and the energy equivalent of one gallon of gasoline to produce one pound of feedlot beef, although exact statistics vary between sources.

Meanwhile, insects are easy to grow, high in protein and low in fat. When roasted with salt, they make crunchy snacks. When chopped up, they transform into delicious pâtés, says Bicky, who invents her own cricket recipes and serves them at industry and public events. Maybe that’s why some research predicts that the edible insect market may grow to almost $10 billion by 2030. Tune in for a delectable chat on this alternative and sustainable protein.

Further reading:

More info on Bicky Nguyen

https://yseali.fulbright.edu.vn/en/faculty/bicky-n...

The environmental footprint of beef production

https://www.earthsave.org/environment.htm

https://www.watercalculator.org/news/articles/beef-king-big-water-footprints/

https://www.frontiersin.org/articles/10.3389/fsufs.2019.00005/full

https://ourworldindata.org/carbon-footprint-food-methane

Insect farming as a source of sustainable protein

https://www.insectgourmet.com/insect-farming-growing-bugs-for-protein/

https://www.sciencedirect.com/topics/agricultural-and-biological-sciences/insect-farming

Cricket flour is taking the world by storm

https://www.cricketflours.com/

https://talk-commerce.com/blog/what-brands-use-cricket-flour-and-why/
