The Science Sleuth Holding Fraudulent Research Accountable

Kira Peikoff was the editor-in-chief of Leaps.org from 2017 to 2021. As a journalist, her work has appeared in The New York Times, Newsweek, Nautilus, Popular Mechanics, The New York Academy of Sciences, and other outlets. She is also the author of four suspense novels that explore controversial issues arising from scientific innovation: Living Proof, No Time to Die, Die Again Tomorrow, and Mother Knows Best. Peikoff holds a B.A. in Journalism from New York University and an M.S. in Bioethics from Columbia University. She lives in New Jersey with her husband and two young sons. Follow her on Twitter @KiraPeikoff.

Outside whistleblower Elisabeth Bik scrutinizes newly published scientific papers for misleading images and data.

Introduction by Mary Inman, Whistleblower Attorney

For most people, when they see the word "whistleblower," the image that leaps to mind is a lone individual bravely stepping forward to shine a light on misconduct she has witnessed first-hand. Meryl Streep as Karen Silkwood exposing safety violations observed while working the line at the Kerr-McGee plutonium plant. Matt Damon as Mark Whitacre in The Informant!, capturing on his pocket recorder clandestine meetings between his employer and its competitors to fix the price of lysine. However, a new breed of whistleblower is emerging who isn't at the scene of the crime but instead figures it out after the fact through laborious review of publicly available information and expert analysis. Elisabeth Bik belongs to this new class of whistleblower.

Using her expertise as a microbiologist and her trained eye, Bik studies publicly available scientific papers to sniff out potential irregularities in the images that suggest research fraud, later seeking retraction of the offending paper from the journal's publisher. There's no smoking gun, no first-hand account of any kind. Just countless hours spent reviewing scores of scientific papers and Bik's skills and dedication as a science fraud sleuth.

While Bik's story may not as readily lend itself to the big screen, her work is nonetheless equally heroic. By tirelessly combing scientific papers to expose research fraud, Bik is playing a vital role in holding the scientific publishing process accountable and ensuring that misleading information does not spread unchecked. This is important work in any age, but particularly so in the time of COVID, where we can ill afford the setbacks and delays of scientists building on false science. In the present climate, where science is politicized and scientific principles are under attack, strong voices like Bik's must rise above the din to ensure the scientific information we receive, and our governments act upon, is accurate. Our health and wellbeing depend on it.

Whistleblower outsiders like Bik are challenging the traditional concept of what it means to be a whistleblower. Fortunately for us, the whistleblower community is a broad church. As with most ecosystems, we all benefit from a diversity of voices, whistleblower insiders and outsiders alike. What follows is an illuminating conversation between Bik and Ivan Oransky, the co-founder of Retraction Watch, an influential blog that reports on retractions of scientific papers and related topics. (Conversation facilitated by LeapsMag Editor-in-Chief Kira Peikoff.)

Elisabeth Bik and Ivan Oransky.

(Photo credits Michel & Co Photography, San Jose, CA and Elizabeth Solaka)

Ivan

I'd like to hear your thoughts, Elisabeth, on an L.A. Times story, which was picking up a preprint about mutations and the novel coronavirus, alleging that the virus is mutating to become more infectious – even though this conclusion wasn't actually warranted.

Elisabeth

A lot of the news around it is picking up on one particular side of the story that is maybe not that much exaggerated by the scientists. I don't think this paper really showed that the mutations were causing the virus to be more virulent. Some of these viruses continuously mutate and mutate and mutate, and that doesn't necessarily make a strain more virulent. I think in many cases, a lot of people want to read something in a paper that is not actually there.

Ivan

At some level, everything that's being published now is problematic. It's being rushed; here, it wasn't even peer-reviewed. But even when papers are peer-reviewed, they're being peer-reviewed by people who often aren't really experts in that particular area.

Elisabeth

That's right.

Ivan

To me, it's all problematic. At the same time, it's all really good that it's all getting out there. I think that five or 10 years ago, or if we weren't in a pandemic, maybe that paper wouldn't have appeared at all. It would have maybe been submitted to a top-ranked journal and not have been accepted, or maybe it would have been improved during peer review and bounced down the ladder a bit to a lower-level journal.

Yet, now, because it's about coronavirus, it's in a major newspaper and, in fact, it's getting critiqued immediately.

Maybe it's too Pollyanna-ish, but I actually think that quick uploading is a good thing. The fear people have about preprint servers is based on this idea that the peer-reviewed literature is perfect. Once it is in a peer-reviewed journal, they think it must have gone through this incredible process. You're laughing because-

Elisabeth

I am laughing.

Ivan

You know it's not true.

Elisabeth

Yes, we both know that. I agree and I think in this particular situation, a pandemic that is unlike something our generation has seen before, there is a great, great need for fast dissemination of science.

If you have new findings, it is great that there is a thing called a preprint server where scientists can quickly share their results, with, of course, the caveat that it's not peer-reviewed yet.

It's unlike the traditional way of publishing papers, which can take months or years. Preprint publishing is a very fast way of spreading your results in a good way so that is what the world needs right now.

On the other hand, of course, there's the caveat that these are brand new results and a good scientist usually thinks about their results to really interpret it well. You have to look at it from all sides and I think with the rushed publication of preprint papers, there is no such thing as carefully thinking about what results might mean.

So there's this delicate balance where on one hand we want to spread results really fast as scientists, but on the other hand, we know it's incomplete, it's rushed and it's not great. This might be hard for the general audience to understand.

Ivan

I still think the benefits of that dissemination are more positive than negative.

Elisabeth

Right. But there's also so many papers that come out now on preprint servers and most of them are not that great, but there are some really good studies in there. It's hard to find those nuggets of really great papers. There's just a lot of papers that come out now.

Ivan

Well, you've made more than a habit of finding problems in papers. These are mostly, of course, until now published papers that you examined, but what is this time like for you? How is it different?

Elisabeth

It's different because in the beginning I looked at several COVID-19-related papers that came out and wrote some critiques about it. I did experience a lot of backlash because of that. So I felt I had to take a break from social media and from writing about COVID-19.

I focused a little bit more on other work because I just felt that a lot of these papers on COVID-19 became so politically divisive that if you tried to be a scientist and think critically about a paper, you were actually assigned to a particular political party or to be against other political parties. It's hard for me to be sucked into the political discussion and to the way that our society now is so completely divided into two camps that seem to be not listening to each other.

Ivan

I was curious about that because I've followed your work for a number of years, as you know, and certainly you have had critics before. I'm thinking of the case in China that you uncovered, the leading figure in the Chinese Academy who was really a powerful political figure in addition to being a scientist.

Elisabeth

So that was a case in which I found a couple of papers at first from a particular group in China, and I was just posting on a website called PubPeer, where you can post comments, concerns about papers. And in this case, these were image duplication issues, which is my specialty.

I did not realize that the group I was looking at at that moment was led by one of the highest ranked scientists in China. If I had known that, I would probably not have posted that under my full name, but under a pseudonym. Since I had already posted, some people were starting to send me direct messages on Twitter like, "OMG, the guy you're posting about now is the top scientist in China so you're going to have a lot of backlash."

Then I decided I'll just continue doing this. I found a total of around 50 papers from this group and posted all of them on PubPeer. That story quickly became a very popular story in China: number two on Sina Weibo, a social media site in China.

I was surprised it wasn't suppressed by the Chinese government, it was actually allowed by journalists that were writing about it, and I didn't experience a lot of backlash because of that.

Actually the Chinese doctor wrote me an email saying that he appreciated my feedback and that he would look into these cases. He sent a very polite email so I sent him back that I appreciated that he would look into these cases and left it there.

Ivan

There are certain subjects that I know when we write about them in Retraction Watch, they have tended in the past to really draw a lot of ire. I'm thinking anything about vaccines and autism, anything about climate change, stem cell research.

For a while that last subject has sort of died down. But now it's become a highly politically charged atmosphere. Do you feel that this pandemic has raised the profile of people such as yourself who we refer to as scientific sleuths, people who look critically and analytically at new research?

Elisabeth

Yeah, some people. But I'm also worried that some people who are great scientists and have shown a lot of critical thinking are being attacked because of that. If you just look at what happened to Dr. Fauci, I think that's a prime example. Where somebody who actually is very knowledgeable and very cautious of new science has not been widely accepted as a great leader, in our country at least. It's sad to see that. I'm just worried how long he will be at his position, to be honest.

Ivan

We noticed a big uptick in our traffic in the last few days to Retraction Watch and it turns out it was because someone we wrote about a number of years ago has really hopped on the bandwagon to try and discredit and even try to have Dr. Fauci fired.

It's one of these reminders that the way people think about scientists has, in many cases, far more to do with their own history or their own perspective going in than with any reality or anything about the science. It's pretty disturbing, but it's not a new thing. This has been happening for a while.

You can go back and read sociologists of science from 50-60 years ago and see the same thing, but I just don't think that it's in the same way that it is now, maybe in part because of social media.

Elisabeth

I've been personally very critical about several studies, but this is the first time I've experienced being attacked by trolls and having some nasty websites written about me. It is very disturbing to read.

Ivan

It is. Yet you have been a fearless and vocal critic of some very high-profile papers, like the infamous French study about hydroxychloroquine.

Elisabeth

Right, the paper that came out was immediately tweeted by the President of the United States. At first I thought it was great that our President tweeted about science! I thought that was a major breakthrough. I took a look at this paper.

It had just come out that day, I believe. The first thing I noticed is that it was accepted within 24 hours of being submitted to the journal. It was actually published in a journal where one of the authors is the editor-in-chief, which is a huge conflict of interest, but it happens.

But in this particular case, there were also a lot of flaws with the study that, I think, should have been caught during peer review. The paper was first published on a preprint server and then within 24 hours or so it was published in that journal, supposedly after peer review.

There were very few changes between the preprint version and the peer-reviewed paper. There were just a couple of extra lines, extra sentences added here and there, but it wasn't really, I think, critically looked at. Because there were a lot of things that I thought were flaws.

Just to go over a couple of them. This paper showed supposedly that people who were treated with hydroxychloroquine and azithromycin were doing much better by clearing their virus much faster than people who were not treated with these drugs.

But if you look carefully at the paper there were a couple of people who were left out of the study. So they were treated with hydroxychloroquine, but they were not shown in the end results of the paper. All six people who were treated with the drug combination were clearing the virus within six days, but there were a couple of others who were left out of the study. They also started the drug combination, but they stopped taking the drugs for several reasons: three of them were admitted to intensive care, one died, one had some side effects and one apparently walked out of the hospital.

They were left out of the study but they were actually not doing very well with the drug combination. It's not very good science if you leave out people who don't do very well with your drug combination in your study. That was one of my biggest critiques of the paper.

Ivan

What struck us about that case, in addition to what you mentioned, of course, the fact that Trump tweeted it and was talking about hydroxychloroquine, was that it seemed to be a perfect example of, "Well, it was in a peer-reviewed journal." Yeah, it was a preprint first, but, well, it's a peer-reviewed journal. And yet, as you point out, when you look at the history of the paper, it was accepted in 24 hours.

If you talk to most scientists, the actual act of a peer review, once you sit down to do it and can concentrate, a good one takes, again, these are averages, but four hours, a half a day is not unreasonable. So you had to find three people who could suddenly review this paper. As you pointed out, it was in a journal where one of the authors was editor.

Then some strange things also happened, right? The society that actually publishes the journal, they came out with a statement saying this wasn't up to our standards, which is odd. Then Elsevier came in, they're the ones who are actually contracted to publish the journal for the society. They said, basically, "Oh, we're going to look into this now too."

It just makes you wonder what happened before the paper was actually published. All the people who were supposed to have been involved in doing the peer review or checking on it are clearly very distraught about what actually happened. It's that scene from Casablanca, "I'm shocked, shocked there's gambling going on here." And then, "Your winnings, sir."

Elisabeth

Yes.

Ivan

And I don't actually blame the public, I don't blame reporters for getting a bit confused about what it all means and what they should trust. I don't think trust is a binary any more than anything else is a binary. I don't think that something that's been peer-reviewed is perfect and something that hasn't been peer reviewed, you should never bother reading it. I think everything is much more gray.

Yet we've turned things into a binary. Even if you go back before coronavirus, coffee is good for you, coffee is bad for you, red wine, chocolate, all the rest of it. A lot of that is because of this sort of binary construct of the world for journalists, frankly, for scientists that need to get their next grants. And certainly for the general public, they want answers.

On the one hand, if I had to choose what group of experts, or what field of human endeavor would I trust with finding the answer to a pandemic like this, or to any crisis, it would absolutely be scientists. Hands down. This is coming from someone who writes about scientific fraud.

But on the other hand, that means that if scientists aren't clear about what they don't know and about the nuances and about what the scientific method actually allows us to do and learn, that just sets them up for failure. It sets people like Dr. Fauci up for failure.

Elisabeth

Right.

Ivan

It sets up any public health official who has a discussion about models. There's a famous saying: "All models are wrong, but some are useful."

Just because the projections change, it's not proof of wrongness, it's not proof that the model is fatally flawed. In fact, I'd be really concerned if the projections didn't change based on new information. I would love it if this whole episode did lead to a better understanding of the scientific process and how scientific publishing fits into that — and doesn't fit into it.

Elisabeth

Yes, I'm with you. I'm very worried that the general audience's perspective is based on maybe watching too many movies where the scientist comes up with a conclusion one hour into the movie when everything is about to fail. Like that scene in Contagion where somebody injects, I think, eight monkeys, and one of the monkeys survives and boom we have the vaccine. That's not really how science works. Everything takes many, many years and many, many applications where usually your first ideas and your first hypothesis turn out to be completely wrong.

Then you go back to the drawing board, you develop another hypothesis and this is a very reiterative process that usually takes years. Most of the people who watch the movie might have a very wrong idea and wrong expectations about how science works. We're living in the movie Contagion and by September, we'll all be vaccinated and we can go on and live our lives. But that's not what is going to happen. It's going to take much, much longer and we're going to have to change the models every time and change our expectations. Just because we don't know all the numbers and all the facts yet.

Ivan

Generally it takes a fairly long time to change medical practice. A lot of times people see that as a bad thing. What I think that ignores, or at least doesn't take into as much account as I would, is that you don't want doctors and other health care professionals to turn on a dime and suddenly switch. Unless, of course, it turns out there was no evidence for what you were looking at.

It's a complicated situation.

Everybody wants scientists to be engineers, right?

Elisabeth

Right.

Ivan

I'm not saying engineering isn't scientific, nor am I saying that science is just completely whimsical, but there's a different process. It's a different way of looking at things and you can't just throw all the data into a big supercomputer, which is what I think a lot of people seem to want us to do, and then the obvious answer will come out on the other side.

Elisabeth

No. It's true and a lot of engineers suddenly feel their inherent need to solve this as a problem. They're not scientists and it's not building a bridge over a big river. But we're dealing with something that is very hard to solve because we don't understand the problem yet. I think scientists are usually first analyzing the problem and trying to understand what the problem actually is before you can even think about a solution.

Ivan

I think we're still at the understanding the problem phase.

Elisabeth

Exactly. And going back to the French group paper, that promised such a result and that was interpreted as such by a lot of people including presidents, but it's a very rare thing to find a medication that will have a 100% cure rate. That's something that I wish people would understand better. We all want that to happen, but it's very unlikely and very unprecedented in the best of times.

Ivan

I would second that and also say that the world needs to better value the work that people like Elisabeth and others are doing. Because we're not going to get to a better answer if we're not rigorous about scrutinizing the literature and scrutinizing the methodology and scrutinizing the results.

It's a relatively new phenomenon that you're able to do this at any scale at all, and even now it's at a very small scale. Elisabeth mentioned PubPeer and I'm a big fan — also full disclosure, I'm on their board of directors as a volunteer — it's a very powerful engine for readers and journal editors and other scientists to discuss issues.

And Elisabeth has used it really, really well. I think we need to start giving credit to people like that. And, also creating incentives for that kind of work in a way that science hasn't yet.

Elisabeth

Yeah. I quit my job to be able to do this work. It's really hard to combine it with a job either in academia or industry because we're looking for or criticizing papers and it's hard when you are still employed to do that.

I try to make it about the papers and do it in a polite way, but still it's a very hard job to do if you have a daytime job and a position and a career to worry about. Because if you're critical of other academics, that could actually mean the end of your career and that's sad. They should be more open to polite criticism.

Ivan

And for the general public, if you're reading a newspaper story or something online about a single study and it doesn't mention any other studies that have said the same thing or similar, or frankly, if it doesn't say anything about any studies that contradicted it, that's probably also telling you something.

Say you're looking at a huge painting of a shoreline, a beach, and a forest. Any single study is just a one-centimeter-by-one-centimeter square of any part of that canvas. If you just look at that, you would either think it was a painting of the sea, of a beach, or of the forest. It's actually all three of those things.

We just need to be patient, and that's very challenging to us as human beings, but we need to take the time to look at the whole picture.

DISCLAIMER: Neither Elisabeth Bik nor Ivan Oransky was compensated for participation in The Pandemic Issue. While the magazine's editors suggested broad topics for discussion, consistent with Bik's and Oransky's work, neither they nor the magazine's underwriters had any influence on their conversation.

[Editor's Note: This article was originally published on June 8th, 2020 as part of a standalone magazine called GOOD10: The Pandemic Issue. Produced as a partnership among LeapsMag, The Aspen Institute, and GOOD, the magazine is available for free online.]

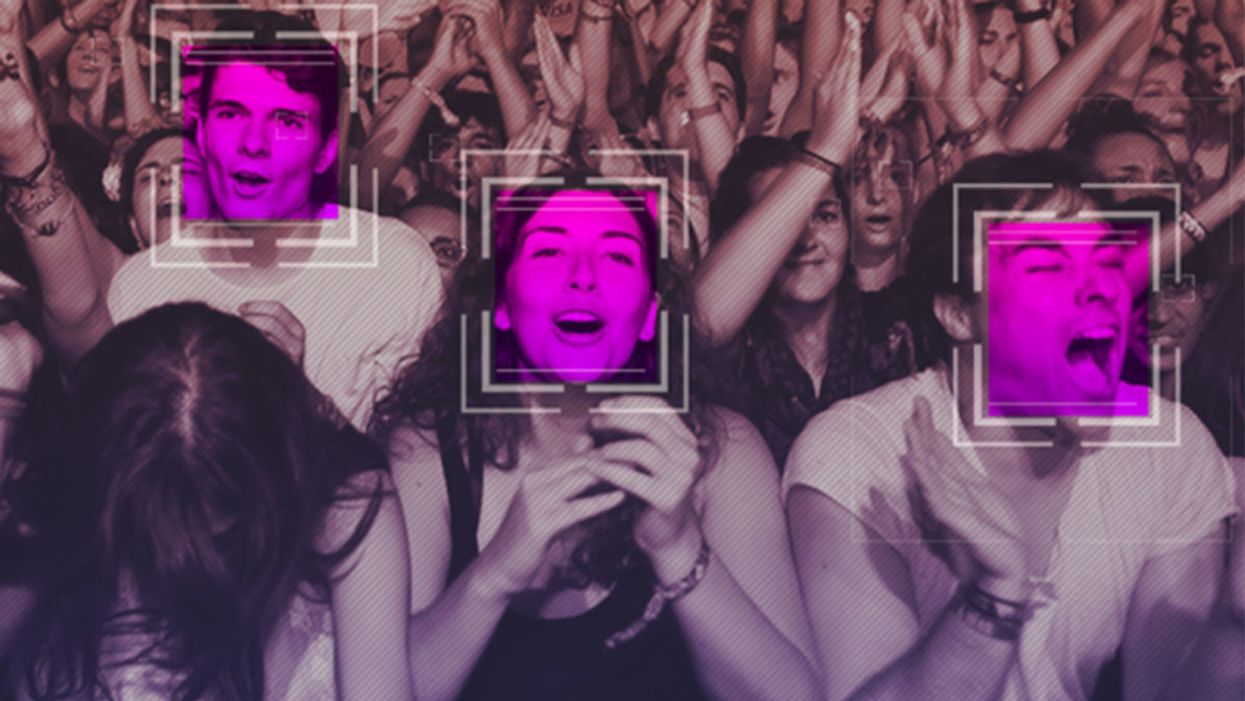

The Case for an Outright Ban on Facial Recognition Technology

Some experts worry that facial recognition technology is a dangerous enough threat to our basic rights that it should be entirely banned from police and government use.

[Editor's Note: This essay is in response to our current Big Question, which we posed to experts with different perspectives: "Do you think the use of facial recognition technology by the police or government should be banned? If so, why? If not, what limits, if any, should be placed on its use?"]

In a surprise appearance at the tail end of Amazon's much-hyped annual product event last month, CEO Jeff Bezos casually told reporters that his company is writing its own facial recognition legislation.

It seems that when you're the wealthiest human alive, there's nothing strange about your company, the largest in the world profiting from the spread of face surveillance technology, writing the rules that govern it.

But if lawmakers and advocates fall into Silicon Valley's trap of "regulating" facial recognition and other forms of invasive biometric surveillance, that's exactly what will happen.

Industry-friendly regulations won't fix the dangers inherent in widespread use of face scanning software, whether it's deployed by governments or for commercial purposes. The use of this technology in public places and for surveillance purposes should be banned outright, and its use by private companies and individuals should be severely restricted. As artificial intelligence expert Luke Stark wrote, it's dangerous enough that it should be outlawed for "almost all practical purposes."

Like biological or nuclear weapons, facial recognition poses such a profound threat to the future of humanity and our basic rights that any potential benefits are far outweighed by the inevitable harms.

We live in cities and towns with an exponentially growing number of always-on cameras, installed in everything from cars to children's toys to Amazon's police-friendly doorbells. The use of computer algorithms to analyze massive databases of footage and photographs could render human privacy extinct. It's a world where nearly everything we do, everywhere we go, everyone we associate with, and everything we buy — or look at and even think of buying — is recorded and can be tracked and analyzed at a mass scale for unimaginably awful purposes.

Biometric tracking enables the automated and pervasive monitoring of an entire population. There's ample evidence that this type of dragnet mass data collection and analysis is not useful for public safety, but it's perfect for oppression and social control.

Law enforcement defenders of facial recognition often state that the technology simply lets them do what they would be doing anyway: compare footage or photos against mug shots, driver's licenses, or other databases, but faster. And they're not wrong. But the speed and automation enabled by artificial intelligence-powered surveillance fundamentally change the impact of that surveillance on our society. Being able to do something exponentially faster, and using significantly fewer human and financial resources, alters the nature of that thing. The Fourth Amendment becomes meaningless in a world where private companies record everything we do and provide governments with easy tools to request and analyze footage from a growing, privately owned panopticon.

Tech giants like Microsoft and Amazon insist that facial recognition will be a lucrative boon for humanity, as long as there are proper safeguards in place. This disingenuous call for regulation is straight out of the same lobbying playbook that telecom companies have used to attack net neutrality and Silicon Valley has used to scuttle meaningful data privacy legislation. Companies are calling for regulation because they want their corporate lawyers and lobbyists to help write the rules of the road, to ensure those rules are friendly to their business models. They're trying to skip the debate about what role, if any, technology this uniquely dangerous should play in a free and open society. They want to rush ahead to the discussion about how we roll it out.

Facial recognition is spreading very quickly. But backlash is growing too. Several cities have already banned government entities, including police and schools, from using biometric surveillance. Others have local ordinances in the works, and there's state legislation brewing in Michigan, Massachusetts, Utah, and California. Meanwhile, there is growing bipartisan agreement in the U.S. Congress to rein in government use of facial recognition. We've also seen significant backlash to facial recognition growing in the U.K., within the European Parliament, and in Sweden, which recently banned its use in schools following a fine under the General Data Protection Regulation (GDPR).

At least two frontrunners in the 2020 presidential campaign have backed a ban on law enforcement use of facial recognition. Many of the largest music festivals in the world responded to Fight for the Future's campaign and committed to not use facial recognition technology on music fans.

There has been widespread reporting on the fact that existing facial recognition algorithms exhibit systemic racial and gender bias, and are more likely to misidentify people with darker skin, or who are not perceived by a computer to be a white man. Critics are right to highlight this algorithmic bias. Facial recognition is being used by law enforcement in cities like Detroit right now, and the racial bias baked into that software is doing harm. It's exacerbating existing forms of racial profiling and discrimination in everything from public housing to the criminal justice system.

But the companies that make facial recognition assure us this bias is a bug, not a feature, and that they can fix it. And they might be right. Face scanning algorithms for many purposes will improve over time. But facial recognition becoming more accurate doesn't make it less of a threat to human rights. This technology is dangerous when it's broken, but at a mass scale, it's even more dangerous when it works. And it will still disproportionately harm our society's most vulnerable members.

We need spaces that are free from government and societal intrusion in order to advance as a civilization. If technology makes it so that laws can be enforced 100 percent of the time, there is no room to test whether those laws are just. If the U.S. government had ubiquitous facial recognition surveillance 50 years ago when homosexuality was still criminalized, would the LGBTQ rights movement ever have formed? In a world where private spaces don't exist, would people have felt safe enough to leave the closet and gather, build community, and form a movement? Freedom from surveillance is necessary for deviation from social norms as well as to dissent from authority, without which societal progress halts.

Persistent monitoring and policing of our behavior breeds conformity, benefits tyrants, and enriches elites. Drawing a line in the sand around tech-enhanced surveillance is the fundamental fight of this generation. Lining up to get our faces scanned to participate in society doesn't just threaten our privacy, it threatens our humanity, and our ability to be ourselves.

[Editor's Note: Read the opposite perspective here.]

Scientists Are Building an “AccuWeather” for Germs to Predict Your Risk of Getting the Flu

A future app may help you avoid getting the flu by informing you of your local risk on a given day.

Applied mathematician Sara del Valle works at the U.S.'s foremost nuclear weapons lab: Los Alamos. Once colloquially called Atomic City, it's a hidden place 45 minutes into the mountains northwest of Santa Fe. Here, engineers developed the first atomic bomb.

Today, Los Alamos is still a small science town, though no longer a secret, nor in the business of building new bombs. Instead, it's tasked with, among other things, keeping the stockpile of nuclear weapons safe and stable: not exploding when they're not supposed to (yes, please) and exploding if someone presses that red button (please, no).

Del Valle, though, doesn't work on any of that. Los Alamos is also interested in other kinds of booms—like the explosion of a contagious disease that could take down a city. Predicting (and, ideally, preventing) such epidemics is del Valle's passion. She hopes to develop an app that's like AccuWeather for germs: It would tell you your chance of getting the flu, or dengue or Zika, in your city on a given day. And like AccuWeather, it could help people alter their behavior to live better lives, whether that means staying home on a snowy morning or washing their hands on a sickness-heavy commute.

Sara del Valle of Los Alamos is working to predict and prevent epidemics using data and machine learning.

Since the beginning of del Valle's career, she's been driven by one thing: using data and predictions to help people behave practically around pathogens. As a kid, she'd always been good at math, but when she found out she could use it to capture the tentacular spread of disease, and not just manipulate abstractions, she was hooked.

When she made her way to Los Alamos, she started looking at what people were doing during outbreaks. Using social media like Twitter, Google search data, and Wikipedia, the team started to sift for trends. Were people talking about hygiene, like hand-washing? Or about being sick? Were they Googling information about mosquitoes? Searching Wikipedia for symptoms? And how did those things correlate with the spread of disease?

It was a new, faster way to think about how pathogens propagate in the real world. Usually, there's a 10- to 14-day lag in the U.S. between when doctors tap numbers into spreadsheets and when that information becomes public. By then, the world has moved on, and so has the disease—to other villages, other victims.

"We found there was a correlation between actual flu incidents in a community and the number of searches online and the number of tweets online," says del Valle. That was when she first let herself dream about a real-time forecast, not a 10-days-later backcast. Del Valle's group—computer scientists, mathematicians, statisticians, economists, public health professionals, epidemiologists, satellite analysis experts—has continued to work on the problem ever since their first Twitter parsing, in 2011.

They've had their share of outbreaks to track. Looking back at the 2009 swine flu pandemic, they saw people buying face masks and paying attention to the cleanliness of their hands. "People were talking about whether or not they needed to cancel their vacation," she says, and also whether pork products—which have nothing to do with swine flu—were safe to buy.

They watched internet conversations during the measles outbreak in California. "There's a lot of online discussion about anti-vax sentiment, and people trying to convince people to vaccinate children and vice versa," she says.

Today, they work on predicting the spread of Zika, Chikungunya, and dengue fever, as well as the plain old flu. And according to the CDC, that latter effort is going well.

Since 2015, the CDC has run the Epidemic Prediction Initiative, a competition in which teams like del Valle's submit weekly predictions of how raging the flu will be in particular locations, and occasionally predictions for other ailments. Michael Johannson is co-founder and leader of the program, which began with the Dengue Forecasting Project. Its goal, he says, was to predict when dengue cases would blow up in an area that previously had just a low-level baseline of sick people. "You'll get this massive epidemic where all of a sudden, instead of 3,000 to 4,000 cases, you have 20,000 cases," he says. "They kind of come out of nowhere."

But the "kind of" is key: The outbreaks surely come out of somewhere and, if scientists applied research and data the right way, they could forecast the upswing and perhaps dodge a bomb before it hit big-time. Questions about how big, when, and where are also key to the flu.

A big part of these projects is the CDC giving the right researchers access to the right information, along with the structure both to forecast useful public-health outcomes and to compare how well the models are doing. The extra information has been great for the Los Alamos effort. "We don't have to call departments and beg for data," says del Valle.

When data isn't available, "proxies"—things like symptom searches, tweets about empty offices, satellite images showing a green, wet, mosquito-friendly landscape—are helpful: You don't have to rely on anyone's health department.

At the latest meeting with all the prediction groups, del Valle's flu models took first and second place. But del Valle wants more than weekly numbers on a government website; she wants that weather-app-inspired fortune-teller, incorporating the many diseases you could get today, standing right where you are. "That's our dream," she says.

This plot shows the correlations between the online data stream, from Wikipedia, and various infectious diseases in different countries. The results of del Valle's predictive models are shown in brown, while the actual number of cases or illness rates are shown in blue.

(Courtesy del Valle)

The goal isn't to turn you into a germophobic agoraphobe. It's to make you more aware when you do go out. "If you know it's going to rain today, you're more likely to bring an umbrella," del Valle says. "When you go on vacation, you always look at the weather and make sure you bring the appropriate clothing. If you do the same thing for diseases, you think, 'There's Zika spreading in Sao Paulo, so maybe I should bring even more mosquito repellent and bring more long sleeves and pants.'"

They're not there yet (don't hold your breath, but do stop touching your mouth). She estimates it's at least a decade away, but advances in machine learning could accelerate that hypothetical timeline. "We're doing baby steps," says del Valle, starting with the flu in the U.S., dengue in Brazil, and other efforts in Colombia, Ecuador, and Canada. "Going from there to forecasting all diseases around the globe is a long way," she says.

But even AccuWeather started small: One man began predicting weather for a utility company, then helping ski resorts optimize their snowmaking. His influence snowballed, and now private forecasting apps, including AccuWeather's, populate phones across the planet. The company's progression hasn't been without controversy—privacy incursions, inaccuracy of long-term forecasts, fights with the government—but it has continued, for better and for worse.

Disease apps, perhaps spun out of a small, unlikely team at a nuclear-weapons lab, could grow and breed in a similar way. And both the controversies and public-health benefits that may someday spin out of them lie in the future, impossible to predict with certainty.