To Make Science Engaging, We Need a Sesame Street for Adults

A new kind of television series could establish the baseline narratives for novel science like gene editing, quantum computing, or artificial intelligence.

This article is part of the magazine, "The Future of Science In America: The Election Issue," co-published by LeapsMag, the Aspen Institute Science & Society Program, and GOOD.

In the mid-1960s, a documentary producer in New York City wondered if the addictive jingles, clever visuals, slogans, and repetition of television ads—the ones that were captivating young children of the time—could be harnessed for good. Over the course of three months, she interviewed educators, psychologists, and artists, and the result was a bonanza of ideas.

Perhaps a new TV show could teach children letters and numbers in short animated sequences? Perhaps adults and children could read together with puppets providing comic relief and prompting interaction from the audience? And because it would be broadcast through a device already in almost every home, perhaps this show could reach across socioeconomic divides and close an early education gap?

Soon after Joan Ganz Cooney shared her landmark report, "The Potential Uses of Television in Preschool Education," in 1966, she was prototyping show ideas, attracting funding from The Carnegie Corporation, The Ford Foundation, and The Corporation for Public Broadcasting, and co-founding the Children's Television Workshop with psychologist Lloyd Morrisett. And then, on November 10, 1969, informal learning was transformed forever with the premiere of Sesame Street on public television.

For its first season, Sesame Street won three Emmy Awards and a Peabody Award. Its star, Big Bird, landed on the cover of Time Magazine, which called the show "TV's gift to children." Fifty years later, it's hard to imagine an approach to informal preschool learning that isn't Sesame Street.

And that approach can be boiled down to one word: Entertainment.

Despite decades of evidence from Sesame Street (one of the most studied television shows of all time) and more research from social science, psychology, and media communications, we haven't yet taken Ganz Cooney's concepts to heart in educating adults. Adults have news programs and documentaries and educational YouTube channels, but no Sesame Street. So why not make one? Here's how we can design a new kind of television to make science engaging and accessible for a public that is all too often intimidated by it.

We have to start from the realization that America is a nation of high-school graduates. By the end of high school, many students have decided to abandon science because they think it's too difficult, and as a nation, we've made it acceptable for any one of us to say "I'm not good at science" and offload thinking to the ones who might be. So, is it surprising that so many Americans believe in conspiracy theories? Some 25% believe the release of COVID-19 was planned, one in ten believe the Moon landing was a hoax, and 30–40% think the condensation trails of planes are actually nefarious chemtrails. If we're meeting people where they are, the aim can't be to get the audience from an A to an A+, but from an F to a D, and without judgment of where they are starting from.

There's also a natural compulsion for a well-meaning educator to fill a literacy gap with a barrage of information, but this is what I call "factsplaining," and we know it doesn't work. And worse, it can backfire. In one study from 2014, parents were provided with factual information about vaccine safety, and it was the group that was already the most averse to vaccines that uniquely became even more averse.

Why? Our social identities and cognitive biases are stubborn gatekeepers when it comes to processing new information. We filter ideas through pre-existing beliefs—our values, our religions, our political ideologies. Incongruent ideas are rejected. Congruent ideas, no matter how absurd, are allowed through. We hear what we want to hear, and then our brains justify the input by creating narratives that preserve our identities. Even when we have all the facts, we can use them to support any worldview.

But social science has revealed many mechanisms for hijacking these processes through narrative storytelling, and this can form the foundation of a new kind of educational television.

As media creators, we can reject factsplaining and instead construct entertaining narratives that disrupt cognitive processes. Two-decade-old research tells us when people are immersed in entertaining fiction narratives, they loosen their defenses, opening a path for new information, editing attitudes, and inspiring new behavior. Where news about hot-button issues like climate change or vaccination might trigger resistance or a backfire effect, fiction can be crafted to be absorbing and, as a result, persuasive.

But the narratives can't be stuffed with information. They must be simplified. If this feels like the opposite of what an educator should be doing, it is possible to reduce the complexity of information, without oversimplification, through "exemplification," a framing device to tell the stories of individuals in specific circumstances that can speak to the greater issue without needing to explain it all. It's a technique you've seen used in biopics. The Discovery Channel true-crime miniseries Manhunt: Unabomber does many things well from a science storytelling perspective, including exemplifying the virtues of the scientific method through a character who argues for a new field of science, forensic linguistics, to catch one of the most notorious domestic terrorists in U.S. history.

We must also appeal to the audience's curiosity. We know curiosity is such a strong driver of human behavior that it can even counteract the biases put up by one's political ideology around subjects like climate change. If we treat science information like a product, and we should, advertising research tells us we can maximize curiosity through a Goldilocks effect. If the information is too complex, your show might as well be a PowerPoint presentation. If it's too simple, it's Sesame Street. There's a sweet spot for creating intrigue about new information when there's a moderate cognitive gap.

The science of "identification" tells us that the more the main character is endearing to a viewer, the more likely the viewer will adopt the character's worldview and journey of change. This insight further provides incentives to craft characters reflective of our audiences. If we accept our biases for what they are, we can understand why the messenger becomes more important than the message, because, without an appropriate messenger, the message becomes faint and ineffective. And research confirms that the stereotype-busting doctor-skeptic Dana Scully of The X-Files, a popular science-fiction series, was an inspiration for a generation of women who pursued science careers.

With these directions, we can start making a new kind of television. But is television itself still the right delivery medium? Americans do spend six hours per day—a quarter of their lives—watching video. And even with the rise of social media and apps, science-themed television shows remain popular, with four out of five adults reporting that they watch shows about science at least sometimes. CBS's The Big Bang Theory was the most-watched show on television in the 2017–2018 season, and Cartoon Network's Rick & Morty is the most popular comedy series among millennials. And medical and forensic dramas continue to be broadcast staples. So yes, it's as true today as it was in the 1980s when George Gerbner, the "cultivation theory" researcher who studied the long-term impacts of television images, wrote, "a single episode on primetime television can reach more people than all science and technology promotional efforts put together."

We know from cultivation theory that media images can shape our views of scientists. Quick, picture a scientist! Was it an old, white man with wild hair in a lab coat? If most Americans don't encounter research science firsthand, it's media that dictates how we perceive science and scientists. Characters like Sheldon Cooper and Rick Sanchez become the model. But we can correct that by representing professionals more accurately on-screen and writing characters more like Dana Scully.

Could new television series establish the baseline narratives for novel science like gene editing, quantum computing, or artificial intelligence? Or could new series counter the misinfodemics surrounding COVID-19 and vaccines through more compelling, corrective narratives? Social science has given us a blueprint suggesting they could. Binge-watching a show like the surreal NBC sitcom The Good Place doesn't replace a Ph.D. in philosophy, but its use of humor plants the seed of continued interest in a new subject. The goal of persuasive entertainment isn't to replace formal education, but it can inspire, shift attitudes, increase confidence in the knowledge of complex issues, and otherwise prime viewers for continued learning.

Tech-related injuries are becoming more common as many people depend on, and often develop addictions to, smartphones and computers.

In the 1990s, a mysterious virus spread throughout the Massachusetts Institute of Technology Artificial Intelligence Lab—or that’s what the scientists who worked there thought. More of them rubbed their aching forearms and massaged their cricked necks as new computers were introduced to the AI Lab on a floor-by-floor basis. They realized their musculoskeletal issues coincided with the arrival of these new computers—some of which were mounted high up on lab benches in awkward positions—and the hours spent typing on them.

Today, these injuries have become more common in a society awash with smart devices, sleek computers, and other gadgets. And we don’t just get hurt from typing on desktop computers; we’re massaging our sore wrists after hours of texting and FaceTiming on phones, especially as they get bigger.

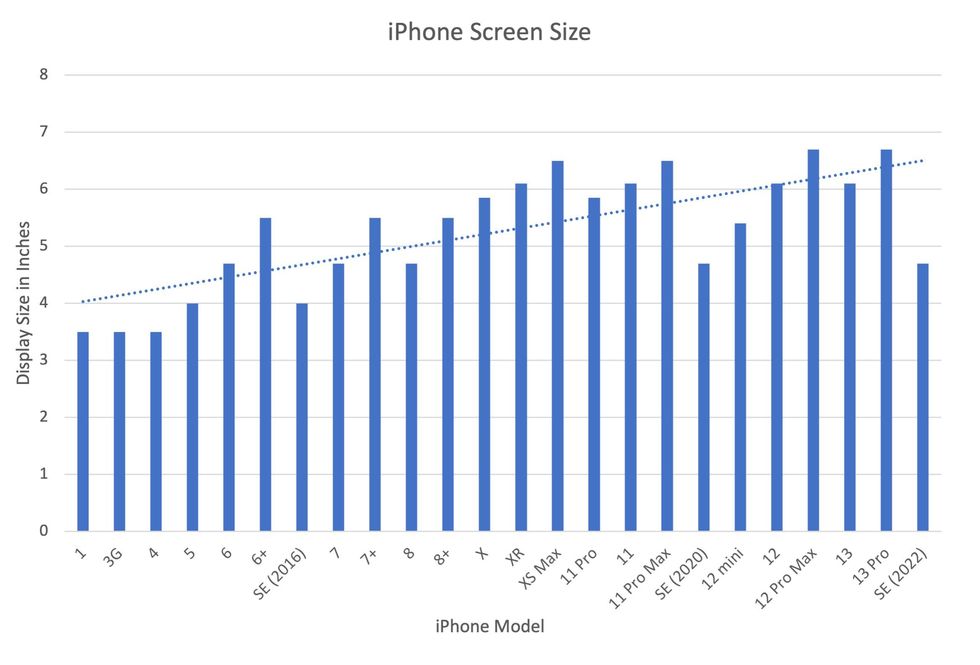

In 2007, the first iPhone measured 3.5 inches diagonally, a measurement known as the display size. That’s been nearly doubled by the newest iPhone 13 Pro Max, which has a 6.7-inch display. Other phones, too, like the Google Pixel 6 and the Samsung Galaxy S22, have bigger screens than their predecessors. Physical therapists and orthopedic surgeons have had to come up with names for a variety of new conditions: selfie elbow, tech neck, texting thumb. Orthopedic surgeon Sonya Sloan says she sees selfie elbow in younger kids and in women more often than men. She hears complaints related to technology once or twice a day.

The addictive quality of smartphones and social media means that people spend more time on their devices, which exacerbates injuries. According to Statista, 68 percent of those surveyed spent over three hours a day on their phone, and almost half spent five to six hours a day. Another report showed that people dedicate a third of their day to checking their phones, while the Media Effects Research Laboratory at Pennsylvania State University has found that bigger screens, ideal for entertainment purposes, immerse their users more than smaller screens. Oversized screens also provide easier navigation and more space for those with bigger hands or trouble seeing.

But others with conditions like arthritis can benefit from smaller phones. In March of 2016, Apple released the iPhone SE with a display size of 4 inches, 0.7 inches smaller than the iPhone 7, released that September. Apple has since come out with two more versions of the diminutive iPhone SE, one in 2020 and another in 2022.

Kavin Senapathy, a freelance science journalist, has the Google Pixel 6. She was drawn to the phone because Google marketed the Pixel 6’s camera as better at capturing different skin tones. But this phone boasts one of the largest display sizes on the market: 6.4 inches.

Senapathy was diagnosed with carpal and cubital tunnel syndromes in 2017 and fibromyalgia in 2019. She has had to create a curated ergonomic workplace setup; otherwise, her wrists and hands get weak and tingly. She’s also had to adjust how she holds her phone to prevent pain flares.

Recently, Senapathy underwent electromyography, or EMG, in which doctors insert electrodes into muscles to measure their electrical activity. The electrical response of the muscles tells doctors whether the nerve cells and muscles are successfully communicating. Depending on her results, steroid shots and even surgery might be required. Senapathy wants to stick with her Pixel 6, but the pain she’s experiencing may push her to buy a smaller phone. Unfortunately, options for these modestly sized phones are more limited.

These devices are now an inextricable part of our lives. So where does the burden of responsibility lie? Is it with consumers like Senapathy to adjust body positioning, get ergonomic workstations, and change habits to abate tech-related pain? Or should tech companies be held accountable for creating addictive devices that lead to musculoskeletal injury?

Kavin Senapathy, a freelance journalist, bought the Google Pixel 6 because of its high-quality camera, but she’s had to adjust how she holds the oversized phone to prevent pain flares.

A one-size-fits-all mentality for smartphones will continue to lead to injuries because every user has different wants and needs. S. Shyam Sundar, the founder of Penn State’s lab on media effects and a communications professor, says the needs for mobility and portability conflict with the desire for greater visibility. “The best thing a company can do is offer different sizes,” he says.

Joanna Bryson, an AI ethics expert and professor at The Hertie School of Governance in Berlin, Germany, echoed these sentiments. “A lot of the lack of choice we see comes from the fact that the markets have consolidated so much,” she says. “We want to make sure there’s sufficient diversity [of products].”

Consumers can still maintain some control despite the ubiquity of tech. Sloan, the orthopedic surgeon, has to pester her son to change his body positioning when using his tablet. The load our heads place on our necks grows as they bend forward: held upright, a head weighs about 12 pounds, but bent 60 degrees, it exerts the equivalent of 60. “I have to tell him, ‘Raise your head, son!’” she says. It’s important, Sloan explains, to consider that growth and development will affect ligaments and bones in the neck, potentially making kids even more vulnerable to injuries from misusing gadgets. She recommends that parents limit their kids’ tech time to alleviate strain. She also suggests that tech companies implement a timer to remind us to change our body positioning.

In 2017, Nan-Wei Gong, a former contractor for Google, founded Figur8, which uses wearable trackers to measure muscle function and joint movement. It’s like physical therapy with biofeedback. “Each unique injury has a different biomarker,” says Gong. “With Figur8, you are comparing yourself to yourself.” This allows an individual to self-monitor for wear and tear and strengthen an injury in a way that’s efficient and designed for their body. Gong noticed that the work-from-home model during the COVID-19 pandemic created a new set of ergonomic problems that resulted in injuries. Figur8 provides real-time data for these injuries because “behavioral change requires feedback.”

Gong worked on a project called Jacquard while at Google. Textile experts weave conductive thread into their fabric, and the result is a patch of fabric, like the cuff of a Levi’s jacket, that senses touch and relays commands to your smartphone. One swipe can call your partner or check the weather. It was designed with cyclists in mind who can’t easily check their phones, and it’s part of a growing movement in the tech industry to deliver creative, hands-free design. Gong thinks that engineers at large corporations like Google have accessibility in mind; it’s part of what drives their decisions for new products.

Display sizes of iPhones have become larger over time.

Sources: Screen Rant, https://screenrant.com/iphone-apple-release-chronological-order-smartphone/, and Apple Tech Specs, https://www.apple.com/iphone-se/specs/

Back in Germany, Joanna Bryson reminds us that products like smartphones should adhere to best practices. These rules may be especially important for phones and other products with AI that are addictive. Disclosure, accountability, and regulation are important for AI, she says. “The correct balance will keep changing. But we have responsibilities and obligations to each other.” She was on an AI Ethics Council at Google, but the committee was disbanded after only one week due to issues with one of its members.

Bryson was upset about the Council’s dissolution but has faith that other regulatory bodies will prevail. OECD.AI, an international nonprofit, has drafted policies to regulate AI, which countries can sign and implement. “As of July 2021, 46 governments have adhered to the AI principles,” its website reads.

Sundar, the media effects professor, also directs Penn State’s Center for Socially Responsible AI. He says that inclusivity is a crucial aspect of social responsibility and how devices using AI are designed. “We have to go beyond first designing technologies and then making them accessible,” he says. “Instead, we should be considering the issues potentially faced by all different kinds of users before even designing them.”

Entomologist Jessica Ware is using new technologies to identify insect species in a changing climate. She shares her suggestions for how we can live harmoniously with creepy crawlers everywhere.

Jessica Ware is obsessed with bugs.

My guest today is a leading researcher on insects, the president of the Entomological Society of America and a curator at the American Museum of Natural History.

You may not think that insects and human health go hand-in-hand, but as Jessica makes clear, they’re closely related. A lot of people care about their health, and the health of other creatures on the planet, and the health of the planet itself, but researchers like Jessica are studying another thing we should be focusing on even more: how these seemingly separate areas are deeply entwined. (This is the theme of an upcoming event hosted by Leaps.org and the Aspen Institute.)

Entomologist Jessica Ware

D. Finnin / AMNH

Maybe it feels like a core human instinct to demonize bugs as gross. We seem to try to eradicate them in every way possible, whether with poison or by giving in to our bloodlust and stomping on them whenever they creep and crawl into sight.

But where did our fear of bugs really come from? Jessica makes a compelling case that a lot of it is cultural, rather than in-born, and we should be following the lead of other cultures that have learned to live with and appreciate bugs.

The truth is that a healthy planet depends on insects. You may feel stung by that news if you hate bugs. Reality bites.

Jessica and I talk about whether learning to live with insects should include eating them and gene editing them so they don’t transmit viruses. She also tells me about her important research into using genomic tools to track bugs in the wild to figure out why and how we’ve lost 50 percent of the insect population since 1970 according to some estimates – bad news because the ecosystems that make up the planet heavily depend on insects. Jessica is leading the way to better understand what’s causing these declines in order to start reversing these trends to save the insects and to save ourselves.