Why Your Brain Falls for Misinformation – And How to Avoid It

Understanding the vulnerabilities of our own brains can help us guard against fake news.

This article is part of the magazine, "The Future of Science In America: The Election Issue," co-published by LeapsMag, the Aspen Institute Science & Society Program, and GOOD.

Whenever you hear something repeated, it feels more true. In other words, repetition makes any statement seem more accurate. So anything you hear again will resonate more each time it's said.

Do you see what I did there? Each of the three sentences above conveyed the same message. Yet each time you read the next sentence, it felt more and more true. Cognitive neuroscientists and behavioral economists like myself call this the "illusory truth effect."

Go back and recall your experience reading the first sentence. It probably felt strange and disconcerting, perhaps with a note of resistance, as in "I don't believe things more if they're repeated!"

Reading the second sentence did not inspire such a strong reaction. Your reaction to the third sentence was tame by comparison.

Why? Because of a phenomenon called "cognitive fluency," meaning how easily we process information. Much of our vulnerability to deception in all areas of life—including to fake news and misinformation—revolves around cognitive fluency in one way or another. And unfortunately, such misinformation can swing major elections.

The Lazy Brain

Our brains are lazy. The more effort it takes to process information, the more uncomfortable we feel about it and the more we dislike and distrust it.

By contrast, the more we like certain data and are comfortable with it, the more we feel that it's accurate. This intuitive feeling in our gut is what we use to judge what's true and false.

Yet no matter how often you have heard that you should trust your gut and follow your intuition, that advice is wrong. You should not trust your gut when evaluating information in areas where you lack expert-level knowledge, at least when you don't want to screw up. Structured information gathering and decision-making processes help us avoid the numerous errors we make when we follow our intuition. And even experts can make serious errors when they don't rely on such decision aids.

These mistakes happen due to mental errors that scholars call "cognitive biases." The illusory truth effect is one of these mental blindspots; there are over 100 altogether. These mental blindspots impact all areas of our life, from health and politics to relationships and even shopping.


The Maladapted Brain

Why do we have so many cognitive biases? It turns out that our intuitive judgments (our gut reactions, our instincts, whatever you call them) aren't adapted for the modern environment. They evolved in the ancestral savanna environment, when we lived in small tribes of 15–150 people and spent our time hunting and foraging.

It's not a surprise, when you think about it. Evolution works on time scales of many thousands of years; our modern informational environment has been around for only a couple of decades, with the rise of the internet and social media.

Unfortunately, that means we're using brains adapted for the primitive conditions of hunting and foraging to judge information and make decisions in a very different world. In the ancestral environment, we had to make quick snap judgments in order to survive, thrive, and reproduce; we're the descendants of those who did so most effectively.

In the modern environment, we can take our time and make much better judgments by using structured evaluation processes that protect us from cognitive biases. We have to train our minds to go against our intuitions if we want to figure out the truth and avoid falling for misinformation.

Yet it feels very counterintuitive to do so. Again, not a surprise: by definition, you have to go against your intuitions. It's not easy, but it's truly the only path if you don't want to be vulnerable to fake news.

The Danger of Cognitive Fluency and Illusory Truth

We already make plenty of mistakes by ourselves, without outside intervention. It's especially difficult to protect ourselves against those who know how to manipulate us. Unfortunately, the purveyors of misinformation excel at exploiting our cognitive biases to get us to buy into fake news.

Consider the illusory truth effect. Our vulnerability to it stems from how our brain processes novel stimuli. The first time we hear something new to us, it's difficult to process mentally. It has to integrate with our existing knowledge framework, and we have to build new neural pathways to make that happen. Doing so feels uncomfortable for our lazy brain, so the statement we heard seems hard for us to swallow.

The next time we hear that same thing, our mind doesn't have to build new pathways. It just has to go down the same ones it built earlier. Granted, those pathways are little more than trails, newly laid down and barely used. It's still hard to travel down that newly established neural path, but much easier than when our brain first had to lay down the trail. As a result, the statement is somewhat easier to swallow.

Each repetition widens and deepens the trail. Each time you hear the same thing, it feels more true, comfortable, and intuitive.

Does it work for information that seems very unlikely? Science says yes! Researchers found that the illusory truth effect applies strongly to implausible as well as plausible statements.

What about if you know better? Surely prior knowledge prevents this illusory truth! Unfortunately not: even if you know better, research shows you're still vulnerable to this cognitive bias, though less than those who don't have prior knowledge.

Sadly, people who are predisposed to more elaborate and sophisticated thinking (likely you, if you're reading this article) are more likely to fall for the illusory truth effect. And guess what: more sophisticated thinkers are also likelier than less sophisticated ones to fall for the cognitive bias known as the bias blind spot, where you ignore your own cognitive biases. So if you think that cognitive biases such as the illusory truth effect don't apply to you, you're likely deluding yourself.

That's why the purveyors of misinformation rely on repeating the same thing over and over and over and over again. They know that despite fact-checking, their repetition will sway people, even some of those who think they're invulnerable. In fact, believing that you're invulnerable will make you more likely to fall for this and other cognitive biases, since you won't be taking the steps necessary to address them.

Other Important Cognitive Biases

What are some other cognitive biases you need to beware of? If you've heard of any cognitive biases, you've likely heard of the "confirmation bias." That refers to our tendency to look for and interpret information in ways that conform to our prior beliefs, intuitions, feelings, desires, and preferences, as opposed to the facts.

Again, cognitive fluency deserves blame. It's much easier to build neural pathways to information that we already possess, especially that around which we have strong emotions; it's much more difficult to break well-established neural pathways if we need to change our mind based on new information. Consequently, we instead look for information that's easy to accept, that which fits our prior beliefs. In turn, we ignore and even actively reject information that doesn't fit our beliefs.

Moreover, the more educated we are, the more likely we are to engage in such active rejection. After all, our smarts give us more ways of arguing against new information that counters our beliefs. That's why research demonstrates that the more educated you are, the more polarized your beliefs will be around scientific issues that have religious or political value overtones, such as stem cell research, human evolution, and climate change. Where might you be letting your smarts get in the way of the facts?

Our minds like to interpret the world through stories, meaning explanatory narratives that link cause and effect in a clear and simple manner. Such stories are a balm to our cognitive fluency, as our mind constantly looks for patterns that explain the world around us in an easy-to-process manner. That leads to the "narrative fallacy," where we fall for convincing-sounding narratives regardless of the facts, especially if the story fits our predispositions and our emotions.

You ever wonder why politicians tell so many stories? What about the advertisements you see on TV or video advertisements on websites, which tell very quick visual stories? How about salespeople or fundraisers? Sure, sometimes they cite statistics and scientific reports, but they spend much, much more time telling stories: simple, clear, compelling narratives that seem to make sense and tug at our heartstrings.

Now, here's something that's actually true: the world doesn't make sense. The world is not simple, clear, and compelling. The world is complex, confusing, and contradictory. Beware of simple stories! Look for complex, confusing, and contradictory scientific reports and high-quality statistics: they're much more likely to contain the truth than the easy-to-process stories.

Another big problem that comes from cognitive fluency: the "attentional bias." We pay the most attention to whatever we find most emotionally salient in our environment, as that's the information easiest for us to process. Most often, such stimuli are negative; we feel a lesser but real attentional bias to positive information.

That's why fear, anger, and resentment represent such powerful tools of misinformers. They know that people will focus on and feel more swayed by emotionally salient negative stimuli, so be suspicious of negative, emotionally laden data.

You should be especially wary of such information in the form of stories framed to fit your preconceptions and repeated. That's because cognitive biases build on top of each other. You need to learn about the most dangerous ones for evaluating reality clearly and making wise decisions, and watch out for them when you consume news, and in other life areas where you don't want to make poor choices.

Fixing Our Brains

Unfortunately, knowledge only weakly protects us from cognitive biases; it's important, but far from sufficient, as the study I cited earlier on the illusory truth effect reveals.

What can we do?

The easiest decision aid is a personal commitment to twelve truth-oriented behaviors called the Pro-Truth Pledge, which you can make by signing the pledge at ProTruthPledge.org. All of these behaviors stem from cognitive neuroscience and behavioral economics research in the field called debiasing, which refers to counterintuitive, uncomfortable, but effective strategies to protect yourself from cognitive biases.

What are these behaviors? The first four relate to you being truthful yourself, under the category "share truth." They're the most important for avoiding falling for cognitive biases when you share information:

Share truth

- Verify: fact-check information to confirm it is true before accepting and sharing it

- Balance: share the whole truth, even if some aspects do not support my opinion

- Cite: share my sources so that others can verify my information

- Clarify: distinguish between my opinion and the facts

The second set of four are about how you can best "honor truth" to protect yourself from cognitive biases in discussions with others:

Honor truth

- Acknowledge: when others share true information, even when we disagree otherwise

- Reevaluate: if my information is challenged, retract it if I cannot verify it

- Defend: defend others when they come under attack for sharing true information, even when we disagree otherwise

- Align: align my opinions and my actions with true information

The last four, under the category "encourage truth," promote broader patterns of truth-telling in our society by providing incentives for truth-telling and disincentives for deception:

Encourage truth

- Fix: ask people to retract information that reliable sources have disproved even if they are my allies

- Educate: compassionately inform those around me to stop using unreliable sources even if these sources support my opinion

- Defer: recognize the opinions of experts as more likely to be accurate when the facts are disputed

- Celebrate: those who retract incorrect statements and update their beliefs toward the truth

Peer-reviewed research has shown that taking the Pro-Truth Pledge is effective for changing people's behavior to be more truthful, both in their own statements and in interactions with others. I hope you choose to join the many thousands of ordinary citizens, and over 1,000 politicians and officials, who have committed to this decision aid, as opposed to going with their gut.

[Adapted from: Dr. Gleb Tsipursky and Tim Ward, Pro Truth: A Practical Plan for Putting Truth Back Into Politics (Changemakers Books, 2020).]


DNA- and RNA-based electronic implants may revolutionize healthcare

The test tubes contain tiny DNA/enzyme-based circuits, which comprise TRUMPET, a new type of electronic device, smaller than a cell.

Implantable electronic devices can significantly improve patients’ quality of life. A pacemaker can encourage the heart to beat more regularly. A neural implant, usually placed at the back of the skull, can help brain function and encourage higher neural activity. Current research on neural implants finds them helpful to patients with Parkinson’s disease, vision loss, hearing loss, and other nerve damage problems. Several of these implants, such as Elon Musk’s Neuralink, have already been approved by the FDA for human use.

Yet pacemakers, neural implants, and other such electronic devices are not without problems. They require a constant supply of electricity from batteries that eventually need replacing. They also cause scarring. “The problem with doing this with electronics is that scar tissue forms,” explains Kate Adamala, an assistant professor of cell biology at the University of Minnesota Twin Cities. “Anytime you have something hard interacting with something soft [like muscle, skin, or tissue], the soft thing will scar. That's why there are no long-term neural implants right now.” To overcome these challenges, scientists are turning to biocomputing processes that use organic materials like DNA and RNA. Other promised benefits include “diagnostics and possibly therapeutic action, operating as nanorobots in living organisms,” writes Evgeny Katz, a professor of bioelectronics at Clarkson University, in his book DNA- And RNA-Based Computing Systems.

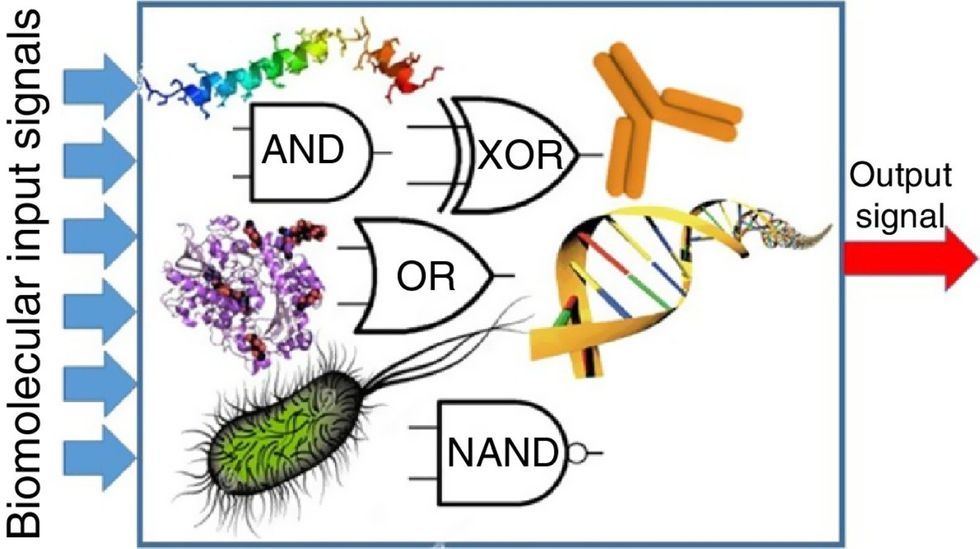


Adamala’s research focuses on developing such biocomputing systems using DNA, RNA, proteins, and lipids. Using these molecules in the biocomputing systems allows the latter to be biocompatible with the human body, resulting in a natural healing process. In a recent Nature Communications study, Adamala and her team created a new biocomputing platform called TRUMPET (Transcriptional RNA Universal Multi-Purpose GatE PlaTform) which acts like a DNA-powered computer chip. “These biological systems can heal if you design them correctly,” adds Adamala. “So you can imagine a computer that will eventually heal itself.”

The basics of biocomputing

Biocomputing and regular computing have many similarities. Like regular computing, biocomputing works by running information through a series of gates, usually logic gates. A logic gate works as a fork in the road for an electronic circuit: depending on its inputs, the signal travels one way or the other, giving one of two outputs. One example is the AND gate, which has two inputs (A and B) and two possible outputs. If both A and B are 1, the AND gate output will be 1. If A is 1 and B is 0, or vice versa, the output will be 0. If both A and B are 0, the output will be 0. While a conventional computer takes these inputs as binary digits, or "bits," each a 0 or a 1, biocomputing uses DNA strands as inputs, whether single- or double-stranded, and often uses fluorescent RNA as an output.
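The AND gate described above can be sketched in a few lines of Python. This is only an illustration of the logic, not of any biological mechanism; the function names `and_gate` and `truth_table` are made up for this example.

```python
from itertools import product


def and_gate(a: int, b: int) -> int:
    """Return 1 only when both binary inputs are 1, otherwise 0."""
    return 1 if (a == 1 and b == 1) else 0


def truth_table(gate):
    """List (a, b, output) for every combination of two binary inputs."""
    return [(a, b, gate(a, b)) for a, b in product((0, 1), repeat=2)]


if __name__ == "__main__":
    for a, b, out in truth_table(and_gate):
        print(f"A={a} B={b} -> {out}")
```

Only the last row of the table, where A and B are both 1, produces an output of 1.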

If the DNA is double-stranded, the system “digests” the DNA or destroys it, which results in non-fluorescence or a “0” output. Conversely, if the DNA is single-stranded, it won’t be digested and instead will be copied by several enzymes in the biocomputing system, resulting in fluorescent RNA or a “1” output. The output of this type of binary system need not be limited to the presence or absence of fluorescence. For example, a “1” output might be the production of the hormone insulin, while a “0” may be that no insulin is produced. “This kind of synergy between biology and computation is the essence of biocomputing,” says Stephanie Forrest, a professor and the director of the Biodesign Center for Biocomputing, Security and Society at Arizona State University.
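As a rough sketch, the strand-based gate described above can be modeled as a function that maps the form of the DNA input to a binary output. The names `Strand`, `strand_gate`, and `readout` are hypothetical and chosen only for this illustration; the real system is chemistry, not software.

```python
from enum import Enum


class Strand(Enum):
    """Form of the DNA input (hypothetical names for this sketch)."""
    SINGLE = "single-stranded"
    DOUBLE = "double-stranded"


def strand_gate(dna: Strand) -> int:
    """Toy model of the gate described in the text.

    Double-stranded DNA is digested, so nothing is produced (0).
    Single-stranded DNA is copied into fluorescent RNA (1).
    """
    return 0 if dna is Strand.DOUBLE else 1


def readout(bit: int, on: str = "fluorescent RNA", off: str = "no fluorescence") -> str:
    """Translate the binary output into whatever the system produces."""
    return on if bit == 1 else off


if __name__ == "__main__":
    for dna in Strand:
        bit = strand_gate(dna)
        print(dna.value, "->", bit, "->", readout(bit))
    # The same bit could stand for a different product, such as insulin.
    print(readout(strand_gate(Strand.SINGLE), on="insulin produced", off="no insulin"))
```

Keeping the gate separate from the readout mirrors the point in the text: the 0 or 1 stays the same, while what it stands for (fluorescence, insulin, or something else) can change.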

“Biocomputing circuits are made of DNA, RNA, proteins and even bacteria,” says Evgeny Katz.

The TRUMPET’s promise

Different environmental factors come into play depending on whether the biocomputing system is placed directly inside a cell within the human body or run in a test tube. When an output is produced inside a cell, the cell's natural processes can amplify this output (for example, a specific protein or DNA strand), creating a strong signal. However, these cells can also be very leaky. “You want the cells to do the thing you ask them to do before they finish whatever their business is, which is to grow, replicate, metabolize,” Adamala explains. “However, often the gate may be triggered without the right inputs, creating a false positive signal. So that's why natural logic gates are often leaky.” While biocomputing outside a cell in a test tube allows for tighter control over the logic gates, the outputs or signals cannot be amplified by a cell and are less potent.

TRUMPET, which is smaller than a cell, taps into both cellular and non-cellular biocomputing benefits. “At its core, it is a nonliving logic gate system,” Adamala states. “It's a DNA-based logic gate system. But because we use enzymes, and the readout is enzymatic [where an enzyme replicates the fluorescent RNA], we end up with signal amplification.” This readout means that the output from the TRUMPET system, a fluorescent RNA strand, can be replicated by nearby enzymes in the platform, making the light signal stronger. “So it combines the best of both worlds,” Adamala adds.


The TRUMPET biocomputing process is relatively straightforward. “If the DNA [input] shows up as single-stranded, it will not be digested [by the logic gate], and you get this nice fluorescent output as the RNA is made from the single-stranded DNA, and that's a 1,” Adamala explains. “And if the DNA input is double-stranded, it gets digested by the enzymes in the logic gate, and there is no RNA created from the DNA, so there is no fluorescence, and the output is 0.” In the story's lead image above, a tube that is “lit” with a purple color represents a binary 1 signal for computing. A tube that is “off” represents a 0.

While still in the research stage, TRUMPET and other biocomputing systems promise significant benefits to personalized healthcare and medicine. These organic-based systems could detect cancer cells or low insulin levels inside a patient’s body. Judee Sharon, the study’s lead author and a graduate student, is already beginning to research TRUMPET's potential for earlier cancer diagnoses. Because the inputs for TRUMPET are single- or double-stranded DNA, any mutated or cancerous DNA could theoretically be detected by the platform through the biocomputing process, allowing cancer and other diseases to be caught earlier.

Adamala sees TRUMPET not only as a detection system but also as a potential cancer drug delivery system. “Ideally, you would like the drug only to turn on when it senses the presence of a cancer cell. And that's how we use the logic gates, which work in response to inputs like cancerous DNA. Then the output can be the production of a small molecule or the release of a small molecule that can then go and kill what needs killing, in this case, a cancer cell. So we would like to develop applications that use this technology to control the logic gate response of a drug’s delivery to a cell.”
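The idea of a drug that only switches on in the presence of a cancer cell can be sketched as a gate whose input is a detected signal and whose output is the release decision. This is a toy model under stated assumptions: the `Cell` class, its `has_cancer_dna` flag, and the function names are hypothetical, and a real system would sense molecules rather than read a boolean.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cell:
    """A stand-in for a cell the gate encounters (hypothetical)."""
    name: str
    has_cancer_dna: bool  # assumed input signal for this sketch


def drug_gate(cell: Cell) -> int:
    """Return 1 to release the small molecule, 0 to stay inactive."""
    return 1 if cell.has_cancer_dna else 0


def targeted_cells(cells):
    """Names of the cells for which the gate output is 1."""
    return [cell.name for cell in cells if drug_gate(cell) == 1]


if __name__ == "__main__":
    sample = [Cell("healthy", False), Cell("tumor", True)]
    print(targeted_cells(sample))
```

In this sketch the healthy cell is left alone because the gate's input is absent, which is the behavior the quote describes.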

Although platforms like TRUMPET are making progress, a lot more work must be done before they can be used commercially. “The process of translating mechanisms and architecture from biology to computing and vice versa is still an art rather than a science,” says Forrest. “It requires deep computer science and biology knowledge,” she adds. “Some people have compared interdisciplinary science to fusion restaurants—not all combinations are successful, but when they are, the results are remarkable.”

Crickets are low on fat, high on protein, and can be farmed sustainably. They are also crunchy.

In today’s podcast episode, Leaps.org Deputy Editor Lina Zeldovich speaks about the health and ecological benefits of farming crickets for human consumption with Bicky Nguyen, who joins Lina from Vietnam. Bicky and her business partner Nam Dang operate an insect farm named CricketOne. Motivated by the idea of sustainable and healthy protein production, they started their unconventional endeavor a few years ago, despite numerous naysayers who didn’t believe that humans would ever consider munching on bugs.

Yet, making creepy crawlers part of our diet offers many health and planetary advantages. Food production needs to match the rise in global population, estimated to reach 10 billion by 2050. One challenge is that some of our current practices are inefficient, polluting and wasteful. According to nonprofit EarthSave.org, it takes 2,500 gallons of water, 12 pounds of grain, 35 pounds of topsoil and the energy equivalent of one gallon of gasoline to produce one pound of feedlot beef, although exact statistics vary between sources.

Meanwhile, insects are easy to grow, high on protein and low on fat. When roasted with salt, they make crunchy snacks. When chopped up, they transform into delicious pâtés, says Bicky, who invents her own cricket recipes and serves them at industry and public events. Maybe that’s why some research predicts that the edible insect market may grow to almost $10 billion by 2030. Tune in for a delectable chat on this alternative and sustainable protein.


Further reading:

More info on Bicky Nguyen

https://yseali.fulbright.edu.vn/en/faculty/bicky-n...

The environmental footprint of beef production

https://www.earthsave.org/environment.htm

https://www.watercalculator.org/news/articles/beef-king-big-water-footprints/

https://www.frontiersin.org/articles/10.3389/fsufs.2019.00005/full

https://ourworldindata.org/carbon-footprint-food-methane

Insect farming as a source of sustainable protein

https://www.insectgourmet.com/insect-farming-growing-bugs-for-protein/

https://www.sciencedirect.com/topics/agricultural-and-biological-sciences/insect-farming

Cricket flour is taking the world by storm

https://www.cricketflours.com/

https://talk-commerce.com/blog/what-brands-use-cricket-flour-and-why/

Lina Zeldovich has written about science, medicine and technology for Popular Science, Smithsonian, National Geographic, Scientific American, Reader’s Digest, the New York Times and other major national and international publications. A Columbia J-School alumna, she has won several awards for her stories, including the ASJA Crisis Coverage Award for Covid reporting, and has been a contributing editor at Nautilus Magazine. In 2021, Zeldovich released her first book, The Other Dark Matter, published by the University of Chicago Press, about the science and business of turning waste into wealth and health. You can find her on http://linazeldovich.com/ and @linazeldovich.