Why Your Brain Falls for Misinformation – And How to Avoid It

Understanding the vulnerabilities of our own brains can help us guard against fake news.

This article is part of the magazine, "The Future of Science In America: The Election Issue," co-published by LeapsMag, the Aspen Institute Science & Society Program, and GOOD.

Whenever you hear something repeated, it feels more true. In other words, repetition makes any statement seem more accurate. So anything you hear again will resonate more each time it's said.

Do you see what I did there? Each of the three sentences above conveyed the same message. Yet each time you read the next sentence, it felt more and more true. Cognitive neuroscientists and behavioral economists like myself call this the "illusory truth effect."

Go back and recall your experience reading the first sentence. It probably felt strange and disconcerting, perhaps with a note of resistance, as in "I don't believe things more if they're repeated!"

Reading the second sentence did not inspire such a strong reaction. Your reaction to the third sentence was tame by comparison.

Why? Because of a phenomenon called "cognitive fluency," meaning how easily we process information. Much of our vulnerability to deception in all areas of life—including to fake news and misinformation—revolves around cognitive fluency in one way or another. And unfortunately, such misinformation can swing major elections.

The Lazy Brain

Our brains are lazy. The more effort it takes to process information, the more uncomfortable we feel about it and the more we dislike and distrust it.

By contrast, the more we like certain data and are comfortable with it, the more we feel that it's accurate. This intuitive feeling in our gut is what we use to judge what's true and false.

Yet no matter how often you've heard that you should trust your gut and follow your intuition, that advice is wrong. You should not trust your gut when evaluating information where you don't have expert-level knowledge, at least when you don't want to screw up. Structured information gathering and decision-making processes help us avoid the numerous errors we make when we follow our intuition. And even experts can make serious errors when they don't rely on such decision aids.

These mistakes happen due to mental errors that scholars call "cognitive biases." The illusory truth effect is one of these mental blind spots; there are over 100 altogether. These mental blind spots impact all areas of our life, from health and politics to relationships and even shopping.

The Maladapted Brain

Why do we have so many cognitive biases? It turns out that our intuitive judgments (our gut reactions, our instincts, whatever you call them) aren't adapted for the modern environment. They evolved for the ancestral savanna environment, when we lived in small tribes of 15–150 people and spent our time hunting and foraging.

It's not a surprise, when you think about it. Evolution works on time scales of many thousands of years; our modern informational environment has been around for only a couple of decades, with the rise of the internet and social media.

Unfortunately, that means we're using brains adapted for the primitive conditions of hunting and foraging to judge information and make decisions in a very different world. In the ancestral environment, we had to make quick snap judgments in order to survive, thrive, and reproduce; we're the descendants of those who did so most effectively.

In the modern environment, we can take our time to make much better judgments by using structured evaluation processes to protect ourselves from cognitive biases. We have to train our minds to go against our intuitions if we want to figure out the truth and avoid falling for misinformation.

Yet it feels very counterintuitive to do so. Again, not a surprise: by definition, you have to go against your intuitions. It's not easy, but it's truly the only path if you don't want to be vulnerable to fake news.

The Danger of Cognitive Fluency and Illusory Truth

We already make plenty of mistakes by ourselves, without outside intervention. It's especially difficult to protect ourselves against those who know how to manipulate us. Unfortunately, the purveyors of misinformation excel at exploiting our cognitive biases to get us to buy into fake news.

Consider the illusory truth effect. Our vulnerability to it stems from how our brain processes novel stimuli. The first time we hear something new to us, it's difficult to process mentally. It has to integrate with our existing knowledge framework, and we have to build new neural pathways to make that happen. Doing so feels uncomfortable for our lazy brain, so the statement that we heard seems difficult for us to swallow.

The next time we hear that same thing, our mind doesn't have to build new pathways. It just has to go down the same ones it built earlier. Granted, those pathways are little more than trails, newly laid down and barely used. It's still hard to travel down that newly established neural path, but much easier than when your brain had to lay down that trail. As a result, the statement is somewhat easier to swallow.

Each repetition widens and deepens the trail. Each time you hear the same thing, it feels more true, comfortable, and intuitive.

Does it work for information that seems very unlikely? Science says yes! Researchers found that the illusory truth effect applies strongly to implausible as well as plausible statements.

What about if you know better? Surely prior knowledge prevents this illusory truth! Unfortunately not: even if you know better, research shows you're still vulnerable to this cognitive bias, though less than those who don't have prior knowledge.

Sadly, people who are predisposed to more elaborate and sophisticated thinking (likely you, if you're reading this article) are more likely to fall for the illusory truth effect. And guess what: more sophisticated thinkers are also likelier than less sophisticated ones to fall for the cognitive bias known as the bias blind spot, where you ignore your own cognitive biases. So if you think that cognitive biases such as the illusory truth effect don't apply to you, you're likely deluding yourself.

That's why the purveyors of misinformation rely on repeating the same thing over and over and over and over again. They know that despite fact-checking, their repetition will sway people, even some of those who think they're invulnerable. In fact, believing that you're invulnerable will make you more likely to fall for this and other cognitive biases, since you won't be taking the steps necessary to address them.

Other Important Cognitive Biases

What are some other cognitive biases you need to beware of? If you've heard of any cognitive biases, you've likely heard of the "confirmation bias." That refers to our tendency to look for and interpret information in ways that conform to our prior beliefs, intuitions, feelings, desires, and preferences, as opposed to the facts.

Again, cognitive fluency deserves the blame. It's much easier to build neural pathways to information that we already possess, especially information about which we have strong emotions; it's much more difficult to break well-established neural pathways if we need to change our mind based on new information. Consequently, we instead look for information that's easy to accept: that which fits our prior beliefs. In turn, we ignore and even actively reject information that doesn't fit our beliefs.

Moreover, the more educated we are, the more likely we are to engage in such active rejection. After all, our smarts give us more ways of arguing against new information that counters our beliefs. That's why research demonstrates that the more educated you are, the more polarized your beliefs will be around scientific issues that have religious or political value overtones, such as stem cell research, human evolution, and climate change. Where might you be letting your smarts get in the way of the facts?

Our minds like to interpret the world through stories, meaning explanatory narratives that link cause and effect in a clear and simple manner. Such stories are a balm to our cognitive fluency, as our mind constantly looks for patterns that explain the world around us in an easy-to-process manner. That leads to the "narrative fallacy," where we fall for convincing-sounding narratives regardless of the facts, especially if the story fits our predispositions and our emotions.

You ever wonder why politicians tell so many stories? What about the advertisements you see on TV or video advertisements on websites, which tell very quick visual stories? How about salespeople or fundraisers? Sure, sometimes they cite statistics and scientific reports, but they spend much, much more time telling stories: simple, clear, compelling narratives that seem to make sense and tug at our heartstrings.

Now, here's something that's actually true: the world doesn't make sense. The world is not simple, clear, and compelling. The world is complex, confusing, and contradictory. Beware of simple stories! Look for complex, confusing, and contradictory scientific reports and high-quality statistics: they're much more likely to contain the truth than the easy-to-process stories.

Another big problem that comes from cognitive fluency: the "attentional bias." We pay the most attention to whatever we find most emotionally salient in our environment, as that's the information easiest for us to process. Most often, such stimuli are negative; we feel a lesser but real attentional bias to positive information.

That's why fear, anger, and resentment represent such powerful tools of misinformers. They know that people will focus on and feel more swayed by emotionally salient negative stimuli, so be suspicious of negative, emotionally laden data.

You should be especially wary of such information in the form of stories framed to fit your preconceptions and repeated. That's because cognitive biases build on top of each other. You need to learn about the most dangerous ones for evaluating reality clearly and making wise decisions, and watch out for them when you consume news, and in other life areas where you don't want to make poor choices.

Fixing Our Brains

Unfortunately, knowledge only weakly protects us from cognitive biases; it's important, but far from sufficient, as the study I cited earlier on the illusory truth effect reveals.

What can we do?

The easiest decision aid is a personal commitment to twelve truth-oriented behaviors called the Pro-Truth Pledge, which you can make by signing the pledge at ProTruthPledge.org. All of these behaviors stem from cognitive neuroscience and behavioral economics research in the field called debiasing, which refers to counterintuitive, uncomfortable, but effective strategies to protect yourself from cognitive biases.

What are these behaviors? The first four relate to you being truthful yourself, under the category "share truth." They're the most important for avoiding falling for cognitive biases when you share information:

Share truth

- Verify: fact-check information to confirm it is true before accepting and sharing it

- Balance: share the whole truth, even if some aspects do not support my opinion

- Cite: share my sources so that others can verify my information

- Clarify: distinguish between my opinion and the facts

The second set of four are about how you can best "honor truth" to protect yourself from cognitive biases in discussions with others:

Honor truth

- Acknowledge: when others share true information, even when we disagree otherwise

- Reevaluate: if my information is challenged, retract it if I cannot verify it

- Defend: defend others when they come under attack for sharing true information, even when we disagree otherwise

- Align: align my opinions and my actions with true information

The last four, under the category "encourage truth," promote broader patterns of truth-telling in our society by providing incentives for truth-telling and disincentives for deception:

Encourage truth

- Fix: ask people to retract information that reliable sources have disproved even if they are my allies

- Educate: compassionately inform those around me to stop using unreliable sources even if these sources support my opinion

- Defer: recognize the opinions of experts as more likely to be accurate when the facts are disputed

- Celebrate: those who retract incorrect statements and update their beliefs toward the truth

Peer-reviewed research has shown that taking the Pro-Truth Pledge is effective for changing people's behavior to be more truthful, both in their own statements and in interactions with others. I hope you choose to join the many thousands of ordinary citizens, and over 1,000 politicians and officials, who have committed to this decision aid, as opposed to going with their gut.

[Adapted from: Dr. Gleb Tsipursky and Tim Ward, Pro Truth: A Practical Plan for Putting Truth Back Into Politics (Changemakers Books, 2020).]

[Editor's Note: To read other articles in this special magazine issue, visit the beautifully designed e-reader version.]

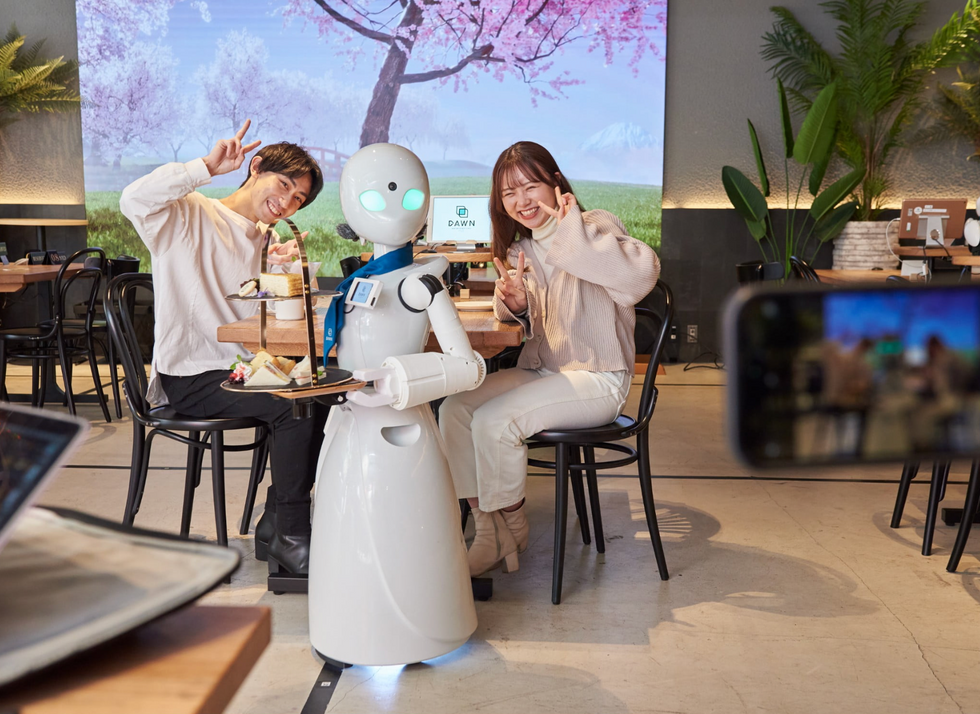

A robot server, controlled remotely by a disabled worker, delivers drinks to patrons at the DAWN cafe in Tokyo.

A sleek, four-foot-tall white robot glides across a cafe storefront in Tokyo’s Nihonbashi district, holding a two-tiered serving tray full of tea sandwiches and pastries. The cafe’s patrons smile and say thanks as they take the tray, but it’s not the robot they’re thanking. Instead, the patrons are talking to the person controlling the robot: a restaurant employee who operates the avatar from the comfort of their home.

It’s a typical scene at DAWN, short for Diverse Avatar Working Network, a cafe that launched in Tokyo six years ago as an experimental pop-up and quickly became a success. Today, the cafe is a permanent fixture in Nihonbashi, staffing roughly 60 workers who control the robots remotely and communicate with customers via a built-in microphone.

More than just a creative idea, however, DAWN is being hailed as a life-changing opportunity. The workers who control the robots remotely (known as “pilots”) all have disabilities that limit their ability to move around freely and travel outside their homes. Worldwide, an estimated 16 percent of people live with a significant disability, and according to the World Health Organization, these disabilities give rise to other problems, such as exclusion from education, unemployment, and poverty.

These are all problems that Kentaro Yoshifuji, founder and CEO of Ory Laboratory, which supplies the robot servers at DAWN, is looking to correct. Yoshifuji, who was bedridden for several years in high school due to an undisclosed health problem, launched the company to help enable people who are house-bound or bedridden to more fully participate in society, as well as end the loneliness, isolation, and feelings of worthlessness that can sometimes go hand-in-hand with being disabled.

“It’s heartbreaking to think that [people with disabilities] feel they are a burden to society, or that they fear their families suffer by caring for them,” said Yoshifuji in an interview in 2020. “We are dedicating ourselves to providing workable, technology-based solutions. That is our purpose.”

Shota Kuwahara, a DAWN employee with muscular dystrophy, agrees. "There are many difficulties in my daily life, but I believe my life has a purpose and is not being wasted," he says. "Being useful, able to help other people, even feeling needed by others, is so motivational."

A woman receives a mammogram, which can detect the presence of tumors in a patient's breast.

When a patient is diagnosed with early-stage breast cancer, having surgery to remove the tumor is considered the standard of care. But what happens when a patient can’t have surgery?

Whether it’s due to high blood pressure, advanced age, heart issues, or other reasons, some breast cancer patients don’t qualify for a lumpectomy—one of the most common treatment options for early-stage breast cancer. A lumpectomy surgically removes the tumor while keeping the patient’s breast intact, while a mastectomy removes the entire breast and nearby lymph nodes.

Fortunately, a new technique called cryoablation is now available for breast cancer patients who either aren’t candidates for surgery or don’t feel comfortable undergoing a surgical procedure. With cryoablation, doctors use an ultrasound or CT scan to locate any tumors inside the patient’s breast. They then insert small, needle-like probes into the patient's breast which create an “ice ball” that surrounds the tumor and kills the cancer cells.

Cryoablation has been used for decades to treat cancers of the kidneys and liver—but only in the past few years have doctors been able to use the procedure to treat breast cancer patients. And while clinical trials have shown that cryoablation works for tumors smaller than 1.5 centimeters, a recent clinical trial at Memorial Sloan Kettering Cancer Center in New York has shown that it can work for larger tumors, too.

In this study, doctors performed cryoablation on patients whose tumors were, on average, 2.5 centimeters. The cryoablation procedure lasted for about 30 minutes, and patients were able to go home on the same day following treatment. Doctors then followed up with the patients after 16 months. In the follow-up, doctors found the recurrence rate for tumors after using cryoablation was only 10 percent.

For patients who don’t qualify for surgery, radiation and hormonal therapy are typically used to treat tumors. However, said Yolanda Brice, M.D., an interventional radiologist at Memorial Sloan Kettering Cancer Center, “when treated with only radiation and hormonal therapy, the tumors will eventually return.” Cryoablation, Brice said, could be a more effective way to treat cancer for patients who can’t have surgery.

“The fact that we only saw a 10 percent recurrence rate in our study is incredibly promising,” she said.