With Lab-Grown Chicken Nuggets, Dumplings, and Burgers, Futuristic Foods Aim to Seem Familiar

Shiok Meats' lab-grown "shrimp" dumplings, alongside a photo of the company's CTO, Ka Yi Ling, in a lab.

Sandhya Sriram is at the forefront of the expanding lab-grown meat industry in more ways than one.

She's the CEO and co-founder of one of fewer than 30 companies that are even in this game in the first place. Her Singapore-based company, Shiok Meats, is the only one to pop up in Southeast Asia. And it's the only company in the world that's attempting to grow crustaceans in a lab, starting with shrimp. This spring, the company debuted a prototype of its shrimp and completed a seed funding round of $4.6 million.

Yet despite all of these wins, Sriram's own mother won't try the company's shrimp. She's a staunch, lifelong vegetarian, adhering to a strict definition of what that means.

"[Lab-grown meat] is kind of a brave new world for a lot of people, and food isn't something people like being brave about. It's really a rather hard-wired thing," says Kate Krueger, the research director at New Harvest, a non-profit accelerator for cellular agriculture (the umbrella field that studies how to grow animal products in the lab, including meat, dairy, and eggs).

It's so hard-wired, in fact, that trends in food inform our species' origin story. In 2017, a group of paleoanthropologists caused an upset when they unearthed fossils in present-day Morocco showing that our earliest human ancestors lived much farther north and 100,000 years earlier than expected -- the remains date back 300,000 years. But the excavation included not only bones and tools; it also painted a clear picture of the prevailing menu at the time: The oldest humans were apparently chomping on tons of gazelle, as well as wildebeest and zebra when they could find them, plus the occasional seasonal ostrich egg.

These were people with a diet shaped by available resources, but also by the ability to cook in the first place. In his book Catching Fire: How Cooking Made Us Human, Harvard primatologist Richard Wrangham writes that the very thing that allowed for the evolution of Homo sapiens was the ability to transform raw ingredients into edible nutrients through cooking.

Today, our behavior and feelings around food are the product of local climate, crops, animal populations, and tools, but also religion, tradition, and superstition. So what happens when you add science to the mix? Turns out, we still trend toward the familiar. The innovations in lab-grown meat that are picking up the most steam are foods like burgers, not meat chips, and salmon, not salmon-cod-tilapia hybrids. It's not for lack of imagination; it's because the industry's practitioners know that a lifetime of food memories is a hard thing to contend with. So far, the nascent lab-grown meat industry is not so much disrupting the oldest culture we have as being shaped by it.

Not a single piece of lab-grown meat is commercially available to consumers yet, and already so much ink has been spilled debating if it's really meat, if it's kosher, if it's vegetarian, if it's ethical, if it's sustainable. But whether or not the industry succeeds and sticks around is almost moot -- watching these conversations and innovations unfold serves as a mirror reflecting back who we are, what concerns us, and what we aspire to.

The More Things Change, the More They Stay the Same

The building blocks for making lab-grown meat right now are remarkably similar, no matter what type of animal protein a company is aiming to produce.

First, a small biopsy, about the size of a sesame seed, is taken from a single animal. Then, the muscle cells are isolated and added to a nutrient-dense culture in a bioreactor -- the same kind of vessel used to make beer -- where the cells can multiply, grow, and form muscle tissue. This tissue can then be mixed with additives like nutrients, seasonings, binders, and sometimes colors to form a food product. Whether a company is attempting to make chicken, fish, beef, shrimp, or any other animal protein in a lab, the basic steps remain similar. Cells from different animals do behave differently, though, and each company has its own proprietary techniques and tools. Some, for example, use fetal calf serum in their cell culture medium, while others, aiming for a more vegan approach, eschew it.

According to Mark Post, who made the first lab-grown hamburger at Maastricht University in the Netherlands in 2013, the cells of just one cow can give rise to 175 million four-ounce burgers. With today's conventional burger-making methods, you'd need to slaughter 440,000 cows for the same result. The projected difference in purely material efficiency between the two systems is staggering. The environmental impact is harder to predict, though. Some companies claim that their lab-grown meat requires 99 percent less land and 96 percent less water than traditional farming methods -- and that rearing fewer cows, specifically, would reduce methane emissions -- but the energy cost of running a lab-grown-meat production facility at an industrial scale, especially as compared to small-scale, pasture-raised farming, could be problematic. It's difficult to truly measure any of this in a burgeoning industry.
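Post's two numbers can be cross-checked against each other. The short Python sketch below uses only the figures quoted above (the constant and function names are illustrative, not from any source) to compute the conventional yield they imply: about 398 four-ounce burgers, or roughly 99 pounds of patty, per slaughtered cow. It captures material efficiency only; it says nothing about the energy or environmental costs the paragraph flags as hard to measure.

```python
# Figures quoted by Mark Post in the paragraph above.
LAB_BURGERS = 175_000_000        # four-ounce burgers from one cow's cells
COWS_FOR_SAME_OUTPUT = 440_000   # cows slaughtered conventionally for the same output
OUNCES_PER_BURGER = 4
OUNCES_PER_POUND = 16


def burgers_per_slaughtered_cow(total_burgers: int, cows: int) -> float:
    """Implied conventional yield: burgers obtained per slaughtered cow."""
    return total_burgers / cows


def burgers_to_pounds(burgers: float, ounces_each: float = OUNCES_PER_BURGER) -> float:
    """Convert a burger count into pounds of patty."""
    return burgers * ounces_each / OUNCES_PER_POUND


per_cow = burgers_per_slaughtered_cow(LAB_BURGERS, COWS_FOR_SAME_OUTPUT)
print(f"Implied conventional yield: {per_cow:.0f} burgers "
      f"(about {burgers_to_pounds(per_cow):.0f} lb of patty) per cow")
print(f"One biopsied cow stands in for {COWS_FOR_SAME_OUTPUT:,} slaughtered cows")
```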

At this point, growing something like an intact shrimp tail or a marbled steak in a lab is still a Holy Grail. It would require reproducing the complex musculoskeletal and vascular structure of meat, not just the cellular basis, and no one has successfully done it yet. Until then, many companies working on lab-grown meat are perfecting mince. Each new company's prototype demo feels distinctly regional, though: At the Disruption in Food and Sustainability Summit in March, Shiok (which is pronounced "shook," and is Singaporean slang for "very tasty and delicious") first shared a prototype of its shrimp as an ingredient in siu-mai, a dumpling of Chinese origin and a fixture at dim sum. JUST, a company based in the U.S., produced a demo chicken nugget.

As Jean Anthelme Brillat-Savarin, the 19th-century founder of the gastronomic essay, famously said, "Show me what you eat, and I'll tell you who you are."

For many of these companies, the baseline animal protein they are trying to innovate also feels tied to place and culture: When meat comes from a bioreactor, not a farm, the world's largest exporter of seafood could be a landlocked region, and beef could be "reared" in a bayou, yet the handful of lab-grown fish companies, like Finless Foods and BlueNalu, hug the American coasts; VOW, based in Australia, started making lab-grown kangaroo meat in August; and of course the world's first lab-grown shrimp is in Singapore.

"In the U.S., shrimps are either seen in shrimp cocktail, shrimp sushi, and so on, but [in Singapore] we have everything from shrimp paste to shrimp oil," Sriram says. "It's used in noodles and rice, as flavoring in cup noodles, and in biscuits and crackers as well. It's seen in every form, shape, and size. It just made sense for us to go after a protein that was widely used."

It's tempting to assume that innovating on pillars of cultural significance might be easier if the focus were on a whole new kind of food to begin with, not your popular dim sum items or fast food offerings. But it's proving to be quite the opposite.

"That could have been one direction where [researchers] just said, 'Look, it's really hard to reproduce raw ground beef. Why don't we just make something completely new, like meat chips?'" says Mike Lee, co-founder and co-CEO of Alpha Food Labs, which works on food innovation more broadly. "While that strategy's interesting, I think we've got so many new things to explain to people that I don't know if you want to also explain this new format of food that you've never, ever seen before."

We've seen this same cautious approach to change before in other ways that relate to cooking. Perhaps the most obvious example is the kitchen range. As Bee Wilson writes in her book Consider the Fork: A History of How We Cook and Eat, in the 1880s, convincing ardent coal-range users to switch to newfangled gas was a hard sell. To win them over, inventor William Sugg designed a range that used gas, but aesthetically looked like the coal ones already in fashion at the time -- and which in some visual ways harkened even further back to the days of open-hearth cooking. Over time, gas range designs moved further away from those of the past, but the initial jump was only made possible through familiarity. There's a cleverness to meeting people where they are.

"New gadgets feel safest when they remind us of other objects that we already know," writes Wilson. "It is far harder to accept a technology that is entirely new."

Maybe someday we won't want anything other than meat chips, but not today.

Measuring Success

A 2018 Gallup poll shows that in the U.S., rates of true vegetarianism and veganism have been stagnant for as long as they've been measured. When the poll began in 1999, six percent of Americans were vegetarian, a number that remained steady until 2012, when the number dropped one point. As of 2018, it remained at five percent.

In 2012, when Gallup first measured the percentage of vegans, the rate was two percent. By 2018 it had gone up just one point, to three percent. Increasing awareness of animal welfare, health, and environmental concerns doesn't seem to be incentive enough to convince Americans, en masse, to completely slam the door on a food culture characterized in many ways by its emphasis on traditional meat consumption.

Wilson writes that "experimenting with new foods has always been a dangerous business. In the wild, trying out some tempting new berries might lead to death. A lingering sense of this danger may make us risk-averse in the kitchen."

That might be one psychologically deep-seated reason that Americans are so resistant to ditching meat altogether. But a middle ground is emerging with a rise in flexitarianism, which aims to reduce reliance on traditional animal products. "Americans are eager to include alternatives to animal products in their diets, but are not willing to give up animal products completely," the same 2018 Gallup poll reported. This may represent the best opportunity for lab-grown meat to wedge itself into the culture.

Quantifying a population's willingness to try a lab-grown version of its favorite protein is proving difficult, however, because it's still science fiction to a regular consumer. Measuring popular opinion of something that doesn't really exist yet is a dubious pastime.

In 2015, University of Wisconsin School of Public Health researchers Linnea Laestadius and Mark Caldwell conducted a study using online comments on articles about lab-grown meat to suss out public response to the food. The results showed a mostly negative attitude, but that was only two years into a field that is six years old today. Already public opinion may have shifted.

Shiok Meats' Sriram and her co-founder Ka Yi Ling have used online surveys to get a sense of the landscape, but they also sometimes take a more direct approach. Every time they give a public talk about their company and their shrimp, they poll their audience before and after the talk, using the question, "How many of you are willing to try, and pay, to eat lab-grown meat?"

They consistently find that the percentage of people willing to try goes up from 50 to 90 percent after hearing their talk, which includes information about the downsides of traditional shrimp farming (for one thing, many shrimp are raised in sewage, and peeled and deveined by slaves) and a bit of information about how lab-grown animal protein is being made now. I saw this play out myself when Ling spoke at a New Harvest conference in Cambridge, Massachusetts, in July.

"A lot of consumers get over the ick factor when you tell them that most of the food that you're eating right now has entered the lab at some point," Sriram says. "We're not going to grow our meat in the lab always. It's in the lab right now, because we're in R&D. Once we go into manufacturing ... it's going to be a food manufacturing facility, where a lot of food comes from."

The downside of the University of Wisconsin's and Shiok Meats' approaches to capturing public opinion is that they each look at self-selecting groups: Online commenters are often fueled by a need to complain, and it's likely that anyone attending a talk by the co-founders of a lab-grown meat company already has some level of open-mindedness.

So Sriram says that she and Ling are also using another method to assess the landscape, and it's somewhere in the middle. They've been watching public responses to the closest available product to lab-grown meat that's on the market: Impossible Burger. As a 100 percent plant-based burger, it's not quite the same, but this bleedable, searable patty is still very much the product of science and laboratory work. Its remarkable similarity to beef is courtesy of yeast that have been genetically engineered to carry DNA from soy plant roots, so that as they multiply they produce soy leghemoglobin, a protein that contains heme. This plant-derived heme can look and act like the heme found in animal muscle.

So far, the sciencey underpinnings of the burger don't seem to be turning people off. In just four years, it's already found its place within other American food icons. It's readily available everywhere from nationwide Burger Kings to Boston's Warren Tavern, which has been in operation since 1780, is one of the oldest pubs in America, and is even named after the man who sent Paul Revere on his midnight ride. Some people have already grown so attached to the Impossible Burger that they will actually walk out of a restaurant that's out of stock. Demand for the burger is outpacing production.

"Even though [Impossible] doesn't consider their product cellular agriculture, it's part of a spectrum of innovation," Krueger says. "There are novel proteins that you're not going to find in your average food, and there's some cool tech there. So to me, that does show a lot of willingness on people's part to think about trying something new."

The message for those working on animal-based lab-grown meat is clear: People will accept innovation on their favorite food if it tastes good enough and evokes the same emotional connection as the real deal.

"How people talk about lab-grown meat now, it's still a conversation about science, not about culture and emotion," Lee says. But he's confident that the conversation will start to shift in that direction if the companies doing this work can nail the flavor memory, above all.

And then, proving how much power flavor holds over us, we quickly derail into a conversation about Doritos, which he calls "maniacally delicious." The chips carry no health value whatsoever and are a native product of food engineering and manufacturing -- just watch how hard it is for Bon Appétit associate food editor Claire Saffitz to try to recreate them in the magazine's test kitchen -- yet devotees remain unfazed and crunch on.

"It's funny because it shows you that people don't ask questions about how [some foods] are made, so why are they asking so many questions about how lab-grown meat is made?" Lee asks.

For all the hype around Impossible Burger, there are still controversies and hand-wringing around lab-grown meat. Some people are grossed out by the idea, some people are confused, and if you're the U.S. Cattlemen's Association (USCA), you're territorial. Last year, the group sent a petition to the USDA to "exclude products not derived directly from animals raised and slaughtered from the definition of 'beef' and 'meat.'"

"I have this working hypothesis that if you look at the nation in 50-year spurts, we revolve back and forth between artisanal, all-natural food that's unadulterated and pure, and food that's empowered by science," Lee says. "Maybe we've only had one lap around the track on that, but I think we are probably three or four big food safety scares away from everyone, especially younger generations, embracing lab-grown meat as like, 'Science is good; nature is dirty, and can kill you.'"

Food culture goes beyond just the ingredients we know and love — it's also about how we interact with them, produce them, and expect them to taste and feel when we bite down. We accept a margin of difference among a fast food burger, a backyard burger from the grill, and a gourmet burger. Maybe someday we'll accept the difference between a burger created by killing a cow and a burger created by biopsying one.

Looking to the Future

Every time we engage with food, "we are enacting a ritual that binds us to the place we live and to those in our family, both living and dead," Wilson writes in Consider the Fork. "Such things are not easily shrugged off. Every time a new cooking technology has been introduced, however useful … it has been greeted in some quarters with hostility and protestations that the old ways were better and safer."

This is why it might be hard for a vegetarian mother to try her daughter's lab-grown shrimp, no matter how ethically it was produced or how awe-inspiring the invention is. Yet food cultures can and do change. "They're not these static things," says Benjamin Wurgaft, a historian whose book Meat Planet: Artificial Flesh and the Future of Food comes out this month. "The real tension seems to be between slow change and fast change."

In fact, the very definition of the word "meat" has never exclusively meant what the USCA wants it to mean. Before the 12th century, when it appeared in Old English as "mete," it wasn't very specific at all and could be used to describe anything from "nourishment," to "food item," to "fodder," to "sustenance." By the 13th century it had narrowed to mean "flesh of warm-blooded animals killed and used as food." And yet the British mincemeat pie lives on as a sweet Christmas treat full of -- to the surprise of many non-Brits -- spiced, dried fruit. Since 1901, we've also used this word with ease as a general term for anything that's substantive -- as in, "the meat of the matter." There is room for yet more definitions to pile on.

"The conversation [about lab-grown meat] has changed remarkably in the last six years," Wurgaft says. "It has become a conversation about whether or not specific companies will bring a product to market, and that's a really different conversation than asking, 'Should we produce meat in the lab?'"

As part of the field research for his book, Wurgaft visited the Rijksmuseum Boerhaave, a Dutch museum that specializes in the history of science and medicine. It was 2015, and he was there to see an exhibit on the future of food. Just two years earlier, Mark Post had made that first lab-grown hamburger about a two-and-a-half hour drive south of the museum. When Wurgaft arrived, he found the novel invention, which Post had donated to the museum, already preserved and served up on a dinner plate, the whole outfit protected by plexiglass.

"They put this in the exhibit as if it were already part of the historical records, which to a historian looked really weird," Wurgaft says. "It looked like somebody taking the most recent supercomputer and putting it in a museum exhibit saying, 'This is the supercomputer that changed everything,' as if you were already 100 years in the future, looking back."

It seemed to symbolize an effort to codify a lab-grown hamburger as a matter of Dutch pride, perhaps someday occupying a place in people's hearts right next to the stroopwafel.

Lee likes to imagine that part of the legacy of lab-grown meat, if it succeeds, will be to inspire entirely new fads in cooking -- a step beyond ones like the crab-filled avocado of the 1960s or the pesto of the 1980s in the U.S.

"[Lab-grown meat] is inherently going to be a different quality than anything we've done with an animal," he says. "Look at every cut [of meat] on the sphere today -- each requires a slightly different cooking method to optimize the flavor of that cut. Who's to say that we couldn't get a whole school of how to cook with lab-grown meat?"

At this point, most of us have no way of trying lab-grown meat. It remains exclusively available through sometimes gimmicky demos reserved for investors and the media. But Wurgaft says the stories we tell about this innovation, the articles we write, the films we make, and yes, even the museum exhibits we curate, all hold as much cultural significance as the product itself might someday.

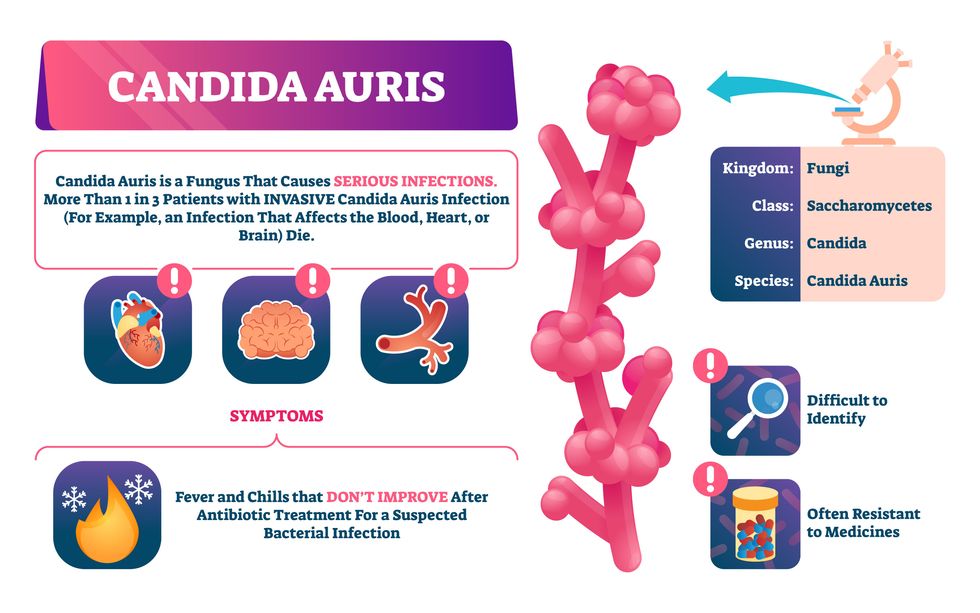

Doctors worry that fungal pathogens may cause the next pandemic.

Bacterial antibiotic resistance has been a concern in the medical field for several years. Now a new, similar threat is arising: drug-resistant fungal infections. The Centers for Disease Control and Prevention considers antifungal and antimicrobial resistance to be among the world’s greatest public health challenges.

One particular type of fungal infection caused by Candida auris is escalating rapidly throughout the world. And to make matters worse, C. auris is becoming increasingly resistant to current antifungal medications, which means that if you develop a C. auris infection, the drugs your doctor prescribes may not work. “We’re effectively out of medicines,” says Thomas Walsh, founding director of the Center for Innovative Therapeutics and Diagnostics, a translational research center dedicated to solving the antimicrobial resistance problem. Walsh spoke about the challenges at a Demy-Colton Virtual Salon, one in a series of interactive discussions among life science thought leaders.

Although C. auris typically doesn’t sicken healthy people, it afflicts immunocompromised hospital patients and may cause severe infections that can lead to sepsis, a life-threatening condition in which the overwhelmed immune system begins to attack the body’s own organs. Between 30 and 60 percent of patients who contract a C. auris infection die from it, according to the CDC. People who are undergoing stem cell transplants, have catheters or have taken antifungal or antibiotic medicines are at highest risk. “We’re coming to a perfect storm of increasing resistance rates, increasing numbers of immunosuppressed patients worldwide and a bug that is adapting to higher temperatures as the climate changes,” says Prabhavathi Fernandes, chair of the National BioDefense Science Board.

Although medical professionals aren’t concerned at this point about C. auris evolving to affect healthy people, they worry that its presence in hospitals can turn routine surgeries into life-threatening calamities. “It’s coming,” says Fernandes. “It’s just a matter of time.”

An emerging global threat

“Fungi are found in the environment,” explains Fernandes, so Candida spores can easily wind up on people’s skin. In hospitals, they can be transferred from contact with healthcare workers or contaminated surfaces. Most Candida species aren’t well-adapted to our body temperatures so they aren’t a threat. C. auris, however, thrives at human body temperatures. It can enter the body during medical treatments that break the skin—and cause an infection. Overall, fungal infections cost some $48 billion in the U.S. each year. And infection rates are increasing because, in an ironic twist, advanced medical therapies are enabling severely ill patients to live longer and, therefore, be exposed to this pathogen.

The first-ever case of a C. auris infection was reported in Japan in 2009, although an analysis of Candida samples dated the earliest strain to a 1996 sample from South Korea. Since then, five separate varieties – called clades, which are similar to strains among bacteria – developed independently in different geographies: South Asia, East Asia, South Africa, South America and, recently, Iran. So far, C. auris infections have been reported in 35 countries.

In the U.S., the first infection was reported in 2016, and the CDC started tracking it nationally two years later. Since then, 5,654 cases have been reported to the CDC, which tracks only U.S. data.

What’s more notable than the number of cases is their rate of increase. In 2016, new cases increased by 175 percent and, on average, they have approximately doubled every year. From 2016 through 2022, the number of infections jumped from 63 to 2,377, a roughly 37-fold increase.
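The endpoints above make it possible to check how "approximately doubled every year" squares with "roughly 37-fold." A minimal Python sketch, assuming smooth compound growth between 2016 and 2022 (real surveillance counts jump around from year to year, and the function names here are illustrative), shows that going from 63 to 2,377 cases is a 37.7-fold rise, equivalent to about 83 percent growth per year, or a doubling roughly every 1.15 years. Strict annual doubling over six years would have produced a 64-fold rise.

```python
import math

# Endpoints quoted above: U.S. C. auris infections in 2016 and 2022.
CASES_2016 = 63
CASES_2022 = 2_377
YEARS = 2022 - 2016


def fold_change(start: float, end: float) -> float:
    """How many times larger the end value is than the start value."""
    return end / start


def compound_annual_growth(start: float, end: float, years: int) -> float:
    """Constant yearly growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


def doubling_time_years(annual_rate: float) -> float:
    """Years needed to double at a constant compound annual rate."""
    return math.log(2) / math.log(1 + annual_rate)


fold = fold_change(CASES_2016, CASES_2022)
rate = compound_annual_growth(CASES_2016, CASES_2022, YEARS)
print(f"{fold:.1f}-fold increase over {YEARS} years")
print(f"Implied steady growth: {rate:.0%} per year, "
      f"doubling every {doubling_time_years(rate):.2f} years")
print(f"Strict yearly doubling would give {2 ** YEARS}-fold")
```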

“This reminds me of what we saw with epidemics from 2013 through 2020… with Ebola, Zika and the COVID-19 pandemic,” says Robin Robinson, CEO of Spriovas and founding director of the Biomedical Advanced Research and Development Authority (BARDA), which is part of the U.S. Department of Health and Human Services. These epidemics followed a hockey-stick trajectory, Robinson says: a period of gradual growth leading to a sharp spike, like the shape of a hockey stick.

Another challenge is that medics currently don’t have rapid diagnostic tests for fungal infections. Patients are often misdiagnosed because C. auris resembles several other, easily treated fungi, or they are diagnosed long after the infection begins, when it is harder to treat.

The problem is that existing diagnostic tests can identify C. auris only once it reaches the bloodstream. Yet because this pathogen infects bodily tissues first, it should be possible to catch it much earlier, before it becomes life-threatening. “We have to diagnose it before it reaches the bloodstream,” Walsh says.

“We need to focus on rapid diagnostic tests that do not rely on a positive blood culture,” says John Sperzel, president and CEO of T2 Biosystems, a company specializing in diagnostics solutions. Blood cultures typically take two to three days for the concentration of Candida to become large enough to detect. The company’s novel test detects about 90 percent of Candida species within three to five hours—thanks to its ability to spot minute quantities of the pathogen in blood samples instead of waiting for them to incubate and proliferate.

Tackling the resistance challenge

The most alarming fact is that some Candida infections no longer respond to standard therapeutics. The number of cases that stopped responding to echinocandin, the first-line therapy for most Candida infections, tripled in 2020, according to a study by the CDC.

Now, each of the first four clades shows varying levels of resistance to all three commonly prescribed classes of antifungal medications: azoles, echinocandins, and polyenes. For example, 97 percent of infections from C. auris Clade I are resistant to fluconazole, 54 percent to voriconazole, and 30 percent to amphotericin B. Nearly half are resistant to multiple antifungal drugs. Even among Clade II fungi, which have the least resistance of all the clades, 11 to 14 percent have become resistant to fluconazole.

Antifungal therapies typically target specific chemical compounds present in fungal cell membranes but not in human cells; otherwise the medicine would damage our own tissues. Fluconazole and other azole antifungals block the production of a compound called ergosterol, preventing the fungal cells from replicating. Over the years, however, C. auris has evolved to resist them, so existing antifungal medications don’t work as well anymore.

A newer class of drugs called echinocandins targets a different part of the fungal cell. “The echinocandins – like caspofungin – inhibit (a part of the fungi) involved in making glucan, which is an essential component of the fungal cell wall and is not found in human cells,” Fernandes says. New antifungal treatments are needed, she adds, but there are only a few magic bullets that will hit just the fungus and not the human cells.

Research to fight fungal infections has also been hampered by a lack of government support. That is changing now that BARDA is requesting proposals to develop novel antifungals. “The scope includes C. auris, as well as antifungals following a radiological/nuclear emergency,” says BARDA spokesperson Elleen Kane.

Another challenge is the limited number of patients available to participate in clinical trials. Large numbers are needed, but the available patients are quite sick and often die before trials can be completed. Consequently, few biopharmaceutical companies are developing new treatments for C. auris.

ClinicalTrials.gov reports only two drugs in development for invasive C. auris infections (those that can spread throughout the body rather than stay localized in one area, as throat or vaginal infections do): ibrexafungerp, by Scynexis, Inc., and fosmanogepix, by Pfizer.

Scynexis’ ibrexafungerp appears active against C. auris and other emerging, drug-resistant pathogens. The FDA recently approved it as a therapy for vaginal yeast infections and it is undergoing Phase III clinical trials against invasive candidiasis in an attempt to keep the infection from spreading.

“Ibrexafungerp is structurally different from other echinocandins,” Fernandes says, because it targets a different part of the fungus. “We’re lucky it has activity against C. auris.”

Pfizer’s fosmanogepix is in Phase II clinical trials for patients with invasive fungal infections caused by multiple Candida species. Results are showing significantly better survival rates for people taking fosmanogepix.

Although C. auris does pose a serious threat to healthcare worldwide, scientists are trying to stay optimistic: because they recognized the problem early, they might have solutions in place before the perfect storm hits. “There is a bit of hope,” says Robinson. “BARDA has finally been able to fund the development of new antifungal agents and, hopefully, this year we can get several new classes of antifungals into development.”

New elevators could lift up our access to space

A space elevator would be cheaper and cleaner than using rockets

Story by Big Think

When people first started exploring space in the 1960s, it cost upwards of $80,000 (adjusted for inflation) to put a single pound of payload into low-Earth orbit.

A major reason for this high cost was the need to build a new, expensive rocket for every launch. That really started to change when SpaceX began making cheap, reusable rockets, and today, the company is ferrying customer payloads to LEO at a price of just $1,300 per pound.

This is making space accessible to scientists, startups, and tourists who never could have afforded it previously, but the cheapest way to reach orbit might not be a rocket at all — it could be an elevator.

The space elevator

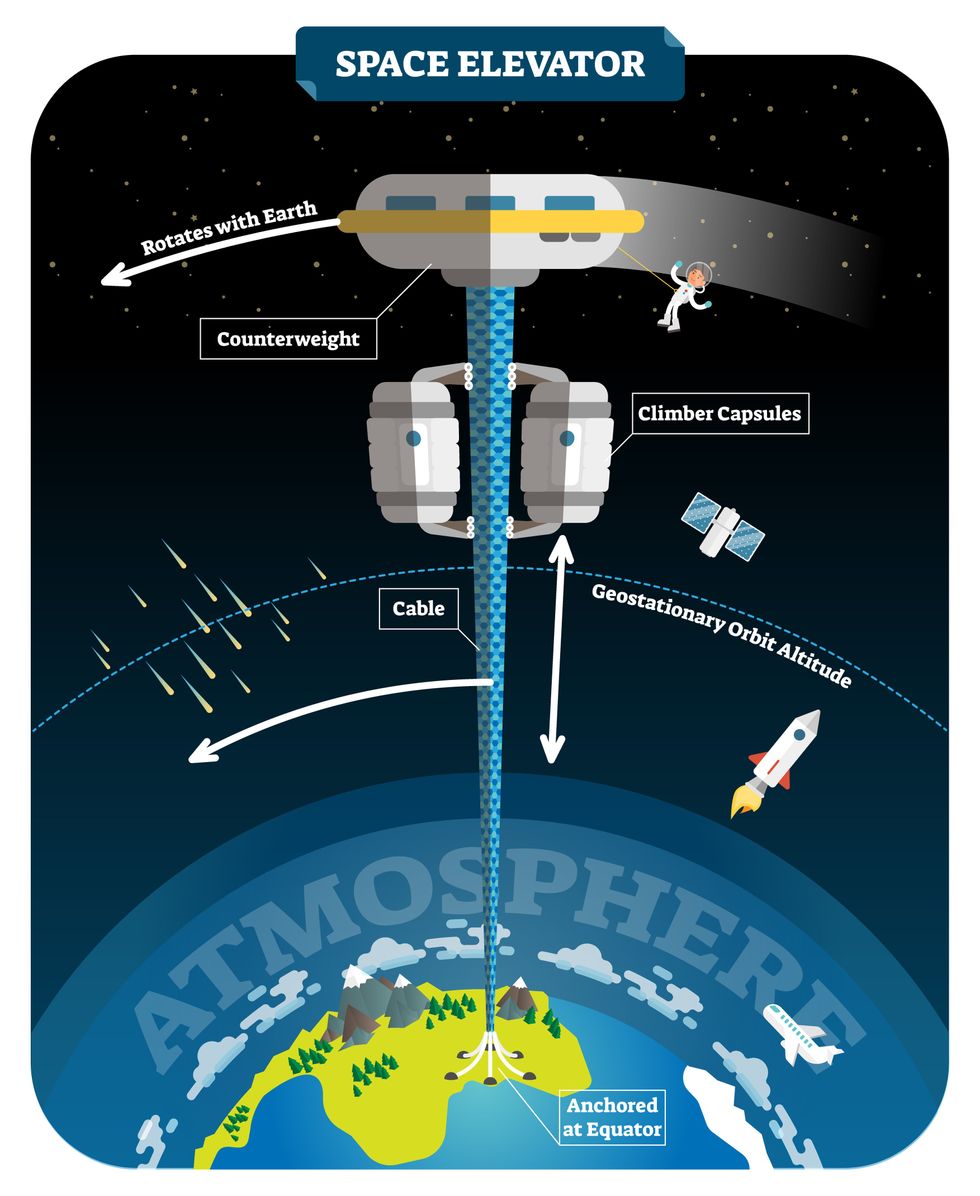

The seeds for the space elevator were first planted in 1895 by Russian scientist Konstantin Tsiolkovsky, who, after visiting the 1,000-foot (305 m) Eiffel Tower, published a paper theorizing about the construction of a structure 22,000 miles (35,400 km) high.

This would provide access to geostationary orbit, an altitude where objects appear to remain fixed above Earth’s surface, but Tsiolkovsky conceded that no material could support the weight of such a tower.
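The 22,000-mile figure is not arbitrary: to stay fixed above one spot, an object must orbit once per sidereal day (about 23 hours 56 minutes). A minimal Python sketch using Kepler's third law for a circular orbit, with standard values for Earth's constants that are supplied here rather than taken from the article, puts that orbit about 35,786 km (roughly 22,236 miles) above the equator. The article's 35,400 km is simply 22,000 miles converted.

```python
import math

# Standard physical constants (supplied here; not taken from the article).
GM_EARTH = 3.986004418e14                # m^3/s^2, Earth's gravitational parameter
SIDEREAL_DAY_S = 86_164.0905             # s, one rotation of Earth relative to the stars
EARTH_EQUATORIAL_RADIUS_M = 6_378_137.0  # m, WGS 84 equatorial radius
METERS_PER_MILE = 1_609.344


def circular_orbit_radius(period_s: float, gm: float = GM_EARTH) -> float:
    """Kepler's third law for a circular orbit: r = (GM * T^2 / (4 * pi^2))^(1/3)."""
    return (gm * period_s ** 2 / (4 * math.pi ** 2)) ** (1 / 3)


r_geo_m = circular_orbit_radius(SIDEREAL_DAY_S)
altitude_m = r_geo_m - EARTH_EQUATORIAL_RADIUS_M
print(f"Geostationary radius:   {r_geo_m / 1000:,.0f} km from Earth's center")
print(f"Geostationary altitude: {altitude_m / 1000:,.0f} km "
      f"({altitude_m / METERS_PER_MILE:,.0f} miles) above the equator")
```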

In 1959, soon after Sputnik, Russian engineer Yuri N. Artsutanov proposed a way around the weight problem: instead of building a space elevator from the ground up, start at the top. More specifically, he suggested placing a satellite in geostationary orbit and dropping a tether from it down to Earth’s equator. As the tether descended, the satellite would ascend, keeping the system’s center of mass in geostationary orbit. Once attached to Earth’s surface, the tether would be kept taut, thanks to a combination of gravitational and centrifugal forces.

We could then send electrically powered “climber” vehicles up and down the tether to deliver payloads to any Earth orbit. According to physicist Bradley Edwards, who researched the concept for NASA about 20 years ago, it’d cost $10 billion and take 15 years to build a space elevator, but once operational, the cost of sending a payload to any Earth orbit could be as low as $100 per pound.
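To put Edwards’ estimate in perspective, here is a back-of-the-envelope sketch using only the article’s own figures: $10 billion to build, $100 per pound on the elevator, and SpaceX’s $1,300 per pound. It is a deliberate simplification, assuming no operating, maintenance, or financing costs, and it mixes a roughly 20-year-old estimate with today’s prices without adjusting for inflation.

```python
def breakeven_payload_lb(build_cost: float,
                         old_price_per_lb: float,
                         new_price_per_lb: float) -> float:
    """Pounds of payload at which per-pound savings repay the construction cost.

    Simplification: ignores operating costs, maintenance, and financing.
    """
    savings_per_lb = old_price_per_lb - new_price_per_lb
    if savings_per_lb <= 0:
        raise ValueError("the new price must be lower than the old one")
    return build_cost / savings_per_lb


if __name__ == "__main__":
    # $10 billion to build; $1,300/lb by reusable rocket vs. $100/lb by elevator.
    lb = breakeven_payload_lb(10e9, 1_300, 100)
    print(f"{lb:,.0f} pounds")
```

Under those assumptions, the elevator would pay for itself after a little over 8 million pounds of cargo.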

“Once you reduce the cost to almost a FedEx kind of level, it opens the doors to lots of people, lots of countries, and lots of companies to get involved in space,” Edwards told Space.com in 2005.

In addition to the economic advantages, a space elevator would also be cleaner than using rockets — there’d be no burning of fuel, no harmful greenhouse emissions — and the new transport system wouldn’t contribute to the problem of space junk to the same degree that expendable rockets do.

So, why don’t we have one yet?

Tether troubles

Edwards wrote in his report for NASA that all of the technology needed to build a space elevator already existed except a material for the tether, which would need to be light yet strong enough to withstand the enormous forces acting on it.

The good news, according to the report, was that the perfect material — ultra-strong, ultra-tiny “nanotubes” of carbon — would be available in just two years.

“[S]teel is not strong enough, neither is Kevlar, carbon fiber, spider silk, or any other material other than carbon nanotubes,” wrote Edwards. “Fortunately for us, carbon nanotube research is extremely hot right now, and it is progressing quickly to commercial production.”

Unfortunately, he misjudged how hard it would be to synthesize carbon nanotubes: to date, no one has been able to grow one longer than 21 inches (53 cm).

Further research also revealed that the material tends to fray under extreme stress, meaning that even if we could manufacture carbon nanotubes at the lengths needed, they’d be at risk of snapping, which would not only destroy the space elevator but also threaten lives on Earth.
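Why “light but strong” matters can be illustrated with a classic yardstick: the length of a uniform cable that would snap under its own weight. The sketch below assumes rough, textbook-style property values (not figures from Edwards’ report), treats gravity as a constant 1 g, and ignores the taper and the weakening of gravity with altitude that a real tether design would exploit. The nanotube value reflects lab measurements on individual tubes, not a manufactured fiber, so the numbers only show the ordering behind Edwards’ claim, not a design requirement.

```python
# Rough, illustrative material properties (assumed values, not from the article):
# (tensile strength in pascals, density in kg/m^3).
MATERIALS = {
    "high-strength steel": (2.0e9, 7850.0),
    "Kevlar": (3.6e9, 1440.0),
    "carbon nanotube (lab-scale)": (63e9, 1300.0),
}

G0 = 9.80665  # standard surface gravity, m/s^2


def breaking_length_km(strength_pa: float, density_kg_m3: float) -> float:
    """Length of a uniform cable, hanging in constant 1 g, that snaps under its own weight.

    A cable of cross-section A and length L weighs density * A * L * g0.
    It breaks when that weight exceeds strength * A, so L = strength / (density * g0).
    """
    return strength_pa / (density_kg_m3 * G0) / 1000.0


if __name__ == "__main__":
    for name, (strength, density) in MATERIALS.items():
        print(f"{name:30s} {breaking_length_km(strength, density):>8,.0f} km")
```

With these assumed values, steel gives out after a few dozen kilometers and Kevlar after a few hundred, while lab-scale nanotubes reach thousands, which is why they looked like the only candidate for a tether stretching tens of thousands of kilometers.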

Looking ahead

Carbon nanotubes might have been the early frontrunner as the tether material for space elevators, but there are other options, including graphene, an essentially two-dimensional form of carbon that is already easier to scale up than nanotubes (though still not easy).

Contrary to Edwards’ report, Johns Hopkins University researchers Sean Sun and Dan Popescu say Kevlar fibers could work — we would just need to constantly repair the tether, the same way the human body constantly repairs its tendons.

“Using sensors and artificially intelligent software, it would be possible to model the whole tether mathematically so as to predict when, where, and how the fibers would break,” the researchers wrote in Aeon in 2018.

“When they did, speedy robotic climbers patrolling up and down the tether would replace them, adjusting the rate of maintenance and repair as needed — mimicking the sensitivity of biological processes,” they continued.

Astronomers from the University of Cambridge and Columbia University also think Kevlar could work for a space elevator, if we built it from the moon rather than from Earth.

They call their concept the Spaceline. The idea is that a tether anchored to the moon’s surface could extend toward Earth’s geostationary orbit, held taut by the pull of our planet’s gravity. We could then use rockets to deliver payloads, and potentially people, to solar-powered climber robots positioned at the end of this tether, which would be more than 200,000 miles long. The bots could then travel up the line to the moon’s surface.

This wouldn’t eliminate the need for rockets to get into Earth’s orbit, but it would be a cheaper way to get to the moon. The forces acting on a lunar space elevator wouldn’t be as strong as one extending from Earth’s surface, either, according to the researchers, opening up more options for tether materials.

“[T]he necessary strength of the material is much lower than an Earth-based elevator — and thus it could be built from fibers that are already mass-produced … and relatively affordable,” they wrote in a paper shared on the preprint server arXiv.
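The “200,000+ mile” length of the Spaceline follows from simple geometry. This sketch assumes the line runs straight along the Earth-moon axis from the lunar surface down to geostationary altitude, and uses mean values for the Earth-moon distance, the moon’s radius, and the geostationary orbit radius (assumed constants, not taken from the researchers’ paper, whose design may end elsewhere). The moon’s distance varies over its orbit, so this is only a mean figure.

```python
# Mean values assumed for illustration (km), not from the Spaceline paper:
EARTH_MOON_DISTANCE_KM = 384_400.0  # mean center-to-center distance
MOON_RADIUS_KM = 1_737.4            # mean lunar radius
GEO_RADIUS_KM = 42_164.0            # geostationary orbit radius, from Earth's center
KM_PER_MILE = 1.609344


def spaceline_length_km(earth_moon_km: float = EARTH_MOON_DISTANCE_KM,
                        moon_radius_km: float = MOON_RADIUS_KM,
                        end_radius_km: float = GEO_RADIUS_KM) -> float:
    """Straight-line length from the lunar surface to a given distance from Earth's center."""
    return earth_moon_km - moon_radius_km - end_radius_km


if __name__ == "__main__":
    km = spaceline_length_km()
    print(f"{km:,.0f} km ({km / KM_PER_MILE:,.0f} miles)")
```

Under these assumptions the line comes out at roughly 340,000 km, a bit over 211,000 miles, consistent with the “200,000+ mile” description.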

Some Chinese researchers, meanwhile, aren’t giving up on the idea of using carbon nanotubes for a space elevator — in 2018, a team from Tsinghua University revealed that they’d developed nanotubes that they say are strong enough for a tether.

The researchers are still working on the issue of scaling up production, but in 2021, state-owned news outlet Xinhua released a video depicting an in-development concept, called “Sky Ladder,” that would consist of space elevators above Earth and the moon.

After riding up the Earth-based space elevator, a capsule would fly to a space station attached to the tether of the moon-based one. If the project could be pulled off — a huge if — China predicts Sky Ladder could cut the cost of sending people and goods to the moon by 96 percent.

The bottom line

In the more than 125 years since Tsiolkovsky looked at the Eiffel Tower and thought way bigger, tremendous progress has been made developing materials with the properties needed for a space elevator. At this point, it seems likely we could one day have a material that can be manufactured at the scale needed for a tether, but by the time that happens, the need for a space elevator may have evaporated.

Several aerospace companies are making progress with their own reusable rockets, and as those join the market with SpaceX, competition could cause launch prices to fall further.

California startup SpinLaunch, meanwhile, is developing a massive centrifuge to fling payloads into space, where much smaller rockets can propel them into orbit. If the company succeeds (another one of those big ifs), it says the system would slash the amount of fuel needed to reach orbit by 70 percent.

Even if SpinLaunch doesn’t get off the ground, several groups are developing environmentally friendly rocket fuels that produce far fewer (or no) harmful emissions. More work is needed to efficiently scale up their production, but overcoming that hurdle will likely be far easier than building a 22,000-mile (35,400-km) elevator to space.

This article originally appeared on Big Think, home of the brightest minds and biggest ideas of all time.