As More People Crowdfund Medical Bills, Beware of Dubious Campaigns

Individuals seeking funding for experimental therapies may enroll in legitimate clinical trials, or they may fall prey to snake oil.

Nearly a decade ago, Jamie Anderson hit his highest weight ever: 618 pounds. Depression drove him to eat and eat. He tried all kinds of diets, losing and regaining weight again and again. Then, four years ago, a friend nudged him to join a gym, and with a trainer's guidance, he embarked on a life-altering path.

"The big catalyst for all of this is, I was diagnosed as a diabetic," says Anderson, a 46-year-old sales associate in the auto care department at Walmart. Within three years, he was down to 276 pounds but left with excess skin, which sagged from his belly to his mid-thighs.

Plastic surgery would cost $4,000 more than the sum his health insurance approved. That's when Anderson, who lives in Cabot, Arkansas, a suburb outside of Little Rock, turned to online crowdfunding to raise money. In a few months last year, current and former co-workers and friends of friends came up with that amount, covering the remaining expenses for the tummy tuck and overnight hospital stay.

The crowdfunding site that he used, CoFund Health, aimed to give his donors some peace of mind about where their money was going. Unlike GoFundMe and other platforms that don't restrict how donations are spent, Anderson's funds were loaded on a debit card that only worked at health care providers, so the donors "were assured that it was for medical bills only," he says.

CoFund Health was started in January 2019 in response to concerns about the legitimacy of many medical crowdfunding campaigns. As crowdfunding for health-related expenses has gained more traction on social media sites, with countless campaigns seeking to subsidize the high costs of care, it has given rise to some questionable transactions and legitimate ethical concerns.

Common examples of alleged fraud have involved misusing the donations for nonmedical purposes, feigning or embellishing the story of one's own unfortunate plight or that of another person, or impersonating someone else with an illness. Ethicists become particularly alarmed when medical crowdfunding appeals are for scientifically unfounded and potentially harmful interventions.

About 20 percent of American adults reported giving to a crowdfunding campaign for medical bills or treatments, according to a survey by AmeriSpeak Spotlight on Health from NORC, formerly called the National Opinion Research Center, a non-partisan research institution at the University of Chicago. The self-funded poll, conducted in November 2019, included 1,020 interviews with a representative sample of U.S. households. Researchers cited a 2019 City University of New York-Harvard study, which noted that medical bills are the most common basis for declaring personal bankruptcy.

Some experts contend that crowdfunding platforms should serve as gatekeepers in prohibiting campaigns for unproven treatments. Facing a dire diagnosis, individuals may go out on a limb to try anything and everything to prolong and improve the quality of their lives.

They may enroll in well-designed clinical trials, or they could fall prey "to snake oil being sold by people out there just making a buck," says Jeremy Snyder, a health sciences professor at Simon Fraser University in British Columbia, Canada, and the lead author of a December 2019 article in the Hastings Center Report about crowdfunding for dubious treatments.

For instance, crowdfunding campaigns have sought donations for homeopathic healing for cancer, unapproved stem cell therapy for central nervous system injury, and extended antibiotic use for chronic Lyme disease, according to an October 2018 report in the Journal of the American Medical Association.

Ford Vox, the lead author and an Atlanta-based physician specializing in brain injury, maintains that a repository should exist to monitor the outcomes of experimental treatments. "At the very least, there ought to be some tracking of what happens to the people the funds are being raised for," he says. "It would be great for an independent organization to do so."

The Federal Trade Commission, the national consumer watchdog, cautions online that "it might be impossible for you to know if the cause is real and if the money actually gets to the intended recipient." Another caveat: Donors can't deduct contributions to individuals on tax returns.

"Even if it appears like a good cause, consumers should still do some research before donating to a crowdfunding campaign," says Malini Mithal, associate director of financial practices at the FTC. "Don't assume all medical treatments are tested and safe."

Before making any donation, it would be wise to check whether a crowdfunding site offers some sort of guarantee if a campaign ends up being fraudulent, says Kristin Judge, chief executive and founder of the Cybercrime Support Network, a Michigan-based nonprofit that serves victims before, during, and after an incident. Donors should also find out how the campaign organizer is related to the intended recipient and note whether any direct family members and friends have given funds and left supportive comments.

Donating to vetted charities offers more assurance than crowdfunding that the money will be channeled toward helping someone in need, says Daniel Billingsley, vice president of external affairs for the Oklahoma Center of Nonprofits. "Otherwise, you could be putting money into all sorts of scams." There is "zero accountability," he says, for the crowdfunding site or the recipient to provide proof that the dollars were indeed funneled into health-related expenses.

Even if donors may have limited recourse against scammers, the "platforms have an ethical obligation to protect the people using their site from fraud," says Bryanna Moore, a postdoctoral fellow at Baylor College of Medicine's Center for Medical Ethics and Health Policy. "It's easy to take advantage of people who want to be charitable."

There are "different layers of deception" on a broad spectrum of fraud, ranging from "outright lying for a self-serving reason" to publicizing an imaginary illness to collect money genuinely needed for basic living expenses. With medical campaigns being a top category among crowdfunding appeals, it's "a lot of money that's exchanging hands," Moore says.

The advent of crowdfunding "reveals and, in some ways, reinforces a health care system that is totally broken," says Jessica Pierce, a faculty affiliate in the Center for Bioethics and Humanities at the University of Colorado Anschutz Medical Campus in Denver. "The fact that people have to scrounge for money to get life-saving treatment is unethical."

Crowdfunding also highlights socioeconomic and racial disparities by giving an unfair advantage to those who are social-media savvy and capable of crafting a compelling narrative that attracts donors. Privacy issues enter into the picture as well, because telling that narrative entails revealing personal details, Pierce says, particularly when it comes to children, "who may not be able to consent at a really informed level."

CoFund Health, the crowdfunding site on which Anderson raised the money for his plastic surgery, offers to help people write their campaigns and copyedit them for proper language, says Matthew Martin, co-founder and chief executive officer. Like other crowdfunding sites, it retains a few percent of the donations for each campaign. Martin is the husband of Anderson's acquaintance from high school.

So far, the site, which is based in Raleigh, North Carolina, has hosted about 600 crowdfunding campaigns, some completed and some still in progress. Campaigns have raised as little as $300 to cover immediate dental expenses and as much as $12,000 for cancer treatments, Martin says, but most have set a goal between $5,000 and $10,000.

The services could be cosmetic—for example, a breast enhancement or reduction, laser procedures for the eyes or skin, and chiropractic care. A number of campaigns have sought funding for transgender surgeries, which many insurers consider optional, he says.

In July 2019, a second site was hatched out of pet owners' requests for assistance with their dogs' and cats' medical expenses. Money raised on CoFund My Pet can only be used at veterinary clinics. Martin says the debit card would be declined at other merchants, just as its CoFund Health counterpart for humans will be rejected at places other than health care facilities, dental and vision providers, and pharmacies.

Whether or not someone's campaign is based on fact or fiction remains for prospective donors to decide. If a donor were to regret a transaction, the site would reach out to the campaign's owner but ultimately couldn't force a refund, Martin explains, because "it's hard to chase down fraud without having access to people's health records."

In some crowdfunding campaigns, the individual needs some or all of the donated resources to pay for travel and lodging at faraway destinations to receive care, says Snyder, the health sciences professor and crowdfunding report author. He suggests people only give to recipients they know personally.

"That may change the calculus a little bit," tipping the decision in favor of donating, he says. As long as the treatment isn't harmful, the funds are a small gesture of support. "There's some value in that for preserving hope or just showing them that you care."

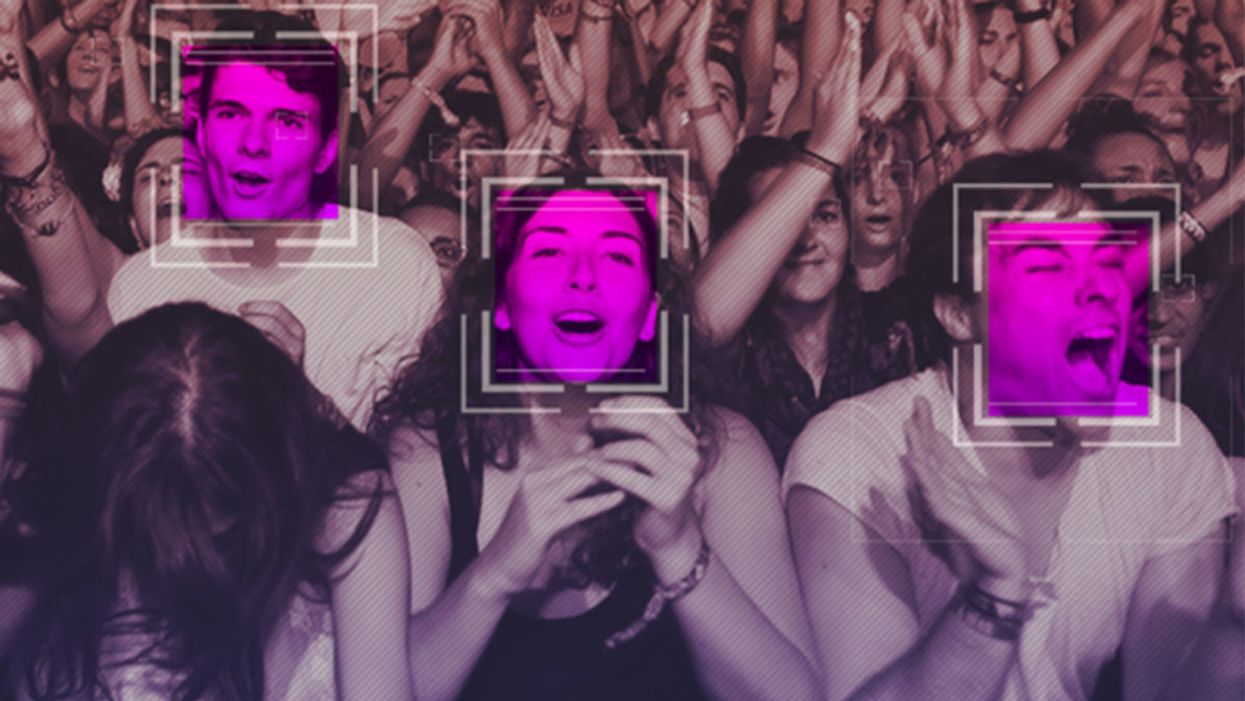

The Case for an Outright Ban on Facial Recognition Technology

Some experts worry that facial recognition technology is a dangerous enough threat to our basic rights that it should be entirely banned from police and government use.

[Editor's Note: This essay is in response to our current Big Question, which we posed to experts with different perspectives: "Do you think the use of facial recognition technology by the police or government should be banned? If so, why? If not, what limits, if any, should be placed on its use?"]

In a surprise appearance at the tail end of Amazon's much-hyped annual product event last month, CEO Jeff Bezos casually told reporters that his company is writing its own facial recognition legislation.

It seems that when you're the wealthiest human alive, there's nothing strange about your company, the largest in the world profiting from the spread of face surveillance technology, writing the rules that govern it.

But if lawmakers and advocates fall into Silicon Valley's trap of "regulating" facial recognition and other forms of invasive biometric surveillance, that's exactly what will happen.

Industry-friendly regulations won't fix the dangers inherent in widespread use of face scanning software, whether it's deployed by governments or for commercial purposes. The use of this technology in public places and for surveillance purposes should be banned outright, and its use by private companies and individuals should be severely restricted. As artificial intelligence expert Luke Stark wrote, it's dangerous enough that it should be outlawed for "almost all practical purposes."

Like biological or nuclear weapons, facial recognition poses such a profound threat to the future of humanity and our basic rights that any potential benefits are far outweighed by the inevitable harms.

We live in cities and towns with an exponentially growing number of always-on cameras, installed in everything from cars to children's toys to Amazon's police-friendly doorbells. The use of computer algorithms to analyze massive databases of footage and photographs could render human privacy extinct. It's a world where nearly everything we do, everywhere we go, everyone we associate with, and everything we buy — or look at and even think of buying — is recorded and can be tracked and analyzed at a mass scale for unimaginably awful purposes.

Biometric tracking enables the automated and pervasive monitoring of an entire population. There's ample evidence that this type of dragnet mass data collection and analysis is not useful for public safety, but it's perfect for oppression and social control.

Law enforcement defenders of facial recognition often state that the technology simply lets them do what they would be doing anyway: compare footage or photos against mug shots, driver's licenses, or other databases, but faster. And they're not wrong. But the speed and automation enabled by artificial intelligence-powered surveillance fundamentally change the impact of that surveillance on our society. Being able to do something exponentially faster, with significantly fewer human and financial resources, alters the nature of that thing. The Fourth Amendment becomes meaningless in a world where private companies record everything we do and provide governments with easy tools to request and analyze footage from a growing, privately owned panopticon.

Tech giants like Microsoft and Amazon insist that facial recognition will be a lucrative boon for humanity, as long as there are proper safeguards in place. This disingenuous call for regulation is straight out of the same lobbying playbook that telecom companies have used to attack net neutrality and Silicon Valley has used to scuttle meaningful data privacy legislation. Companies are calling for regulation because they want their corporate lawyers and lobbyists to help write the rules of the road, to ensure those rules are friendly to their business models. They're trying to skip the debate about what role, if any, technology this uniquely dangerous should play in a free and open society. They want to rush ahead to the discussion about how we roll it out.

Facial recognition is spreading very quickly. But backlash is growing too. Several cities have already banned government entities, including police and schools, from using biometric surveillance. Others have local ordinances in the works, and there's state legislation brewing in Michigan, Massachusetts, Utah, and California. Meanwhile, there is growing bipartisan agreement in the U.S. Congress to rein in government use of facial recognition. We've also seen significant backlash to facial recognition growing in the U.K., within the European Parliament, and in Sweden, which recently banned its use in schools following a fine under the General Data Protection Regulation (GDPR).

At least two frontrunners in the 2020 presidential campaign have backed a ban on law enforcement use of facial recognition. Many of the largest music festivals in the world responded to Fight for the Future's campaign and committed to not use facial recognition technology on music fans.

There has been widespread reporting on the fact that existing facial recognition algorithms exhibit systemic racial and gender bias, and are more likely to misidentify people with darker skin, or who are not perceived by a computer to be a white man. Critics are right to highlight this algorithmic bias. Facial recognition is being used by law enforcement in cities like Detroit right now, and the racial bias baked into that software is doing harm. It's exacerbating existing forms of racial profiling and discrimination in everything from public housing to the criminal justice system.

But the companies that make facial recognition assure us this bias is a bug, not a feature, and that they can fix it. And they might be right. Face scanning algorithms for many purposes will improve over time. But facial recognition becoming more accurate doesn't make it less of a threat to human rights. This technology is dangerous when it's broken, but at a mass scale, it's even more dangerous when it works. And it will still disproportionately harm our society's most vulnerable members.

We need spaces that are free from government and societal intrusion in order to advance as a civilization. If technology makes it so that laws can be enforced 100 percent of the time, there is no room to test whether those laws are just. If the U.S. government had ubiquitous facial recognition surveillance 50 years ago when homosexuality was still criminalized, would the LGBTQ rights movement ever have formed? In a world where private spaces don't exist, would people have felt safe enough to leave the closet and gather, build community, and form a movement? Freedom from surveillance is necessary for deviation from social norms as well as to dissent from authority, without which societal progress halts.

Persistent monitoring and policing of our behavior breeds conformity, benefits tyrants, and enriches elites. Drawing a line in the sand around tech-enhanced surveillance is the fundamental fight of this generation. Lining up to get our faces scanned to participate in society doesn't just threaten our privacy, it threatens our humanity, and our ability to be ourselves.

[Editor's Note: Read the opposite perspective here.]

Scientists Are Building an “AccuWeather” for Germs to Predict Your Risk of Getting the Flu

A future app may help you avoid getting the flu by informing you of your local risk on a given day.

Applied mathematician Sara del Valle works at the U.S.'s foremost nuclear weapons lab: Los Alamos. Once colloquially called Atomic City, it's a hidden place 45 minutes into the mountains northwest of Santa Fe. Here, engineers developed the first atomic bomb.

Today, Los Alamos is still a small science town, though no longer a secret, nor in the business of building new bombs. Instead, it's tasked with, among other things, keeping the stockpile of nuclear weapons safe and stable: not exploding when they're not supposed to (yes, please) and exploding if someone presses that red button (please, no).

Del Valle, though, doesn't work on any of that. Los Alamos is also interested in other kinds of booms—like the explosion of a contagious disease that could take down a city. Predicting (and, ideally, preventing) such epidemics is del Valle's passion. She hopes to develop an app that's like AccuWeather for germs: It would tell you your chance of getting the flu, or dengue or Zika, in your city on a given day. And like AccuWeather, it could help people alter their behavior to live better lives, whether that means staying home on a snowy morning or washing their hands on a sickness-heavy commute.

Sara del Valle of Los Alamos is working to predict and prevent epidemics using data and machine learning.

Since the beginning of del Valle's career, she's been driven by one thing: using data and predictions to help people behave practically around pathogens. As a kid, she'd always been good at math, but when she found out she could use it to capture the tentacular spread of disease, and not just manipulate abstractions, she was hooked.

When she made her way to Los Alamos, she started looking at what people were doing during outbreaks. Using data from Twitter, Google searches, and Wikipedia, the team started to sift for trends. Were people talking about hygiene, like hand-washing? Or about being sick? Were they Googling information about mosquitoes? Searching Wikipedia for symptoms? And how did those things correlate with the spread of disease?

It was a new, faster way to think about how pathogens propagate in the real world. Usually, there's a 10- to 14-day lag in the U.S. between when doctors tap numbers into spreadsheets and when that information becomes public. By then, the world has moved on, and so has the disease—to other villages, other victims.

"We found there was a correlation between actual flu incidents in a community and the number of searches online and the number of tweets online," says del Valle. That was when she first let herself dream about a real-time forecast, not a 10-days-later backcast. Del Valle's group—computer scientists, mathematicians, statisticians, economists, public health professionals, epidemiologists, satellite analysis experts—has continued to work on the problem ever since their first Twitter parsing, in 2011.

They've had their share of outbreaks to track. Looking back at the 2009 swine flu pandemic, they saw people buying face masks and paying attention to the cleanliness of their hands. "People were talking about whether or not they needed to cancel their vacation," she says, and also whether pork products—which have nothing to do with swine flu—were safe to buy.

They watched internet conversations during the measles outbreak in California. "There's a lot of online discussion about anti-vax sentiment, and people trying to convince people to vaccinate children and vice versa," she says.

Today, they work on predicting the spread of Zika, Chikungunya, and dengue fever, as well as the plain old flu. And according to the CDC, that latter effort is going well.

Since 2015, the CDC has run the Epidemic Prediction Initiative, a competition in which teams like del Valle's submit weekly predictions of how intense the flu will be in particular locations, along with occasional forecasts for other ailments. Michael Johansson is co-founder and leader of the program, which began with the Dengue Forecasting Project. Its goal, he says, was to predict when dengue cases would blow up in areas that previously had only a low-level baseline of sick people. "You'll get this massive epidemic where all of a sudden, instead of 3,000 to 4,000 cases, you have 20,000 cases," he says. "They kind of come out of nowhere."

But the "kind of" is key: The outbreaks surely come out of somewhere and, if scientists applied research and data the right way, they could forecast the upswing and perhaps dodge a bomb before it hit big-time. Questions about how big, when, and where are also key to the flu.

A big part of these projects is the CDC giving the right researchers access to the right information, and the structure to both forecast useful public-health outcomes and to compare how well the models are doing. The extra information has been great for the Los Alamos effort. "We don't have to call departments and beg for data," says del Valle.

When data isn't available, "proxies"—things like symptom searches, tweets about empty offices, satellite images showing a green, wet, mosquito-friendly landscape—are helpful: You don't have to rely on anyone's health department.

At the latest meeting with all the prediction groups, del Valle's flu models took first and second place. But del Valle wants more than weekly numbers on a government website; she wants that weather-app-inspired fortune-teller, incorporating the many diseases you could get today, standing right where you are. "That's our dream," she says.

This plot shows the correlations between an online data stream, from Wikipedia, and various infectious diseases in different countries. The results of del Valle's predictive models are shown in brown, while the actual number of cases or illness rates are shown in blue.

(Courtesy del Valle)

The goal isn't to turn you into a germophobic agoraphobe. It's to make you more aware when you do go out. "If you know it's going to rain today, you're more likely to bring an umbrella," del Valle says. "When you go on vacation, you always look at the weather and make sure you bring the appropriate clothing. If you do the same thing for diseases, you think, 'There's Zika spreading in Sao Paulo, so maybe I should bring even more mosquito repellent and bring more long sleeves and pants.'"

They're not there yet (don't hold your breath, but do stop touching your mouth). She estimates it's at least a decade away, but advances in machine learning could accelerate that hypothetical timeline. "We're doing baby steps," says del Valle, starting with the flu in the U.S., dengue in Brazil, and other efforts in Colombia, Ecuador, and Canada. "Going from there to forecasting all diseases around the globe is a long way," she says.

But even AccuWeather started small: One man began predicting weather for a utility company, then helping ski resorts optimize their snowmaking. His influence snowballed, and now private forecasting apps, including AccuWeather's, populate phones across the planet. The company's progression hasn't been without controversy—privacy incursions, inaccuracy of long-term forecasts, fights with the government—but it has continued, for better and for worse.

Disease apps, perhaps spun out of a small, unlikely team at a nuclear-weapons lab, could grow and breed in a similar way. And both the controversies and public-health benefits that may someday spin out of them lie in the future, impossible to predict with certainty.