Meet the Scientists on the Frontlines of Protecting Humanity from a Man-Made Pathogen

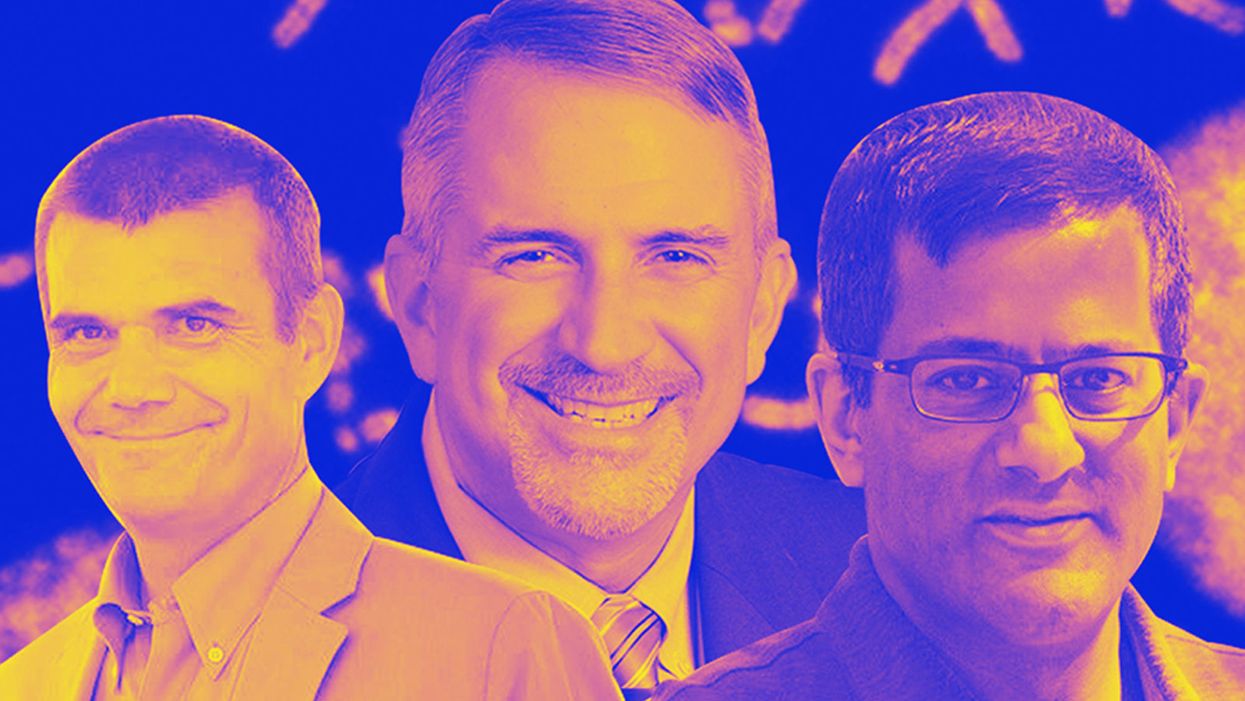

From left: Jean Peccoud, Randall Murch, and Neeraj Rao.

Jean Peccoud wasn't expecting an email from the FBI. He definitely wasn't expecting the agency to invite him to a meeting. "My reaction was, 'What did I do wrong to be on the FBI watch list?'" he recalls.

He didn't know what the feds could possibly want from him. "I was mostly scared at this point," he says. "I was deeply disturbed by the whole thing."

But he decided to go anyway, and when he traveled to San Francisco for the 2008 gathering, the reason for the e-vite became clear: The FBI was reaching out to researchers like him, scientists interested in synthetic biology, in anticipation of the potential nefarious uses of this technology. "The whole purpose of the meeting was, 'Let's start talking to each other before we actually need to talk to each other,'" says Peccoud, now a professor of chemical and biological engineering at Colorado State University. "'And let's make sure next time you get an email from the FBI, you don't freak out.'"

Synthetic biology—which Peccoud defines as "the application of engineering methods to biological systems"—holds great power, and with that (as always) comes great responsibility. When you can synthesize genetic material in a lab, you can create new ways of diagnosing and treating people, and even new food ingredients. But you can also "print" the genetic sequence of a virus or virulent bacterium.

And while it's not easy, it's also not as hard as it could be, in part because dangerous sequences have publicly available blueprints. You can use those blueprints for white-hat research (which is, indeed, why the open blueprints exist), or you can use them for a black-hat attack. You could synthesize a dangerous pathogen's code on purpose, or you could unwittingly do so because someone tampered with your digital instructions. Ordering synthetic genes for viral sequences, says Peccoud, would likely be more difficult today than it was a decade ago.

"There is more awareness of the industry, and they are taking this more seriously," he says. "There is no specific regulation, though."

Trying to lock down the interconnected machines that enable synthetic biology, secure its lab processes, and keep dangerous pathogens out of the hands of bad actors is part of a relatively new field: cyberbiosecurity, whose name Peccoud and colleagues introduced in a 2018 paper.

Biological threats feel especially acute right now, during the ongoing pandemic. COVID-19 is a natural pathogen -- not one engineered in a lab. But future outbreaks could start from a bug nature didn't build, if the wrong people get ahold of the right genetic sequences, and put them in the right sequence. Securing the equipment and processes that make synthetic biology possible -- so that doesn't happen -- is part of why the field of cyberbiosecurity was born.

The Origin Story

It is perhaps no coincidence that the FBI pinged Peccoud when it did: soon after a journalist ordered a sequence of smallpox DNA and wrote, for The Guardian, about how easy it was. "That was not good press for anybody," says Peccoud. Previously, in 2002, the Pentagon had funded SUNY Stony Brook researchers to try something similar: They ordered bits of polio DNA piecemeal and, over the course of three years, strung them together.

Although many years have passed since those early gotchas, the current patchwork of regulations still wouldn't necessarily prevent someone from pulling similar tricks now, and the technological systems that synthetic biology runs on are more intertwined — and so perhaps more hackable — than ever. Researchers like Peccoud are working to bring awareness to those potential problems, to promote accountability, and to provide early-detection tools that would catch the whiff of a rotten act before it became one.

Peccoud notes that if someone wants to get access to a specific pathogen, it is probably easier to collect it from the environment or take it from a biodefense lab than to whip it up synthetically. "However, people could use genetic databases to design a system that combines different genes in a way that would make them dangerous together without each of the components being dangerous on its own," he says. "This would be much more difficult to detect."

After his meeting with the FBI, Peccoud grew more interested in these sorts of security questions. So he was paying attention when, in 2010, the Department of Health and Human Services — now helping manage the response to COVID-19 — created guidance for how to screen synthetic biology orders, to make sure suppliers didn't accidentally send bad actors the sequences that make up bad genomes.

Guidance is nice, Peccoud thought, but it's just words. He wanted to turn those words into action: into a computer program. "I didn't know if it was something you can run on a desktop or if you need a supercomputer to run it," he says. So, one summer, he tasked a team of student researchers with poring over the sentences and turning them into scripts. "I let the FBI know," he says, having both learned his lesson and wanting to get in on the game.

Peccoud later joined forces with Randall Murch, a former FBI agent and current Virginia Tech professor, and a team of colleagues from both Virginia Tech and the University of Nebraska-Lincoln, on a prototype project for the Department of Defense. They went into a lab at the University of Nebraska-Lincoln and assessed all its cyberbio-vulnerabilities. The lab develops and produces prototype vaccines, therapeutics, and prophylactic components: exactly the kind of place that you always, and especially right now, want to keep secure.

The team found dozens of Achilles' heels, and put them in a private report. Not long after that project, the two and their colleagues wrote the paper that first used the term "cyberbiosecurity." A second paper, led by Murch, came out five months later and provided a proposed definition and more comprehensive perspective on cyberbiosecurity. But although it's now a buzzword, it's the definition, not the jargon, that matters. "Frankly, I don't really care if they call it cyberbiosecurity," says Murch. Call it what you want: Just pay attention to its tenets.

A Database of Scary Sequences

Peccoud and Murch, of course, aren't the only ones working to screen sequences and secure devices. At the nonprofit Battelle Memorial Institute in Columbus, Ohio, for instance, scientists are working on solutions that balance the openness inherent to science and the closure that can stop bad stuff. "There's a challenge there that you want to enable research but you want to make sure that what people are ordering is safe," says the organization's Neeraj Rao.

Rao can't talk about the work Battelle does for the spy agency IARPA, the Intelligence Advanced Research Projects Activity, on a project called Fun GCAT, which aims to use computational tools to deep-screen gene-sequence orders to see if they pose a threat. He can, though, talk about a twin-type internal project: ThreatSEQ (pronounced, of course, "threat seek").

The project started when "a government customer" (as usual, no one will say which) asked Battelle to curate a list of dangerous toxins and pathogens, and their genetic sequences. The researchers even started tagging sequences according to their function — like whether a particular sequence is involved in a germ's virulence or toxicity. That helps if someone is trying to use synthetic biology not to gin up a yawn-inducing old bug but to engineer a totally new one. "How do you essentially predict what the function of a novel sequence is?" says Rao. You look at what other, similar bits of code do.

"We were creating [a] wiki of all these nasty things," says Rao. As they were working, they realized that DNA manufacturers could potentially scan in sequences that people ordered, run them against the database, and see if anything scary matched up. Kind of like that plagiarism software your college professors used.
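The plagiarism-checker analogy can be made concrete with a minimal sketch. This is not how ThreatSEQ works (the article does not describe its internals); it is a toy k-mer overlap check under stated assumptions. The function names `kmers` and `screen_order`, the window size, the threshold, and the two database entries are all hypothetical, and the sequences are made-up strings, not real organism data.

```python
from typing import Dict, List, Set


def kmers(seq: str, k: int) -> Set[str]:
    """Return the set of all length-k substrings of a sequence."""
    seq = seq.upper()
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def screen_order(order: str, database: Dict[str, str],
                 k: int = 8, threshold: float = 0.5) -> List[str]:
    """Flag database entries whose k-mers largely appear in the order.

    For each entry, compute the fraction of its k-mers that also occur
    in the ordered sequence; flag the entry if that fraction reaches
    the (assumed) threshold.
    """
    order_kmers = kmers(order, k)
    flagged = []
    for name, reference in database.items():
        ref_kmers = kmers(reference, k)
        if not ref_kmers:
            continue  # reference shorter than k: nothing to compare
        shared = len(order_kmers & ref_kmers) / len(ref_kmers)
        if shared >= threshold:
            flagged.append(name)
    return flagged


# Hypothetical placeholder entries; these are arbitrary strings.
TOY_DATABASE = {
    "toy_entry_A": "ATGGCGTACGTTAGCCGATAGGCTA",
    "toy_entry_B": "TTGACCGGTAACCGTTAGGCATCGA",
}

# An order that embeds toy_entry_A inside other bases gets flagged.
order = "CCCC" + TOY_DATABASE["toy_entry_A"] + "GGGG"
print(screen_order(order, TOY_DATABASE))  # ['toy_entry_A']
```

As in the article, a flag here is only a report: the decision about whether to fulfill the order stays with a human at the manufacturer. Exact substring matching like this would also miss sequences that are merely similar, which is one reason function-based tagging matters.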

Battelle began offering its screening capability as ThreatSEQ. Customers (currently including Twist Bioscience) submit their sequences and get a report back, and the manufacturers make the final decision about whether to fulfill a flagged order: whether, in the analogy, to give an F for plagiarism. After all, legitimate researchers do legitimately need to have DNA from legitimately bad organisms.

"Maybe it's the CDC," says Rao. "If things check out, oftentimes [the manufacturers] will fulfill the order." If it's your aggrieved uncle seeking the virulent pathogen, maybe not. But ultimately, no one is stopping the manufacturers from doing so.

Beyond that kind of tampering, though, cyberbiosecurity also includes keeping a lockdown on the machines that make the genetic sequences. "Somebody now doesn't need physical access to infrastructure to tamper with it," says Rao. So it needs the same cyber protections as other internet-connected devices.

Scientists are also now using DNA to store data — encoding information in its bases, rather than into a hard drive. To download the data, you sequence the DNA and read it back into a computer. But if you think like a bad guy, you'd realize that a bad guy could then, for instance, insert a computer virus into the genetic code, and when the researcher went to nab her data, her desktop would crash or infect the others on the network.
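To see why stored DNA can carry a payload, here is a minimal sketch of one possible encoding, assuming the simplest scheme of two bits per base. The mapping (`00` to A, `01` to C, `10` to G, `11` to T) and the names `encode` and `decode` are illustrative assumptions; real DNA storage systems use more elaborate codes with error correction.

```python
BASES = "ACGT"  # assumed mapping: 00 -> A, 01 -> C, 10 -> G, 11 -> T


def encode(data: bytes) -> str:
    """Turn each byte into four bases, two bits per base, high bits first."""
    out = []
    for byte in data:
        for shift in (6, 4, 2, 0):
            out.append(BASES[(byte >> shift) & 0b11])
    return "".join(out)


def decode(dna: str) -> bytes:
    """Reverse of encode: every four bases become one byte."""
    if len(dna) % 4 != 0:
        raise ValueError("sequence length must be a multiple of 4")
    out = bytearray()
    for i in range(0, len(dna), 4):
        byte = 0
        for base in dna[i:i + 4]:
            # str.index raises ValueError on anything other than A, C, G, T
            byte = (byte << 2) | BASES.index(base)
        out.append(byte)
    return bytes(out)


strand = encode(b"hello")
print(strand)
print(decode(strand))  # b'hello'
```

The point for security is that `decode` returns arbitrary bytes chosen by whoever wrote the strand, so any software that reads sequencer output should treat it like any other untrusted input.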

Something like that actually happened in 2017 at the USENIX Security Symposium, an annual computer security conference: Researchers from the University of Washington encoded malware into DNA, and when the gene sequencer assembled the DNA, it corrupted the sequencer's software, then the computer that controlled it.

"This vulnerability could be just the opening an adversary needs to compromise an organization's systems," Inspirion Biosciences' J. Craig Reed and Nicolas Dunaway wrote in a paper for Frontiers in Bioengineering and Biotechnology, included in an e-book that Murch edited called Mapping the Cyberbiosecurity Enterprise.

Where We Go From Here

So what to do about all this? That's hard to say, in part because we don't know how big a current problem any of it poses. As noted in Mapping the Cyberbiosecurity Enterprise, "Information about private sector infrastructure vulnerabilities or data breaches is protected from public release by the Protected Critical Infrastructure Information (PCII) Program," if the privateers share the information with the government. "Government sector vulnerabilities or data breaches," meanwhile, "are rarely shared with the public."

The regulations that could rein in problems aren't as robust as many would like them to be, and much good behavior is technically voluntary — although guidelines and best practices do exist from organizations like the International Gene Synthesis Consortium and the National Institute of Standards and Technology.

Rao thinks it would be smart if grant-giving agencies like the National Institutes of Health and the National Science Foundation required any scientists who took their money to work with manufacturing companies that screen sequences. But he also still thinks we're on our way to being ahead of the curve, in terms of preventing print-your-own bioproblems: "What I think is encouraging right now is the fact that we're even having this discussion," says Rao.

Peccoud, for his part, has worked to keep such conversations going, including by doing training for the FBI and planning a workshop for students in which they imagine and work to guard against the malicious use of their research. But actually, Peccoud believes that human error, flawed lab processes, and mislabeled samples might be bigger threats than the outside ones. "Way too often, I think that people think of security as, 'Oh, there is a bad guy going after me,' and the main thing you should be worried about is yourself and errors," he says.

Murch thinks we're only at the beginning of understanding where our weak points are, and how many times they've been bruised. Decreasing those contusions, though, won't just take more secure systems. "The answer won't be technical only," he says. It'll be social, political, policy-related, and economic — a cultural revolution all its own.

Have You Heard of the Best Sport for Brain Health?

In this week's Friday Five, research points to the healthiest sport for the brain. Plus, a natural way to reprogram cells to a younger state, the network that could underlie many different mental illnesses, and a new test that could diagnose autism in newborns. Plus, scientists 3D print an ear and attach it to a woman.

The Friday Five covers five stories in research that you may have missed this week. There are plenty of controversies and troubling ethical issues in science – and we get into many of them in our online magazine – but this news roundup focuses on scientific creativity and progress to give you a therapeutic dose of inspiration headed into the weekend.

Here are the promising studies covered in this week's Friday Five:

- Reprogram cells to a younger state

- Pick up this sport for brain health

- Do all mental illnesses have the same underlying cause?

- New test could diagnose autism in newborns

- Scientists 3D print an ear and attach it to a woman

Can blockchain help solve the Henrietta Lacks problem?

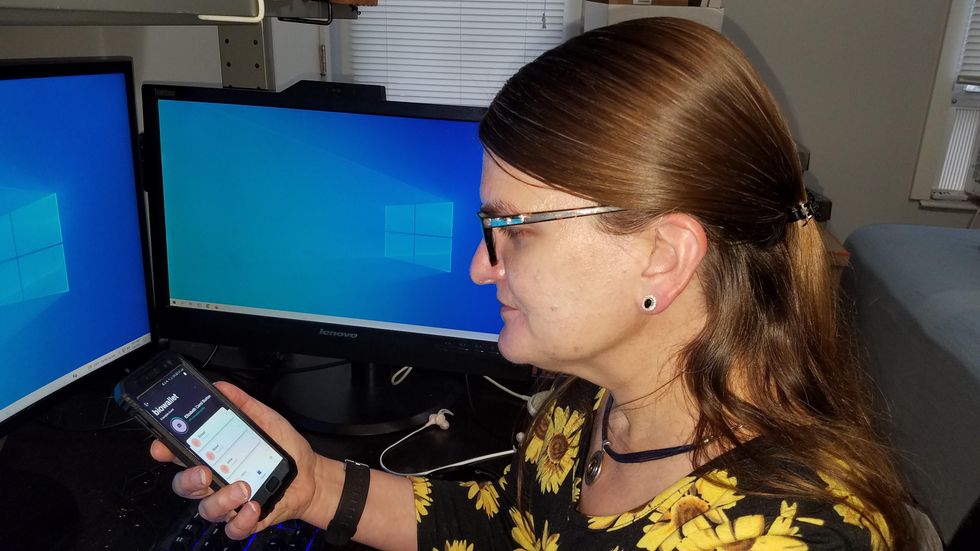

Marielle Gross, a professor at the University of Pittsburgh, shows patients a new app that tracks how their samples are used during biomedical research.

Science has come a long way since Henrietta Lacks, a Black woman from Baltimore, succumbed to cervical cancer at age 31 in 1951 -- only eight months after her diagnosis. Since then, research involving her cancer cells has advanced scientific understanding of the human papillomavirus, polio vaccines, medications for HIV/AIDS and in vitro fertilization.

Today, the World Health Organization reports that those cells are essential in mounting a COVID-19 response. But they were commercialized without the awareness or permission of Lacks or her family, who have filed a lawsuit against a biotech company for profiting from these “HeLa” cells.

While obtaining an individual's informed consent has become standard procedure before the use of tissues in medical research, many patients still don’t know what happens to their samples. Now, a new phone-based app is aiming to change that.

Tissue donors can track what scientists do with their samples while safeguarding privacy, through a pilot program initiated in October by researchers at the Johns Hopkins Berman Institute of Bioethics and the University of Pittsburgh’s Institute for Precision Medicine. The program uses blockchain technology to offer patients this opportunity through the University of Pittsburgh's Breast Disease Research Repository, while assuring that their identities remain anonymous to investigators.

A blockchain is a digital, tamper-proof ledger of transactions duplicated and distributed across a computer system network. Whenever a transaction occurs with a patient’s sample, multiple stakeholders can track it while the owner’s identity remains encrypted. Special certificates called “nonfungible tokens,” or NFTs, represent patients’ unique samples on a trusted and widely used blockchain that reinforces transparency.
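The tamper-resistance of such a ledger comes from chaining hashes: each entry records the hash of the one before it, so altering an old entry breaks every later link. The following is a minimal single-machine sketch of that idea only, not the pilot program's implementation; it has no network distribution, consensus, or NFTs, and the names `append_event`, `verify_chain`, and the pseudonymous ID `sample-001` are hypothetical.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # assumed placeholder for "no previous block"


def block_hash(block: Dict) -> str:
    """Hash the block's contents (everything except its own hash field)."""
    payload = json.dumps(
        {key: block[key] for key in ("index", "prev_hash", "event")},
        sort_keys=True,
    ).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_event(chain: List[Dict], event: Dict) -> None:
    """Add an event, linking it to the hash of the previous block."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    block = {"index": len(chain), "prev_hash": prev_hash, "event": event}
    block["hash"] = block_hash(block)
    chain.append(block)


def verify_chain(chain: List[Dict]) -> bool:
    """Recompute every hash and check that each link matches."""
    prev_hash = GENESIS_HASH
    for i, block in enumerate(chain):
        if (block["index"] != i
                or block["prev_hash"] != prev_hash
                or block["hash"] != block_hash(block)):
            return False
        prev_hash = block["hash"]
    return True


ledger: List[Dict] = []
# Events refer to a pseudonymous sample ID, never to the patient's name.
append_event(ledger, {"sample": "sample-001", "action": "collected"})
append_event(ledger, {"sample": "sample-001", "action": "sent to lab"})
print(verify_chain(ledger))  # True

ledger[0]["event"]["action"] = "discarded"  # tamper with history
print(verify_chain(ledger))  # False
```

In a real blockchain, many independent parties hold copies of the ledger and run this kind of check, which is what makes quietly rewriting a record impractical.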

“Healthcare is very data rich, but control of that data often does not lie with the patient,” said Julius Bogdan, vice president of analytics for North America at the Healthcare Information and Management Systems Society (HIMSS), a Chicago-based global technology nonprofit. “NFTs allow for the encapsulation of a patient’s data in a digital asset controlled by the patient.” He added that this technology enables a more secure and informed method of participating in clinical and research trials.

Without this technology, de-identification of patients’ samples during biomedical research had the unintended consequence of preventing them from discovering what researchers find -- even if that data could benefit their health. A solution was urgently needed, said Marielle Gross, assistant professor of obstetrics, gynecology and reproductive science and bioethics at the University of Pittsburgh School of Medicine.

“A researcher can learn something from your bio samples or medical records that could be life-saving information for you, and they have no way to let you or your doctor know,” said Gross, who is also an affiliate assistant professor at the Berman Institute. “There’s no good reason for that to stay the way that it is.”

For instance, blockchain could be used to notify people if cancer researchers discover that they have certain risk factors. Gross estimated that less than half of breast cancer patients are tested for mutations in BRCA1 and BRCA2 — tumor suppressor genes that are important in combating cancer. With normal function, these genes help prevent breast, ovarian and other cells from proliferating in an uncontrolled manner. If researchers find mutations, it’s relevant for a patient’s and family’s follow-up care — and that’s a prime example of how this newly designed app could play a life-saving role, she said.

Liz Burton was one of the first patients at the University of Pittsburgh to opt for the app -- called de-bi, which is short for decentralized biobank -- before undergoing a mastectomy for early-stage breast cancer in November, after it was diagnosed on a routine mammogram. She often takes part in medical research and looks forward to tracking her tissues.

“Anytime there’s a scientific experiment or study, I’m quick to participate -- to advance my own wellness as well as knowledge in general,” said Burton, 49, a life insurance service representative who lives in Carnegie, Pa. “It’s my way of contributing.”

The pilot program raises the issue of what investigators may owe study participants, especially since certain populations, such as Black and indigenous peoples, historically were not treated in an ethical manner for scientific purposes. “It’s a truly laudable effort,” Tamar Schiff, a postdoctoral fellow in medical ethics at New York University’s Grossman School of Medicine, said of the endeavor. “Research participants are beautifully altruistic.”

Lauren Sankary, a bioethicist and associate director of the neuroethics program at Cleveland Clinic, agrees that the pilot program provides increased transparency for study participants regarding how scientists use their tissues while acknowledging individuals’ contributions to research.

However, she added, “it may require researchers to develop a process for ongoing communication to be responsive to additional input from research participants.”

Peter H. Schwartz, professor of medicine and director of Indiana University’s Center for Bioethics in Indianapolis, said the program is promising, but he wonders what will happen if a patient has concerns about a particular research project involving their tissues.

“I can imagine a situation where a patient objects to their sample being used for some disease they’ve never heard about, or which carries some kind of stigma like a mental illness,” Schwartz said, noting that researchers would have to evaluate how to react. “There’s no simple answer to those questions, but the technology has to be assessed with an eye to the problems it could raise.”

As a result, researchers may need to factor in how much information to share with patients and how to explain it, Schiff said. There are also concerns that in tracking their samples, patients could tell others what they learned before researchers are ready to publicly release this information. However, Bogdan, the vice president of the HIMSS nonprofit, believes only a minimal study identifier would be stored in an NFT, not patient data, research results or any type of proprietary trial information.

Some patients may be confused by blockchain and reluctant to embrace it. “The complexity of NFTs may prevent the average citizen from capitalizing on their potential or vendors willing to participate in the blockchain network,” Bogdan said. “Blockchain technology is also quite costly in terms of computational power and energy consumption, contributing to greenhouse gas emissions and climate change.”

In addition, this nascent, groundbreaking technology is immature and vulnerable to data security flaws, disputes over intellectual property rights and privacy issues, though it does offer baseline protections to maintain confidentiality. To truly make a difference, blockchain must enable broad consent from patients, not just de-identification, said Robyn Shapiro, a bioethicist and founding attorney at Health Sciences Law Group near Milwaukee.

The Henrietta Lacks story is a prime example, Shapiro noted. During her treatment for cervical cancer at Johns Hopkins, Lacks’s tissue was de-identified (albeit not entirely, because her cell line, HeLa, bore her initials). After her death, those cells were replicated and distributed for important and lucrative research and product development purposes without her knowledge or consent.

Nonetheless, Shapiro thinks that the initiative by the University of Pittsburgh and Johns Hopkins has potential to solve some ethical challenges involved in research use of biospecimens. “Compared to the system that allowed Lacks’s cells to be used without her permission,” Shapiro said, “blockchain technology using nonfungible tokens that allow patients to follow their samples may enhance transparency, accountability and respect for persons who contribute their tissue and clinical data for research.”
