How a Deadly Fire Gave Birth to Modern Medicine

The Cocoanut Grove fire in Boston in 1942 tragically claimed 490 lives, but was the catalyst for several important medical advances.

On the evening of November 28, 1942, more than 1,000 revelers from the Boston College-Holy Cross football game jammed into the Cocoanut Grove, Boston's oldest nightclub. When a spark from faulty wiring accidentally ignited an artificial palm tree, the packed nightspot, which was designed to accommodate only about 500 people, was quickly engulfed in flames. In the ensuing panic, hundreds of people were trapped inside, with most exit doors locked. Bodies piled up by the only open entrance, jamming the exits, and 490 people ultimately died in the worst fire in the country in forty years.

"People couldn't get out," says Dr. Kenneth Marshall, a retired plastic surgeon in Boston and president of the Cocoanut Grove Memorial Committee. "It was a tragedy of mammoth proportions."

Within half an hour of the start of the blaze, the Red Cross mobilized more than five hundred volunteers in what one newspaper called a "Rehearsal for Possible Blitz." The mayor of Boston imposed martial law. More than 300 victims, many of whom subsequently died, were taken to Boston City Hospital in one hour, averaging one victim every eleven seconds, while Massachusetts General Hospital admitted 114 victims in two hours. In the hospitals, 220 victims clung precariously to life, in agonizing pain from massive burns, their bodies ravaged by infection.

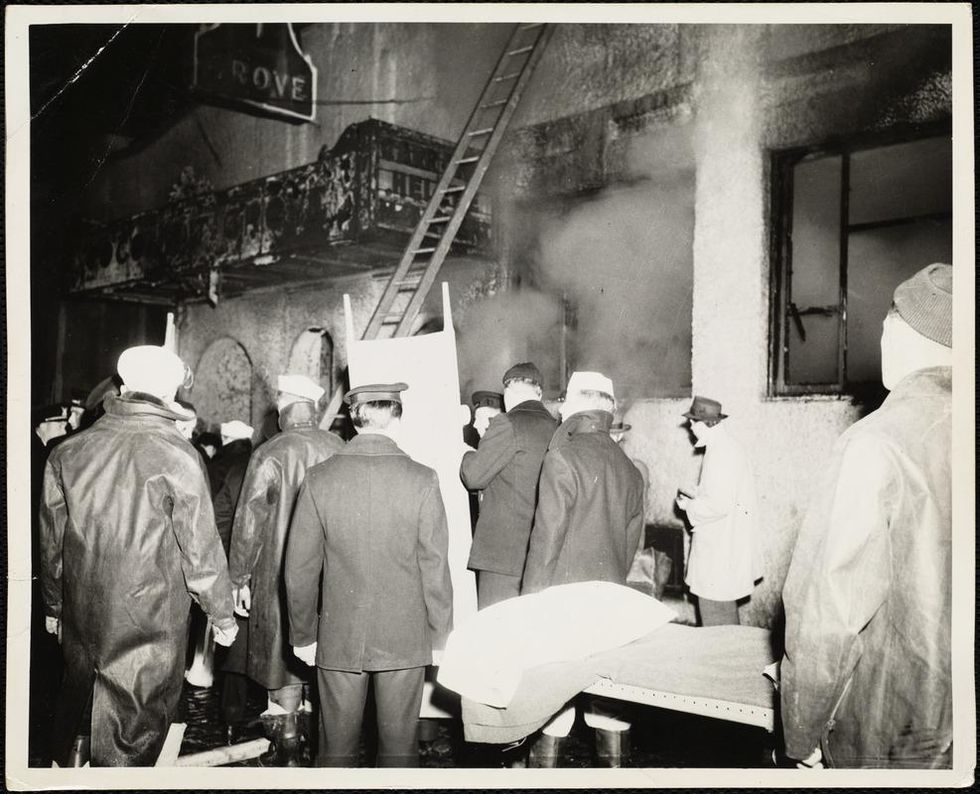

The scene of the fire.

Boston Public Library

Tragic Losses Prompted Revolutionary Leaps

But there is a silver lining: this horrific disaster prompted dramatic changes in safety regulations to prevent another catastrophe of this magnitude and led to the development of medical techniques that eventually saved millions of lives. It transformed burn care treatment and the use of plasma on burn victims, but most importantly, it introduced to the public a new wonder drug that revolutionized medicine, midwifed the birth of the modern pharmaceutical industry, and nearly doubled life expectancy, from 48 years at the turn of the 20th century to 78 years in the post-World War II years.

The devastating grief of the survivors also led to the first published study of post-traumatic stress disorder by pioneering psychiatrist Alexandra Adler, daughter of famed Viennese psychoanalyst Alfred Adler, who was a student of Freud. Dr. Adler studied the anxiety and depression that followed this catastrophe, according to the New York Times, and "later applied her findings to the treatment of World War II veterans."

Dr. Ken Marshall is intimately familiar with the lingering psychological trauma of enduring such a disaster. His mother, an Irish immigrant and a nurse in the surgical wards at Boston City Hospital, was on duty that cold Thanksgiving weekend night, and didn't come home for four days. "For years afterward, she'd wake up screaming in the middle of the night," recalls Dr. Marshall, who was four years old at the time. "Seeing all those bodies lined up in neat rows across the City Hospital's parking lot, still in their evening clothes. It was always on her mind and memories of the horrors plagued her for the rest of her life."

The sheer magnitude of casualties prompted overwhelmed physicians to try experimental new procedures that were later successfully used to treat thousands of battlefield casualties. Instead of cutting off blisters and using dyes and tannic acid to treat burned tissues, which can harden the skin, they applied gauze coated with petroleum jelly. Doctors also refined the formula for using plasma (the fluid portion of blood, and a medical technology that was just four years old) to replenish bodily liquids that evaporated because of the loss of the protective covering of skin.

"The initial insult with burns is a loss of fluids and patients can die of shock," says Dr. Ken Marshall. "The scientific progress that was made by the two institutions revolutionized fluid management and topical management of burn care forever."

Still, they could not halt the staph infections that kill most burn victims—which prompted the first civilian use of a miracle elixir that was being secretly developed in government-sponsored labs and that ultimately ushered in a new age in therapeutics. Military officials quickly realized this disaster could provide an excellent natural laboratory to test the effectiveness of this drug and see if it could be used to treat the acute traumas of combat in this unfortunate civilian approximation of battlefield conditions. At the time, the very existence of this wondrous medicine—penicillin—was a closely guarded military secret.

From Forgotten Lab Experiment to Wonder Drug

In 1928, Alexander Fleming discovered the curative powers of penicillin, which promised to eradicate infectious pathogens that killed millions every year. But the road to mass-producing enough of the highly unstable mold was littered with seemingly insurmountable obstacles, and it remained a forgotten laboratory curiosity for over a decade. Fleming never gave up, however, and penicillin's eventual rescue from obscurity was a landmark in scientific history.

In 1940, a group at Oxford University, funded in part by the Rockefeller Foundation, isolated enough penicillin to test it on twenty-five mice, which had been infected with lethal doses of streptococci. Its therapeutic effects were miraculous—the untreated mice died within hours, while the treated ones played merrily in their cages, undisturbed. Subsequent tests on a handful of patients, who were brought back from the brink of death, confirmed that penicillin was indeed a wonder drug. But Britain was then being ravaged by the German Luftwaffe during the Blitz, and there were simply no resources to devote to penicillin during the Nazi onslaught.

In June of 1941, two of the Oxford researchers, Howard Florey and Ernst Chain, embarked on a clandestine mission to enlist American aid. Samples of the temperamental mold were stored in their coats. By October, the Roosevelt Administration had recruited four companies—Merck, Squibb, Pfizer and Lederle—to team up in a massive, top-secret development program. Merck, which had more experience with fermentation procedures, swiftly pulled away from the pack and every milligram they produced was zealously hoarded.

After the nightclub fire, the government ordered Merck to dispatch to Boston whatever supplies of penicillin it could spare and to refine any crude penicillin broth brewing in its fermentation vats. After three days of round-the-clock relay work, on the evening of December 1, 1942, a refrigerated truck containing thirty-two liters of injectable penicillin left Merck's Rahway, New Jersey plant. It was accompanied by a convoy of police escorts through four states before arriving in the pre-dawn hours at Massachusetts General Hospital. Dozens of people were rescued from near-certain death in the first public demonstration of the powers of the antibiotic, and the existence of penicillin could no longer be kept secret from inquisitive reporters and an exultant public. The next day, the Boston Globe called it "priceless" and Time magazine dubbed it a "wonder drug."

Within fourteen months, penicillin production escalated exponentially, churning out enough to save the lives of thousands of soldiers, including many from the Normandy invasion. And in October 1945, just weeks after the Japanese surrender ended World War II, Alexander Fleming, Howard Florey and Ernst Chain were awarded the Nobel Prize in medicine. But penicillin didn't just save lives—it helped build some of the most innovative medical and scientific companies in history, including Merck, Pfizer, Glaxo and Sandoz.

"Every war has given us a new medical advance," concludes Marshall. "And penicillin was the great scientific advance of World War II."

Black Participants Are Sorely Absent from Medical Research

Black participants are under-represented in clinical research.

After years of suffering from mysterious symptoms, my mother Janice Thomas finally found a doctor who correctly diagnosed her with two autoimmune diseases, Lupus and Sjogren's. Both diseases are more prevalent in the black population than in other races and are often misdiagnosed.

As with many chronic health conditions, a lack of understanding persists about their causes, individual manifestations, and best treatment strategies.

In search of relief from chronic pain, my mother started researching options and decided to participate in clinical trials as a way to gain much-needed insights. In return, she received discounted medical testing and has played an active role in finding answers for all.

"When my doctor told me I could get financial or medical benefits from participating in clinical trials for the same test I was already doing, I figured it would be an easy way to get some answers at little to no cost," she says.

As a person of color, her presence in clinical studies is rare. The National Institutes of Health has found that minorities make up less than 10 percent of trial participants.

Without trial participation that is reflective of the general population, pharmaceutical companies and medical professionals are left guessing how various drugs work across racial lines. For example, albuterol, a widely used asthma treatment, was found to have decreased effectiveness for black American and Puerto Rican children. Many high mortality conditions, like cancer, also show different outcomes based on race.

Over the last decade, the pervasive lack of representation has left communities of color demanding higher levels of involvement in the research process. However, no consensus yet exists on how best to achieve this.

But experts suggest that before we can improve black participation in medical studies, key misconceptions must be addressed, such as the false assumption that such patients are unwilling to participate because they distrust scientists.

Jill A. Fisher, a professor in the Center for Bioethics at the University of North Carolina at Chapel Hill, learned in one study that mistrust wasn't the main barrier for black Americans. "There is a lot of evidence that researchers' recruitment of black Americans is generally poorly done, with many black patients simply not asked," Fisher says. "Moreover, the underrepresentation of black Americans is primarily true for efficacy trials - those testing whether an investigational drug might therapeutically benefit patients with specific illnesses."

Dr. Joyce Balls-Berry, a psychiatric epidemiologist and health educator, agrees that black Americans are often overlooked in the process. One study she conducted found that "enrollment of minorities in clinical trials meant using a variety of culturally appropriate strategies to engage participants," she explained.

To overcome this hurdle, The National Black Church Initiative (NBCI), a faith-based organization made up of 34,000 churches and over 15.7 million African Americans, last year urged the Food and Drug Administration to mandate diversity in all clinical trials before approving a drug or device. However, the FDA declined to implement the mandate, declaring that they don't have the authority to regulate diversity in clinical trials.

"African Americans have not been successfully incorporated into the advancement of medicine and research technologies as legitimate and natural born citizens of this country," admonishes NBCI's president Rev. Anthony Evans.

His words conjure a reminder of the medical system's insidious history for people of color. The most infamous incident is the Tuskegee syphilis scandal, in which white government doctors perpetrated harmful experiments on hundreds of unsuspecting black men for forty years, until the research was shut down in the early 1970s.

Today, in the second decade of the twenty-first century, the pernicious narrative that blacks are outsiders in science and medicine must be challenged, says Dr. Danielle N. Lee, assistant professor of biological sciences at Southern Illinois University. And having majority white participants in clinical trials only furthers the notion that "whiteness" is the default.

According to Lee, black individuals often see themselves disconnected from scientific and medical processes. "One of the critiques with science and medical research is that communities of color, and black communities in particular, regard ourselves as outsiders of science," Lee says. "We are othered."

Without increased minority participation, research will not accurately reflect the diversity of the general population.

"We are all human, but we are different, and yes, even different populations of people require modified medical responses," she points out.

Another obstacle is that many trials have health requirements that exclude black Americans, such as barring individuals who have high blood pressure or a history of stroke. Because this group faces these health disparities at a higher rate than whites, such requirements eliminate eligibility for millions of potential participants.

One way to increase the diversity in sample participation without an FDA mandate is to include more black Americans in both volunteer and clinical roles during the research process to increase accountability in treatment, education, and advocacy.

"When more of us participate in clinical trials, we help build out the basic data sets that account for health disparities from the start, not after the fact," Lee says. She also suggests that researchers involve black patient representatives throughout the clinical trial process, from the study design to the interpretation of results.

"This allows for the black community to give insight on how to increase trial enrollment and help reduce stigma," she explains.

Thankfully, partnerships are emerging, like the one between Howard University's Cancer Center and Driver, a platform that connects cancer patients to treatment and trials. These sorts of targeted and culturally tailored efforts allow black patients to receive assistance from well-respected organizations.

However, some experts say that the black community should not be held solely responsible for solving a problem it did not cause. Instead, some observers suggest that the federal government and pharmaceutical industries must step up to address the gap.

According to Balls-Berry, socioeconomic barriers like transportation, time off work, and childcare related to trial participation must be removed. "These are real-world issues and yet many times researchers have not included these things in their budgets."

When asked to comment, a spokesperson for BIO, the world's largest biotech trade association, emailed the following statement:

"BIO believes that our members' products and services should address the needs of a diverse population, and enhancing participation in clinical trials by a diverse patient population is a priority for BIO and our member companies. By investing in patient education to improve awareness of clinical trial opportunities, we can reduce disparities in clinical research to better reflect the country's changing demographics."

For my mother, the patient suffering from autoimmune disease, the need for broad participation in medical research is clear. "Without clinical trials, we would have less diagnosis and solutions to diseases," she says. "I think it's an underutilized resource."

Why You Can’t Blame Your Behavior On Your Gut Microbiome

People eating pizza; are they being influenced by their gut microbiome?

See a hot pizza sitting on a table. Count the missing pieces: three. They tasted delicious and yes, you've eaten enough—but you're still eyeing a fourth piece. Do you reach out and take it, or not?

Your behavior in that next moment is anything but simple: as far as scientists can tell, it comes down to a complex confluence of circumstances, genes, and personality characteristics. And the latest proposed addition to this list is the gut microbiome: the community of microorganisms (including bacteria, archaea, fungi, and viruses) that are full-time residents of your digestive tract.

It is entirely plausible that your gut microbiome might influence your behavior, scientists say: a well-known communication channel, called the gut-brain axis, runs both ways between your brain and your digestive tract. Gut bugs, which are close to the action, could amplify or dampen the messages, thereby shaping how you act. Messages about food-related behaviors could be particularly susceptible to interception by these microorganisms.

Perhaps it's convenient to imagine your resident microbes sitting greedily in your gut, crying for more pizza and tricking your brain into getting them what they want. The problem is, there's a distinct lack of scientific support for this actually happening in humans.

John Bienenstock, professor of pathology and molecular medicine at McMaster University (Canada), has worked on the gut microbiome-behavior connection for several decades. "There's a lot of evidence now in animals—particularly in mice," he says.

Indeed, his group and others have shown that, by eliminating or altering gut bugs, they can make mice exhibit different social behaviors or respond more coolly to stress; they can even make a shy mouse turn brave. But Bienenstock cautions: "The difficulty comes in translating the animal data into the human situation."

Not that it's an easy task to figure out which aspects of animal research are relevant to people in everyday life. Animal behaviors are worlds apart from what we do on a daily basis—from brushing our teeth to navigating complex social situations.

Elaine Hsiao, assistant professor of integrative biology and physiology at UCLA, has also looked closely at the microbiome-gut-brain axis in mice and pondered how to translate the results into humans. She says, "Both the microbiome and behavior vary substantially [from person to person] and can be strongly influenced by environmental factors—which makes it difficult to run a well-controlled study on effects of the microbiome on human behavior."

She adds, "Human behaviors are very complex and the metrics used to quantify behavior are often not precise enough to derive clear interpretations." So the challenge is not only to figure out what people actually do, but also to give those actions numerical codes that allow them to be compared against other actions.

Hsiao and colleagues are nevertheless attempting to make connections: building on some animal research, their recent study found a three-way association in humans between molecules produced by their gut bacteria (that is, indole metabolites), the connectedness of different brain regions as measured through functional magnetic resonance imaging, and measures of behavior: questionnaires assessing food addiction and anxiety.

Meanwhile, other studies have found it may be possible to change a person's behavior through either probiotics or gut-localized antibiotics. Several probiotics even show promise for altering behavior in clinical conditions like depression. Yet how these phenomena occur is still unknown and, overall, scientists lack solid evidence on how bugs control behavior.

Bienenstock, however, is one of many continuing to investigate. He says, "Some of these observations are very striking. They're so striking that clearly something's up."

He says that after identifying a behavior-changing bug, or set of bugs, in mice: "The obvious next thing is: How [is it] occurring? Why is it occurring? What are the molecules involved?" Bienenstock favors the approach of nailing down a mechanism in animal models before starting to investigate its relevance to humans.

He explains, "[This preclinical work] should allow us to identify either target molecules or target pathways, which then can be translated."

Bienenstock also acknowledges the 'hype' that appears to surround this particular field of study. Despite the decidedly slow emergence of data linking the microbiome to human behavior, scientific reviews have appeared with remarkable frequency in brain-related scientific journals (for instance, Trends in Cognitive Sciences and CNS Drugs). Not only this, but popular books and media articles have given the idea wings.

It might be compelling to blame our microbiomes for behaviors we don't prefer or can't explain—like reaching for another slice of pizza. But until the scientific observations yield stronger results, we still lack proof that we're doing what we do—or eating what we eat—exclusively at the behest of our resident microorganisms.