Pregnant & Breastfeeding Women Who Get the COVID-19 Vaccine Are Protecting Their Infants, Research Suggests

Becky Cummings, who got vaccinated in December, snuggles her newborn, Clark, while he takes a nap.

Becky Cummings had multiple reasons to get vaccinated against COVID-19 while tending to her firstborn, Clark, who arrived in September 2020 at 27 weeks.

The 29-year-old intensive care unit nurse in Greensboro, North Carolina, had witnessed the devastation day in and day out as the virus took its toll on the young and old. But when she was offered the vaccine, she hesitated, skeptical of its rapid emergency use authorization.

Exclusion of pregnant and lactating mothers from clinical trials fueled her concerns. Ultimately, though, she concluded the benefits of vaccination outweighed the risks of contracting the potentially deadly virus.

"Long story short," Cummings says, in December "I got vaccinated to protect myself, my family, my patients, and the general public."

At the time, Cummings remained on the fence about breastfeeding, citing a lack of evidence to support its safety after vaccination, so she pumped and stashed breast milk in the freezer. Her son is adjusting to life as a preemie, requiring mother's milk to be thickened with formula, but she's becoming comfortable with the idea of breastfeeding as more research suggests it's safe.

"If I could pop him on the boob," she says, "I would do it in a heartbeat."

Now, a study recently published in the Journal of the American Medical Association found "robust secretion" of specific antibodies in the breast milk of mothers who received a COVID-19 vaccine, indicating a potentially protective effect against infection in their infants.

The presence of antibodies in the breast milk, detectable as early as two weeks after vaccination, lasted for six weeks after the second dose of the Pfizer-BioNTech vaccine.

"We believe antibody secretion into breast milk will persist for much longer than six weeks, but we first wanted to prove any secretion at all after vaccination," says Ilan Youngster, the study's corresponding author and head of pediatric infectious diseases at Shamir Medical Center in Zerifin, Israel.

That's why the research team performed a preliminary analysis at six weeks. "We are still collecting samples from participants and hope to soon be able to comment about the duration of secretion."

With other respiratory illnesses, such as influenza and pertussis, secretion of antibodies in breast milk confers protection from infection in infants. The researchers expect a similar immune response from the COVID-19 vaccine, and they expect the findings to spur an increase in vaccine acceptance among pregnant and lactating women.

A COVID-19 outbreak struck three families the research team followed in the study, resulting in at least one non-breastfed sibling developing symptomatic infection; however, none of the breastfed babies became ill. "This is obviously not empirical proof," Youngster acknowledges, "but still a nice anecdote."

Leaps.org inquired whether infants who derive antibodies only through breast milk are likely to have a lower immunity than infants whose mothers were vaccinated while they were in utero. In other words, is maternal transmission of antibodies stronger during pregnancy than during breastfeeding, or about the same?

"This is a different kind of transmission," Youngster explains. "When a woman is infected or vaccinated during pregnancy, some antibodies will be transferred through the placenta to the baby's bloodstream and be present for several months." But in the nursing mother, that protection occurs through local action. "We always recommend breastfeeding whenever possible, and, in this case, it might have added benefits."

A study published online in March found COVID-19 vaccination provided pregnant and lactating women with robust immune responses comparable to those experienced by their nonpregnant counterparts. The study, appearing in the American Journal of Obstetrics and Gynecology, documented the presence of vaccine-generated antibodies in umbilical cord blood and breast milk after mothers had been vaccinated.

Natali Aziz, a maternal-fetal medicine specialist at Stanford University School of Medicine, notes that it's too early to draw firm conclusions about the reduction in COVID-19 infection rates among newborns of vaccinated mothers. Citing the two aforementioned research studies, she says it's biologically plausible that antibodies passed through the placenta and breast milk impart protective benefits. While thousands of pregnant and lactating women have been vaccinated against COVID-19 without incurring adverse outcomes, many are still wondering whether it's safe to breastfeed afterward.

It's important to bear in mind that pregnant women may develop more severe COVID-19 complications, which could lead to intubation or admission to the intensive care unit. "We, in our practice, are supporting pregnant and breastfeeding patients to be vaccinated," says Aziz, who is also director of perinatal infectious diseases at Stanford Children's Health, which has been vaccinating new mothers and other hospitalized patients at discharge since late April.

Earlier in April, Huntington Hospital in Long Island, New York, began offering the COVID-19 vaccine to women after they gave birth. The hospital chose the one-shot Johnson & Johnson vaccine for postpartum patients, so they wouldn't need to return for a second shot while acclimating to life with a newborn, says Mitchell Kramer, chairman of obstetrics and gynecology.

The hospital suspended the program when the Food and Drug Administration and the Centers for Disease Control and Prevention paused use of the J&J vaccine starting April 13, while investigating several reports of dangerous blood clots and low platelet counts among more than 7 million people in the United States who had received that vaccine.

In lifting the pause April 23, the agencies announced the vaccine's fact sheets will bear a warning of the heightened risk for a rare but serious blood clot disorder among women under age 50. As a result, Kramer says, "we will likely not be using the J&J vaccine for our postpartum population."

So, would it make sense to vaccinate infants, not just their mothers, once a vaccine for them eventually becomes available? "In general, most of the time, infants do not have as good of an immune response to vaccines," says Jonathan Temte, associate dean for public health and community engagement at the University of Wisconsin School of Medicine and Public Health in Madison.

"Many of our vaccines are held until children are six months of age. For example, the influenza vaccine starts at age six months, the measles vaccine typically starts one year of age, as do rubella and mumps. Immune response is typically not very good for viral illnesses in young infants under the age of six months."

So far, the FDA has granted emergency use authorization of the Pfizer-BioNTech vaccine for children as young as 16 years old. The agency is considering data from Pfizer to lower that age limit to 12. Studies are also underway in children under age 12. Meanwhile, data from Moderna on 12- to 17-year-olds and from Pfizer on 12- to 15-year-olds have not been made public. (Pfizer announced at the end of March that its vaccine is 100 percent effective in preventing COVID-19 in the latter age group, and FDA authorization for this population is expected soon.)

"There will be step-wise progression to younger children, with infants and toddlers being the last ones tested," says James Campbell, a pediatric infectious diseases physician and head of maternal and child clinical studies at the University of Maryland School of Medicine Center for Vaccine Development.

"Once the data are analyzed for safety, tolerability, optimal dose and regimen, and immune responses," he adds, "they could be authorized and recommended and made available to American children." The data on younger children are not expected until the end of this year, with regulatory authorization possible in early 2022.

For now, Vonnie Cesar, a family nurse practitioner in Smyrna, Georgia, is aiming to persuade expectant and new mothers to get vaccinated. She has observed that patients in metro Atlanta seem more inclined than their rural counterparts.

To quell some of their skepticism and fears, Cesar, who also teaches nursing students, conceived a visual way to demonstrate the novel mechanism behind the COVID-19 vaccine technology. Holding a palm-size physical therapy ball outfitted with clear-colored push pins, she simulates the spiked protein of the coronavirus. Slime slathered at the gaps permeates areas around the spikes—a process similar to how our antibodies build immunity to the virus.

These conversations often lead hesitant patients to discuss vaccination with their husbands or partners. "The majority of people I'm speaking with," she says, "are coming to the conclusion that this is the right thing for me, this is the common good, and they want to make sure that they're here for their children."

CORRECTION: An earlier version of this article mistakenly stated that the COVID-19 vaccines were granted emergency "approval." They have been granted emergency use authorization, not full FDA approval. We regret the error.

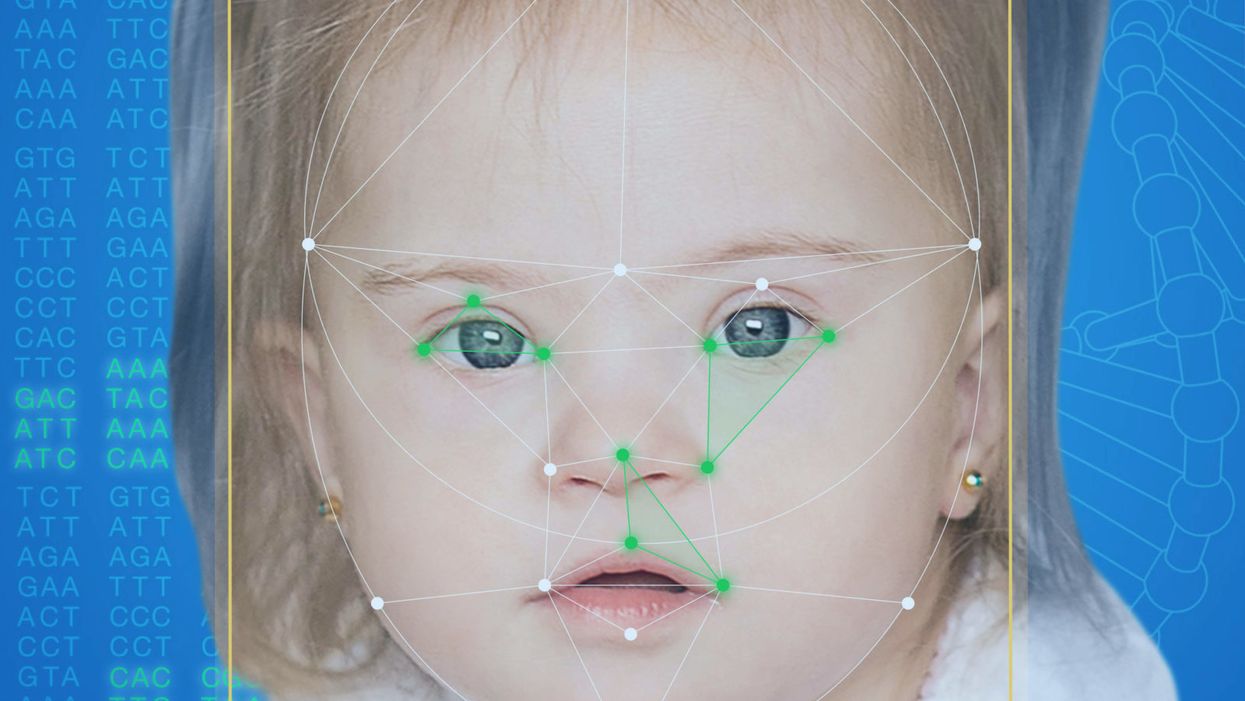

This App Helps Diagnose Rare Genetic Disorders from a Picture

FDNA's Face2Gene technology analyzes patient biometric data using artificial intelligence, identifying correlations with disease-causing genetic variations.

Medical geneticist Omar Abdul-Rahman had a hunch. He thought that the three-year-old boy with deep-set eyes, a rounded nose, and uplifted earlobes might have Mowat-Wilson syndrome, but he'd never seen a patient with the rare disorder before.

Rahman had already ordered genetic tests for three different conditions without any luck, and he didn't want to cost the family any more money—or hope—if he wasn't sure of the diagnosis. So he took a picture of the boy and uploaded the photo to Face2Gene, a diagnostic aid for rare genetic disorders. Sure enough, Mowat-Wilson came up as a potential match. The family agreed to one final genetic test, which was positive for the syndrome.

"If it weren't for the app I'm not sure I would have had the confidence to say 'yes you should spend $1000 on this test,'" says Rahman, who is now the director of Genetic Medicine at the University of Nebraska Medical Center, but saw the boy when he was in the Department of Pediatrics at the University of Mississippi Medical Center in 2012.

"Families who are dealing with undiagnosed diseases never know what's going to come around the corner, what other organ system might be a problem next week," Rahman says. With a diagnosis, "You don't have to wait for the other shoe to drop because now you know the extent of the condition."

A diagnosis is the first and most important step for patients to attain medical care. Disease prognosis, treatment plans, and emotional coping all stem from this critical phase. But diagnosis can also be the trickiest part of the process, particularly for rare disorders. According to one European survey, 40 percent of rare diseases are initially misdiagnosed.

"Patients with rare diseases or genetic disorders go through a long period of diagnostic odyssey, and just putting a name to a syndrome or finding a diagnosis can be very helpful and relieve a lot of tension for the family," says Dekel Gelbman, CEO of FDNA.

Conversely, a misdiagnosis can be devastating for families. Money and time may have been wasted on fruitless treatments, while opportunities for potentially helpful therapies or clinical trials were missed. Parents led down the wrong path must change their expectations of their child's long-term prognosis and care. In addition, they may be misinformed regarding future decisions about family planning.

Healthcare professionals and medical technology companies hope that facial recognition software will help prevent families from facing these difficult disruptions by improving the accuracy and ease of diagnosing genetic disorders. Traditionally, doctors diagnose these types of conditions by identifying unique patterns of facial features, a practice called dysmorphology. Trained physicians can read a child's face like a map and detect any abnormal ridges or plateaus—wide-set eyes, broad forehead, flat nose, rotated ears—that, combined with other symptoms such as intellectual disability or abnormal height and weight, signify a specific genetic disorder.

These morphological changes can be subtle, though, and often only specialized medical geneticists are able to detect and interpret these facial clues. What's more, some genetic disorders are so rare that even a specialist may not have encountered them before, much less a general practitioner. Diagnosing rare conditions has improved thanks to genomic testing that can confirm (or refute) a doctor's suspicion. Yet with thousands of variants in each person's genome, identifying the culprit mutation or deletion can be extremely difficult if you don't know what you're looking for.

Facial recognition technology is trying to take some of the guesswork out of this process. Software such as the Face2Gene app uses machine learning to compare a picture of a patient against images of thousands of disorders and come back with suggestions of possible diagnoses.

"When we met a geneticist for the first time we were pretty blown away with the fact that they actually use their own human pattern recognition" to diagnose patients, says Gelbman. "This is a classic field for AI [artificial intelligence], for machine learning because no human being can really have enough knowledge and enough experience to be able to do this for thousands of different disorders."

When a physician uploads a photo to the app, they are given a list of different diagnostic suggestions, each with a heat map to indicate how similar the facial features are to a classic representation of the syndrome. The physician can hone the suggestions by adding in other symptoms or family history. Gelbman emphasized that the app is a "search and reference tool" and should not "be used to diagnose or treat medical conditions." It is not approved by the FDA as a diagnostic.

"As a tool, we've all been waiting for this, something that can help everyone," says Julian Martinez-Agosto, an associate professor in human genetics and pediatrics at UCLA. He sees the greatest benefit of facial recognition technology in its ability to empower non-specialists to make a diagnosis. Many areas, including rural communities or resource-poor countries, do not have access to either medical geneticists trained in these types of diagnostics or genomic screens. Apps like Face2Gene can help guide a general practitioner or flag diseases they might not be familiar with.

Maximilian Muenke, a senior investigator at the National Human Genome Research Institute (NHGRI), agrees that in many countries, facial recognition programs could be the only way for a doctor to make a diagnosis.

"There are only geneticists in countries like the U.S., Canada, Europe, Japan. In most countries, geneticists don't exist at all," Muenke says. "In Nigeria, the most populous country in all of Africa with 160 million people, there's not a single clinical geneticist. So in a country like that, facial recognition programs will be sought after and will be extremely useful to help make a diagnosis to the non-geneticists."

One concern about providing this type of technology to a global population is that most textbook images of genetic disorders come from the West, so the "classic" face of a condition is often a child of European descent. However, the defining facial features of some of these disorders manifest differently across ethnicities, leaving clinicians from other geographic regions at a disadvantage.

"Every syndrome is either more easy or more difficult to detect in people from different geographic backgrounds," explains Muenke. For example, "in some countries of Southeast Asia, the eyes are slanted upward, and that happens to be one of the findings that occurs mostly with children with Down Syndrome. So then it might be more difficult for some individuals to recognize Down Syndrome in children from Southeast Asia."

To combat this issue, Muenke helped develop the Atlas of Human Malformation Syndromes, a database that incorporates descriptions and pictures of patients from every continent. By providing examples of rare genetic disorders in children from outside of the United States and Europe, Muenke hopes to provide clinicians with a better understanding of what to look for in each condition, regardless of where they practice.

There is a risk that providing this type of diagnostic information online will lead to parents trying to classify their own children. Face2Gene is free to download in the app store, although users must be authenticated by the company as a healthcare professional before they can access the database. The NHGRI Atlas can be accessed by anyone through their website. However, Martinez and Muenke say parents already use Google and WebMD to look up their child's symptoms; facial recognition programs and databases are just an extension of that trend. In fact, Martinez says, "Empowering families is another way to facilitate access to care. Some families live in rural areas and have no access to geneticists. If they can use software to get a diagnosis and then contact someone at a large hospital, it can help facilitate the process."

Martinez also says the app could go further by providing greater transparency about how the program makes its assessments. Giving clinicians feedback about why a diagnosis fits certain facial features would offer a valuable teaching opportunity in addition to a diagnostic aid.

Both Martinez and Muenke think the technology is an innovation that could vastly benefit patients. "In the beginning, I was quite skeptical and I could not believe that a machine could replace a human," says Muenke. "However, I am a convert that it actually can help tremendously in making a diagnosis. I think there is a place for facial recognition programs, and I am a firm believer that this will spread over the next five years."

"A world where people are slotted according to their inborn ability – well, that is Gattaca. That is eugenics."

This was the assessment of Dr. Catherine Bliss, a sociologist who wrote a new book on social science genetics, when asked by MIT Technology Review about polygenic scores that can predict a person's intelligence or performance in school. Like a credit score, a polygenic score is a statistical tool that combines a lot of information about a person's genome into a single number. Fears about using polygenic scores for genetic discrimination are understandable, given this country's ugly history of using the science of heredity to justify atrocities like forcible sterilization. But polygenic scores are not the new eugenics. And rushing to discuss polygenic scores in dystopian terms only contributes to widespread public misunderstanding about genetics.

Let's begin with some background on how polygenic scores are developed. In a genome-wide association study, researchers conduct millions of statistical tests to identify small differences in people's DNA sequence that are correlated with differences in a target outcome (beyond what can be attributed to chance or ancestry differences). Successful studies of this sort require enormous sample sizes, but companies like 23andMe are now contributing genetic data from their consumers to research studies, and national biorepositories like U.K. Biobank have put genetic information from hundreds of thousands of people online. When applied to studying blood lipids or myopia, this kind of study strikes people as a straightforward and uncontroversial scientific tool. But it can also be conducted for cognitive and behavioral outcomes, like how many years of school a person has completed. When researchers have finished a genome-wide association study, they are left with a dataset with millions of rows (one for each genetic variant analyzed) and one column with the correlations between each variant and the outcome being studied.

The trick to polygenic scoring is to use these results and apply them to people who weren't participants in the original study. Measure the genes of a new person, weight each one of her millions of genetic variants by its correlation with educational attainment from a genome-wide association study, and then simply add everything up into a single number. Voila! -- you've created a polygenic score for educational attainment. On its face, the idea of "scoring" a person's genotype does immediately suggest Gattaca-type applications. Can we now start screening embryos for their "inborn ability," as Bliss called it? Can we start genotyping toddlers to identify the budding geniuses among them?

The short answer is no. Here are four reasons why dystopian projections about polygenic scores are out of touch with the current science:

First, a polygenic score currently predicts the life outcomes of an individual child with a great deal of uncertainty. The amount of uncertainty around polygenic predictions will decrease in the future, as genetic discovery samples get bigger and genetic studies include more of the variation in the genome, including rare variants that are particular to a few families. But for now, knowing a child's polygenic score predicts his ultimate educational attainment about as well as knowing his family's income, and slightly worse than knowing how far his mother went in school. These pieces of information are also readily available about children before they are born, but no one is writing breathless think-pieces about the dystopian outcomes that will result from knowing whether a pregnant woman graduated from college.

Second, using polygenic scoring for embryo selection requires parents to create embryos using reproductive technology, rather than conceiving them by having sex. The prediction that many women will endure medically-unnecessary IVF, in order to select the embryo with the highest polygenic score, glosses over the invasiveness, indignity, pain, and heartbreak that these hormonal and surgical procedures can entail.

Third, and counterintuitively, a polygenic score might be using DNA to measure aspects of the child's environment. Remember, a child inherits her DNA from her parents, who typically also shape the environment she grows up in. And, children's environments respond to their unique personalities and temperaments. One Icelandic study found that parents' polygenic scores predicted their children's educational attainment, even if the score was constructed using only the half of the parental genome that the child didn't inherit. For example, imagine mom has genetic variant X that makes her more likely to smoke during her pregnancy. Prenatal exposure to nicotine, in turn, affects the child's neurodevelopment, leading to behavior problems in school. The school responds to his behavioral problems with suspension, causing him to miss out on instructional content. A genome-wide association study will collapse this long and winding causal path into a simple correlation -- "genetic variant X is correlated with academic achievement." But, a child's polygenic score, which includes variant X, will partly reflect his likelihood of being exposed to adverse prenatal and school environments.

Finally, the phrase "DNA tests for IQ" makes for an attention-grabbing headline, but it's scientifically meaningless. As I've written previously, it makes sense to talk about a bacterial test for strep throat, because strep throat is a medical condition defined as having streptococcal bacteria growing in the back of your throat. If your strep test is positive, you have strep throat, no matter how serious your symptoms are. But a polygenic score is not a test "for" IQ, because intelligence is not defined at the level of someone's DNA. It doesn't matter how high your polygenic score is, if you can't reason abstractly or learn from experience. Equating your intelligence, a cognitive capacity that is tested behaviorally, with your polygenic score, a number that is a weighted sum of genetic variants discovered to be statistically associated with educational attainment in a hypothesis-free data mining exercise, is misleading about what intelligence is and is not.
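To make the "weighted sum" concrete, here is a minimal sketch in Python of the scoring step described earlier: multiply each variant a person carries by its weight from a genome-wide association study, then add everything up. The variant names, weights, and genotype are invented for illustration and do not come from any real study. A real score would draw on millions of variants, and the function name `polygenic_score` is likewise only an assumption for this example.

```python
from typing import Dict


def polygenic_score(genotype: Dict[str, int], weights: Dict[str, float]) -> float:
    """Return the weighted sum of a person's variant counts.

    genotype maps a variant name to how many copies (0, 1, or 2) the person
    carries; weights maps a variant name to its association with the outcome.
    Variants that were not measured in this person are skipped.
    """
    return sum(
        weight * genotype[variant]
        for variant, weight in weights.items()
        if variant in genotype
    )


# Hypothetical association results: one row (weight) per variant.
gwas_weights = {"variant_A": 0.02, "variant_B": -0.01, "variant_C": 0.03}

# Hypothetical new person who was not part of the original study.
person = {"variant_A": 2, "variant_B": 1, "variant_C": 0}

score = polygenic_score(person, gwas_weights)
print(f"polygenic score: {score:.3f}")
```

Nothing in this arithmetic measures reasoning or learning. The result is a single number summarizing statistical associations, which is why it cannot be read as a test "for" intelligence.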

So, if we're not going to build a Gattaca-style genetic hierarchy, what are polygenic scores good for? They are not useless. In fact, they give scientists a valuable new tool for studying how to improve children's lives. The task for many scientists like me, who are interested in understanding why some children do better in school than other children, is to disentangle correlations from causation. The best way to do that is to run an experiment where children are randomized to environments, but often a true experiment is unethical or impractical. You can't randomize children to be born to a teenage mother or to go to school with inexperienced teachers. By statistically controlling for some of the relevant genetic differences between people using a polygenic score, scientists are better able to identify potential environmental causes of differences in children's life outcomes. As we have seen with other methods from genetics, like twin studies, understanding genes illuminates the environment.

Research that examines genetics in relation to social inequality, such as differences in higher education outcomes, will obviously remind people of the horrors of the eugenics movement. Wariness regarding how genetic science will be applied is certainly warranted. But, polygenic scores are not pure measures of "inborn ability," and genome-wide association studies of human intelligence and educational attainment are not inevitably ushering in a new eugenics age.